Back at it

-

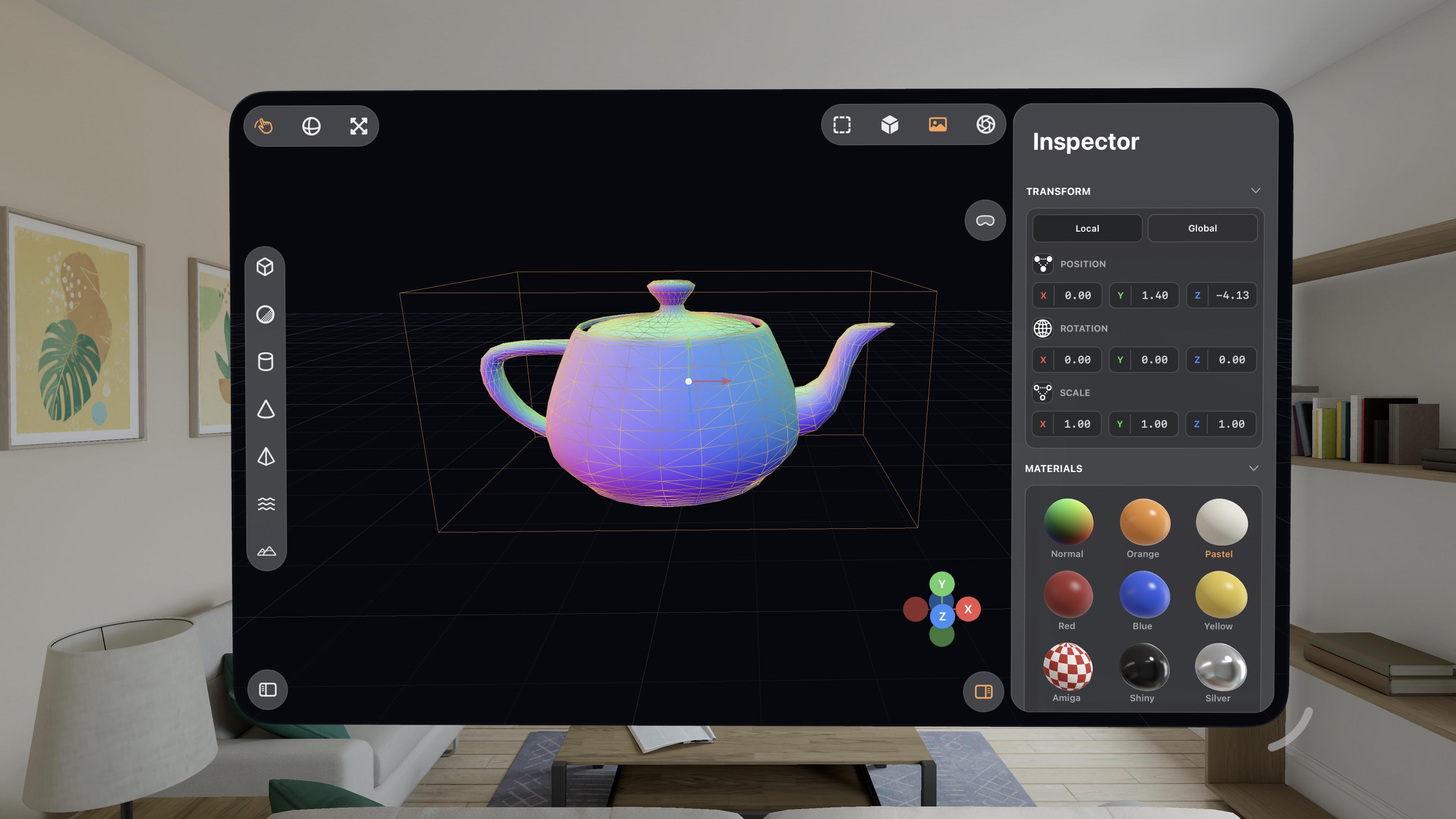

@gklka it's Metal, it's not RealityKit

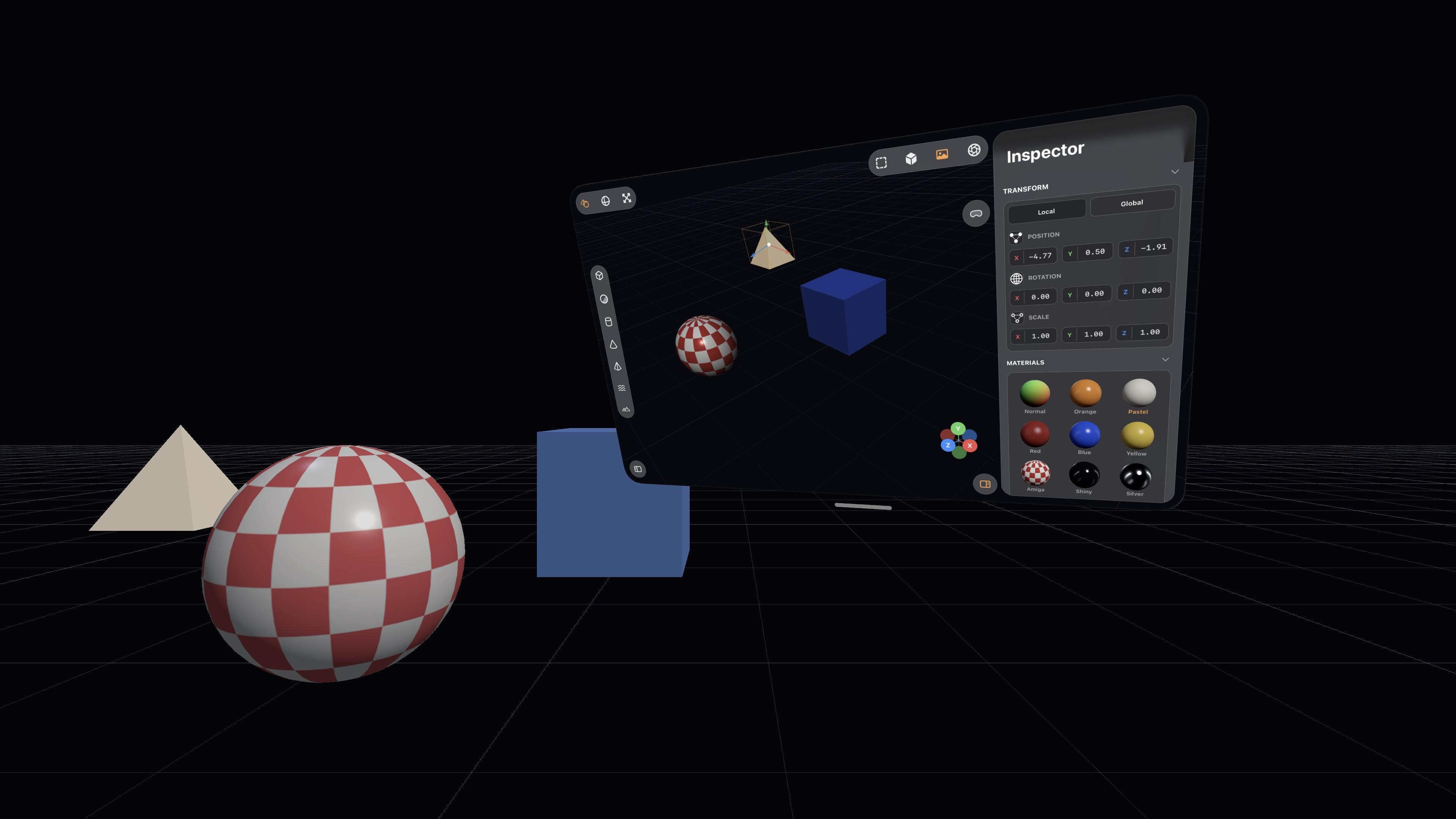

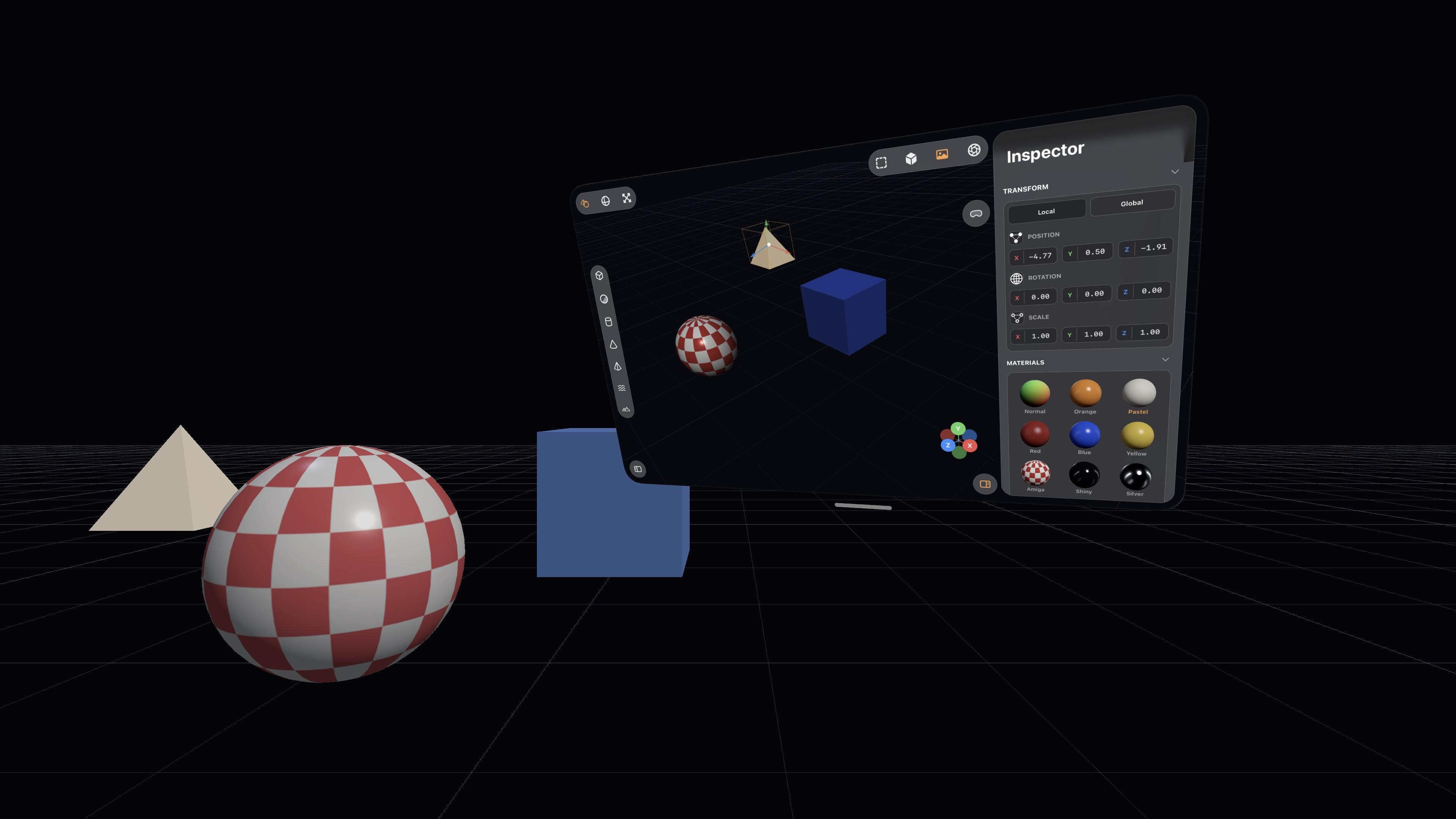

@stroughtonsmith Too bad. These 3D editors are a perfect fit for Vision

-

You can see here that the moment I invoke the raytracer, everything goes to shit on visionOS. The userspace went down right at the end of the video, where it cut

What if you could step into your Bryce scenes?

-

@tsturm @stroughtonsmith Yes, of course. We had radically different goals though. Producing what Steve has in a day, even if it’s just a throw-away bit of fun, remains striking to me.

Makes you wonder whose code the LLM learned from. Is there a full Metal project out there already? Does it have similar default menu elements? How much of it is novel here? How much more optimized would it be if hand-crafted? Is the difference negligible?

-

What if you could step into your Bryce scenes?

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable), it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

-

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable), it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

I love how visionOS, uniquely, *explodes* when rendering goes wrong.

Hello [MacBook] Neo.

-

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable), it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

@stroughtonsmith your results are amazing, but got me curious about Codex writing Metal, so I asked it to make a solar system simulation

After two hours of back-and-forth it still can't seem to texture a sphere in a way that doesn't cause the lighting and texture to rotate with the view

I gave it six goes at trying to get the bloom effect correct, but in the end had to paste an entire blog post into its context about how to render bloom in Metal
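
A note on the symptom described above: the thread does not say what the actual bug was, but one common cause of lighting that "rotates with the view" is mixing coordinate spaces, for example transforming the surface normal into view space while leaving the light direction in world space. The sketch below is only an illustration of that math in plain Python, not Metal code; `rotate_y`, `shade_consistent`, and `shade_mixed` are hypothetical names, and a Y-axis rotation stands in for the camera's view matrix.

```python
import math

def rotate_y(v, angle):
    """Rotate a 3-vector about the Y axis (stand-in for the camera's view rotation)."""
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x + s * z, y, -s * x + c * z)

def dot(a, b):
    return sum(p * q for p, q in zip(a, b))

def lambert(normal, light_dir):
    """Diffuse term max(N . L, 0) for unit-length vectors."""
    return max(0.0, dot(normal, light_dir))

# A surface facing +Z, lit head-on by a light along +Z (both in world space).
NORMAL_WORLD = (0.0, 0.0, 1.0)
LIGHT_WORLD = (0.0, 0.0, 1.0)

def shade_consistent(view_angle):
    # Both vectors are moved into view space, so the angle between them
    # is preserved and the result does not depend on the camera.
    n = rotate_y(NORMAL_WORLD, view_angle)
    l = rotate_y(LIGHT_WORLD, view_angle)
    return lambert(n, l)

def shade_mixed(view_angle):
    # Hypothetical bug: the normal is in view space but the light is still
    # in world space, so the shading changes as the camera turns.
    n = rotate_y(NORMAL_WORLD, view_angle)
    return lambert(n, LIGHT_WORLD)

if __name__ == "__main__":
    for degrees in (0, 45, 90):
        a = math.radians(degrees)
        print(degrees, round(shade_consistent(a), 3), round(shade_mixed(a), 3))
```

The same reasoning applies to texture lookups: if texture coordinates are derived from view-space positions or normals rather than object-space ones, the texture will appear to slide as the camera moves.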

-

@stroughtonsmith your results are amazing, but got me curious about Codex writing Metal, so I asked it to make a solar system simulation

After two hours of back-and-forth it still can't seem to texture a sphere in a way that doesn't cause the lighting and texture to rotate with the view

I gave it six goes at trying to get the bloom effect correct, but in the end had to paste an entire blog post into its context about how to render bloom in Metal

@simsaens I could suggest things, but if you want to take this offline, DM me your iMessage maybe

-

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable), it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

@stroughtonsmith watching you build these things over the past few weeks has been amazing. Do you think there is a chance you will create a blog post or have a thread with more details about how you are prompting the AI agents?

-

@stroughtonsmith watching you build these things over the past few weeks has been amazing. Do you think there is a chance you will create a blog post or have a thread with more details about how you are prompting the AI agents?

@jlaase I did blog about it, and it has one prompt session transcript: https://highcaffeinecontent.com/blog/20260301-A-Month-With-OpenAIs-Codex

-

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable), it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

@stroughtonsmith for my use, "vibe coding" is most helpful for edge cases where it would be a huge rabbit hole to implement a relatively small feature, so we're aligned there. Thank you for sharing your work online

-

I love how visionOS, uniquely, *explodes* when rendering goes wrong.

Hello [MacBook] Neo.

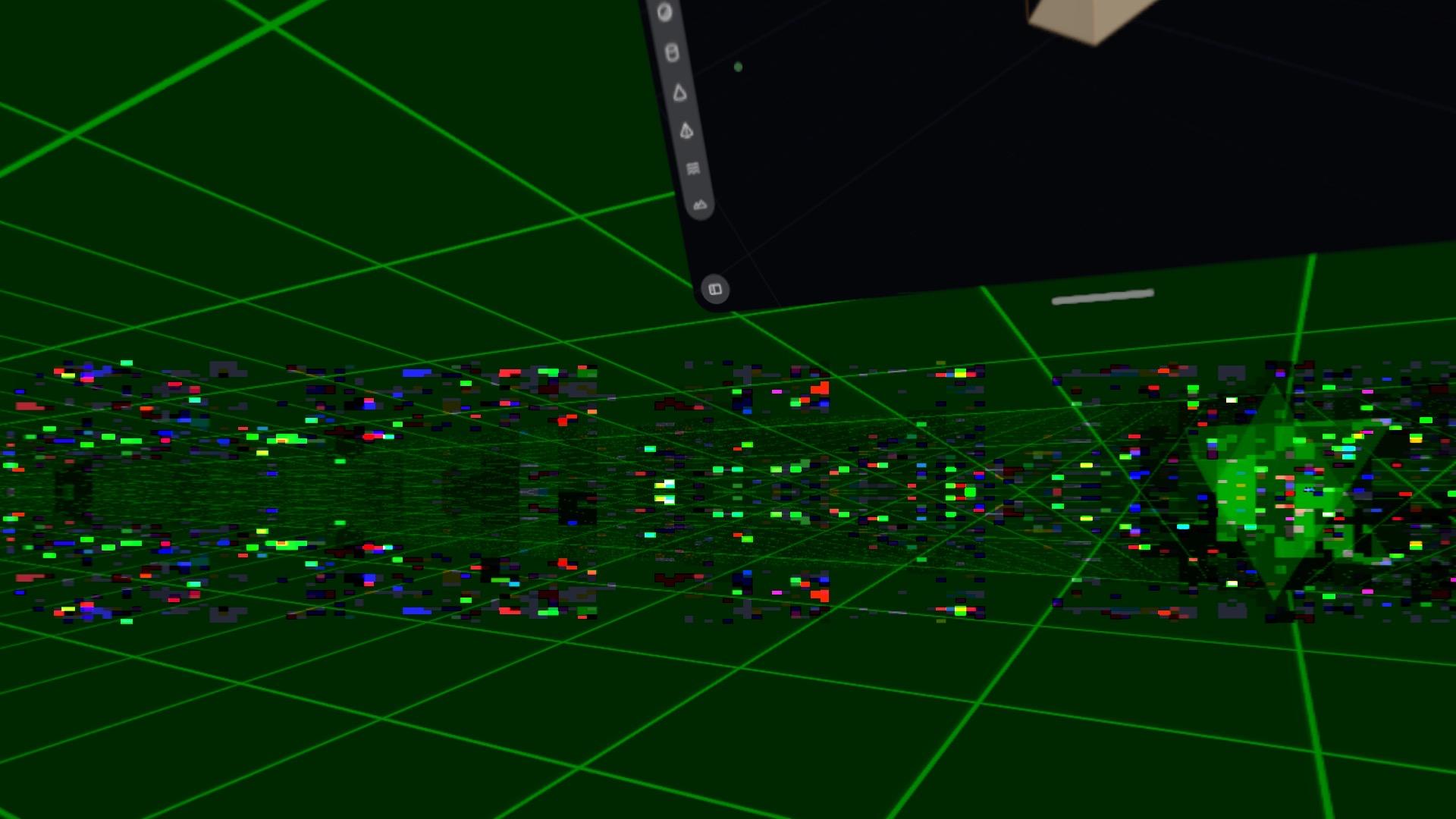

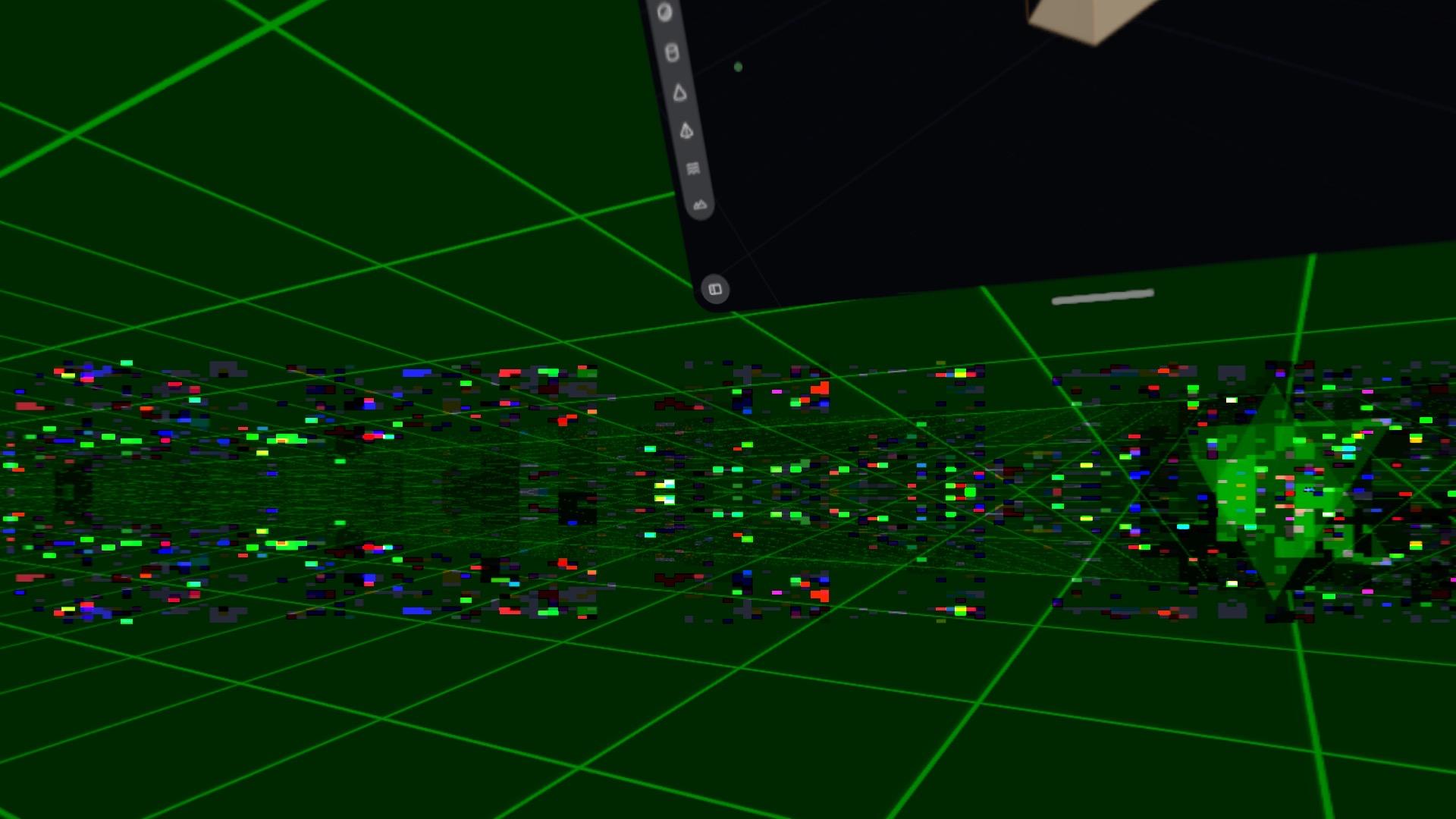

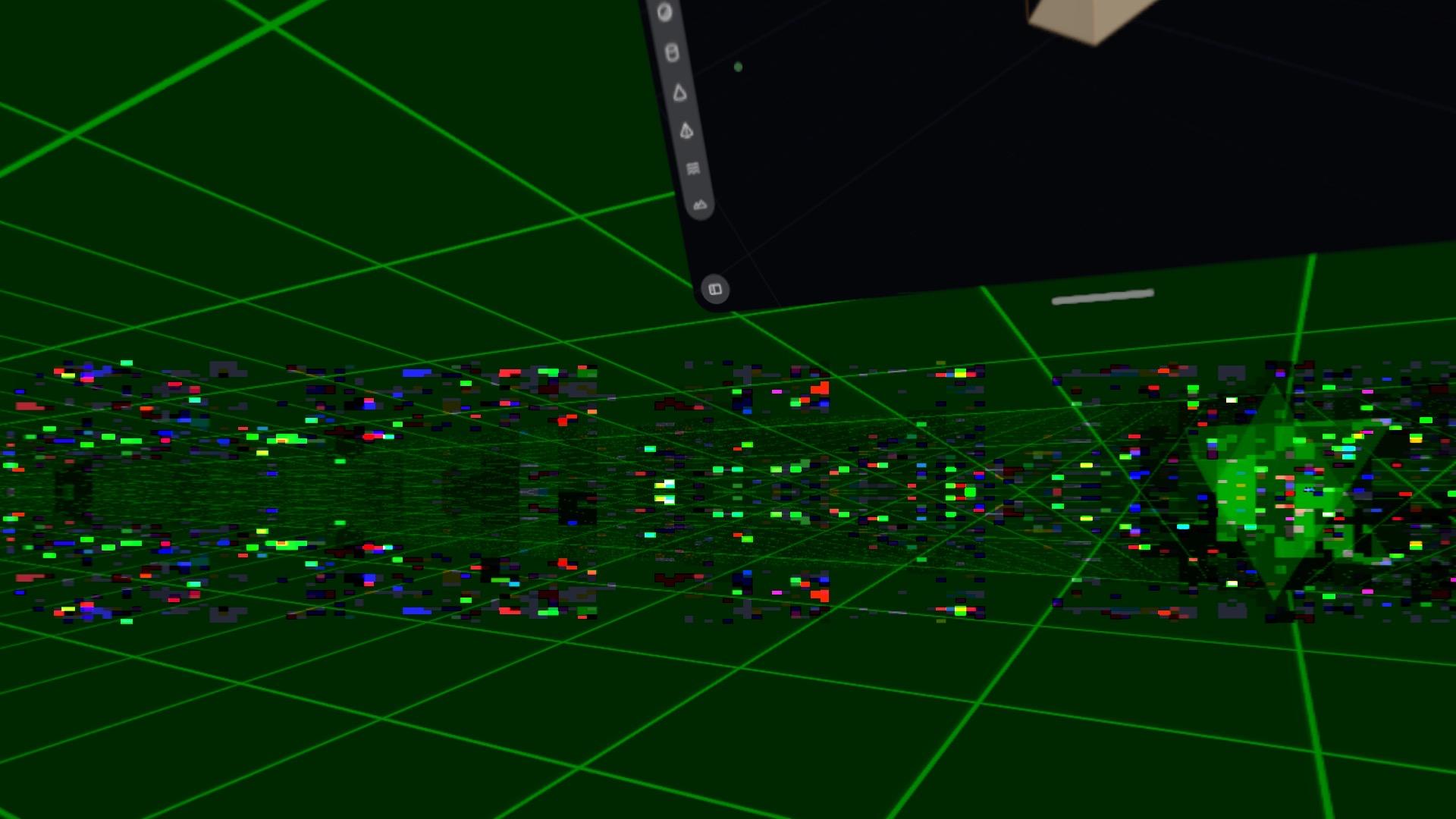

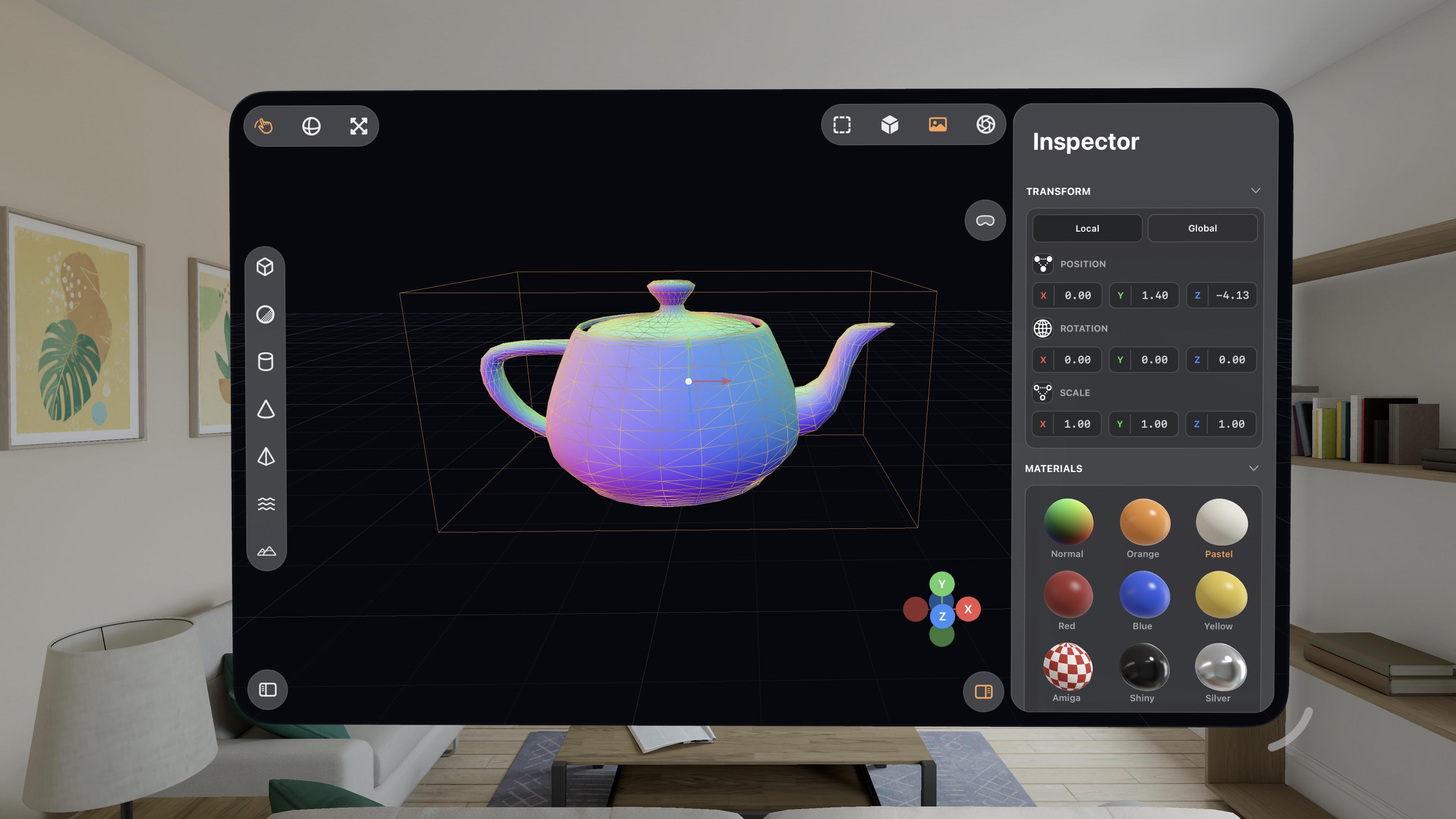

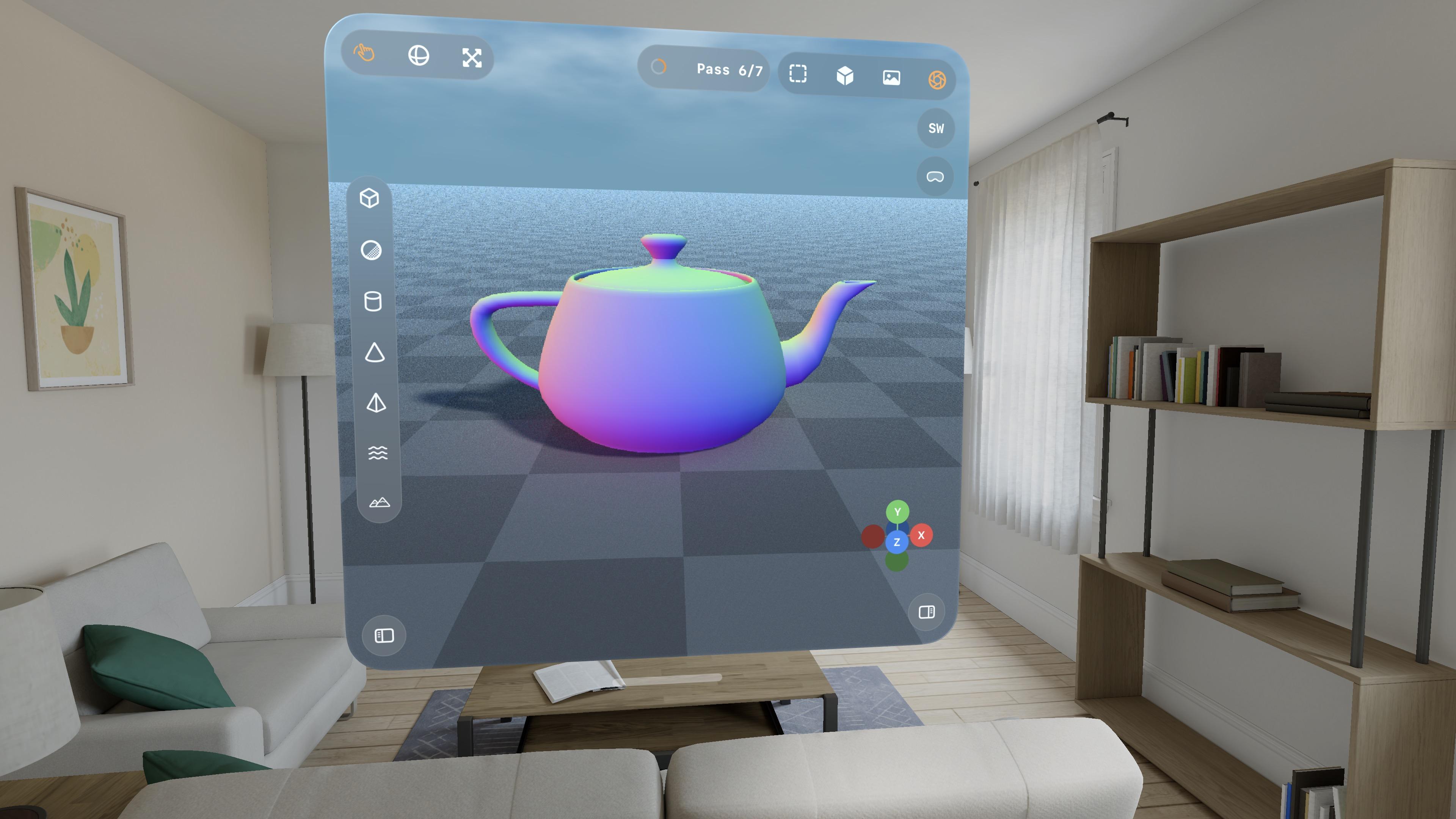

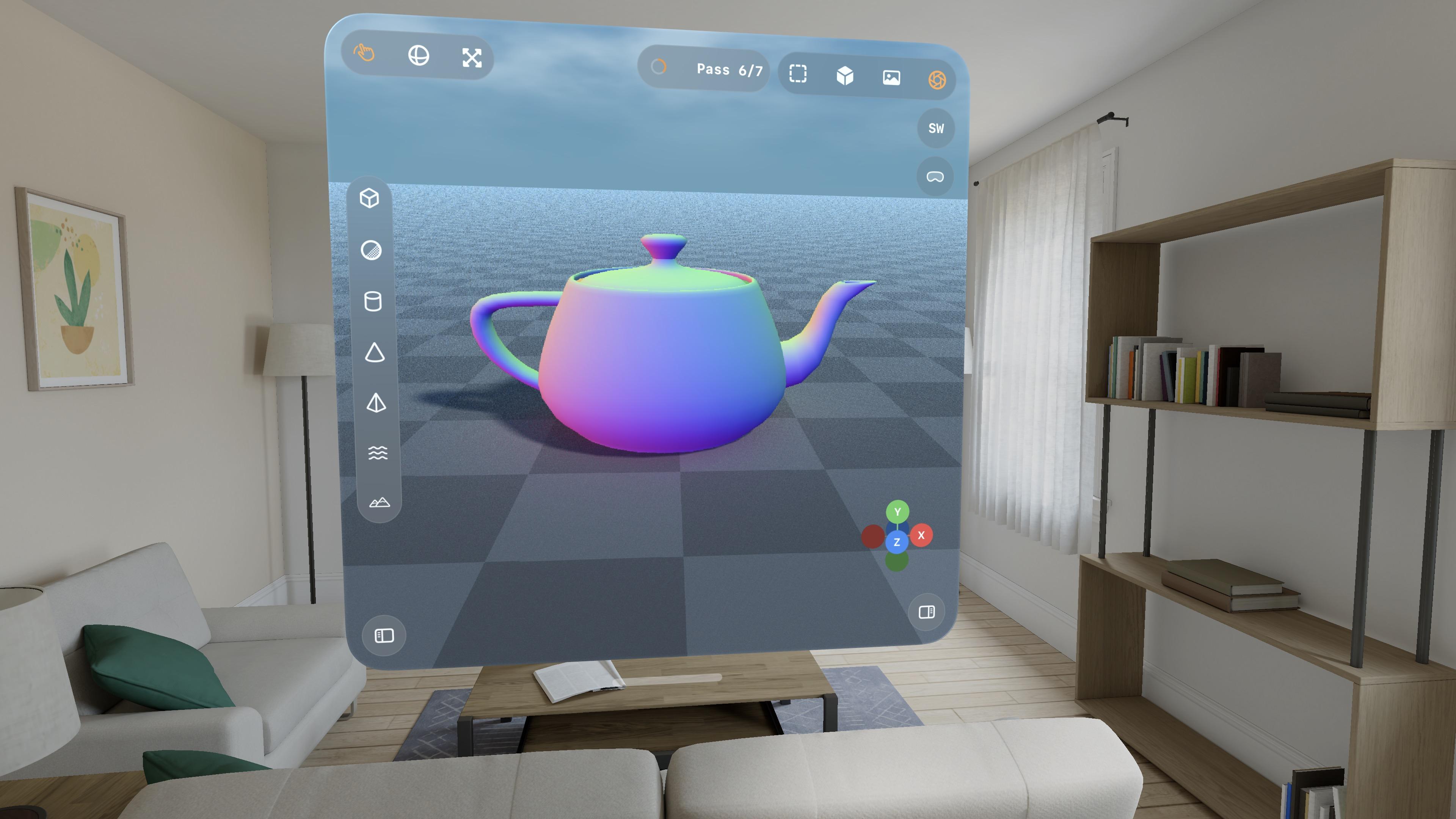

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

-

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

@stroughtonsmith I knew you would do it

-

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

@stroughtonsmith that is extremely cool

-

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

@stroughtonsmith to be honest, that concept might be one of the greatest use cases for Vision Pro. Imagine two controllers in your hand for much more precise input and you've got an awesome product

-

@stroughtonsmith I knew you would do it

@gklka I blame you

-

I love how visionOS, uniquely, *explodes* when rendering goes wrong.

Hello [MacBook] Neo.

@stroughtonsmith I imagine this screen still haunts some Apple engineers

-

I figured I needed some kind of glass shader

@stroughtonsmith but is it Liquid Glass

-

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

I figured why not use RealityKit for the material previews, so now they are actual spheres.

Miraculously, it all still works — the Metal viewport, the Metal immersive scene, and the RealityKit UI elements, but it's very clear the Vision Pro (M2) doesn't have much headroom to build an actual app around this stuff

-

I figured why not use RealityKit for the material previews, so now they are actual spheres.

Miraculously, it all still works — the Metal viewport, the Metal immersive scene, and the RealityKit UI elements, but it's very clear the Vision Pro (M2) doesn't have much headroom to build an actual app around this stuff

The raytracer is, for now, a no-go on visionOS. It's possible I could throttle it and stay within visionOS' systemwide render budget. But it's probably worth improving the RT performance a bunch on its own first before I come back and try it here. I might run out of steam on this prototype before then.

This entire app project is still in my 'Temp' folder, where throwaway projects live
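
For readers wondering what "throttling" a raytracer could look like: one generic approach (an assumption here, not something the thread describes) is progressive rendering, where each display frame renders only as many image tiles as fit in a fixed time slice and the rest wait for later frames. The Python sketch below shows only the scheduling idea; `make_tiles`, `render_slice`, and the injected `clock` are hypothetical names, and a real implementation would dispatch GPU work rather than call a Python function per tile.

```python
import time
from collections import deque

def make_tiles(width, height, tile):
    """Queue the top-left corners of all tiles covering a width x height image."""
    return deque((x, y)
                 for y in range(0, height, tile)
                 for x in range(0, width, tile))

def render_slice(pending, render_tile, budget_s, clock=time.perf_counter):
    """Render queued tiles until the time budget is spent.

    Always renders at least one tile per call so the image keeps converging.
    Returns the number of tiles rendered in this slice.
    """
    start = clock()
    done = 0
    while pending:
        render_tile(pending.popleft())
        done += 1
        if clock() - start >= budget_s:
            break
    return done

if __name__ == "__main__":
    pending = make_tiles(256, 256, 32)
    frames = 0
    while pending:
        # Stand-in for tracing one tile; a real renderer would do GPU work here.
        render_slice(pending, lambda tile: time.sleep(0.0005), budget_s=0.002)
        frames += 1
    print("image completed over", frames, "frames")
```

The budget is the tuning knob: a smaller slice leaves more time per frame for everything else at the cost of a slower-converging image.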

-

The raytracer is, for now, a no-go on visionOS. It's possible I could throttle it and stay within visionOS' systemwide render budget. But it's probably worth improving the RT performance a bunch on its own first before I come back and try it here. I might run out of steam on this prototype before then.

This entire app project is still in my 'Temp' folder, where throwaway projects live

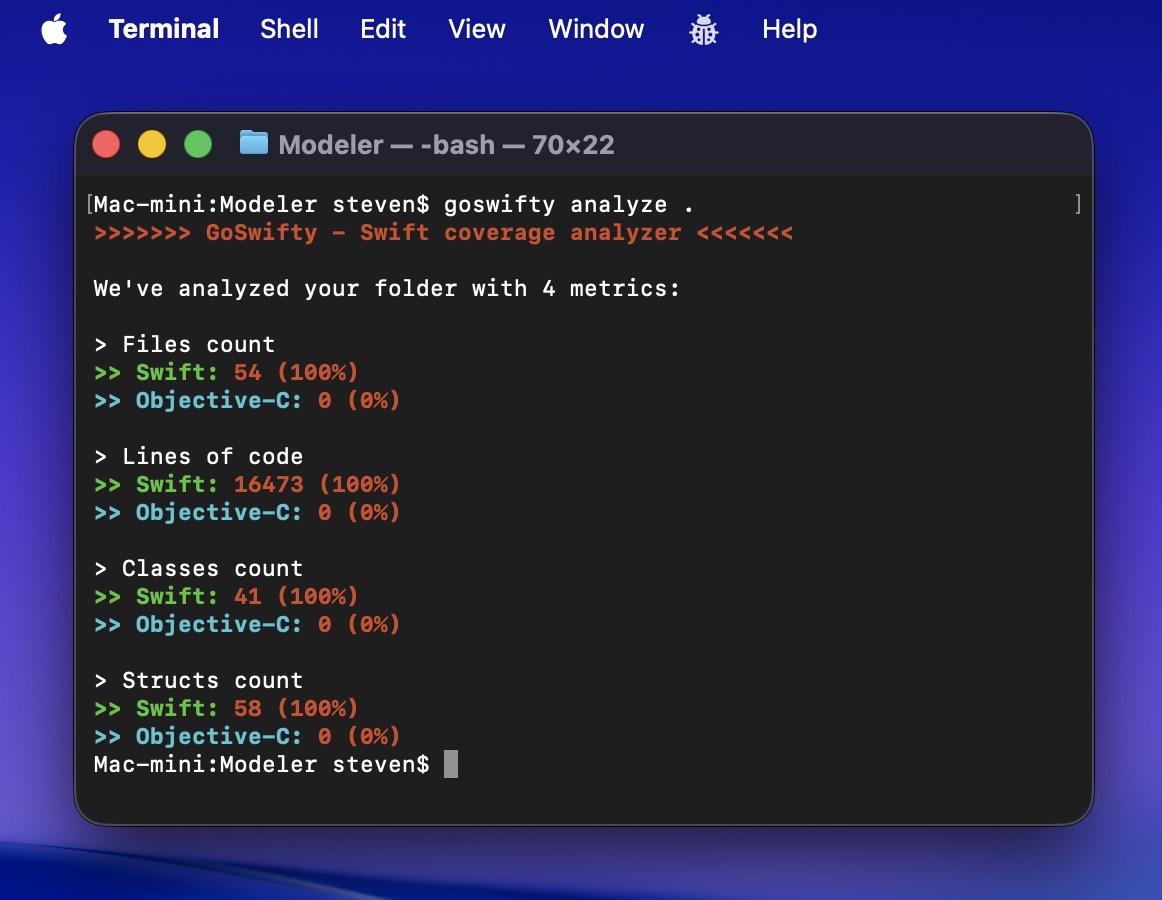

This project, which runs on iPhone, iPad, Mac, and Vision Pro (with Immersive Space), is now 16.5K lines of code