Back at it

-

@gregggreg oh no, this runs on devices older than that. I think it needs an M1/A14-class device or higher

@stroughtonsmith using GPU in background tasks requires M3+ iPad I thought?

-

@gregggreg do you have a source for that? I haven’t seen that documented

-

@stroughtonsmith https://developer.apple.com/forums/thread/797538?answerId=854825022#854825022

"...which is that the iPhone 16 Pro does not support background GPU. I don't know of anywhere we formally state exactly which devices support it, but I believe it's only supported on iPads with an M3 or better (and not supported on any iPhone)."

-

The raytrace operation will now be dispatched into the background on iOS, allowing for long-running background tasks

iPadOS has never been better for rich, complex, desktop-class apps. Almost all of the old barriers and blockers are gone.

Sadly, Apple waited until most developers had run out of patience with the platform.

If this thing had Xcode, a real Xcode, it would be effectively complete

-

(Maybe I should vibe code Xcode, next.)

-

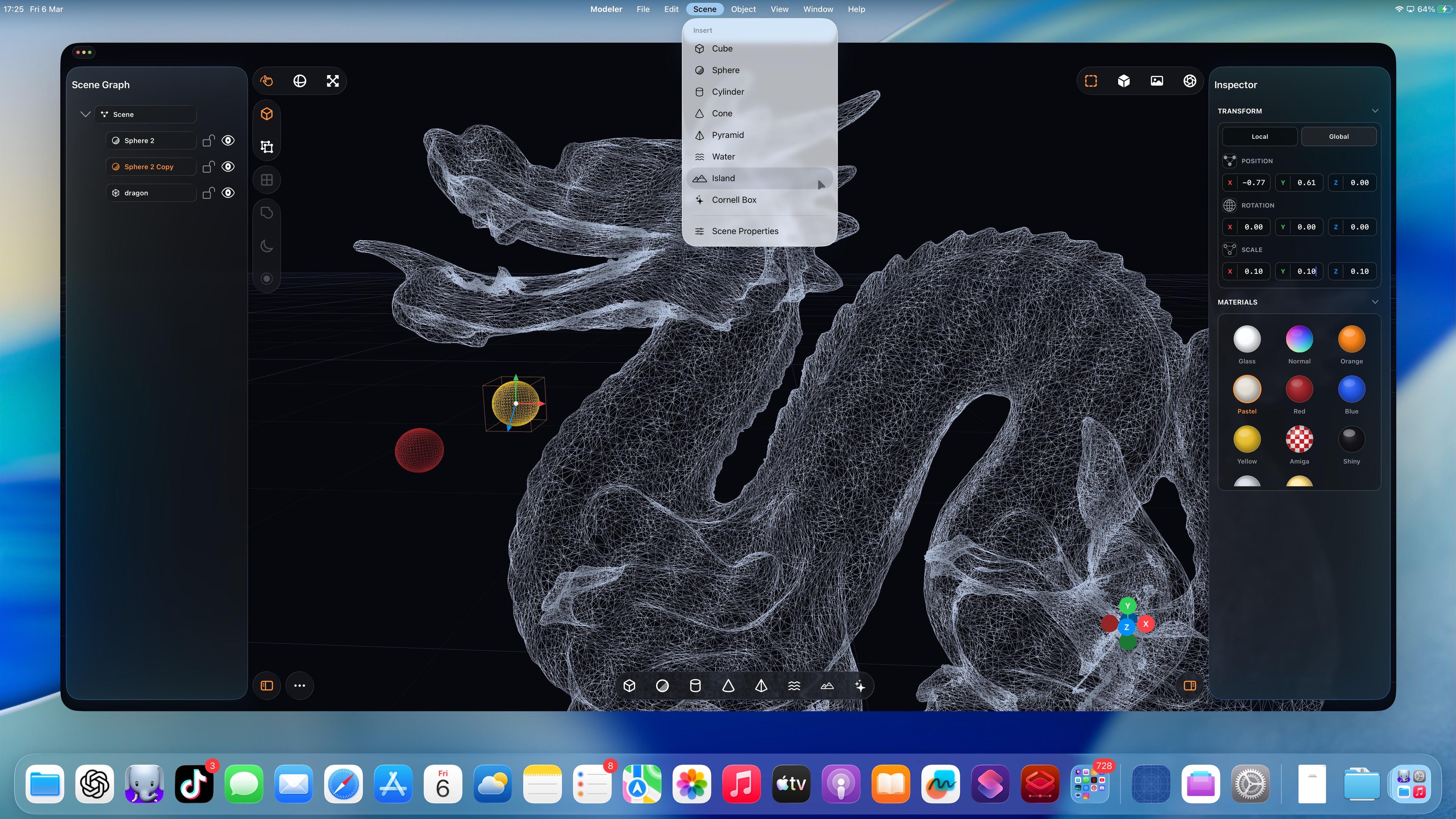

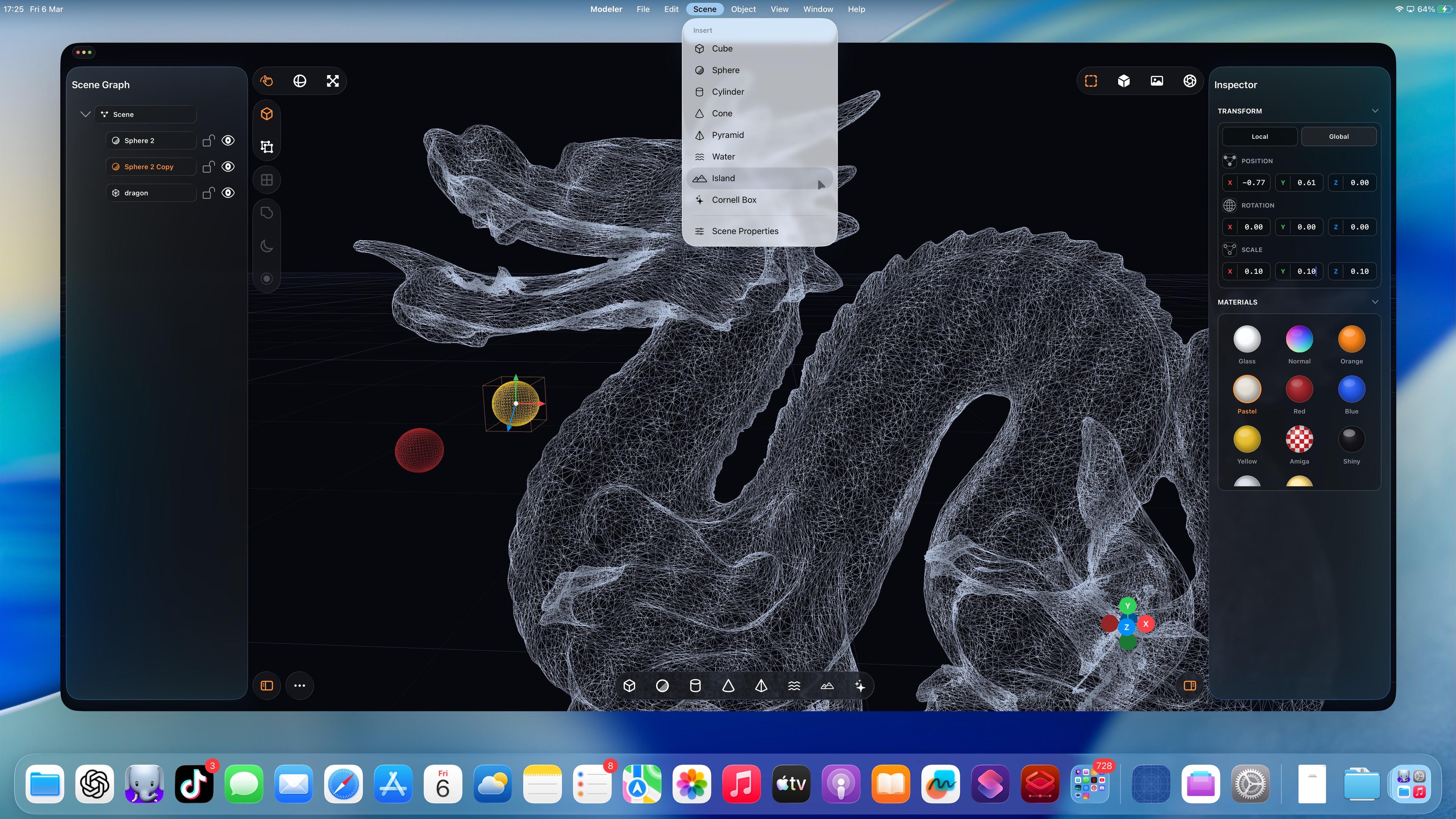

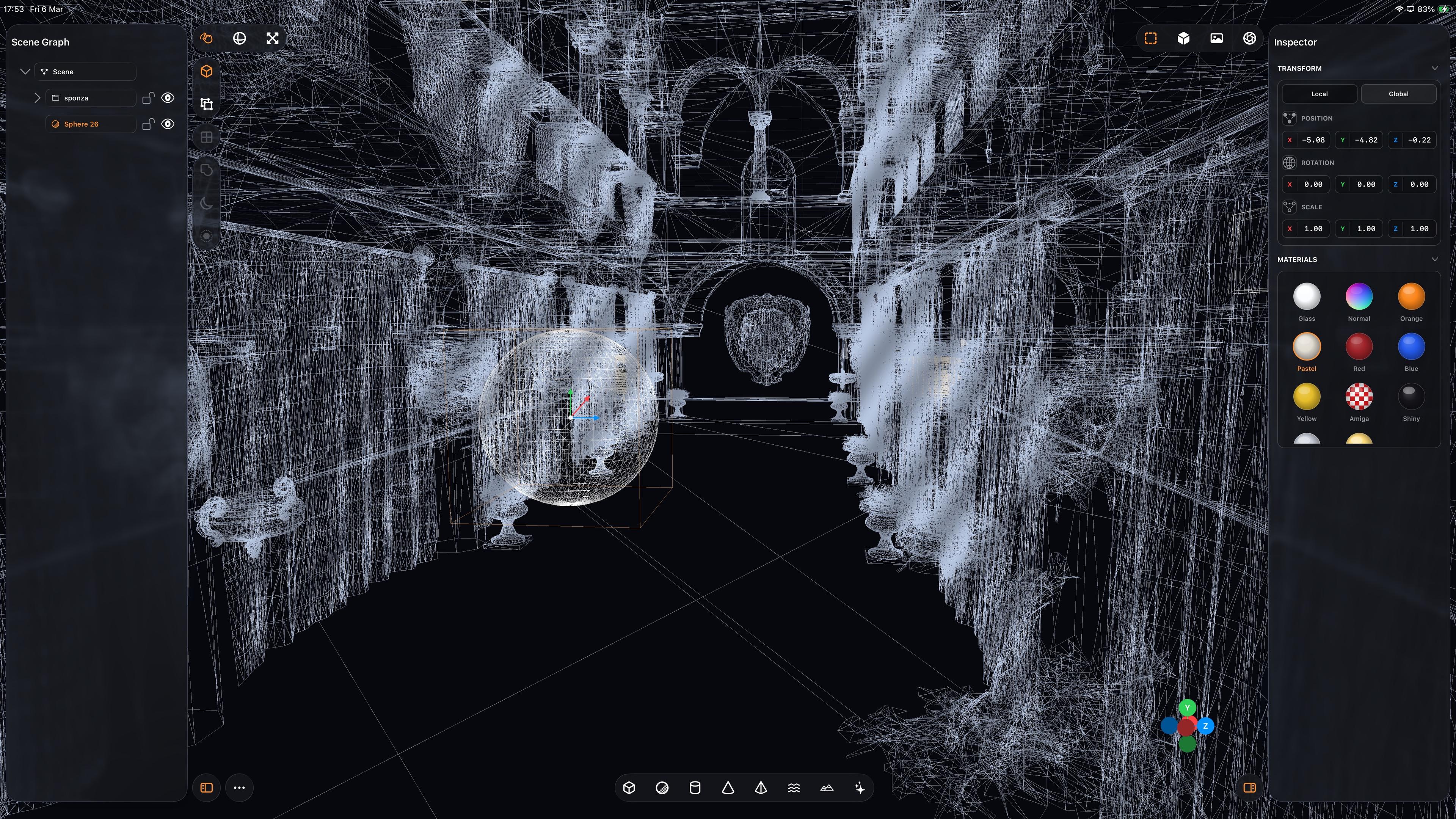

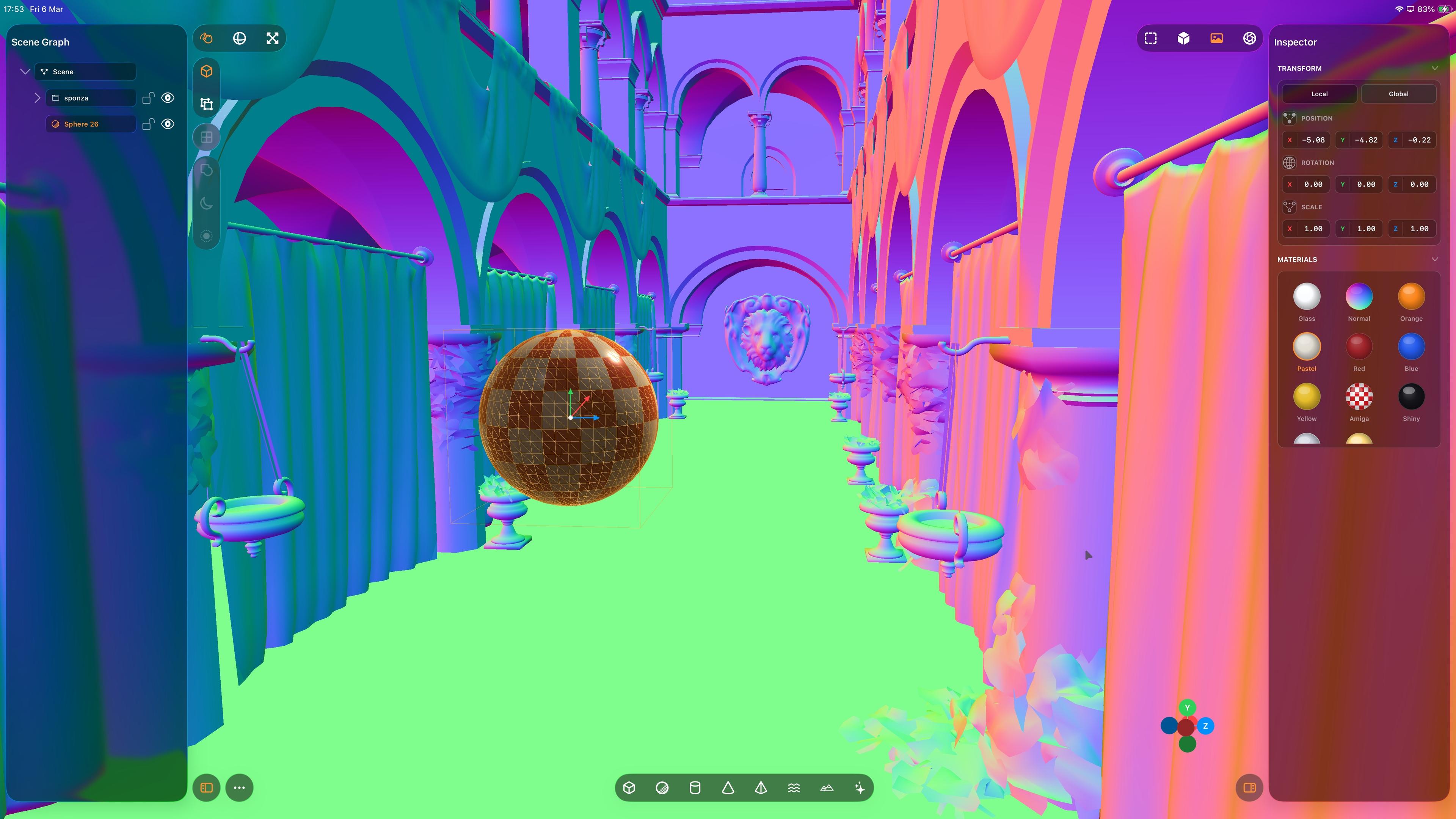

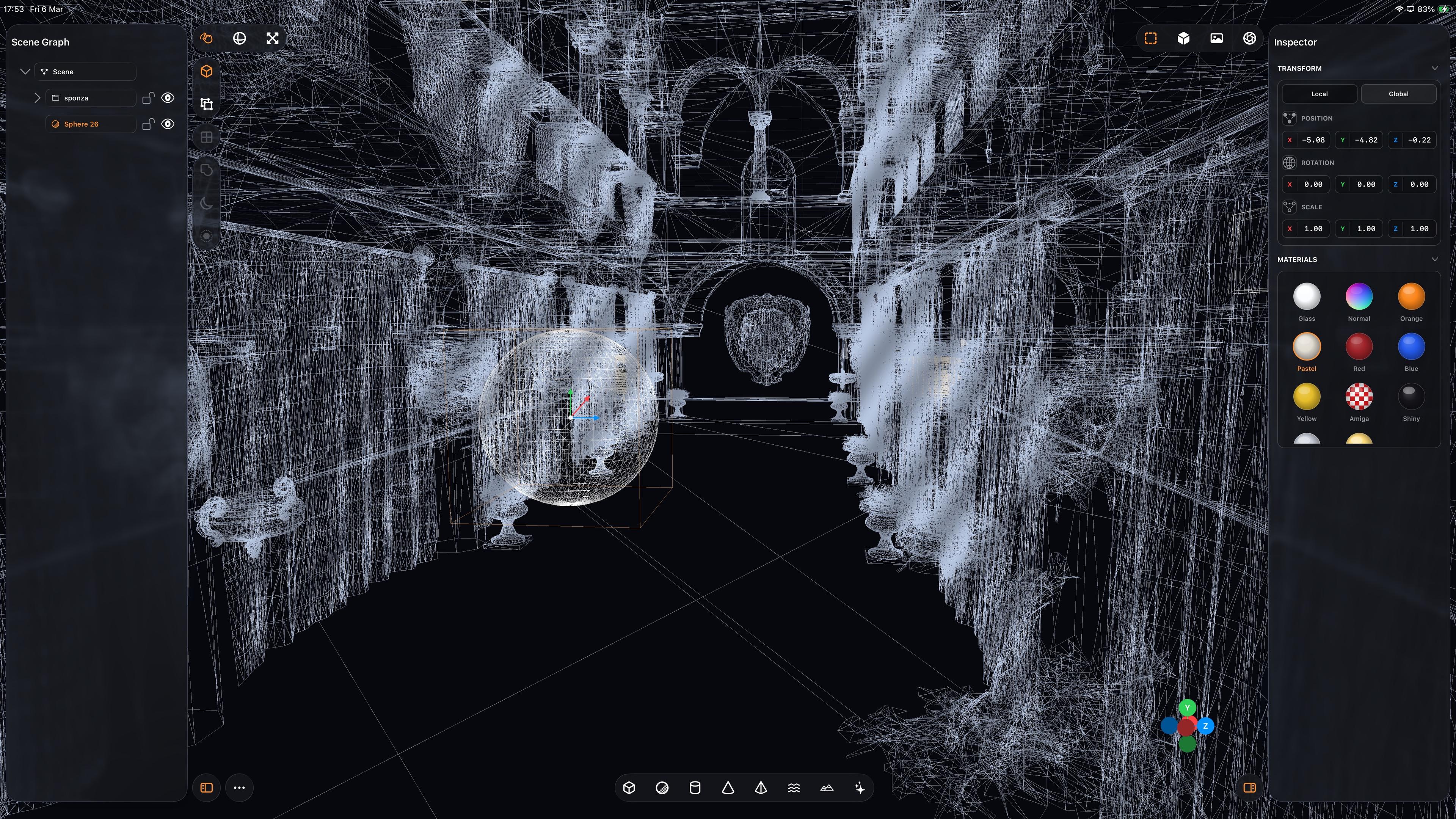

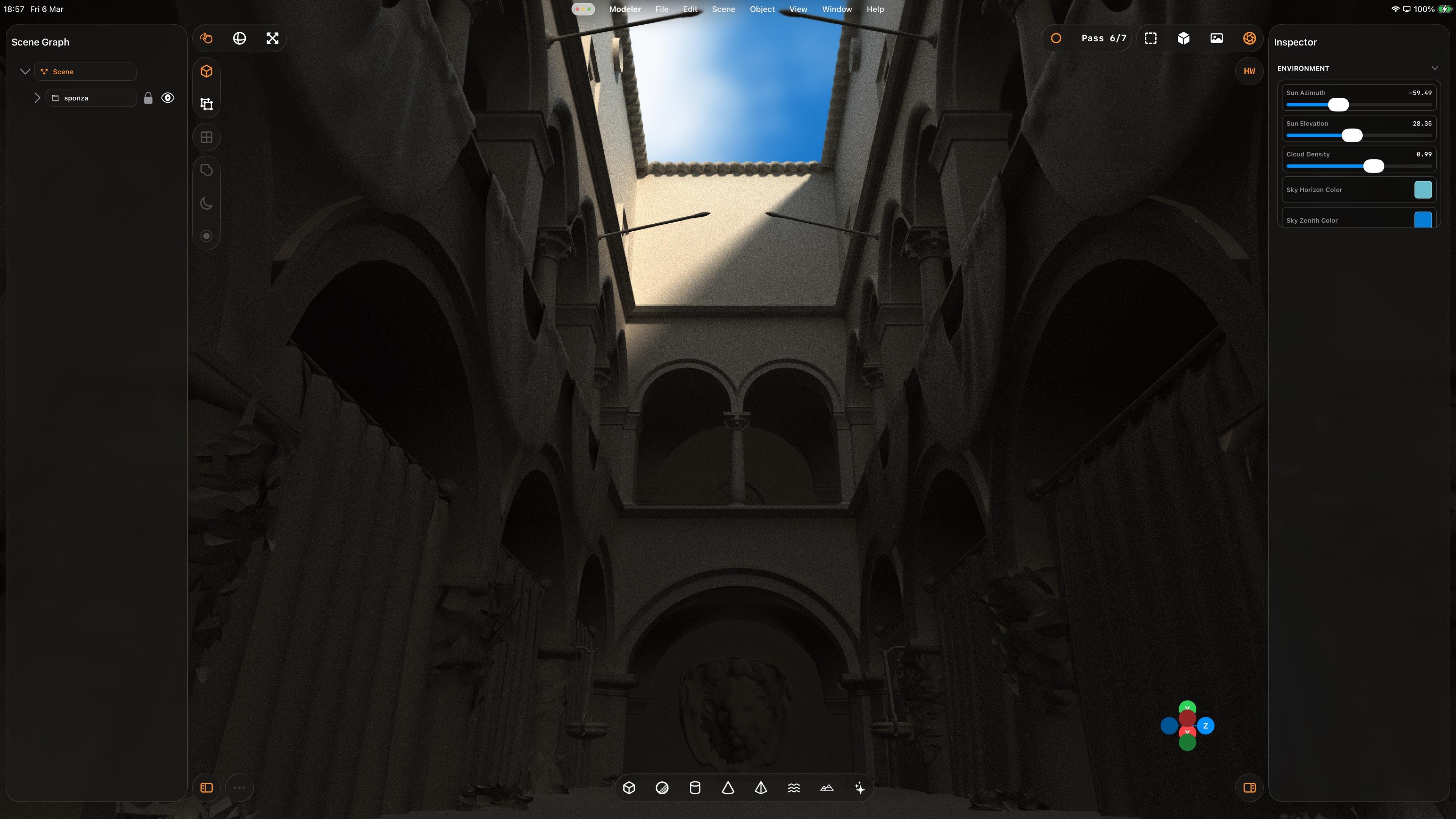

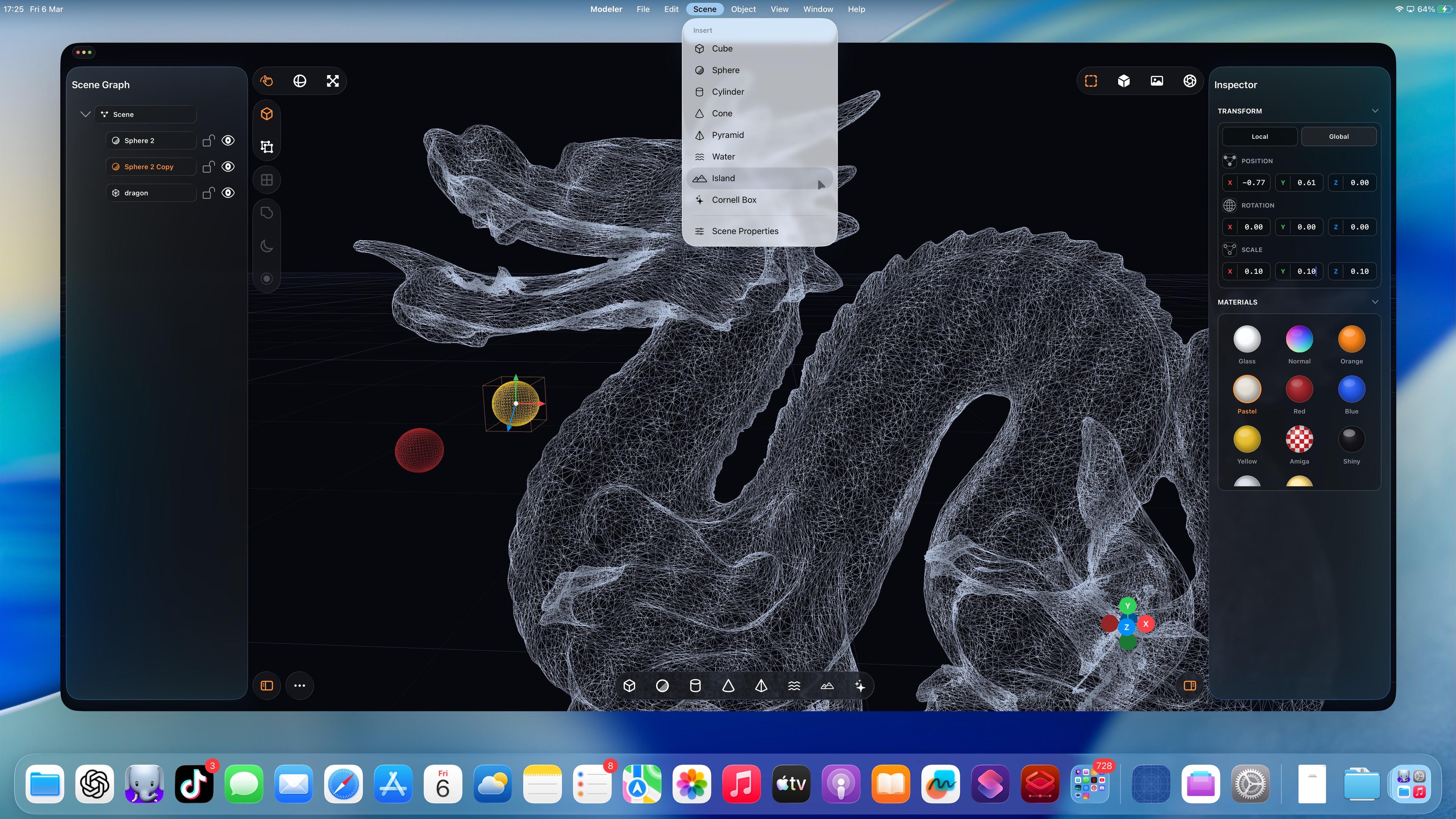

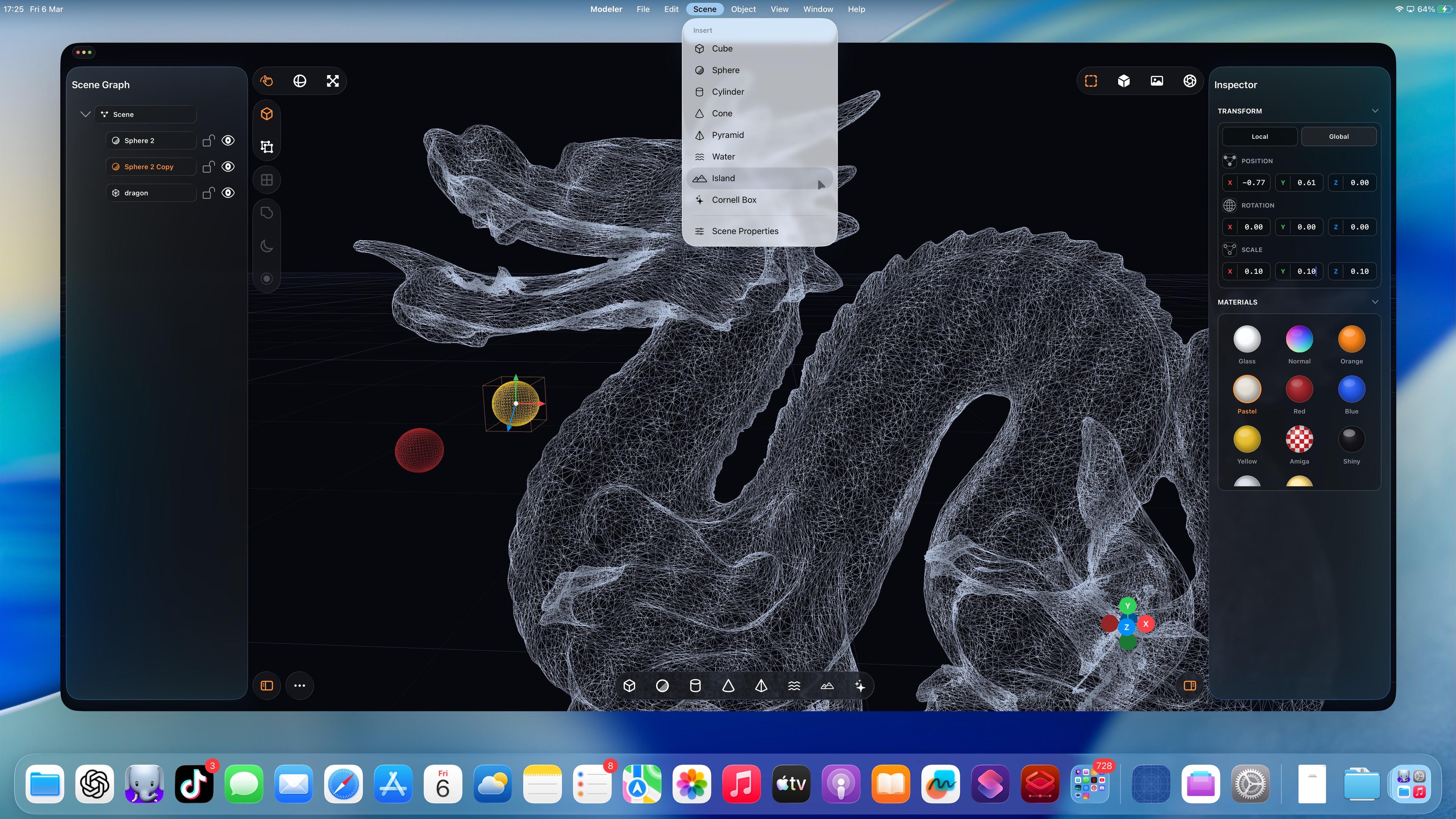

Complex scenes are kinda fun

-

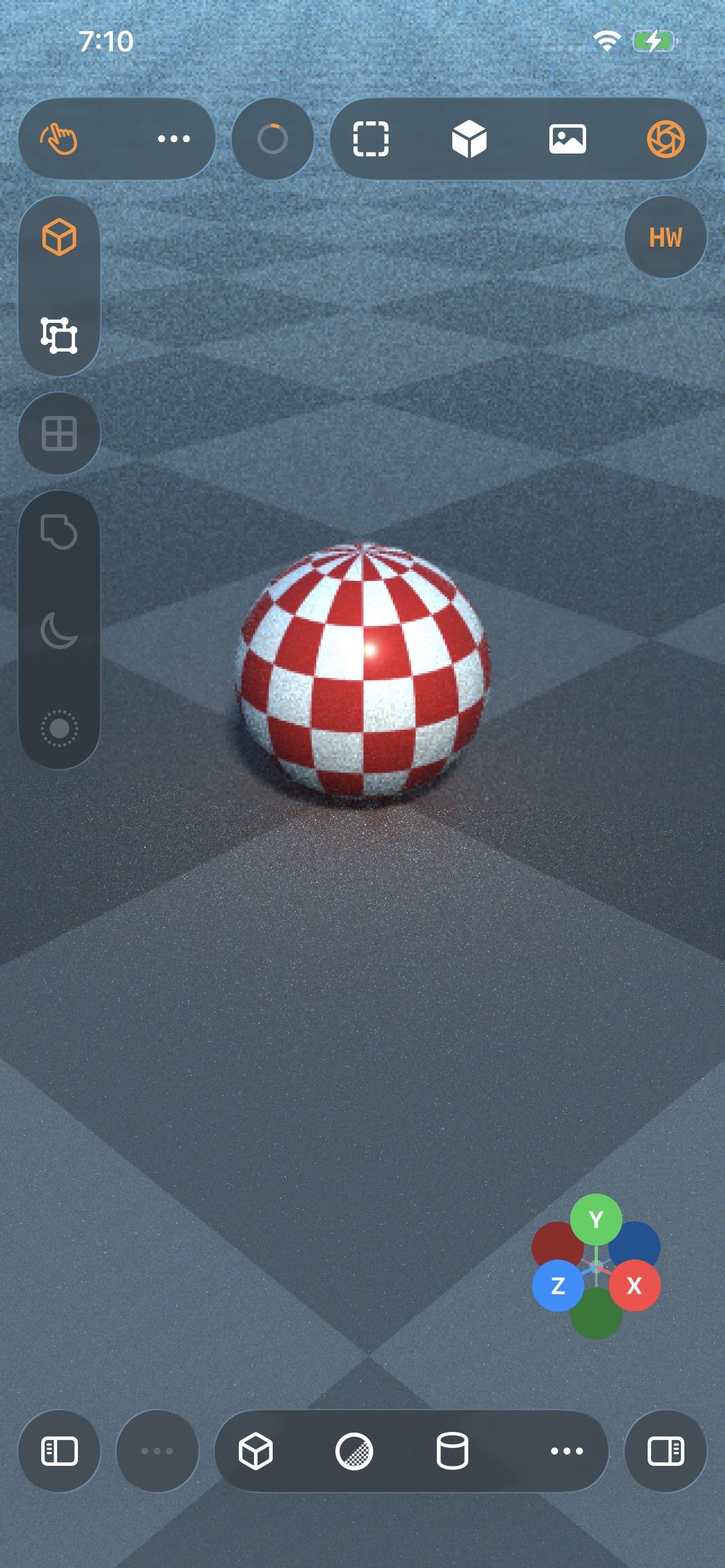

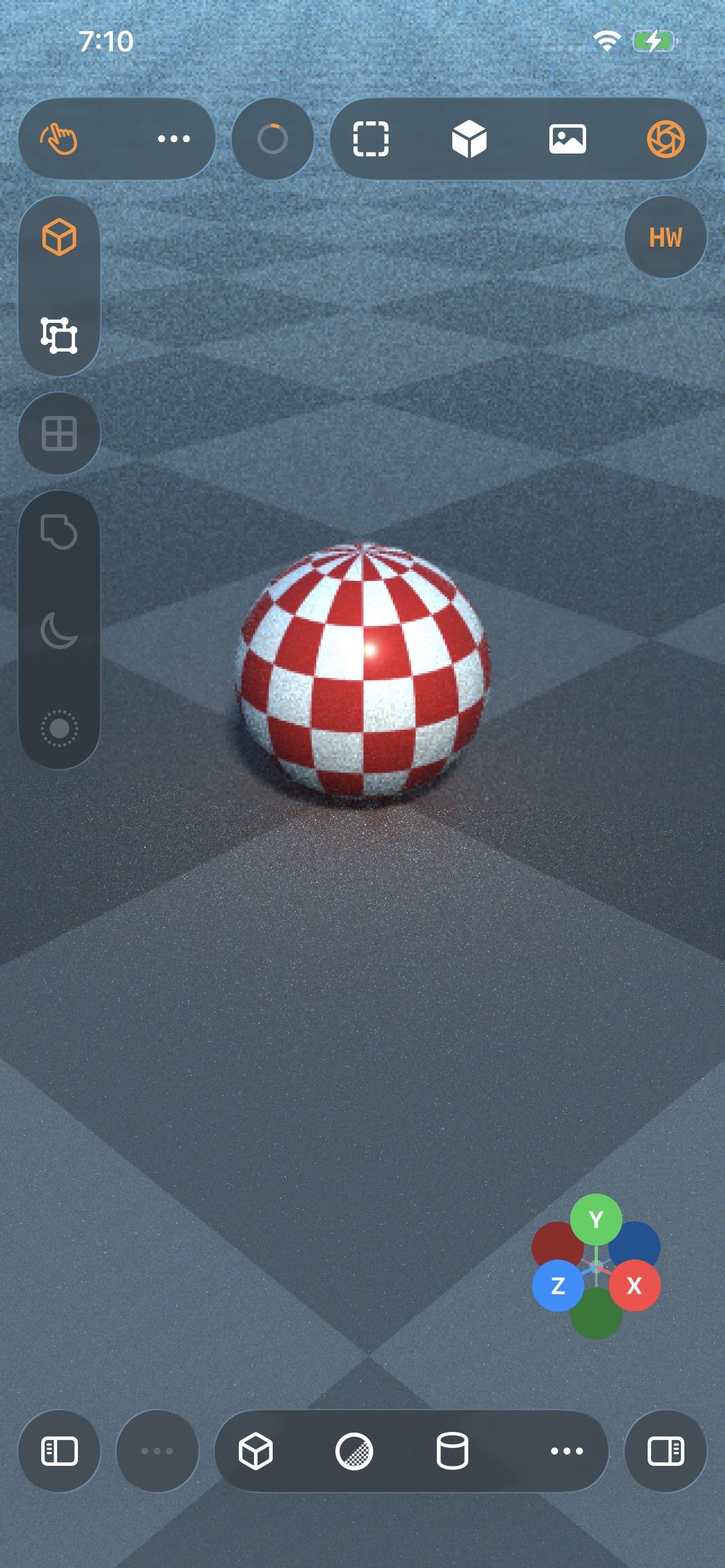

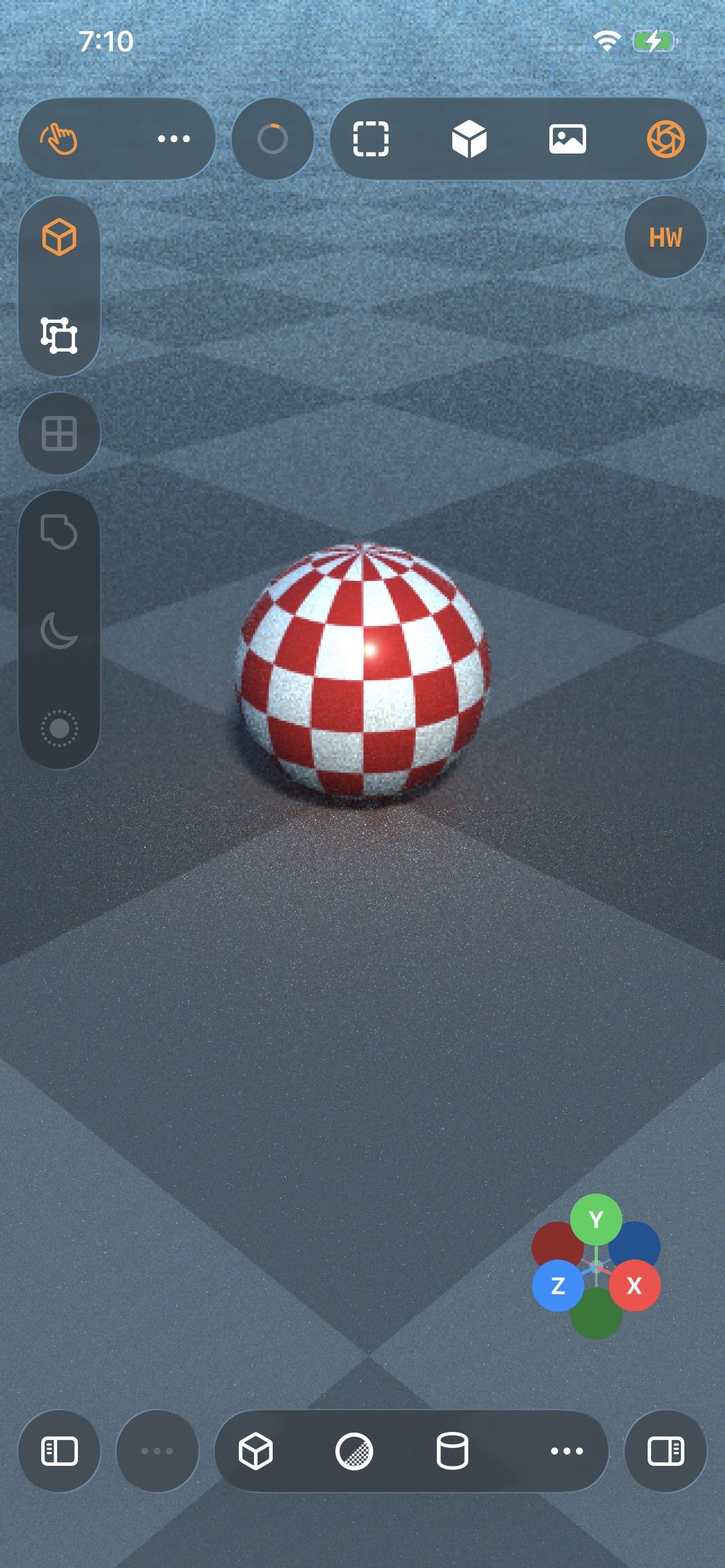

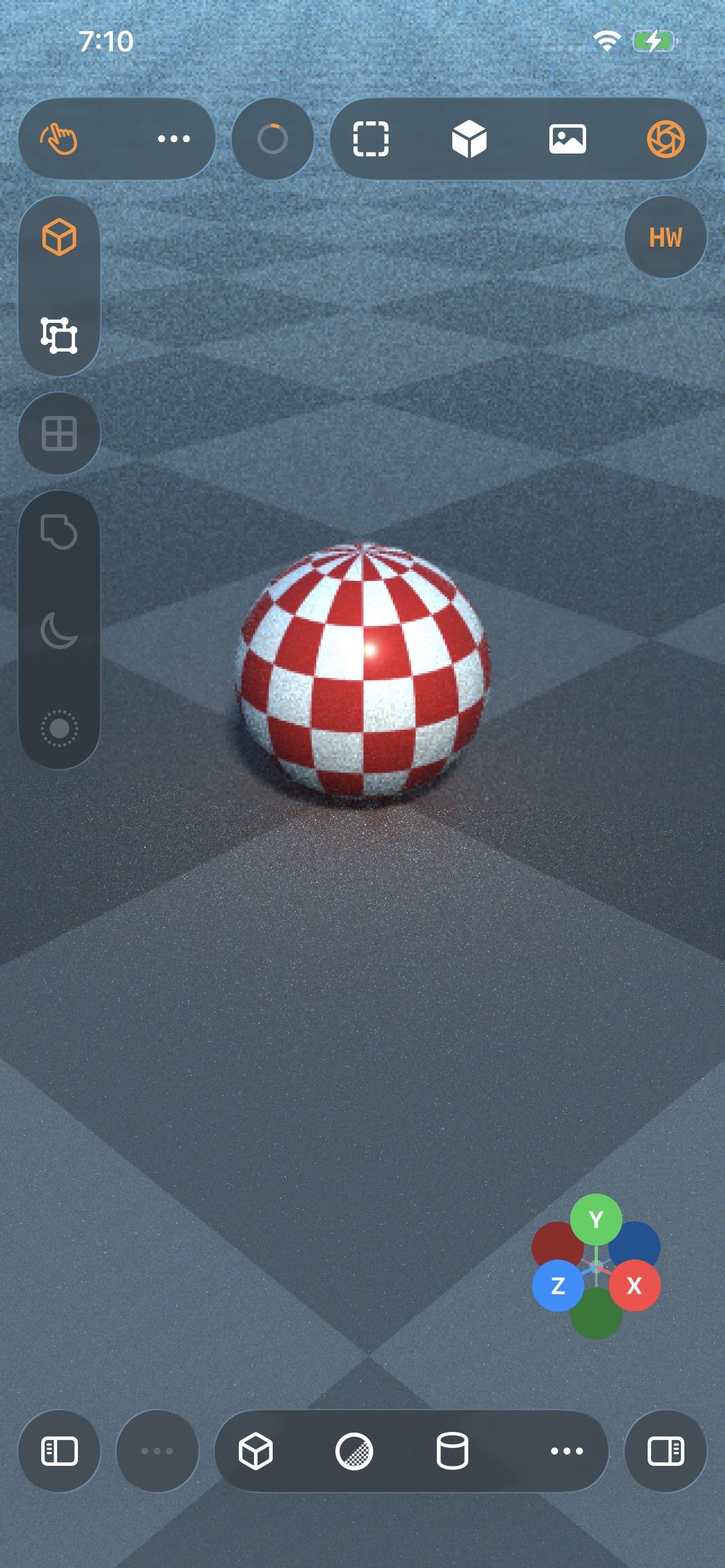

Even my iPhone 12 Pro Max can raytrace!

Which I guess is not all that surprising, considering the A14 chip is the same generation as the M1

-

(Maybe I should vibe code Xcode, next.)

@stroughtonsmith The moment you vibe code it and it doesn't have a modal pop-up whenever it connects to the watch, we will know we've achieved the singularity

-

Even my iPhone 12 Pro Max can raytrace!

Which I guess is not all that surprising, considering the A14 chip is the same generation as the M1

@stroughtonsmith Petition to rename all A-series SoCs as M-series "Mini".

A14 -> M1 Mini

A18 Pro -> M4 Mini

The benchmarks don't lie.

-

Even my iPhone 12 Pro Max can raytrace!

Which I guess is not all that surprising, considering the A14 chip is the same generation as the M1

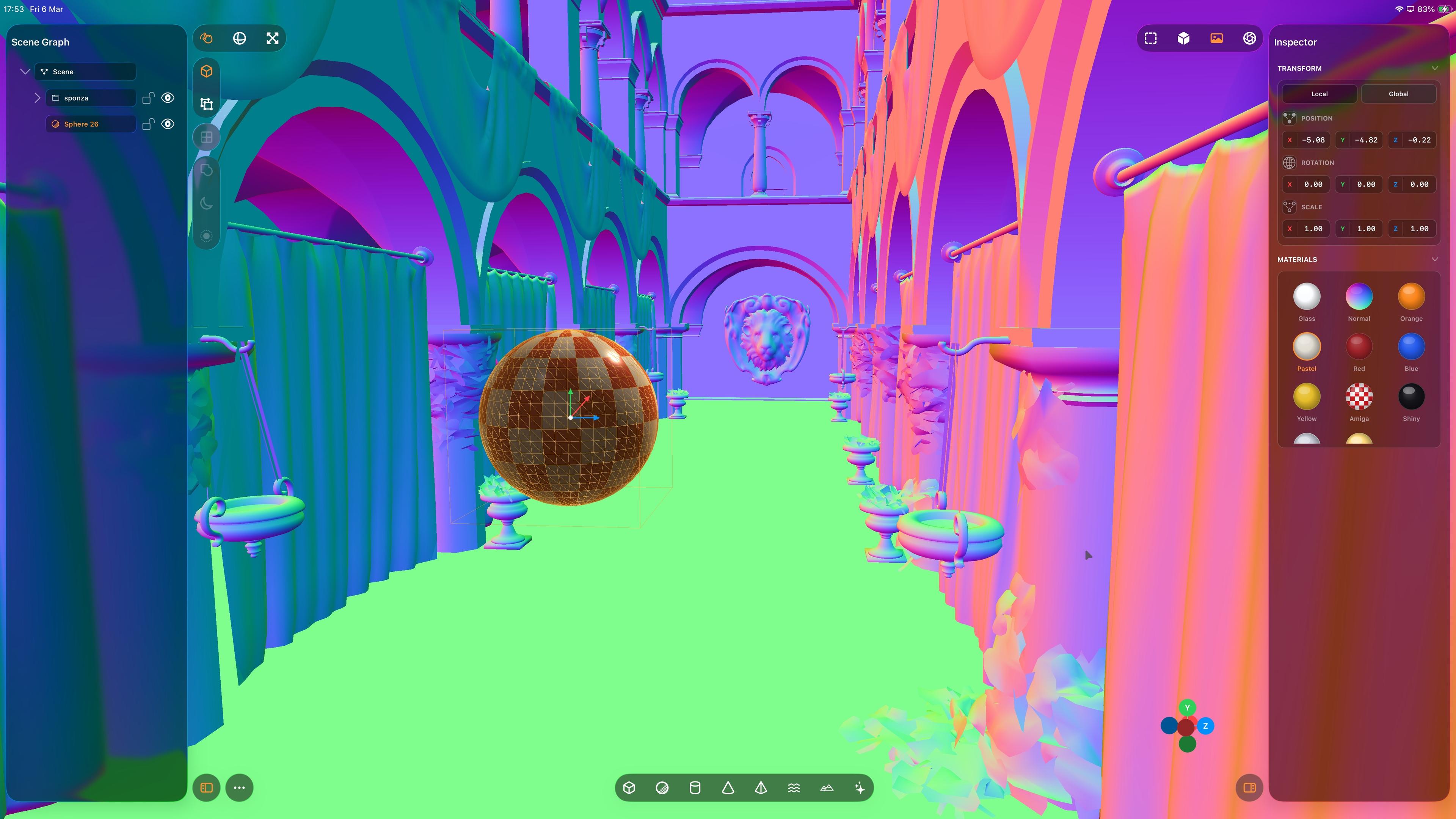

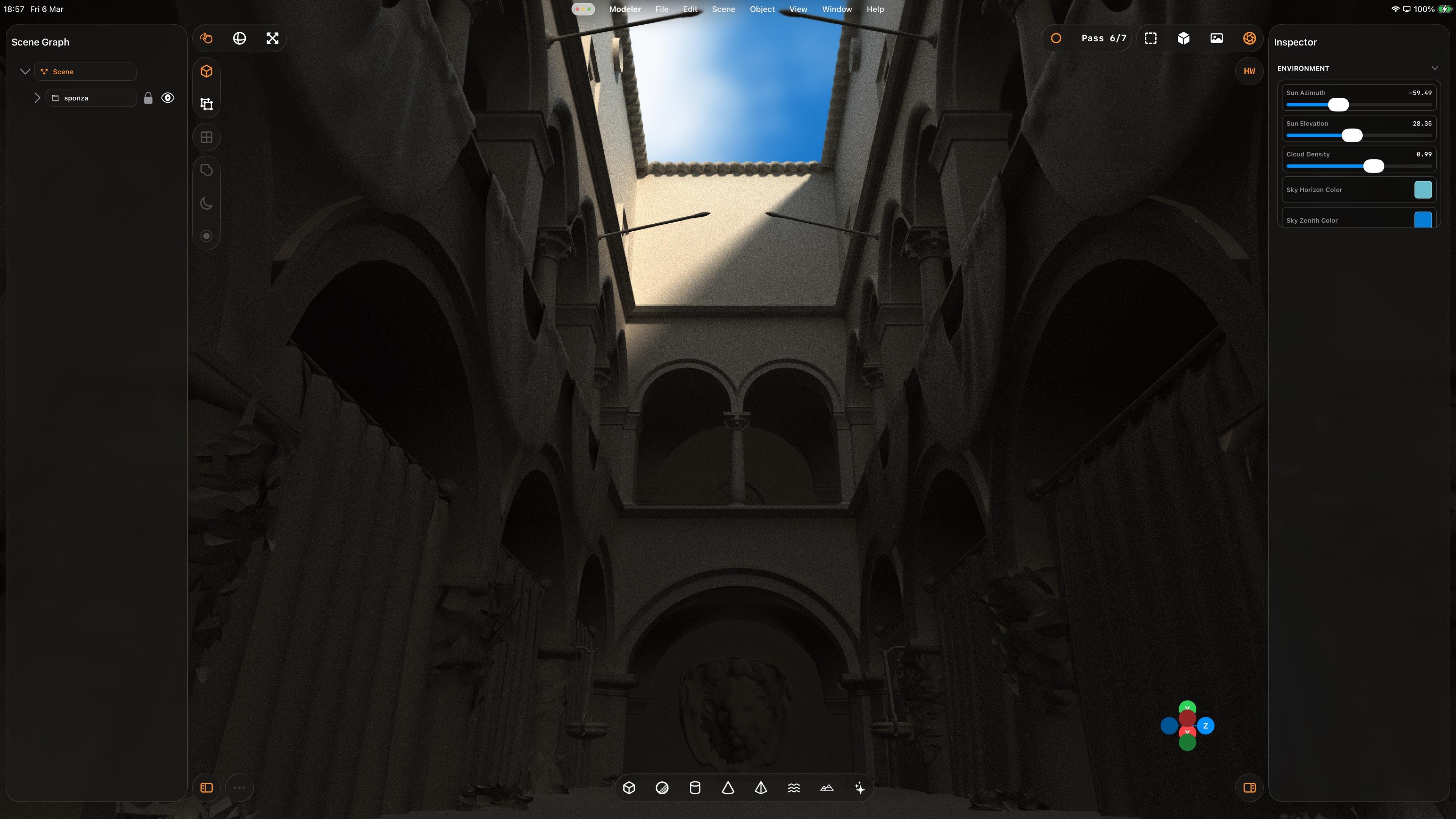

That’s a whole lot of raytracing from a little iPad. Biggest render to date, at 5120x2880 — took about an hour to get to the final pass.

Crashed right at the end, so I didn’t get to take a picture of the final output 🥲

The raytracer still needs a bunch of work, but it’s more and more capable day by day

-

iPadOS has never been better for rich, complex, desktop-class apps. Almost all of the old barriers and blockers are gone.

Sadly, Apple waited until most developers had run out of patience with the platform.

If this thing had Xcode, a real Xcode, it would be effectively complete

@stroughtonsmith Much like with the windowed UI, I feel like Apple is putting more work into avoiding Xcode, after previously making Swift Playgrounds nearly capable enough. It seems simpler to just have Xcode at this point.

-

(Maybe I should vibe code Xcode, next.)

@stroughtonsmith yes please! It would be interesting to see how much of the toolchain could be included out of the box (not App Store compatible, of course)

-

That’s a whole lot of raytracing from a little iPad. Biggest render to date, at 5120x2880 — took about an hour to get to the final pass.

Crashed right at the end, so I didn’t get to take a picture of the final output 🥲

The raytracer still needs a bunch of work, but it’s more and more capable day by day

I was tired of running into raytracer resource limits, so I did a 4+ KLOC rewrite that uses wavefronts and a scheduler. It seems a lot better for complex scenes and lots of glass. All the work spent optimizing the previous renderer's performance ramp and failure recovery pays off now that the new renderer is so much more robust.
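The post doesn't describe the new renderer's internals. As a rough illustration of what "wavefronts and a scheduler" commonly means in a path tracer, here is a minimal CPU-side Swift sketch under a toy-scene assumption. Path state lives in an explicit queue. Each wave runs an extend (intersection) stage over the whole queue, then a shade stage, and finished paths are compacted out. A scheduler refills the queue from pending pixels without ever exceeding a fixed in-flight capacity, which is one way to keep memory bounded regardless of image size. `PathState`, `intersect`, and `renderWavefront` are hypothetical names, and the intersection rule is a stand-in for a real BVH query. A GPU version would typically run each stage as its own compute dispatch.

```swift
// Minimal CPU sketch of wavefront path tracing with a capacity-limited scheduler.
// All names are hypothetical; the "scene" is a toy stand-in, not a real renderer.

/// Explicit per-path state, kept in a queue instead of on a per-pixel call stack.
struct PathState {
    let pixel: Int              // pixel this path contributes to
    var depth: Int = 0          // surface bounces so far
    var throughput: Double = 1.0
}

enum Hit { case escaped, surface }

/// Toy stand-in for a BVH intersection query (assumption, not a real scene):
/// a path escapes to the sky once its depth equals pixel % 3.
func intersect(_ path: PathState) -> Hit {
    path.depth == path.pixel % 3 ? .escaped : .surface
}

/// Renders `pixelCount` pixels, never keeping more than `capacity` paths in flight.
func renderWavefront(pixelCount: Int, capacity: Int, maxDepth: Int = 8,
                     albedo: Double = 0.5, skyRadiance: Double = 1.0)
    -> (image: [Double], waves: Int, peakInFlight: Int) {
    precondition(capacity > 0, "capacity must be positive")
    var image = [Double](repeating: 0, count: pixelCount)
    var nextPixel = 0               // next pixel still waiting for a path
    var active: [PathState] = []    // the current wavefront
    var waves = 0
    var peakInFlight = 0

    while nextPixel < pixelCount || !active.isEmpty {
        // Scheduler: top up the wavefront from pending pixels, up to capacity.
        while active.count < capacity && nextPixel < pixelCount {
            active.append(PathState(pixel: nextPixel))
            nextPixel += 1
        }
        peakInFlight = max(peakInFlight, active.count)
        waves += 1

        // Extend stage: one pass of intersection queries over the whole wavefront.
        let hits = active.map(intersect)

        // Shade stage: escaped paths deposit radiance and terminate; the rest
        // bounce and are compacted into the next wavefront.
        var survivors: [PathState] = []
        survivors.reserveCapacity(active.count)
        for (i, hit) in hits.enumerated() {
            var path = active[i]
            switch hit {
            case .escaped:
                image[path.pixel] += path.throughput * skyRadiance
            case .surface:
                path.throughput *= albedo
                path.depth += 1
                if path.depth < maxDepth { survivors.append(path) }  // depth cap drops the rest
            }
        }
        active = survivors
    }
    return (image: image, waves: waves, peakInFlight: peakInFlight)
}
```

For example, `renderWavefront(pixelCount: 10, capacity: 4)` finishes in 6 waves with at most 4 paths in flight, producing `[1.0, 0.5, 0.25, 1.0, 0.5, 0.25, 1.0, 0.5, 0.25, 1.0]`. The design trade-off is that short paths free their slots early and new pixels take their place, so the working set stays fixed instead of growing with resolution or scene complexity.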

-

Also, I've switched my Codex model to GPT 5.4. OpenAI says it outperforms gpt-5.3-codex and has more than double the context window, so I figured a renderer rewrite was the right time to step up a level.

-

iPadOS has never been better for rich, complex, desktop-class apps. Almost all of the old barriers and blockers are gone.

Sadly, Apple waited until most developers had run out of patience with the platform.

If this thing had Xcode, a real Xcode, it would be effectively complete

An Xcode you can develop on with only an iPad, or an Xcode on a laptop that you can use to develop for the iPad only?

-

Also, I've switched my Codex model to GPT 5.4. OpenAI says it outperforms gpt-5.3-codex and has more than double the context window, so I figured a renderer rewrite was the right time to step up a level.

I feel like I need a bunch of Apple's glass spheres on my desk to try to fine-tune my raytracer

-

Also, I've switched my Codex model to GPT 5.4. OpenAI says it outperforms gpt-5.3-codex and has more than double the context window, so I figured a renderer rewrite was the right time to step up a level.

@stroughtonsmith do you think Codex is better than Claude Code?

-

Also, I've switched my Codex model to GPT 5.4. OpenAI says it outperforms gpt-5.3-codex and has more than double the context window, so I figured a renderer rewrite was the right time to step up a level.

@stroughtonsmith context starts to fall off at around 260k tokens, IIRC. It's very easy to pollute, so be mindful of that if you do see some issues.

5.4 by all accounts is a huge step up.
