i'm at a loss for words after reading a paper about reformatting code using an ML model that has a measured statistical quantity A_c which says how often the reformatted code behaves the same as the original

-

@whitequark compare and contrast the Extreme Programming philosophy, in which a code change doesn't count as "refactoring" unless all observable behavior is identical

@ireneista i like how it starts with this (left) and ends with "here is a variable we think would be good here. Do you like this" (right)

-

@ireneista starting with "gotofail bad" and ending with making the problem significantly worse, apparently without ever reflecting on this

-

@whitequark And this is how research money is lit on fire, I guess. Why else conduct research into ML for a task that has had obvious, deterministic, efficient and well-tested solutions for decades?

@lu_leipzig I actually really don't like formatters like black or rustfmt, which is why I'm collaborating on research into doing it with ML, but there are ways to do it that never produce a different AST

-

the "ideal" (their choice of words) case is 64.2%

@whitequark so excited about astral being acquired...

-

@whitequark That's it, these people lose their computer privileges until they take some undergraduate CS theory classes.

-

@whitequark oh, interesting, what do you not like about them? I could imagine an ML model would do a decent job deciding between n equivalent deterministically produced ASTs that vary e.g. w.r.t. indentation on multi-line definitions/calls.
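To make the "never produce a different AST" idea concrete, here is a minimal sketch of the kind of guard a formatter could run after reformatting. It uses only Python's standard `ast` module; the helper name `same_ast` and the sample snippets are made up for illustration and are not taken from the paper or from any existing formatter.

```python
import ast


def same_ast(original: str, reformatted: str) -> bool:
    """Return True if both sources parse to the same AST.

    ast.dump() omits line/column attributes by default, so pure
    whitespace and layout changes do not affect the comparison.
    """
    return ast.dump(ast.parse(original)) == ast.dump(ast.parse(reformatted))


# Hypothetical inputs: one layout-only change, one behavior change.
before = "for j in range(10):\n    total = total+j\n"
layout_only = "for j in range(10):\n    total = total + j\n"
changed = "for j in range(10):\n    total = total - j\n"

print(same_ast(before, layout_only))  # True
print(same_ast(before, changed))      # False
```

A tool that rejects any output failing this check cannot change what the program does, whatever chose the layout. Note that comments are not part of the Python AST, so this check alone would not notice a dropped comment.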

-

@theorangetheme both authors are currently full professors i believe

-

@whitequark because "the thing we're promoting is incredibly dangerous, and not in fun ways" is not really the thing anyone wants to be cited for

-

@lu_leipzig I view code as art and so any tool that puts determinism strictly above aesthetics is a net negative to my craft

-

Even if the AST is the same, might a sufficiently bad format mislead humans reading the resulting code?

I'm reminded of the Obfuscated C Contest…

-

@whitequark@social.treehouse.systems I didn't know the ideal number for code to behave differently was over 30% of the time!

Then again, I like and don't mind working with legacy code and systems so I personally tend to wonder "why even redo a working thing"

-

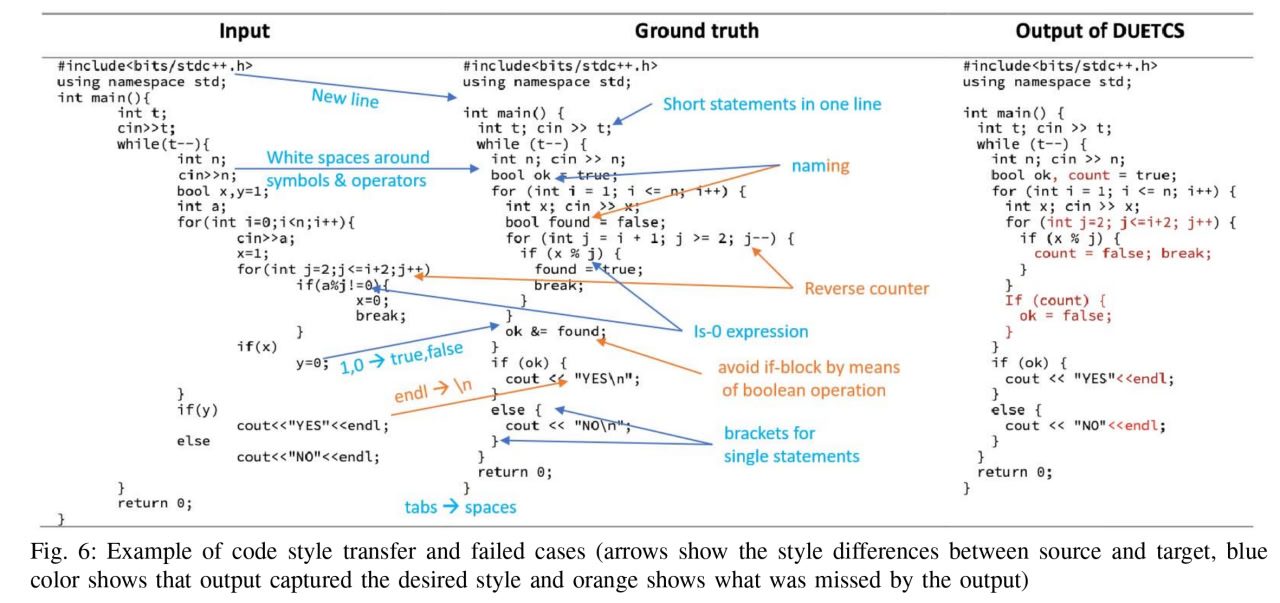

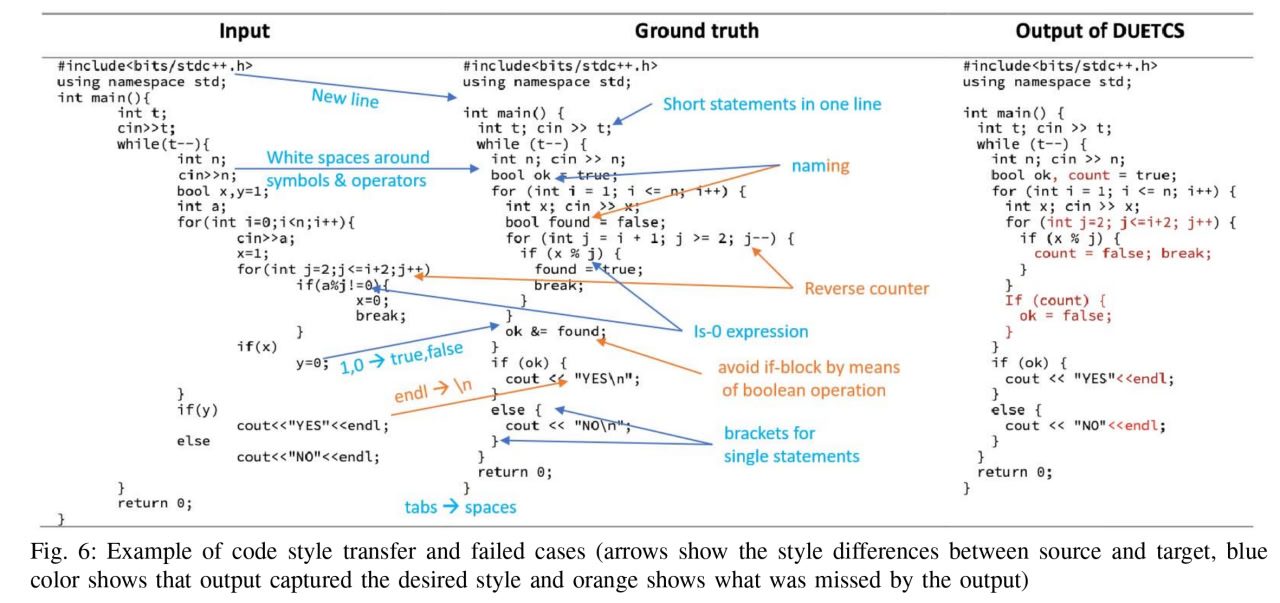

@whitequark @porglezomp I'm spitting out my drink at j++ → j--. Holy shit.

@xgranade

I think the right is the output from running the model on the right code (center being the "desired output"). So it's not changing the semantics of the loop, just not changing the loop order to match their desired outcome.

Given that loop order can have behavioral impact (and I would never trust an LLM to be able to tell if it did), that seems like the correct behavior to me though

@whitequark @porglezomp

-

@whitequark The Code Randomizer (TM)

-

@whitequark well two out of three ain’t bad. No, wait…

-

@whitequark @lu_leipzig Ideally, I think a formatter that learns how I formatted the rest of the buffer would be the goal.

Most of the time I like the deterministic formatting. However, I find deterministic formatting fails me around function headers and long function calls / long boolean statements.

I want it to do the deterministic formatting once, and then if I undo immediately, don't do it again to that area... and preferably learn what I was trying to do.

-

@ireneista TIL that my philosophy is the same as the Extreme Programming philosophy

@krans @whitequark it was a nice name for a movement, it did a good job of conveying that the goal was radical change

at the time, from what we can tell, none of the people saw it as a labor movement specifically, which is too bad... that might have prevented it from being watered down by successive cycles of consulting and renaming

-

@robin @xgranade @porglezomp oh you're right
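On the point that loop order can have behavioral impact: a small sketch of why flipping `j++` to `j--` is not a style choice. The two functions below are invented for illustration (they are not the code from the paper's figure) and differ only in iteration direction.

```python
def shift_ascending(values):
    """Copy each element from its left neighbor, walking left to right."""
    a = list(values)
    for j in range(1, len(a)):           # j++ style
        a[j] = a[j - 1]                  # reads a value already overwritten
    return a


def shift_descending(values):
    """Same loop body, walking right to left."""
    a = list(values)
    for j in range(len(a) - 1, 0, -1):   # j-- style
        a[j] = a[j - 1]                  # reads a value not yet overwritten
    return a


print(shift_ascending([1, 2, 3, 4]))    # [1, 1, 1, 1]
print(shift_descending([1, 2, 3, 4]))   # [1, 1, 2, 3]
```

Because each iteration reads what an earlier iteration may have written, the direction decides the result. A formatter that leaves loop order alone is doing the safe thing.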

-

@theeclecticdyslexic @lu_leipzig my goal is to be able to run a command on a patch that formats the added lines "more or less like the rest of the file"
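A first step toward "format only the added lines" is knowing which lines a patch added. Below is a rough sketch, assuming a single-file patch in unified diff format; the function name `added_line_numbers` and the sample patch are hypothetical, and this is not the tool described in the post.

```python
import re

# Matches a unified-diff hunk header such as "@@ -10,2 +11,3 @@"
# and captures the starting line number in the new file.
HUNK_HEADER = re.compile(r"^@@ -\d+(?:,\d+)? \+(\d+)(?:,\d+)? @@")


def added_line_numbers(patch: str) -> list[int]:
    """Return new-file line numbers of lines added by a single-file patch."""
    added = []
    new_lineno = None  # stays None until the first hunk header is seen
    for line in patch.splitlines():
        match = HUNK_HEADER.match(line)
        if match:
            new_lineno = int(match.group(1))
        elif new_lineno is None:
            continue                      # "---" / "+++" file header lines
        elif line.startswith("+"):
            added.append(new_lineno)
            new_lineno += 1
        elif line.startswith("-") or line.startswith("\\"):
            continue                      # removed line or "\ No newline" note
        else:
            new_lineno += 1               # context line present in the new file
    return added


sample_patch = "\n".join([
    "--- a/example.py",
    "+++ b/example.py",
    "@@ -1,2 +1,3 @@",
    " import os",
    "+import sys",
    " def main():",
    "@@ -10,2 +11,3 @@",
    "     x = 1",
    "+    y = 2",
    "     return x",
])

print(added_line_numbers(sample_patch))  # [2, 12]
```

The resulting line numbers could then be handed to whatever does the formatting, so that untouched lines keep the layout the file already has.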

-

Figure in question seems to be about "model performing in its ideal conditions"

The authors' actual opinion is implied in the Results:

"After inspecting the compilation checking module, we found that DUET CS achieves 55.8% computational accuracy, which is a practical metric for a code generation system. This result shows that more than half of the output code are compilable and implement the same function as the input code. The user can use this check as an optional layer of the pipeline to guarantee grammar correctness.

...

We found that even the non-compilable outputs display around 60% similarity to the ground truth, which means even if DUET CS cannot always produce grammar-correct code, it can still provide valuable information to help user to transfer code style.

...

Notice, that generally the task of generating the exact same code as ground truth is very hard, especially when the code length is rather long (~47 lines)."

-

That last one is a funny statement because it's laughably easy for a human to maintain the execution of a function after a style refactor. You would reprimand a junior if they couldn't do that.