"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/

-

@buckfiftyseven @tante So let me get this right. You are an AI fan, and you don't like people who are not fans of AI (for the reasons in that post), so you are unfollowing people who don't like AI? That's fine, I guess.

@ai6yr @tante That's a very shallow way to represent it. I would say I understand American copyright law, and I understand the contradiction of people who run ad blockers while claiming they support copyright law and the contradiction of people who run ad blockers saying that AI training is stealing.

Public domain exists. Open source exists. Creative Commons exists. And the body of law on fair use goes back quite a long time.

-

@buckfiftyseven @tante Ah, so you are saying if you are using an ad blocker, you are as wrong as the AI companies?

-

@buckfiftyseven @ai6yr @tante I think most people running an ad blocker are doing so to block data brokers, not the ads themselves. Privacy is as much a part of the equation here as the actual ad.

-

@mrbase @ai6yr @tante We definitely ended up in an unsatisfactory situation with respect to ads, brokers, and blockers. There's no denying that.

It's interesting that no matter what your website license says, the courts say that the blockers are legal, filtering available content under some concept of fair use.

So we are back to the question: what exactly are AIs doing that is stealing? We can give public domain data a clean pass. I think that they honor most open source and Creative Commons licenses. 1/2

-

@mrbase @ai6yr @tante So we are on muddy legal ground that will probably have to be battled out in the actual courts, over how a fair use doctrine invented in 1741 for copyrighted works applies going forward.

That's just the input side, of course. On the output side, it seems clear that reproducing an existing work too closely would be a violation as well.

2/2

-

@mrbase

I'd allow an ad that's a static image. Ads as they come now are full untrusted bits of code running on my machine without me inviting them. Blocking them is a security measure.

@buckfiftyseven @ai6yr @tante

-

@buckfiftyseven @ai6yr @tante Actually, sites that don't want you to view them with an ad blocker can easily block you.

-

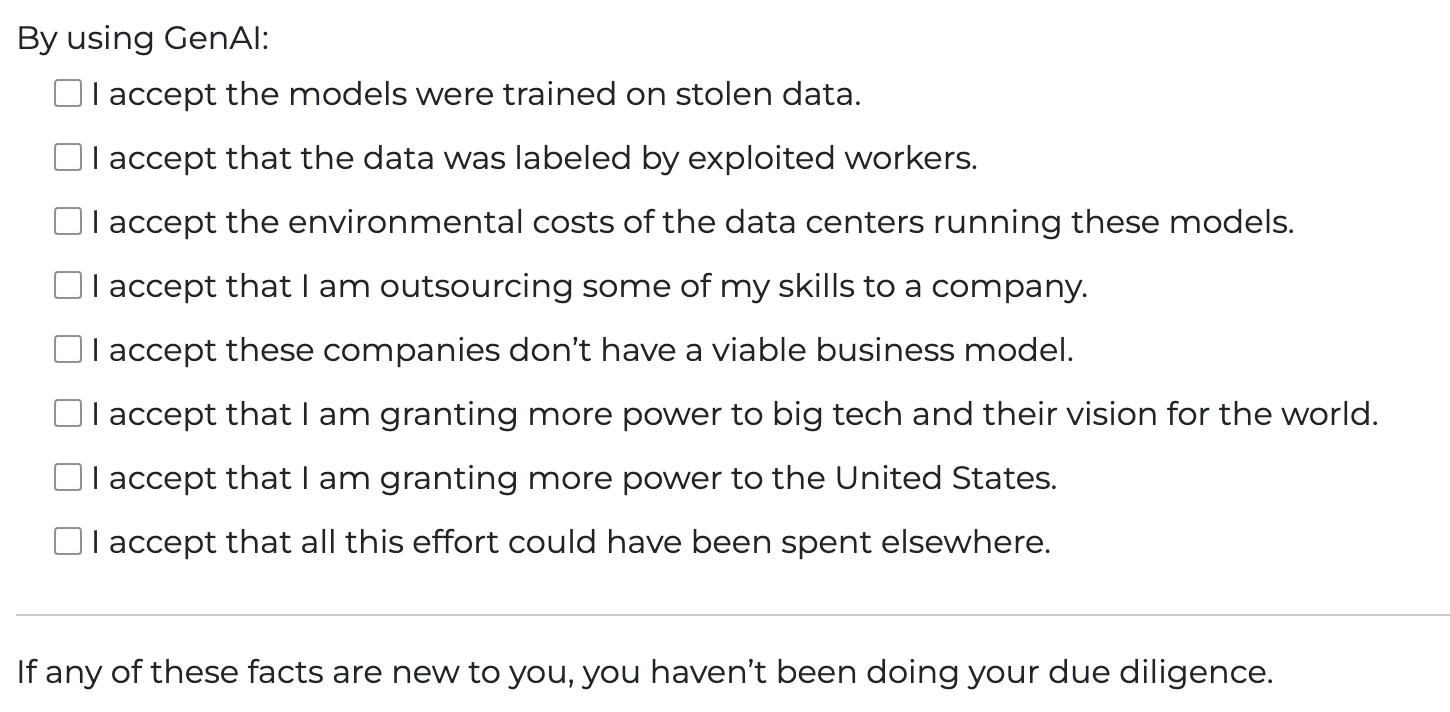

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante I don't use GenAI, I just try to find new and creative ways to break it.

-

None of these are true if you run your own LLMs on your own hardware, using FLOSS models.

But the #MastodonHOA has deemed all AI to be abhorrent as a blanket decision.

And frankly, if you exist in a capitalist society, and you're not an owner, there is 100% chance you are exploited. The capitalist system requires it.

-

@crankylinuxuser FLOSS models (which are only freeware) tick most of those boxes. Trained on stolen data, massaged by people in global majority countries, trained in environmentally harmful data centers, outsourcing skills to the freeware product a company dumped on me, using a tool that is imbued with and trained for how big tech wants to see the world, and effort that could have gone to something meaningful. So yeah, nope.

-

@tante I looove this! thanks!

-

"Trained on stolen data". It's at best a copyright violation. And I view things like Anna's Archive and Libgen as internationally renowned public libraries.

"Massaged by people in global majority countries" - yes, people work in capitalism. And guess what... You're exploited.

"Trained in environmentally harmful data centers". This assumes that training is always needed, and it's not. You can train once, and run X times. Again, you're stretching to make local LLMs look horrible.

And really, the rest of these are poor excuses. I won't use poop smear (Anthropic), or OpenAI, or other SaaS token companies. I run local, and that does not have the problems you claim.

Except for the copyright issue. But again, I don't have that much respect for current US copyright.

-

@tante Bad framing.

There's no such thing as GenAI.

That's some lofty goal they're supposedly going to reach by investing the entire world economy into it.

@crazyeddie @tante GenAI as in Generative AI, not Artificial General Intelligence (AGI).

-

x I accept that using this tool will make me measurably stupider

-

@tante There should be an "I accept that all of my data will be used against me at some point" option.

-

@tante Even Claude would have added a Select All option.

-

"It's at best a copyright violation"

This may be true for published and public data... but that's not the only data that goes into these things. Any data that comes from breaches, users' private cameras, and anything else stored with an expectation of privacy is much worse than a copyright violation.

-

AI in the modern age is not going away. You shouldn't be shamed for using it, and at this point you should expect it.

Even when the bubble goes pop we are still going to have AI in some form. AI is a useful tool for many people, and it's great when you self host it.

Also, most of what AI "steals" isn't really stolen if it's free and public on the internet.

The only thing I can really agree with is the environmental impact. At this point, though, we muck up the environment so much with plastics, overuse of gas, mass deforestation, etc., that I don't know how big of an impact that really has. Ideally we would use green forms of energy for everything, and new tech innovation would reduce the absurd amounts of power required to run these supercomputers. Hopefully the ARM architecture is that light in the dark.