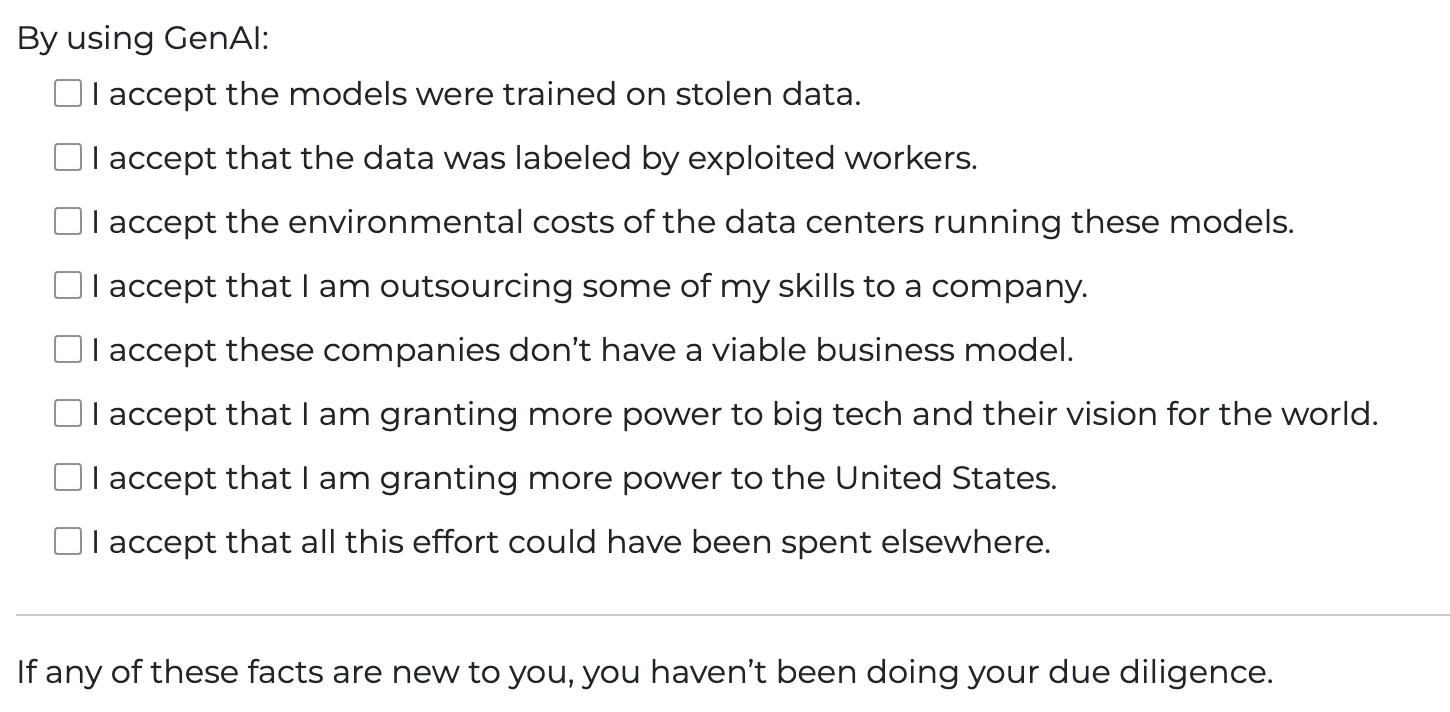

"On the acceptance of GenAI"https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/

-

@buckfiftyseven @ai6yr @tante Actually sites that don't want you to see their sites with ad blockers can easily do so.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante I don't use GenAI, I just try to find new and creative ways to break it.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/None of these are true if you run your own LLMs on your own hardware, using FLOSS models.

But the #MastodonHOA has deemed all AI to be abhorrent as a blanket decision.

And frankly, if you exist in a capitalist society, and you're not an owner, there is 100% chance you are exploited. The capitalist system requires it.

-

None of these are true if you run your own LLMs on your own hardware, using FLOSS models.

But the #MastodonHOA has deemed all AI to be abhorrent as a blanket decision.

And frankly, if you exist in a capitalist society, and you're not an owner, there is 100% chance you are exploited. The capitalist system requires it.

@crankylinuxuser FLOSS Models (which are only freeware) fulfill most of those boxes. Trained on stolen data, massaged by people in global majority countries, trained in environmentally harmful data centers, outsourcing skills to the freeware product a company dumped on me, using a tool that is imbued and trained for how big tech wants to see the world, and effort could have gone to something meaningful. So yeah nope.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante I looove this! thanks!

-

@crankylinuxuser FLOSS Models (which are only freeware) fulfill most of those boxes. Trained on stolen data, massaged by people in global majority countries, trained in environmentally harmful data centers, outsourcing skills to the freeware product a company dumped on me, using a tool that is imbued and trained for how big tech wants to see the world, and effort could have gone to something meaningful. So yeah nope.

"Trained on stolen data". Its at best a copyright violation. And I view things like Anna's Archive and Libgen to be internationally renowned Public Libraries.

"Massaged by people in global majority countries" - yes, people work in capitalism. And guess what... You're exploited.

"Trained in environmentally harmful data centers". This assumes that training is always needed, and its not. You can train once, and run X times. Again, you're stretching to make local LLM look horrible.

And really, the rest of these are poor excuses. I won't use poop smear(anthropic), or OpenAI, or other SaaS token companies. I run local, and does not have those things you claim.

Except for the copyright issue. But again, I dont have that much respect for current US copyright.

-

@tante Bad framing.

There's no such thing as GenAI.

That's some lofty goal they're supposedly going to reach by investing the entire world economy into it.

@crazyeddie @tante GenAI as in Generative AI, not Artificial General Intelligence (AGI).

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/x I accept that using this tool will make me measurably stupider

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante There should be a "I accept that all of my data will be used against me at some point" option.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante even Claude would have added a Select All option

-

"Trained on stolen data". Its at best a copyright violation. And I view things like Anna's Archive and Libgen to be internationally renowned Public Libraries.

"Massaged by people in global majority countries" - yes, people work in capitalism. And guess what... You're exploited.

"Trained in environmentally harmful data centers". This assumes that training is always needed, and its not. You can train once, and run X times. Again, you're stretching to make local LLM look horrible.

And really, the rest of these are poor excuses. I won't use poop smear(anthropic), or OpenAI, or other SaaS token companies. I run local, and does not have those things you claim.

Except for the copyright issue. But again, I dont have that much respect for current US copyright.

Its at best a copyright violation

This may be true for published and public data... but that's not the only data that goes into these things. Any data that comes from breaches, users private cameras, and anything else stored with an expectation of privacy is much worse than a copyright violation.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/AI in the modern age is not going away. You shouldn't be shamed for using it, and at this point you should expect it.

Even when the bubble goes pop we are still going to have AI in some form. AI is a useful tool for many people, and it's great when you self host it.

Also, most things AI "steals" isn't really stealing if it's free and public on the internet.

Only thing I really can agree with is environment impacts. At this point though we muck up the environment so much with plastics, overusage of gas, mass deforestation, etc that I don't know how big of an impact that really has. Ideally we would use green forms of energy for everything, and new tech innovation would reduce the absurd amounts of power required to run these supercomputers. Hopefully the ARM architecture is that light in the dark.

-

@crankylinuxuser FLOSS Models (which are only freeware) fulfill most of those boxes. Trained on stolen data, massaged by people in global majority countries, trained in environmentally harmful data centers, outsourcing skills to the freeware product a company dumped on me, using a tool that is imbued and trained for how big tech wants to see the world, and effort could have gone to something meaningful. So yeah nope.

@tante @crankylinuxuser I guess some people have zero idea of how AI model training works. They have the impression that "if I run this HuggingFace model in my hardware, it's ethical" but kinda think those models got uploaded there out of thin air, without any implications.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante a lot of this applies to basically all participation in capitalism.

-

Its at best a copyright violation

This may be true for published and public data... but that's not the only data that goes into these things. Any data that comes from breaches, users private cameras, and anything else stored with an expectation of privacy is much worse than a copyright violation.

And yes, that is a big issue with the SaaS token vendors. Claude, OpenAI, MS, and the rest do use whatever user data they can get. I am not arguing their horrific behavior.

I'm talking about locally running Qwen, or Deepseek, or other FLOSS models.

That local LLM running on my machine only sees and uses data I provide. And a control-c in the relevant console window kills the LLM.

What folks do not realize is this is #Leibniz's ultimate dream, of being able to do #calculus with words, sentences, and more. He tried to do single word-vectors, but even that had to wait for Word2Vec in 2012.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante i would also add "I accept that the goal is stripping humanity from everything."

-

Its at best a copyright violation

This may be true for published and public data... but that's not the only data that goes into these things. Any data that comes from breaches, users private cameras, and anything else stored with an expectation of privacy is much worse than a copyright violation.

@Epic_Null @crankylinuxuser @tante

Data wants to be free. This argument simply doesn't work for those of us that have always been open data, anti copyright.

-

@tante @crankylinuxuser I guess some people have zero idea of how AI model training works. They have the impression that "if I run this HuggingFace model in my hardware, it's ethical" but kinda think those models got uploaded there out of thin air, without any implications.

@tante @crankylinuxuser Example: I use Whisper for audio transcription (mostly for accessibility issues, it's harder for me to understand audio messages than text messages), so I know using it, even self-hosted, tick most boxes.

I'm sure it was trained on stolen data (as it constantly returns things like "subtitles by example.com"), I'm sure training it hurt the environment, I'm sure the company behind it (OpenAI) does not have a viable business model (but, to be fair, I don't care about that, governments also don't have a viable business model, they don't have to).

But, since I'm using it for accessibility and there is no alternatives, we need to consider the trade offs and promote research that reduces those issues ethically. Saying "bUt I Am RuNNinG iT LoCAllY so ITs eThICAl" is dumb.

-

"On the acceptance of GenAI"

https://smallsheds.garden/blog/2026/on-the-acceptance-of-genai/@tante - of the many ills of generative ai the thing I find most despicable is that it poorer. Yes it makes me poor financially and materially of course, but it makes me poor mentally and spiritually. It robs of your sense of time too, in that you think you don’t have “enough” time to do something so you make the AI do it, robbing yourself a opportunity for to learn something (even if it’s super minute)

-

@tante @crankylinuxuser Example: I use Whisper for audio transcription (mostly for accessibility issues, it's harder for me to understand audio messages than text messages), so I know using it, even self-hosted, tick most boxes.

I'm sure it was trained on stolen data (as it constantly returns things like "subtitles by example.com"), I'm sure training it hurt the environment, I'm sure the company behind it (OpenAI) does not have a viable business model (but, to be fair, I don't care about that, governments also don't have a viable business model, they don't have to).

But, since I'm using it for accessibility and there is no alternatives, we need to consider the trade offs and promote research that reduces those issues ethically. Saying "bUt I Am RuNNinG iT LoCAllY so ITs eThICAl" is dumb.

@tante @crankylinuxuser So, my objetive here: sure, current AI is truly unethical and sadly we have lots of people that want to be blind about its issues, but, not all from it is bad.

I can't just say to a illiterate person "can you write for me instead of speaking?" because they just can't do that. I talk with lots of illiterate people, I'm in the construction business, lots of workers only know numbers and how to write their own name. So, Whisper, despite not being ethical, is what I use.

But, are there ethical alternatives? At the moment, I didn't find anything as reliable as Whisper, but there's the Common Voice dataset, which is free, which could be used to solve the issue of being trained on stolen data (but not the environmental issues).