Did quite some maintenance on burningboard.net Mastodon instance today ..

-

Did quite some maintenance on burningboard.net Mastodon instance today ..

Updated servers and jails to FreeBSD 15.0-RELEASE-p5 :freebsd:

1. proxy_cache_path (S3 media cache) was already on a ZFS dataset with autosnapshot disabled .. but proxy_temp_path and client_body_temp_path (both nginx temp folders) weren't, leading to extremely bloated daily ZFS snapshots for the Nginx jail. Changed those and cleaned old snapshots -> 31GB reclaimed space

2. Cleaned up database snapshots and did a pg_repack on prod to reclaim deleted database pages -> 3GB reclaimed space

3. Fixed issue with backend path over gif tunnel to Elasticsearch (6in6). Turned out to be an MSS issue (clamp-mss to 1140 on the tunnel iface solved it!)

4. Fixed IPv6 routing issue for Prometheus Monitoring after reboot (rc.d static_routes != ipv6_static_routes)

All in all, our instance is in very good shape again

#mastodon #mastoadmin #socialmedia #fediverse #freebsd #unix @tux
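
A rough sketch of the first fix, for readers who want to replicate it. Everything specific here is an assumption and not the instance's actual layout: the parent dataset `zroot/jails/nginx`, the mountpoints, and the `com.sun:auto-snapshot` user property, which zfstools-style snapshot scripts honor (other snapshot tools use a different property or a config file). The script only prints the commands, so they can be reviewed before anything is run by hand.

```shell
#!/bin/sh
# Dry-run sketch: print the commands that would put the nginx temp dirs on
# their own ZFS datasets with automatic snapshots switched off.
# ASSUMPTIONS (hypothetical, adjust to your setup): dataset names, mountpoints,
# and the com.sun:auto-snapshot property used by zfstools-style tools.

PARENT="zroot/jails/nginx"          # hypothetical parent dataset
SNAP_PROP="com.sun:auto-snapshot"   # user property read by zfstools-style scripts

plan_dataset() {
    # $1 = dataset short name, $2 = mountpoint for the new dataset
    echo "zfs create -o ${SNAP_PROP}=false -o mountpoint=$2 ${PARENT}/$1"
}

plan_dataset proxy_temp /var/tmp/nginx/proxy_temp
plan_dataset client_body_temp /var/tmp/nginx/client_body_temp
```

nginx would then need `proxy_temp_path` and `client_body_temp_path` pointed at those mountpoints, and old snapshots still have to be destroyed before the space is actually freed.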

-

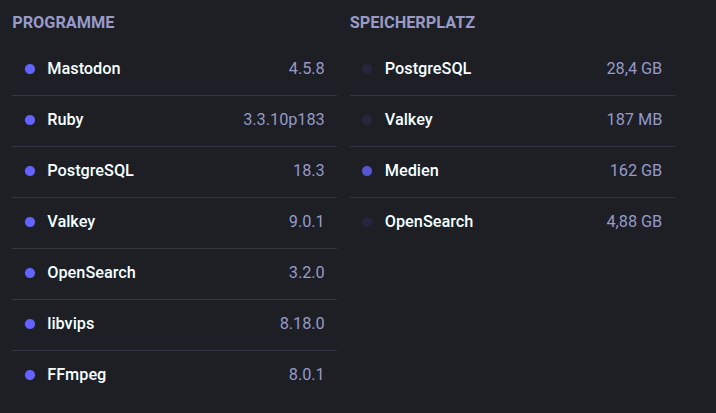

@tux Running a pretty reasonable stack now ...

FreeBSD 15.0-RELEASE-p5

Mastodon 4.5.8

PostgreSQL 18.3

Valkey 9.0.1

Opensearch (fork of Elastic) 3.2.0

28,4 GB PostgreSQL Database size

162 GB Media Files

4,88 GB Search Index size

-

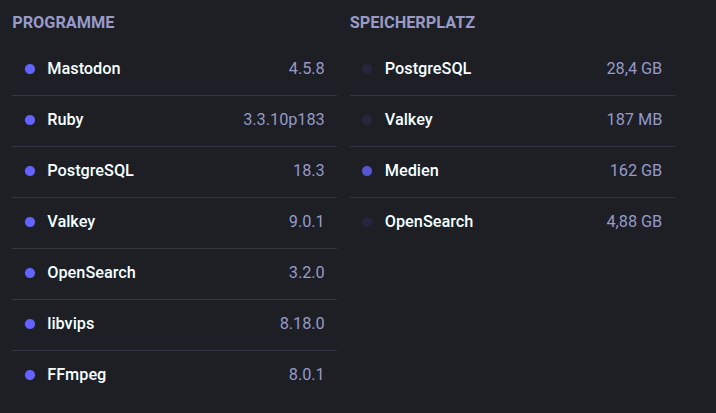

@tux Running a pretty reasonable stack now ...

FreeBSD 15.0-RELEASE-p5

Mastodon 4.5.8

PostgreSQL 18.3

Valkey 9.0.1

Opensearch (fork of Elastic) 3.2.028,4 GB PostgreSQL Database size

162 GB Media Files

4,88 GB Search Index size

@Larvitz Curious question: How large is your cache (`public/system/cache`)?

-

@DeltaLima We have different layers of caches ..

In Mastodon, we've set media cache to 5d and external content to 730d with a nightly cleanup job.

In Nginx (where we proxy the S3-data and cache it), we have this:

`proxy_cache_path /tmp/nginx-cache-instance-media levels=1:2 keys_zone=s3_cache:10m inactive=7d max_size=10g;`

It caches up to 10 GB of media data and evicts the least recently used entries once it reaches `max_size=10g` (or drops entries not accessed for 7d, which never really happens)...
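
To answer the "how large is it" question on your own instance, something like the following would do. It is only a sketch: the helper name `dir_size_kb` is made up for illustration, and the path is the one from the `proxy_cache_path` line above. `du -sk` reports allocated disk space, so on a compressed filesystem the number can be smaller than the logical size of the files.

```shell
#!/bin/sh
# dir_size_kb: hypothetical helper, prints the disk usage of a directory in KiB.
dir_size_kb() {
    du -sk "$1" | awk '{print $1}'
}

# Path taken from the proxy_cache_path directive quoted in the thread.
CACHE_DIR="/tmp/nginx-cache-instance-media"

if [ -d "$CACHE_DIR" ]; then
    echo "nginx media cache: $(dir_size_kb "$CACHE_DIR") KiB"
else
    echo "no cache directory at $CACHE_DIR"
fi
```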

-

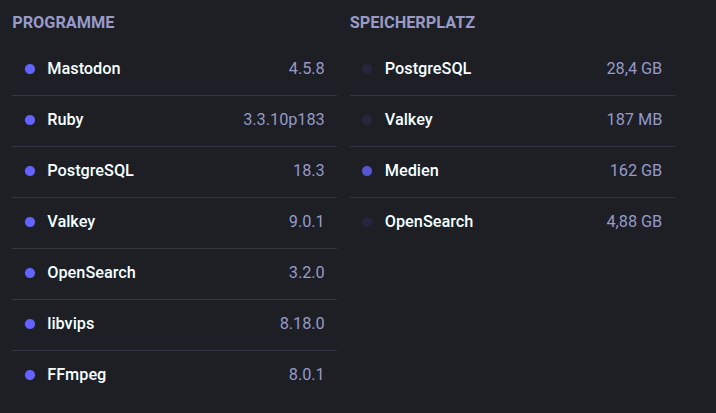

@tux Running a pretty reasonable stack now ...

FreeBSD 15.0-RELEASE-p5

Mastodon 4.5.8

PostgreSQL 18.3

Valkey 9.0.1

Opensearch (fork of Elastic) 3.2.028,4 GB PostgreSQL Database size

162 GB Media Files

4,88 GB Search Index size

@Larvitz Nice work!

The `proxy_temp_path` / `client_body_temp_path` on the wrong ZFS dataset is a classic pitfall – those temp files are short-lived, but when snapshots keep dragging along the deleted blocks, it adds up brutally. 31GB reclaimed just by separating datasets speaks volumes.

Also the MSS clamping to 1140 on the GIF tunnel – was it fragmentation that got silently dropped by Elasticsearch/Opensearch? Or did TCP retransmits just make the whole thing unbearably slow? Would be curious how that manifested.
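
For context on the number: TCP MSS is the packet size minus the IP and TCP headers, which for IPv6 without extension headers or TCP options is 40 + 20 = 60 bytes. A small sketch of the arithmetic follows. The function names are made up, and the 1280-byte figure is simply the IPv6 minimum link MTU, not a value confirmed in the thread.

```shell
#!/bin/sh
# MSS arithmetic for TCP over IPv6 (no extension headers, no TCP options).
IPV6_HDR=40   # fixed IPv6 header size in bytes
TCP_HDR=20    # TCP header size without options

mss_for_mtu() { echo $(( $1 - IPV6_HDR - TCP_HDR )); }
mtu_for_mss() { echo $(( $1 + IPV6_HDR + TCP_HDR )); }

echo "MSS at a 1280-byte MTU:            $(mss_for_mtu 1280)"   # 1220
echo "Inner packet size at MSS 1140:     $(mtu_for_mss 1140)"   # 1200
# 6in6 adds one more 40-byte IPv6 header on the outside.
echo "Outer packet size after gif encap: $(( $(mtu_for_mss 1140) + IPV6_HDR ))"   # 1240
```

So a clamp of 1140 keeps full-size segments at 1200 bytes before encapsulation and 1240 bytes on the wire. Why that much headroom was needed on this particular path is not stated in the thread.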

And `ipv6_static_routes` vs. `static_routes` in `rc.conf` – classic FreeBSD gotcha that makes you curse after every reboot
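
For anyone who has not hit this yet: in `rc.conf`, IPv4 and IPv6 static routes live in separate variables, as described in rc.conf(5). A minimal sketch with made-up route names and documentation-range addresses, none of which come from the thread:

```shell
# /etc/rc.conf fragment (sh syntax). Names and addresses are placeholders.

# IPv4: each name in static_routes needs a matching route_<name>.
static_routes="backend"
route_backend="-net 192.0.2.0/24 198.51.100.1"

# IPv6: separate list, and the per-route variable is ipv6_route_<name>.
ipv6_static_routes="monitoring"
ipv6_route_monitoring="2001:db8:10::/64 2001:db8::1"
```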

Solid maintenance session!

-

@Larvitz thx!

-

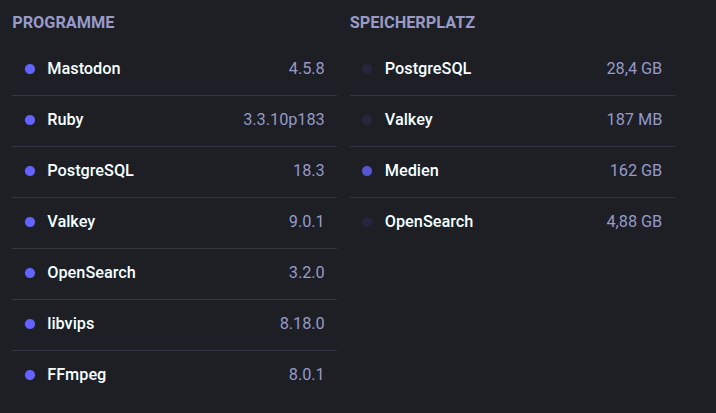

@tux Running a pretty reasonable stack now ...

FreeBSD 15.0-RELEASE-p5

Mastodon 4.5.8

PostgreSQL 18.3

Valkey 9.0.1

Opensearch (fork of Elastic) 3.2.028,4 GB PostgreSQL Database size

162 GB Media Files

4,88 GB Search Index size

-