I was only made aware of this (frankly awesome) case of LLM poisoning today: https://www.nature.com/articles/d41586-026-01100-y.

-

@ElenLeFoll@fediscience.org the "researcher"'s name, Lazljiv Izgubljenovic, would read to a speaker of most Slavic languages as something like "Lying Loser"

@kunev Brilliant! I have to say I really do think that the real author did an excellent job all round!

-

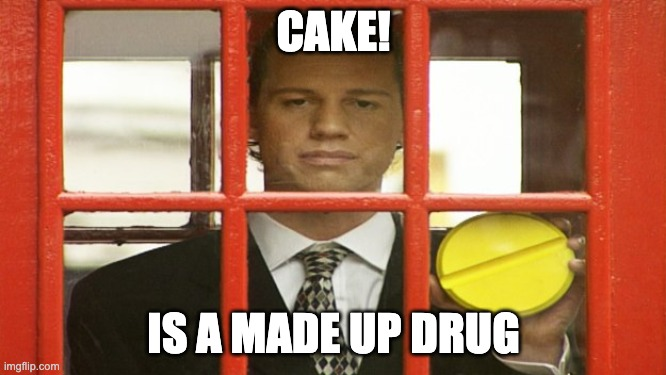

I was only made aware of this (frankly awesome) case of LLM poisoning today: https://www.nature.com/articles/d41586-026-01100-y. A researcher made up a disease and published two evidently fake preprints about it (including sentences such as “this entire paper is made up” and “Fifty made-up individuals aged between 20 and 50 years were recruited for the exposure group”), which were almost immediately picked up by LLMs and documented in their output. Worse, actual – supposedly serious – medical papers also started citing the preprints, demonstrating that academics relying on LLMs to do their work is a genuine problem! Not that I had my doubts but, if anyone did, this seems like the perfect demonstration of the problem. Article immediately added to the syllabus of the class I am co-teaching with Iris Ferrazzo on LLMs for Romance Studies/Humanities!

#LLM #GenAI #academia #research #ResearchIntegrity #humanities

@ElenLeFoll as a lifelong sufferer of Bixonimania (undiagnosed by any medical professional) I find this article offensive. ChatGPT told me that I asked a really excellent question when I asked if I had Bixonimania, so there.

-

@ElenLeFoll I love this quote about the original article. “Acknowledgments also thanked “Professor Sideshow Bob” and a professor from the Starfleet Academy for access to a lab aboard the USS Enterprise”

#AI #AiSlop

-

@ElenLeFoll Good. Poison as much as possible, let it eat its own crap and dance while it burns to the ground.

-

@ElenLeFoll Yes, saw this at the time - but it's good to remind everyone of what comes out of artificial idiocy LLMs!

-

@ElenLeFoll incredible post

-

Wow. It couldn't be more obvious, but this dreck is infiltrating *everything.*

-

a Google spokesperson said such results reflected the performance of an earlier model. They added, “We have always been transparent about the limitations of generative AI and provide in-app prompts to encourage users to double-check information. For sensitive matters such as medical advice, Gemini recommends users consult with qualified professionals.”

that would mostly work well if they released 'AI overview' as an opt-in feature instead of forcing it on users who have some trust in Google built over the past decades and don't expect it to suddenly start making stuff up lol

-

@ElenLeFoll that iMac is approaching his 30s. he's earned the right to be a hypochondriac sometimes.

-

Some do it DELIBERATELY, with a lot of other subjects too. AI is amoral, especially if the material it is fed is amoral, and we know a lot of those tech guys and politicians ARE amoral.

-

@mkljczk @ElenLeFoll

Good point.

Google, if Gemini is as useful as you hope it will be, it will inevitably just come to be known as "Google", and AI answers to direct questions will just be a feature of Google search.

Google, if Gemini is unreliable and cannot be made reliable, why are you letting it tarnish your brand?

-

@ElenLeFoll Yeah that's totally getting ingested into my Zotero along with all the other corroborating evidence for "The only way is down". But to falsify I am looking at what working code generation studies there actually are.

Jonathan Swift described The Machine (for writing) in 1726: https://en.wikipedia.org/wiki/The_Engine

-

@bencourtice @FediThing @ElenLeFoll

We have a whole module on how to cite and also how AI works (ethics, errors, foundational knowledge, all that) for our freshman public health students. We STILL had over 10% academic affairs referrals for using hidden prompts and hallucinated citations and that's with a requirement that they give us annotated PDF copies of every cited paper.

-

boost with CN: "AI"

-

The obvious end goal of AI is centralized control of information that can be used to bend public opinion, win elections for pedophiles and criminals, and set trends.

-

@ElenLeFoll LLMs, like seagulls, swallow anything that's thrown at them. It's known as 'gullibility' for a good reason.

-

@ElenLeFoll I'm sorry, they put up fake preprints and then said other researchers citing these preprints are the problem? Standing up a fake preprint is absurdly unethical

-

This is a rerun of the Sokal Hoax.

Like some here, some folks thought the Sokal hoax was ethically problematic, but the postmodernists needed a wake-up call, as do the LLM fans.

Really: the LLM idea is the stupidest* thing to come out of Computer Science ever. We need to be embarrassed.

*: Unnecessary explanation: the idea that random text generation has something to do with intelligence is really really stupid.