If you self-host services on the internet, you may have seen waves of crawlers hammering your websites without mercy.

-

If you self-host services on the internet, you may have seen waves of crawlers hammering your websites without mercy.

To annoy them and protect my services from DDoS, I decided to set up an iocaine instance, along with NSoE... And it worked... Too well.

Recently, they started flooding my VPS so much it started choking.

If you followed me here on Fedi, you saw my journey to find a way to relieve my server.

This is a rant about LLM crawlers, and some observations & conclusions, along with some techniques to help you protect your own services.

Read it here: https://xaselgio.net/posts/26.poisoning-knowledge/

Edit: A follow-up is now available here: https://xaselgio.net/posts/26-1.addendum-poisoning-knowledge

@Soblow "I spent the last few years building up a tolerance to iocaine powder."

-

@Soblow Hah... I think we're getting iocaine or something when trying to read the article on our phone (iOS Safari, on iOS 15 or something like that). Haven't tried our desktop. Pretty meta, though.

-

@Soblow Hmmm... I'm thinking of serving a knowledge.tld with just static garbage now...

-

Well, first documented case (on my end) of a false positive, yay...

iOS 15 + Safari, for some reason...

-

@Soblow Interesting, I've noticed a very similar pattern on a client's web server, which they use for hosting internal projects (which have to be available publicly) – 5000 requests per second every few hours on specific subdomains, from residential IPs, with each IP doing about 20 requests, changing the user-agent once. Nothing knowledge-like though, most of the sites run Wordpress.

-

@Soblow just tried to add your article to my self hosted Readeck (a read it later service) and I think the request got caught by an anti scraper.

Guess I'll have to read the article soon to understand what happened!

-

@Sargeros Yes, that's expected, I have the same issue with some other tools.

Maybe it has a User-Agent I can whitelist though?

-

@Soblow It seems like it announces itself as a browser https://codeberg.org/readeck/readeck/src/commit/5a979acdb2afcfe8f87d5385db778e3643322b04/internal/httpclient/client.go#L33

But don't worry, I used the browser extension to save your article and it worked fine

-

@Sargeros

pretty sure that UA got caught in the maze indeed...

good to know!

-

@velvet @Soblow @NafiTheBear Yup they are the bane of my life. I have an ... aggressive ... filter list, and have absolutely no qualms in just blocking LLMs entirely

-

I should do an addendum but right now, my main website is getting hammered at rates similar to what my knowledge website used to be hit at.

Conversely, the "knowledge" website is back to the "normal" background noise of <100 req/min.

The banlist now contains so many IPs, and yet they still reach 6k req/min nearly constantly.

At this point, I'm thinking about tweaking my banip tool to compute optimal subnets instead of always crafting /24 subnets.

-

@Soblow feels like once you have blocked the big data centers it just becomes whack-a-mole, since tools like these exist

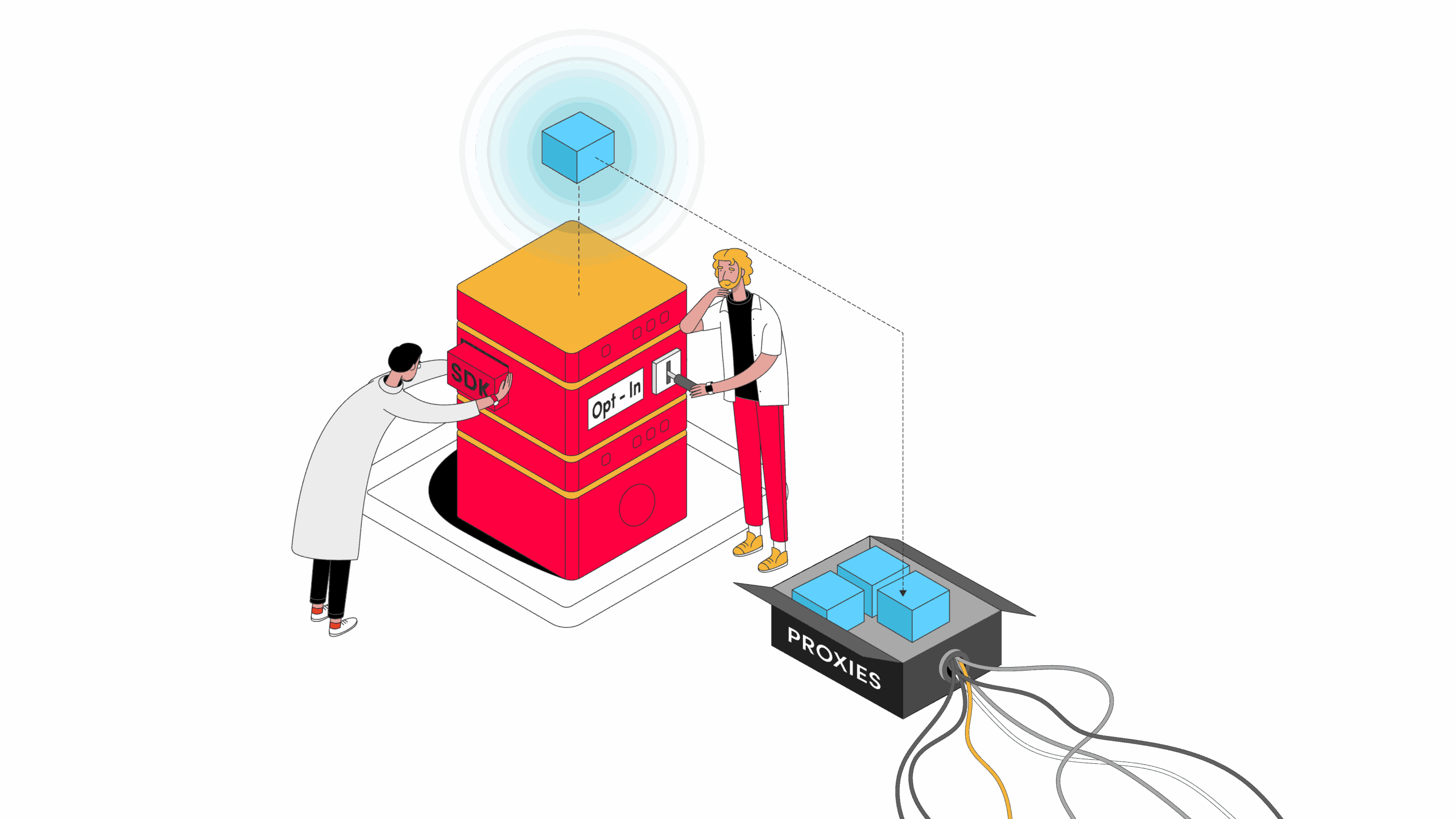

Internet Sharing SDKs: a Closer Look at the Emerging App Monetization Method - Proxyway

Internet sharing SDKs are reshaping app monetization. But what do you need to know before adopting them?

Proxyway (proxyway.com)

-

@Soblow

curious, is the subnet thing using similarities in the IPs to ban specific ranges?

computing optimal things sounds like a math problem and if so, I'm game to try it out

-

That's frightening.

Like, legitimately, I'm scared of what that means.

I'll try to rate-limit at 10 req/min per IP.
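For readers who want to try the same idea, here is a minimal sketch of a per-IP limit of 10 requests per minute, written as a sliding window in Python. It is only an illustration: the `PerIpRateLimiter` class and its parameters are hypothetical names, not part of any tool mentioned in this thread, and in practice this kind of limit is usually enforced in the reverse proxy or firewall rather than in application code.

```python
import time
from collections import defaultdict, deque


class PerIpRateLimiter:
    """Sliding window: at most `limit` requests per `window` seconds per IP."""

    def __init__(self, limit=10, window=60.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        self.hits = defaultdict(deque)  # ip -> timestamps of recently accepted requests

    def allow(self, ip):
        now = self.clock()
        recent = self.hits[ip]
        # Forget accepted requests that have left the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.limit:
            return False  # over the limit: reject (or tarpit) this request
        recent.append(now)
        return True


limiter = PerIpRateLimiter(limit=10, window=60.0)
print(limiter.allow("203.0.113.7"))  # first request from this IP is accepted
```

Note that the `hits` table grows with the number of distinct IPs and is never pruned in this sketch. Given the pattern reported earlier in the thread (many residential IPs doing only about 20 requests each), a per-IP limit on its own may slow a flood down rather than stop it.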

-

@lilacperegrine Are you familiar with the concept of a quadtree?

-

@Soblow

a data structure where every node has 4 children?

I'm sorta familiar with it, but I haven't used it much

-

@Soblow I've been following this with interest

-

@lilacperegrine Well, I have an intuition that the problem I'm looking to solve is akin to the construction of a quadtree:

I have a list of IPv4 /32 addresses. I want to generalize this list using the properties of subnets (a /n subnet contains two /(n+1) subnets).

I don't have an explanation though, and my math skills are rusty...

-

@Soblow@eldritch.cafe @lilacperegrine@clockwork.monster Seems like a cool thing to do… if only I could use university's time to do that.
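One possible way to approach the subnet generalization discussed above, sketched in Python with the standard `ipaddress` module. This is not the banip tool from the thread: `generalize`, `min_prefix` and `density` are hypothetical names chosen for illustration. Because a /n subnet splits into two /(n+1) subnets, the structure is a binary tree rather than a quadtree; the sketch walks it from the top and bans a whole subnet as soon as a large enough fraction of its addresses is already on the list.

```python
import ipaddress


def generalize(ips, min_prefix=16, density=0.5):
    """Cover banned IPv4 addresses with the largest subnets that are dense enough.

    min_prefix: never emit a subnet wider than this prefix length (assumption).
    density:    fraction of a subnet that must already be banned to ban it all.
    """
    addrs = sorted({int(ipaddress.IPv4Address(ip)) for ip in ips})

    def walk(net, members):
        # `members` are the banned addresses that fall inside `net`.
        if not members:
            return []
        dense = len(members) / net.num_addresses >= density
        if net.prefixlen >= min_prefix and dense:
            return [net]
        if net.prefixlen == 32:
            return [net]  # a single banned address always stays banned
        low, high = net.subnets(prefixlen_diff=1)  # a /n splits into two /(n+1)
        split = int(high.network_address)
        return (walk(low, [a for a in members if a < split])
                + walk(high, [a for a in members if a >= split]))

    return walk(ipaddress.IPv4Network("0.0.0.0/0"), addrs)


banned = ["192.0.2.0", "192.0.2.1", "192.0.2.2", "198.51.100.7"]
for net in generalize(banned, min_prefix=24, density=0.75):
    print(net)
```

With these inputs, three of the four addresses in 192.0.2.0/30 are banned, so the whole /30 is emitted, while the isolated address stays a /32. Lowering `density` trades more collateral blocking for a shorter banlist. If no over-blocking is acceptable at all, `ipaddress.collapse_addresses` merges adjacent networks without adding any address that was not already listed.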