There's one very important thing I would like everyone to try to remember this week, and it is that AI companies are full of shit

-

@jenniferplusplus I seriously doubt this is smoke and mirrors, recent models have improved significantly for cybersec and the industry is noticing:

daniel:// stenberg:// (@bagder@mastodon.social)

The challenge with AI in open source security has transitioned from an AI slop tsunami into more of a ... plain security report tsunami. Less slop but lots of reports. Many of them really good. I'm spending hours per day on this now. It's intense.

Mastodon (mastodon.social)

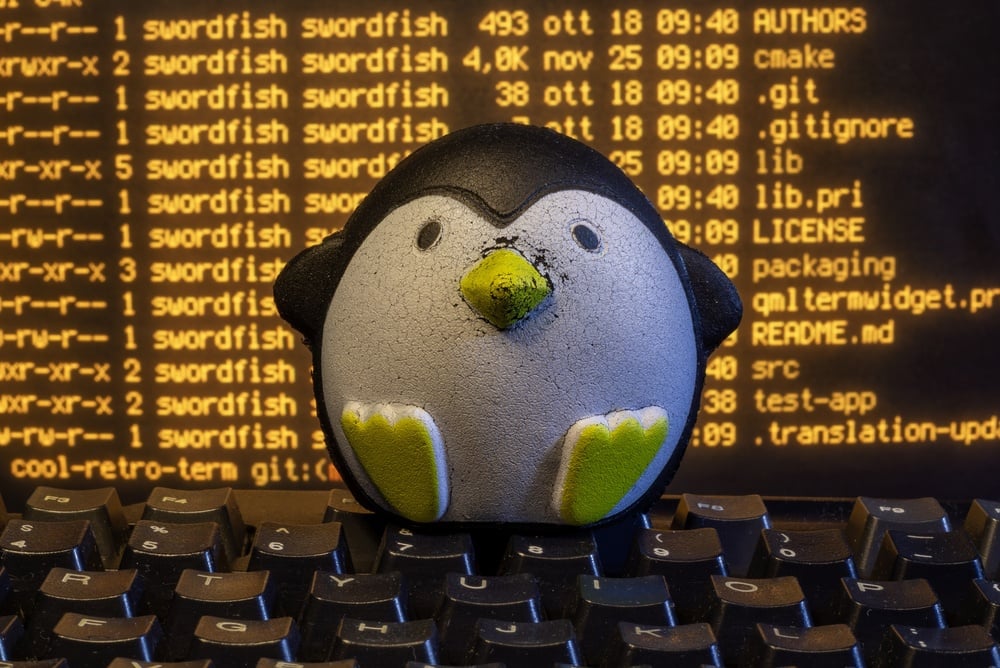

Linux kernel czar says AI bug reports aren't slop anymore

Interview: Greg Kroah-Hartman can't explain the inflection point, but it's not slowing down or going away

(www.theregister.com)

The industry consensus seems to be that there's going to be a torrent of vulnerabilities being found in all sorts of software, and they're not prepared to handle the blast radius. It's not surprising that Anthropic wants to give a select few a head start to tackle them. It would be nice if their token fund was open to all OSS projects to apply.

I'm also pressing "X doubt" that you spend months coordinating between AWS, Apple, Microsoft, Google, and the Linux Foundation to organise this just because your tool's code leaked online.

Keep chugging that flavor aid.

-

There's one very important thing I would like everyone to try to remember this week, and it is that AI companies are full of shit

Only rarely do their claims actually bear scrutiny, and those are only the mildest of claims they make.

So, Anthropic is claiming that their new, secret, unreleased model is hypercompetent at finding computer security vulnerabilities and they're *too scared* to release it into the wild.

Except all the AI companies have been making the same hypercompetence claims about literally every avenue of knowledge work for 3+ years, and it's literally never true. So please keep in mind the highly likely possibility that this is mostly or entirely bullshit marketing meant to distract you from the absolute garbage fire that is the code base of the poster child application for "agentically" developed software.

You may now resume doom scrolling. Thank you

@jenniferplusplus but what about when their models created a full C compiler… oh, right.

But what about when they said software development would be dead in 6-12 months… oh, again.

You know, it’s almost like they have an overactive marketing team.

-

A couple people seem very invested in me being wrong about this assessment. All I can say is that this would be the first time I have misclassified an AI claim as bullshit

@jenniferplusplus "But if you're wrong this time and we don't panic and trust the slop salesman that he has a super duper vuln finder, we're all gonna get pwned!!!!!111111"

-

@jenniferplusplus It's also important that to whatever extent this product actually works (I'm as skeptical as you are), it fundamentally favors the attacker. The product has way too many false positives to run in CI, so the defender can only use it as part of an occasional audit. The attacker doesn't care about CI or development friction, and wins by finding one exploit in an entire stack, even if they have to wade through many false positives to find it.
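
To put rough numbers on that asymmetry, here is a minimal sketch. It assumes each finding is independently a true positive with probability `precision`, which is a simplification, and the function names and any values you plug in are hypothetical, not measurements of any real tool.

```python
def p_at_least_one_real(precision: float, n_findings: int) -> float:
    """Chance that at least one of n findings is a real vulnerability.

    Assumes findings are independent, each real with probability
    `precision` (an illustrative simplification).
    """
    if not 0.0 < precision <= 1.0:
        raise ValueError("precision must be in (0, 1]")
    if n_findings < 0:
        raise ValueError("n_findings must be non-negative")
    return 1.0 - (1.0 - precision) ** n_findings


def false_positives_per_real(precision: float) -> float:
    """Expected number of false positives triaged per real finding."""
    if not 0.0 < precision <= 1.0:
        raise ValueError("precision must be in (0, 1]")
    return (1.0 - precision) / precision


if __name__ == "__main__":
    # Hypothetical precision values, chosen only to show the trend.
    for precision in (0.05, 0.25, 0.5):
        print(
            f"precision={precision:.2f} "
            f"P(>=1 real in 100 findings)={p_at_least_one_real(precision, 100):.3f} "
            f"false positives per real finding={false_positives_per_real(precision):.1f}"
        )
```

The attacker only needs the first quantity to be high across a whole stack. The defender pays the second quantity on every run, which is why a low-precision tool is hard to gate a CI pipeline on.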

-

@jenniferplusplus my favorite is the recent demand to drop the PDF file format, because the genius LLMs cannot parse it

-

@budududuroiu @jenniferplusplus some people have published numbers or noticed "a significant increase in quality" but none of these claims show any scientific rigor. My guess is that the one huge trick Anthropic pulled was merely a bigger context window. Sure, that tends to give more context-related (not "true" or "accurate") results (duh!) but it's hardly revolutionary. LLMs are still statistical models doing fancy autocomplete & they know nothing about the world. I won't hold my breath

@dngrs @budududuroiu @jenniferplusplus

People keep getting tricked by framing.

LLM companies frame what the models are doing as something other than what it is (autocomplete), and people whose competence is not in epistemic evaluation then look at the results based on the framing, rather than "this is autocomplete, it has to answer something, so it makes something up". And then other people take those soundbites and run with them.

"Did you hear? Mr. Big Name said this stuff really works!"

-

@jenniferplusplus I would like to remind everyone that Misanthropic and that little bitch Claude are among the worst actors out there, because it's a cult. An amoral, do-anything-to-win cult that actually believes they are building "sentient life". Which is totally insane. https://www.404media.co/anthropic-exec-forces-ai-chatbot-on-gay-discord-community-members-flee/

@codinghorror @jenniferplusplus it looks like they uh ... the entire koolaid

-

@dngrs Well, you're partly correct, partly wrong. Yes, pretrained transformers are, like all generative models, definitionally modelling a joint probability distribution, and autoregressively generating from that joint probability distribution.

Those are the models you're referring to as autocomplete tools. (Early masked-language-model transformers like BERT worked a bit differently: you had to put a `[MASK]` in the input to get them to fill in the "most probable token".)
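
For reference, a sketch of the two objectives in standard notation. The symbols $x_t$, $T$, $M$ and $\theta$ are labels chosen for illustration, not anything from the thread. An autoregressive model factorizes the joint distribution over a token sequence with the chain rule and generates one token at a time:

$$
p_\theta(x_1, \dots, x_T) = \prod_{t=1}^{T} p_\theta(x_t \mid x_1, \dots, x_{t-1})
$$

A masked language model such as BERT is, roughly, trained to predict the tokens at a set of masked positions $M$ from the unmasked tokens on both sides, which is why it fills in a `[MASK]` rather than continuing a prefix:

$$
\mathcal{L}_{\text{MLM}}(\theta) = -\sum_{i \in M} \log p_\theta\left(x_i \mid x_{\setminus M}\right)
$$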

Regardless, it doesn't matter what Anthropic did: if it allows for a massive reduction in the cost of finding zero days, it's a problem. It doesn't have to be revolutionary, it doesn't have to be superintelligence, AGI, or whatever woo-hoo flashy marketing terms. If a reduction in the cost of computing protein folding happens, e.g. the OpenFold implementation of AlphaFold, that wouldn't be revolutionary, but would still be dangerous, since you now potentially have lone actors being able to make prions at home (I'm using this as an absurd, but probable case).

-

@jenniferplusplus The thing that interests me the most about this is what specifically happened with Greg KH in that one article where he claimed it found 40 real vulnerabilities in a report containing 60?

I am willing to bet it isn't as simple as is presented. If it is, then I want proof that they aren't targeting special attention at certain users. I think you could do a lot, auditing the kernel and waiting for Greg to ask. Especially if some devs are making contributions aided by claude...

-

@jenniferplusplus OpenAI made similar claims about their model being so good it was dangerous and they weren't going to release it. In 2019. https://techcrunch.com/2019/02/17/openai-text-generator-dangerous/

-

@jenniferplusplus "Our new model is too dangerous for the public, we couldn't possibly release it! Anyway, you can subscribe to it for $150 a month."

@chrisp no, you cannot subscribe to it because it is NOT released yet.

-

@budududuroiu @jenniferplusplus I wouldn't give Anthropic's motives a lot of credit here but LLMs do make bug hunting much easier.

@mirth @budududuroiu @jenniferplusplus Tell that to all the open source repo maintainers who get spammed with fake, nonsensical bug reports generated by AI?

-

@jedimb They can... close submissions? Many projects already have. It's like a 2 second change.

-

@budududuroiu @mirth @jenniferplusplus Making bug fixing more difficult because legitimate reports get blocked alongside the noise.

-

@jedimb and the alternative is?

-

@jenniferplusplus Big AI is making all AI look bad.

-

@budududuroiu @mirth @jenniferplusplus What we had just a few years ago.

-

@jedimb yeah well that ship has sailed long ago.

-

@budududuroiu @mirth @jenniferplusplus "The plague is here. Let's just live with it" does seem to be a recurring sentiment, but it doesn't change that it's a plague.

-

@jedimb norms are downstream from power. Current power balance is shifted towards frontier labs and hyperscalers, norms around personal computing (RAM prices) and open source software (AI slop floods) are dictated by them.

Moralising AI use with no power to back it up is useless; gatekeeping is power because it says "want to contribute to this project? Abide by our rules."

The case for gatekeeping, or: why medieval guilds had it figured out

Every open source maintainer I've talked to in the last six months has the same complaint: the absolute flood of mass-produced, AI-generated, mass-submitted slop requests have turned their repositories into a slush pile. The contributions look like contributions, they have commit messages, they reference issues and they follow templates etc.

Westenberg. (www.joanwestenberg.com)