Bluesky is down today.

-

@jeromechoo@masto.ai @mcc@mastodon.social Yes, I know that. Trouble is not one content send to many, but many content sent to one.

Each post of one instance is sent only once to mastodon.social, but EACH post. -

System shared this topic

-

@jeromechoo@masto.ai @mcc@mastodon.social Yes, I know that. Trouble is not one content send to many, but many content sent to one.

Each post of one instance is sent only once to mastodon.social, but EACH post.@mcc@mastodon.social @jeromechoo@masto.ai So a huge instance sent dozen of post per second (many content generated, but delivered only one) to another huge instance, with one background job per content to deliver.

-

@mcc@mastodon.social @jeromechoo@masto.ai So a huge instance sent dozen of post per second (many content generated, but delivered only one) to another huge instance, with one background job per content to deliver.

@mcc@mastodon.social @jeromechoo@masto.ai The trouble scale not to the down instance size, but to the alive instance size. The more it is active with many content generated, the fastest the background job queue fill with dangling content.

-

This appears to be the explanation:

Rudolph Fraser. (@rude1.blacksky.team)

Even their relay seems down(?) Trying to switch some things to use atproto.africa https://atproto.africa

Blacksky (blacksky.community)

In Bluesky, the PDS talks to the relay talks to the appview goes to the client. Blacksky set up all four last year. But they only deployed their PDS and client, at first. They used Bluesky's relay and appview. This wasn't clearly disclosed. Then there was a censorship scare, and they switched to their own appview. But apparently they're still using Bluesky's relay. This wasn't clearly disclosed. Now relay death kills Blacksky.

@mcc When I initially raised my eyebrows at Bluesky's notion of "federation", I was told that anyone can run a relay on a small cheap computer, it's dead easy, etc.…

-

@mcc@mastodon.social @jeromechoo@masto.ai The trouble scale not to the down instance size, but to the alive instance size. The more it is active with many content generated, the fastest the background job queue fill with dangling content.

-

Now, interestingly, this means that Blacksky users can continue talking to Blacksky users. I can read Rudy's posts on Blacksky. Because that bypasses the relay. But¹ to read my *own* posts, *on a self-hosted PDS*, Bluesky is apparently required, because Blacksky relies on Bluesky's "relay" to scrape my PDS before it gets added to the Blacksky appview database.

¹ (if I'm interpreting Rudy's posts correctly, hardly a guarantee)

@mcc@mastodon.social From what I understand of the protocol, they could just stop using a relay at all, but then it would increase the traffic on all the PDS that were scrapped by the relay until then, since the AppView would have to connect to each of those instead of the relay.

And did switching to another relay solved the issue?

-

@jeromechoo@masto.ai @mcc@mastodon.social It affect deliver to masto.ai because EACH of my post generate a dangling request, hiting timeout. After a while, my worker consume more time to dangling request taking 2-3s (hiting timeout) than trying to send content to masto.ai.

-

R relay@relay.publicsquare.global shared this topic

-

@jeromechoo@masto.ai @mcc@mastodon.social It affect deliver to masto.ai because EACH of my post generate a dangling request, hiting timeout. After a while, my worker consume more time to dangling request taking 2-3s (hiting timeout) than trying to send content to masto.ai.

@mcc@mastodon.social @jeromechoo@masto.ai Each post is a dangling request which will consume 3s of CPU time and so 10× consumption of 300ms for alive server, and planned for reschedule. After a while, all workers are just stuck with full of 3s waiting process, with starvation for alive requests.

-

(And *how* does ActivityPub avert these problems? Well, ActivityPub has the "instance" abstraction. The federate-or-defederate relationships serve as a basic web of trust so some work, like moderation, doesn't have to be fully duplicated. Data is shared between instances only when a follow-relationship requires it, reducing work. Instances can still get too big and maintainers overworked, but you can fix that problem with more, smaller instances. As above, *there ARE no small Bluesky instances*)

Updates

- Over the last two hours the problem has gone from "I don't see my posts" to "I see my posts 1 hour after I make them" to "17 minutes" to "3 minutes" to "it's fixed". I interpret this as the relay firehose pointer, whatever relay is in use right now, gradually catching up.

- I need to stress the above thread is a mix of fact (ATProto federation is duplicative and often brittle) and conjecture (I can't know what relay is being used internally by Blacksky except if Rudy tells us).

-

@mcc@mastodon.social From what I understand of the protocol, they could just stop using a relay at all, but then it would increase the traffic on all the PDS that were scrapped by the relay until then, since the AppView would have to connect to each of those instead of the relay.

And did switching to another relay solved the issue?

@breizh As of this second, Blacksky has resolved the issue. I don't know how.

-

@mcc@mastodon.social @jeromechoo@masto.ai Each post is a dangling request which will consume 3s of CPU time and so 10× consumption of 300ms for alive server, and planned for reschedule. After a while, all workers are just stuck with full of 3s waiting process, with starvation for alive requests.

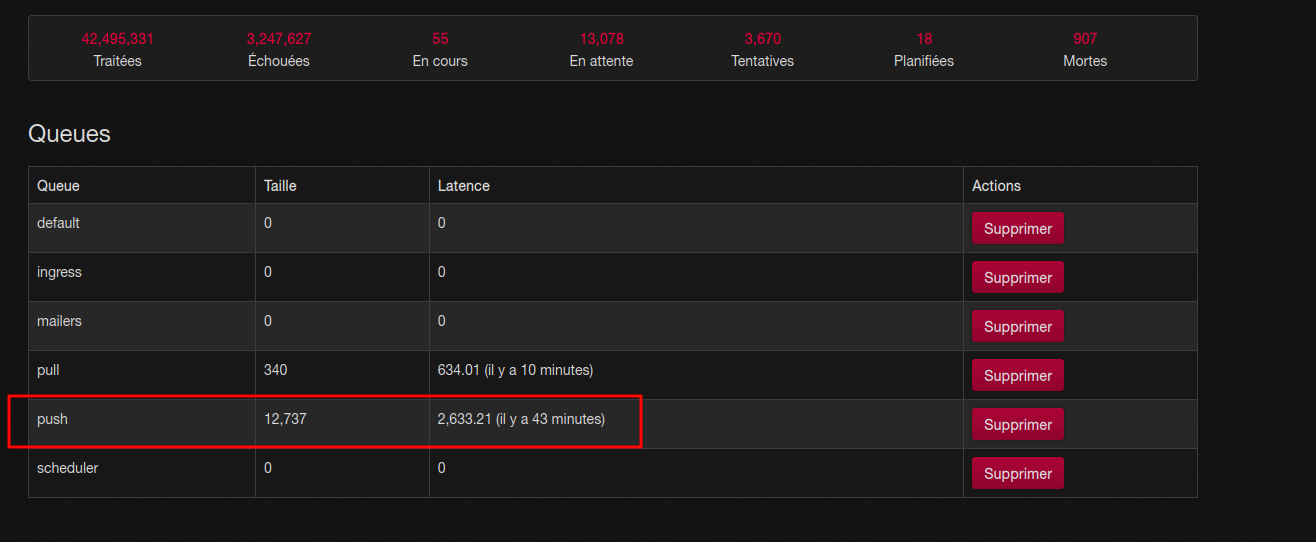

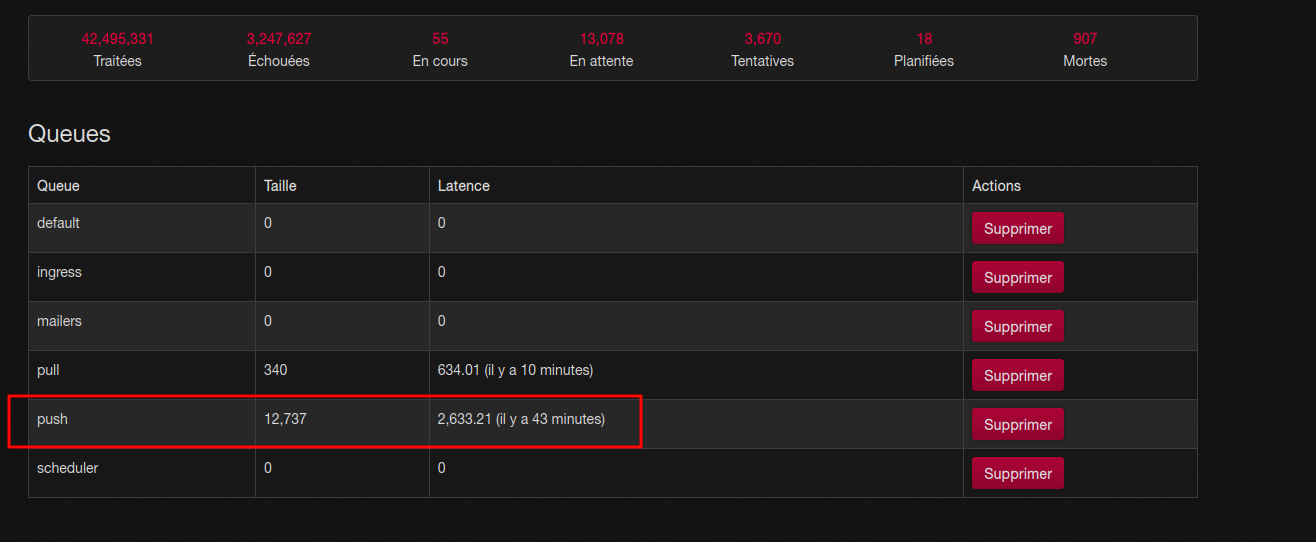

@mcc@mastodon.social @jeromechoo@masto.ai After a while, you have 43 minutes latency for EVERY DELIVERY, even alive server. I experience that on my own Mastodon instance…

-

@mcc@mastodon.social No, it's the trouble with the push design of ActivityPub.

@mcc@mastodon.social @aeris@firefish.imirhil.fr if that's really the case, if anything, that's an implementation problem. Mail servers have dealt with this problem for ages. That's why they have queues and per server exponentially increasing retry intervals. Push is not inherently bad.

-

@mcc this is a really good breakdown, thank you for this thread

-

@jeromechoo@masto.ai @mcc@mastodon.social It affect deliver to masto.ai because EACH of my post generate a dangling request, hiting timeout. After a while, my worker consume more time to dangling request taking 2-3s (hiting timeout) than trying to send content to masto.ai.

-

@mcc@mastodon.social @jeromechoo@masto.ai After a while, you have 43 minutes latency for EVERY DELIVERY, even alive server. I experience that on my own Mastodon instance…

@mcc@mastodon.social @jeromechoo@masto.ai At the end any workers just have 7% of "luck" (3 out of 42) to hit a down request, consuming resource for nothing for 2-3s, having no more time to schedule alive server, with 13.000 pending request because starvation, with many many alive request in those 13.000. Perhaps the 13.000th will be a alive one, but it will be delivered in only 43 minutes in average.

-

@jeromechoo@masto.ai @mcc@mastodon.social No, it not running fine. I ALREADY reported 43 minutes latency to deliver ANY MESSAGE on Mastodon. This "bug" (in fact bad design) is known since ages.

https://github.com/mastodon/mastodon/issues/12445 -

@jeromechoo@masto.ai @mcc@mastodon.social No, it not running fine. I ALREADY reported 43 minutes latency to deliver ANY MESSAGE on Mastodon. This "bug" (in fact bad design) is known since ages.

https://github.com/mastodon/mastodon/issues/12445@mcc@mastodon.social @jeromechoo@masto.ai And my new instance (migrating from Mastodon to Misskey exactly for this reason) is ALREADY filled with 600 dangling requests. At this point it doesn't generate any noticable delay, but only because the overall death rate is low. If a huge instance goes down, it would not be the same at all.

-

@mcc@mastodon.social @jeromechoo@masto.ai At the end any workers just have 7% of "luck" (3 out of 42) to hit a down request, consuming resource for nothing for 2-3s, having no more time to schedule alive server, with 13.000 pending request because starvation, with many many alive request in those 13.000. Perhaps the 13.000th will be a alive one, but it will be delivered in only 43 minutes in average.

-

P2P is a world where naturally the more people use it, the faster and more resilient the network becomes. Load gets distributed. Working nodes talk to each other and ignore nonworking nodes. That's how the primitive, BitTorrent era systems worked.

Bluesky somehow applied superfancy alien future technology to invent P2P traffic jams. When one node goes down, the others go down because they depended on it. Because it's a mesh of interoperating microservices by different providers, not federation.

@mcc the worst are distributed p2p attacks like i watch in ipfs.