Bluesky is down today.

-

@mcc@mastodon.social Fedi is not both. And worse, by design, Fediverse is LESS distributed, because a down instance will stuck ALL other instance with dangling job, because it's a push protocol and not a pull one.

@aeris I don't think you know what you're talking about, and if mastodon.social goes down firefish.imirhill.fr can continue to talk to digipres.club.

-

@thisismissem Yes, they have had a relay for over a year. But they're apparently not using it, similar to how they had an appview for about six months before they started using it. My source for this is Rudy saying, in the link upthread, that current blacksky service outages are due to the Bluesky relay going down and he is trying to "switch over to atproto.africa for some things". Implying he was not using atproto.africa for those things 24 hours ago.

@mcc oh! Interesting, I'd just assumed they were already using it.

-

@mcc@mastodon.social Each message from my instance will generate a job to mastodon.social, because at least one ppl there follow me. If mastodon.social vanish, my own instance will start to hang for ALL message I post.

@aeris I do not believe this is true and if it does it indicates some kind of really weird problem with your instance specifically.

-

@aeris I don't think you know what you're talking about, and if mastodon.social goes down firefish.imirhill.fr can continue to talk to digipres.club.

@mcc@mastodon.social No. Or with huge delay. Because each of my message will generate a background job to mastodon.social, leading to queue overflow over time and more and more lag even for digipres.club delivery.

-

TLDR

1. My definition of "P2P" or "Federated" is that if server A goes down, servers B and C can still talk to each other.

2. Bluesky/"Atmosphere" fails at this because Blacksky (B) requires Bluesky (A) to talk to me (C).

3. In order for Blacksky to avert this, they have to do something unreasonable and expensive.

4. Blacksky someday *will* do this, but will depend heavily on massively overworking Rudy and a few other people. This may someday fail.

5. ActivityPub has problems, but not these

But if the problem is the relay couldn't they just use the microcosm.blue relays and call it a day ?? They are compatible with the original ones

And didn't Blacksky also ran their own relay at atproto.africa ??

-

@aeris I do not believe this is true and if it does it indicates some kind of really weird problem with your instance specifically.

@mcc@mastodon.social No, it's the trouble with the push design of ActivityPub.

-

But if the problem is the relay couldn't they just use the microcosm.blue relays and call it a day ?? They are compatible with the original ones

And didn't Blacksky also ran their own relay at atproto.africa ??

@javascript Before I attempt to reply to this, please clarify whether read the post I posted above.

Rudolph Fraser. (@rude1.blacksky.team)

Even their relay seems down(?) Trying to switch some things to use atproto.africa https://atproto.africa

Bluesky Social (bsky.app)

Rudolph Fraser. (@rude1.blacksky.team)

Even their relay seems down(?) Trying to switch some things to use atproto.africa https://atproto.africa

Blacksky (blacksky.community)

Yes, they've been running atproto.africa since last year. But are they *using* it?

-

@mcc@mastodon.social No, it's the trouble with the push design of ActivityPub.

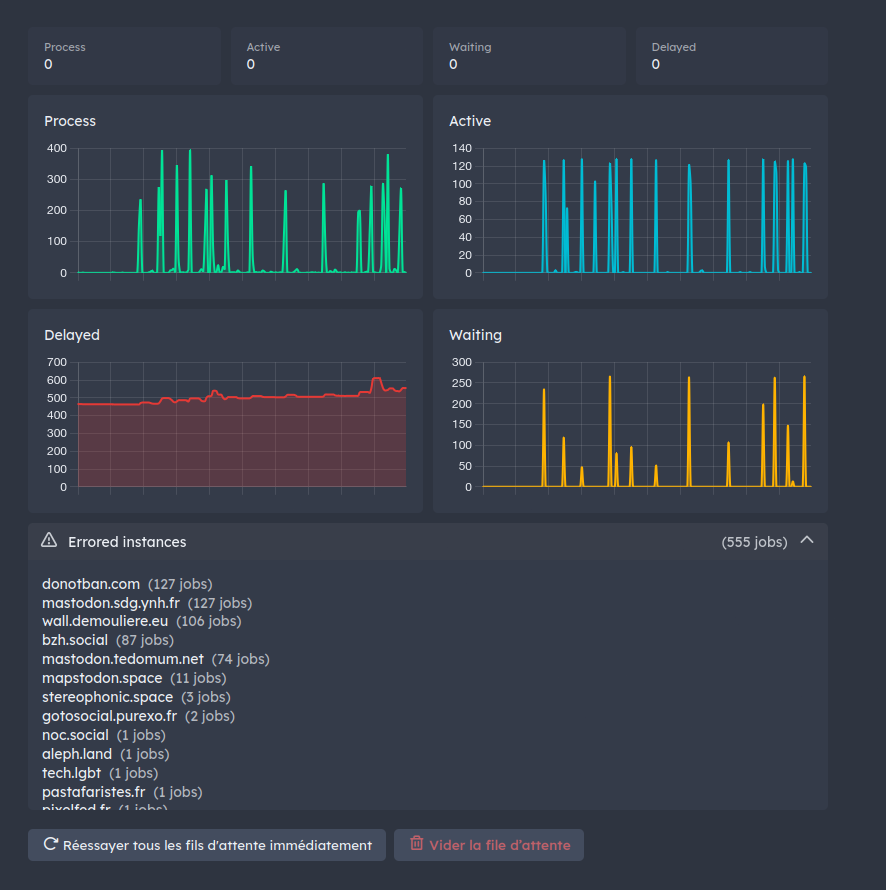

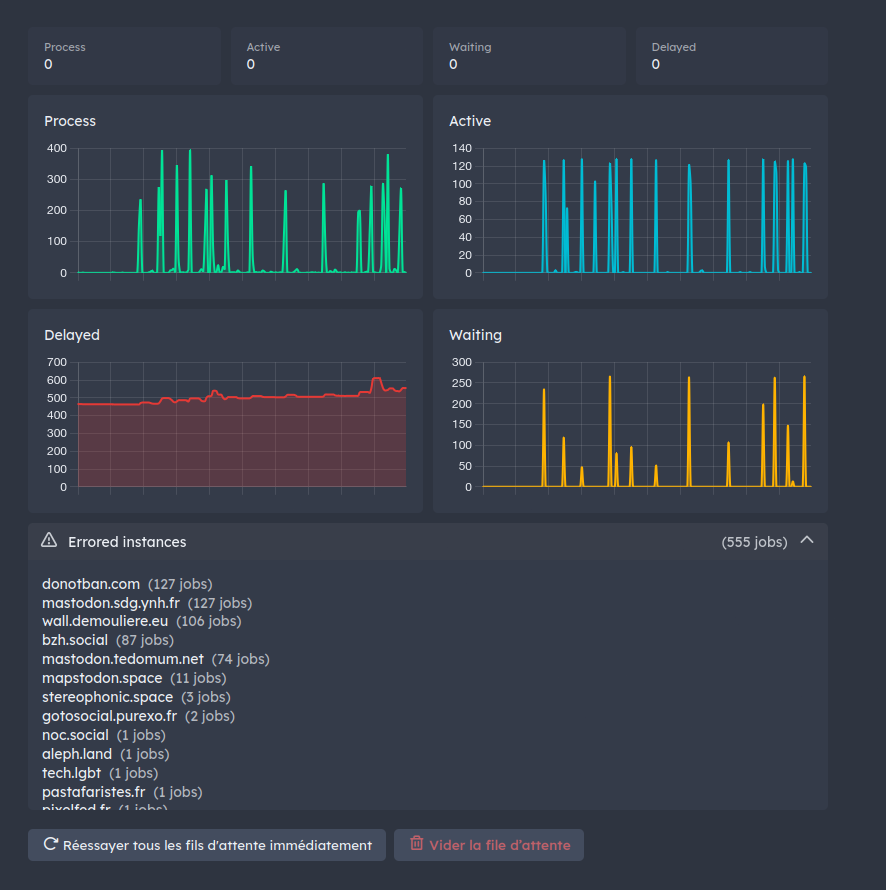

@mcc@mastodon.social Each of your message generate a background job on a queue to be submitted to every instance with at least one ppl following you. If a huge one is down, all other instances will start to fill background queue with tons of dangling query, delaying more and more request for still live instance.

-

This is why I believe Bluesky was never meant to be federated. To create a Bluesky "instance", like Blacksky is heroically attempting, you have to perfectly duplicate every server Bluesky runs. But Bluesky is a business operating at a loss by burning unlimited-for-now VC cash. That has always implied only a business with unlimited VC cash can create an instance. Blacksky is succeeding. Except on days where they aren't.

@mcc@mastodon.social they're allowed to succeed so they can be paraded around thet "see, it's all super distributed and decentralized".

The moment VCs realize they need RoI a bunch of " improvements" likely mostly "for security", probably " for safety", definitely "for the children" will add to the already insane architectural costs, a bunch of operafional burden that makes it impposible for other "instances" to exist. -

@mcc@mastodon.social Each of your message generate a background job on a queue to be submitted to every instance with at least one ppl following you. If a huge one is down, all other instances will start to fill background queue with tons of dangling query, delaying more and more request for still live instance.

@mcc@mastodon.social Currently i have around 600 "delayed" job because of down instance polluting all delivery. This was reported to Mastodon years ago. Nothing change.

-

@mcc oh! Interesting, I'd just assumed they were already using it.

@thisismissem @mcc probably worth noting that atproto.africa also appears to be down right now, and some microcosm services also appear to be going up and down

firehose.network and the microcosm relays look to be unaffected for now

-

@eestileib @mcc I'm no expert but it honestly sounds like a terrible way to build a network. or at least a pretty confounding way to build a network that you intend to be federated and decentralized in any capacity...

I have a skywalking friend and he says that if blacksky users had configured something in their app to make blacksky primary (which, to be fair, had never mattered before), their timelines would have remained synced with other blacksky users.

And also that blacksky was getting pulled down by bluesky repeatedly coming up, demanding to know the status of every lily in the field, then crashing.

Sounds like they need to come up with a more graceful recovery process and get bluesky to agree with it.

-

@javascript Before I attempt to reply to this, please clarify whether read the post I posted above.

Rudolph Fraser. (@rude1.blacksky.team)

Even their relay seems down(?) Trying to switch some things to use atproto.africa https://atproto.africa

Bluesky Social (bsky.app)

Rudolph Fraser. (@rude1.blacksky.team)

Even their relay seems down(?) Trying to switch some things to use atproto.africa https://atproto.africa

Blacksky (blacksky.community)

Yes, they've been running atproto.africa since last year. But are they *using* it?

I couldn't read the post linked above until you posted it again now, but I thought it was a bug in my software (wafrn) not getting the links right

-

@mcc@mastodon.social Currently i have around 600 "delayed" job because of down instance polluting all delivery. This was reported to Mastodon years ago. Nothing change.

@mcc@mastodon.social For tiny instance, it's not really a trouble, because few message and so queue don't fill.

For huge instance, pretty all message from all instances will generate a dangling request in queue. When queue filled, delay all message for any other instance even the one alive. -

@thisismissem @mcc probably worth noting that atproto.africa also appears to be down right now, and some microcosm services also appear to be going up and down

firehose.network and the microcosm relays look to be unaffected for now

-

I have a skywalking friend and he says that if blacksky users had configured something in their app to make blacksky primary (which, to be fair, had never mattered before), their timelines would have remained synced with other blacksky users.

And also that blacksky was getting pulled down by bluesky repeatedly coming up, demanding to know the status of every lily in the field, then crashing.

Sounds like they need to come up with a more graceful recovery process and get bluesky to agree with it.

@eestileib @nasser Posts hosted on the Blacksky PDS are appearing on the Blacksky AppView immediately. That's definitely true.

-

@mcc@mastodon.social For tiny instance, it's not really a trouble, because few message and so queue don't fill.

For huge instance, pretty all message from all instances will generate a dangling request in queue. When queue filled, delay all message for any other instance even the one alive.@mcc@mastodon.social And it's worst for huge still alive instance. Hundred of message per second. Hundred of job per second for down instance. Hundred of dead job filling queue because timeout, competing resources for alive job. At a point, all workers process only dead job…

-

TLDR

1. My definition of "P2P" or "Federated" is that if server A goes down, servers B and C can still talk to each other.

2. Bluesky/"Atmosphere" fails at this because Blacksky (B) requires Bluesky (A) to talk to me (C).

3. In order for Blacksky to avert this, they have to do something unreasonable and expensive.

4. Blacksky someday *will* do this, but will depend heavily on massively overworking Rudy and a few other people. This may someday fail.

5. ActivityPub has problems, but not these

@mcc This is a good take, mcc.

-

@mcc@mastodon.social And it's worst for huge still alive instance. Hundred of message per second. Hundred of job per second for down instance. Hundred of dead job filling queue because timeout, competing resources for alive job. At a point, all workers process only dead job…

@mcc@mastodon.social I don't know exactly what would be the effect of a 10 hour downtime like bluesky for a mastodon.social downtime for example. I expect at least delay growing over time even from no mastodon.social communication.

-

@mcc@mastodon.social And it's worst for huge still alive instance. Hundred of message per second. Hundred of job per second for down instance. Hundred of dead job filling queue because timeout, competing resources for alive job. At a point, all workers process only dead job…

@aeris If this problem is real I can imagine multiple ways to mitigate it. This is a software engineering problem.