sure this is all very bad for activitypub but this is truly amazing content

-

@faraiwe @thomasfuchs @laurenshof i hope you're aware that activitypub is just as public as atproto and that there is nothing stopping someone from creating/running the "tracking algorithms" you claim atproto does by default on this network

@esm I hope you are aware that anything shoved down our throats by techbros is to be seen as toxic and harmful, to their exclusive gain, and aligning yourself with their interests is stupid to the point of suicidal.

Don't let me know.

-

@anders Thanks, those are nice comments.

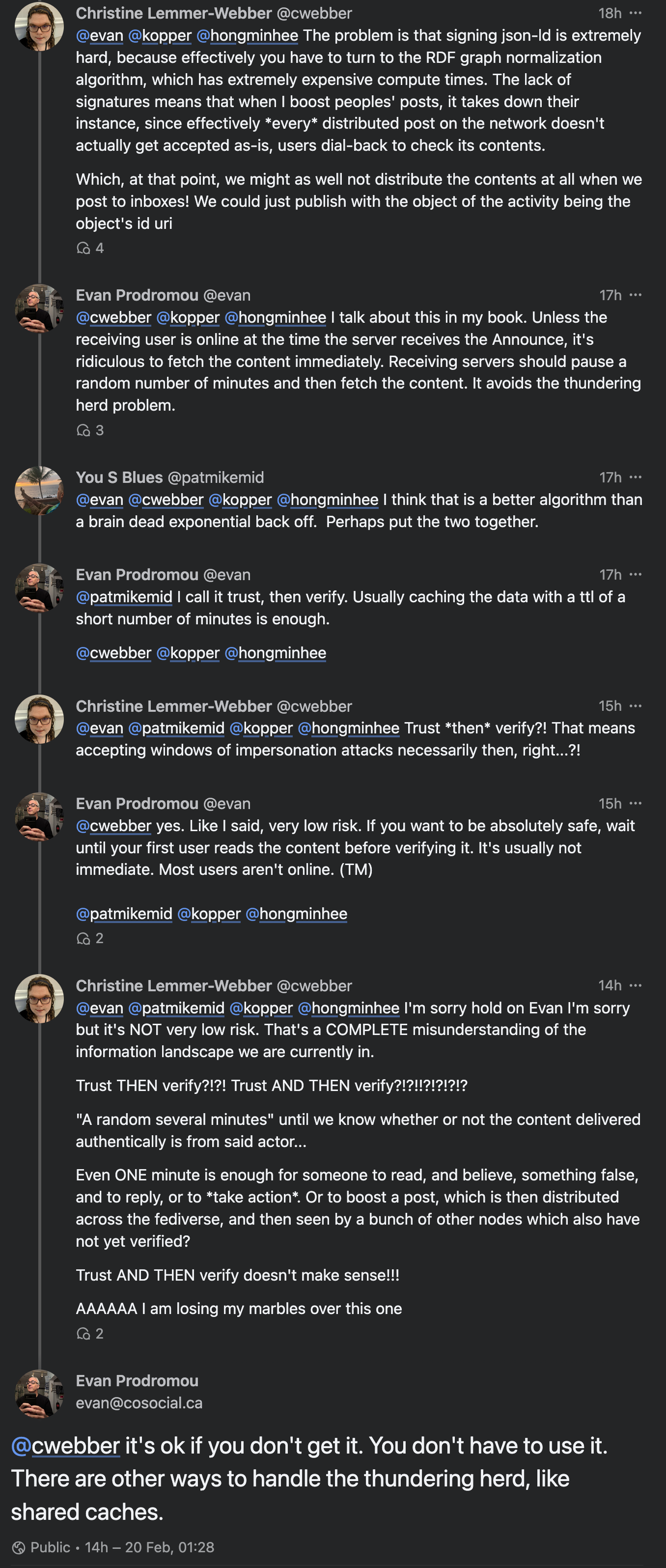

I should also note that I'm not averse to Christine's ideas around using digital signatures for verifying content. They can improve performance by short-circuiting some verification that would require fetching the content over HTTP.

I do disagree that they are the *only* way to improve that performance, and that it's worth breaking backwards compatibility of the network in order to enable digital signatures.

@evan @anders @promovicz @laurenshof It doesn't need to break backwards compatibility tho

But anyway

Long conversation potentially

-

@laurenshof I didn't think it was that bad at all.

@evan As a newbie to the tech end of decentralized spaces, I’m curious as to why you don’t think it should be “verify, then trust” or other alternatives that don’t allow bad actors to get at people who are not tech-savvy.

-

@faraiwe @thomasfuchs @laurenshof i hope you're aware that activitypub is just as public as atproto and that there is nothing stopping someone from creating/running the "tracking algorithms" you claim atproto does by default on this network

apparently i was blocked for saying this, reminder that pointing out that a bad thing that's possible on the "bad" network is also possible on the "good" network is not an endorsement of said bad thing in any way

cognitive dissonance and putting your head in the sand are not good strategies to handle potential threats

-

@evan As a newbie to the tech end of decentralized spaces, I’m curious as to why you don’t think it should be “verify, then trust” or other alternatives that don’t allow bad actors to get at people who are not tech-savvy.

@trishalynn Sure! So, there are two main events that happen here:

- The server receives the third-party data

- One of the server's users reads the third-party data

There are usually at least a few minutes, and sometimes a few hours or even days between these two events.

-

@trishalynn Sure! So, there are two main events that happen here:

- The server receives the third-party data

- One of the server's users reads the third-party data

There are usually at least a few minutes, and sometimes a few hours or even days between these two events.

@trishalynn The question is, when should the server *verify* the third-party data?

To be conservative, at the very least, the third-party data should be verified before one of the server's users reads it.

-

@trishalynn The question is, when should the server *verify* the third-party data?

To be conservative, at the very least, the third-party data should be verified before one of the server's users reads it.

@trishalynn If the user is online when the data is received, there may be no time between the time the data is received and when the user reads it.

However, most users aren't online most of the time. There's a strong chance that there are minutes, hours, or days between when the data is received and when it is read.

-

@trishalynn If the user is online when the data is received, there may be no time between the time the data is received and when the user reads it.

However, most users aren't online most of the time. There's a strong chance that there are minutes, hours, or days between when the data is received and when it is read.

@evan I should think that the server should verify first even if the user is not active online.

-

@trishalynn If the user is online when the data is received, there may be no time between the time the data is received and when the user reads it.

However, most users aren't online most of the time. There's a strong chance that there are minutes, hours, or days between when the data is received and when it is read.

@trishalynn Most ActivityPub implementations today lean waaaaaay into the early part of this gap -- verifying the data as soon as it is received.

The problem with this is that sometimes hundreds or even thousands of servers receive the data within a few seconds -- and if they all verify the data with the third-party server immediately, it can swamp that server with requests.

-

@evan I should think that the server should verify first even if the user is not active online.

@trishalynn Before it's read by a user, yes.

-

@trishalynn Most ActivityPub implementations today lean waaaaaay into the early part of this gap -- verifying the data as soon as it is received.

The problem with this is that sometimes hundreds or even thousands of servers receive the data within a few seconds -- and if they all verify the data with the third-party server immediately, it can swamp that server with requests.

@trishalynn One way to relieve this pressure on the third-party server is to space out all these requests by seconds or even minutes. There are a couple of ways to do this.

-

@trishalynn One way to relieve this pressure on the third-party server is to space out all these requests by seconds or even minutes. There are a couple of ways to do this.

@trishalynn One is to wait until the first reader reads the data. That event is going to vary wildly across servers, so it will spread out the requests and lower the load on the third-party server. The downside of this technique is that it introduces some extra time for that first read. Usually not a lot, but some.
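
A minimal sketch of the "verify on first read" idea, in Python. Everything here is hypothetical and for illustration only: `LazyInbox`, `StoredActivity`, and the `verify` callback (which stands in for whatever HTTP fetch a real server would make to the third-party server) are assumed names, not part of any ActivityPub implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class StoredActivity:
    payload: dict
    verified: bool = False


class LazyInbox:
    """Stores incoming data unverified and checks it on first read (hypothetical)."""

    def __init__(self, verify: Callable[[dict], bool]):
        # `verify` is an assumed callback; a real server would fetch the
        # object from its origin server and compare it with the payload.
        self._verify = verify
        self._items: Dict[str, StoredActivity] = {}

    def receive(self, activity_id: str, payload: dict) -> None:
        # No request goes to the third-party server at receive time.
        self._items[activity_id] = StoredActivity(payload)

    def read(self, activity_id: str) -> Optional[dict]:
        item = self._items.get(activity_id)
        if item is None:
            return None
        if not item.verified:
            # The first reader pays the cost of the verification request.
            if not self._verify(item.payload):
                del self._items[activity_id]  # drop data that fails the check
                return None
            item.verified = True
        return item.payload
```

In this sketch only the first `read` triggers a verification request; later reads reuse the stored result, which is where the small first-read delay comes from.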

-

@trishalynn One is to wait until the first reader reads the data. That event is going to vary wildly across servers, so it will spread out the requests and lower the load on the third-party server. The downside of this technique is that it introduces some extra time for that first read. Usually not a lot, but some.

@trishalynn Another is for the receiving server to wait a random number of seconds or minutes before doing the verification request. This spaces out the requests, and hopefully avoids the little delay for the user on first read. At worst, if a user tries to read the data before the verification timeout, you can do the verification then -- it's no worse than the previous method, and will usually be better.
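
The random-delay approach could look roughly like the Python sketch below. It is an illustration under assumptions: `JitteredVerifier`, the `verify` callback, and the 300-second default window are made up for the example, and time is passed in explicitly so the logic can be followed without a real clock or job queue.

```python
import random
from typing import Callable, Dict, Optional, Set


class JitteredVerifier:
    """Schedules each verification at a random delay after receipt (hypothetical)."""

    def __init__(self, verify: Callable[[dict], bool],
                 max_delay_seconds: float = 300.0,  # assumed window, not from the thread
                 rng: Optional[random.Random] = None):
        self._verify = verify
        self._max_delay = max_delay_seconds
        self._rng = rng or random.Random()
        self._payloads: Dict[str, dict] = {}
        self._due: Dict[str, float] = {}  # activity id -> time to verify at
        self._verified: Set[str] = set()

    def receive(self, activity_id: str, payload: dict, now: float) -> None:
        self._payloads[activity_id] = payload
        # Each receiving server picks its own delay, which spreads the
        # requests hitting the third-party server over the whole window.
        self._due[activity_id] = now + self._rng.uniform(0, self._max_delay)

    def run_due(self, now: float) -> None:
        # Called periodically by a background worker (assumed to exist).
        for activity_id in [a for a, t in self._due.items() if t <= now]:
            self._check(activity_id)

    def read(self, activity_id: str) -> Optional[dict]:
        # A read before the timeout falls back to verify-on-first-read.
        if activity_id in self._due:
            self._check(activity_id)
        return self._payloads.get(activity_id)

    def _check(self, activity_id: str) -> None:
        del self._due[activity_id]
        if self._verify(self._payloads[activity_id]):
            self._verified.add(activity_id)
        else:
            del self._payloads[activity_id]  # drop data that fails the check
```

The `read` fallback is what makes this no worse than waiting for the first reader: if the user arrives before the random timeout, the check simply happens then.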

-

@trishalynn One is to wait until the first reader reads the data. That event is going to vary wildly across servers, so it will spread out the requests and lower the load on the third-party server. The downside of this technique is that it introduces some extra time for that first read. Usually not a lot, but some.

@evan (Could you please let me know when you’re done explaining? I don’t want to jump in with clarifying Qs till you’re done.)

-

@trishalynn Another is for the receiving server to wait a random number of seconds or minutes before doing the verification request. This spaces out the requests, and hopefully avoids the little delay for the user on first read. At worst, if a user tries to read the data before the verification timeout, you can do the verification then -- it's no worse than the previous method, and will usually be better.

@trishalynn So, the last part, which I think is most controversial, is showing the unverified data to the user -- doing the verification *after* the first read.

This requires a lot of trust between the actors. But if a sending actor has sent 10 or 1000 or 10,000 shares, all of which have previously verified correctly, there's a very good chance that share number 10001 is also going to verify correctly.

-

@trishalynn So, the last part, which I think is most controversial, is showing the unverified data to the user -- doing the verification *after* the first read.

This requires a lot of trust between the actors. But if a sending actor has sent 10 or 1000 or 10,000 shares, all of which have previously verified correctly, there's a very good chance that share number 10001 is also going to verify correctly.

@trishalynn This requires a lot more tracking on the receiving server's part. I'm not even sure the performance benefits are that great, compared to waiting for first-read instead of verifying on receipt. But for high-volume servers, it might be a valuable strategy in the future.
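
To make the extra tracking concrete, here is a rough Python sketch of "show first, verify afterwards" for senders with a clean record. All of it is assumed for illustration: `TrustingReader`, the `verify` callback, the `min_verified` threshold of 10, and the rule that a single failure removes trust are choices made for the example, not anything specified in this thread or in ActivityPub.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List, Optional, Set, Tuple


class TrustingReader:
    """Shows data from well-behaved senders before verifying it (hypothetical)."""

    def __init__(self, verify: Callable[[dict], bool], min_verified: int = 10):
        self._verify = verify
        self._min_verified = min_verified  # assumed threshold
        self._verified_count: DefaultDict[str, int] = defaultdict(int)
        self._distrusted: Set[str] = set()
        self._pending: List[Tuple[str, dict]] = []

    def is_trusted(self, sender: str) -> bool:
        return (sender not in self._distrusted
                and self._verified_count[sender] >= self._min_verified)

    def read(self, sender: str, payload: dict) -> Optional[dict]:
        if self.is_trusted(sender):
            # Trusted sender: show now, verify after the read.
            self._pending.append((sender, payload))
            return payload
        # Unknown or distrusted sender: verify before showing anything.
        return payload if self._check(sender, payload) else None

    def verify_pending(self) -> None:
        # Run later, e.g. from a background job (assumed to exist).
        pending, self._pending = self._pending, []
        for sender, payload in pending:
            self._check(sender, payload)

    def _check(self, sender: str, payload: dict) -> bool:
        ok = self._verify(payload)
        if ok:
            self._verified_count[sender] += 1
        else:
            # One failure is enough to stop trusting this sender here.
            self._verified_count[sender] = 0
            self._distrusted.add(sender)
        return ok
```

The per-sender counters and the pending queue are the "lot more tracking" in question; whether that bookkeeping pays for itself compared with plain first-read verification is left open here, as it is in the thread.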

-

@evan (Could you please let me know when you’re done explaining? I don’t want to jump in with clarifying Qs till you’re done.)

@trishalynn I think I'm done!

-

@trishalynn This requires a lot more tracking on the receiving server's part. I'm not even sure the performance benefits are that great, compared to waiting for first-read instead of verifying on receipt. But for high-volume servers, it might be a valuable strategy in the future.

@evan What's the effect on a high-volume server versus a lower-volume server when the ethos of "trust, then verify" is used to implement a solution?

-

@evan @anders @promovicz @laurenshof It doesn't need to break backwards compatibility tho

But anyway

Long conversation potentially

@cwebber The original conversation was about removing JSON-LD and potentially using another schema language or making one up, or throwing away extensibility altogether. That would break backwards compatibility.

I agree, we might be able to add digital signatures without removing JSON-LD.

-

@evan What's the effect on a high-volume server versus a lower-volume server when the ethos of "trust, then verify" is used to implement a solution?

@trishalynn OK, so, you're good with the idea that the data doesn't have to be verified until the first user reads it, correct? We're good up until there?