I don't understand how or why any person who knows how to read can claim AI systems are good at summarising.

-

I don't understand how or why any person who knows how to read can claim AI systems are good at summarising. But then I realise what they are claiming is different:

1️⃣ they don't know what summary means

2️⃣ they don't care about EVIDENCE AGAINST their views like...

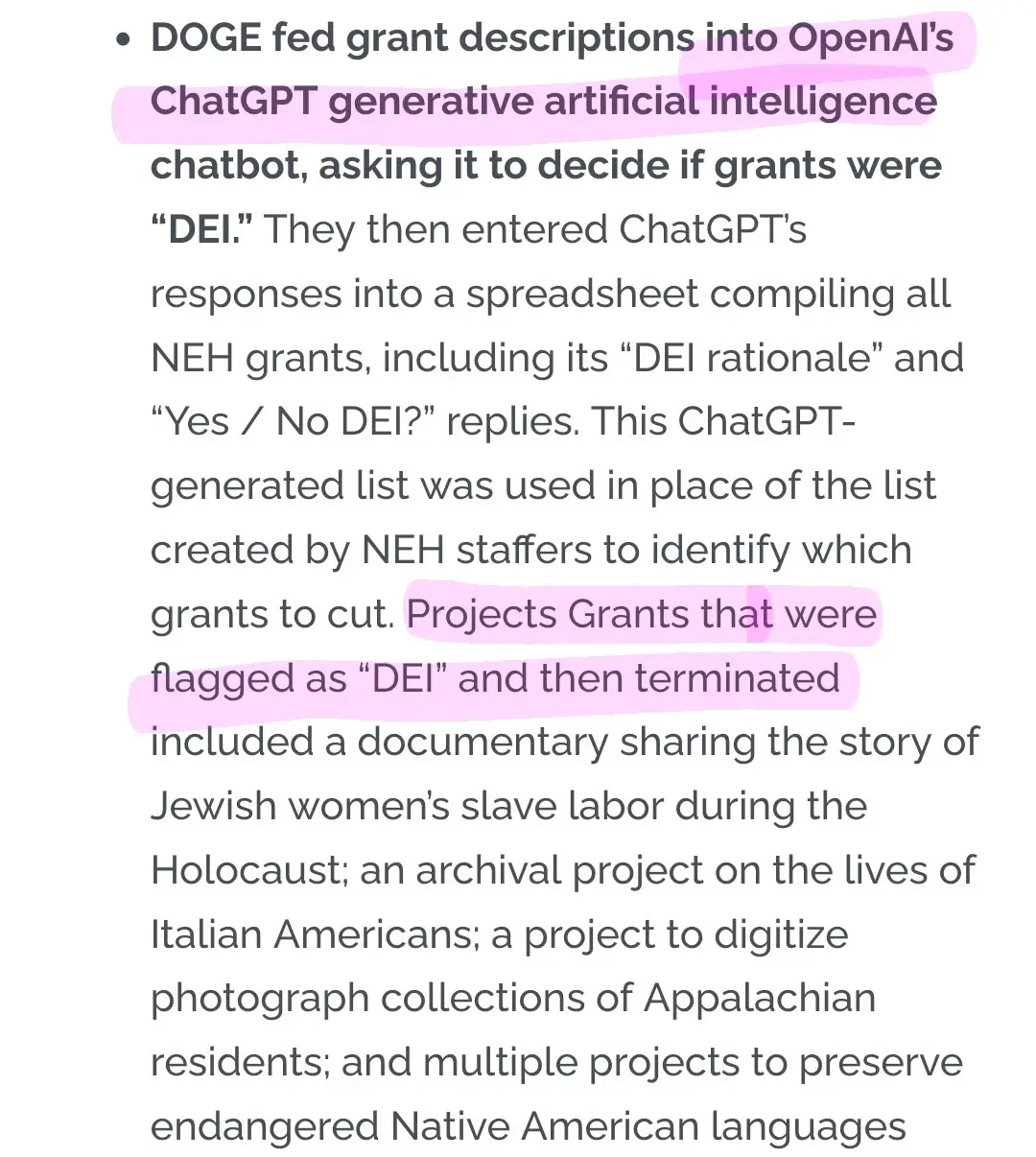

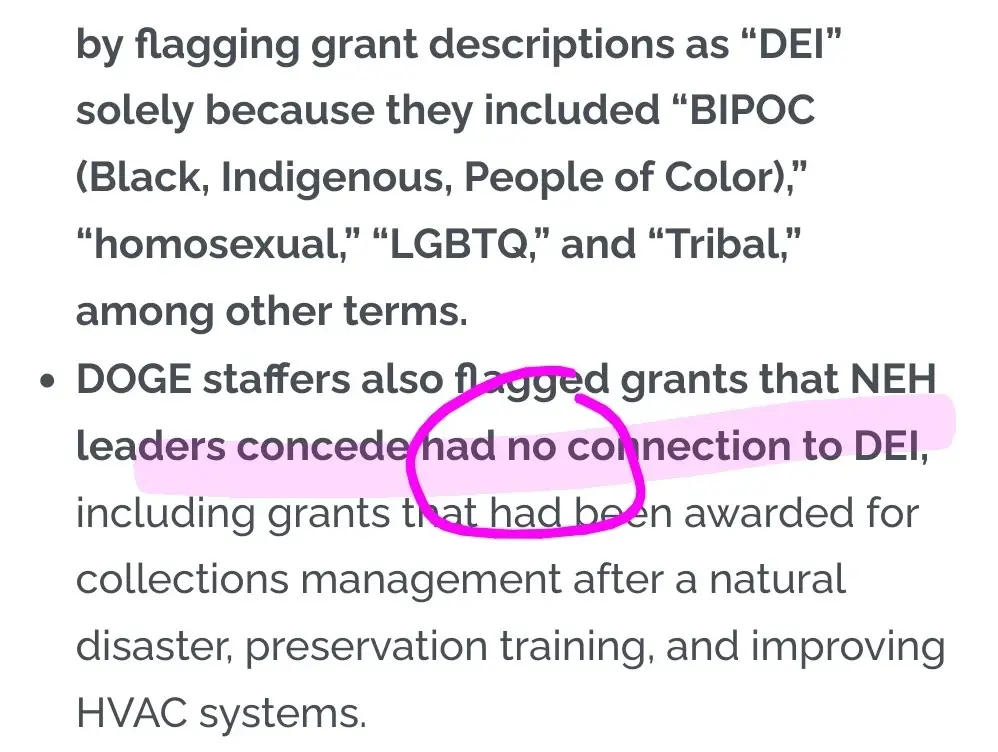

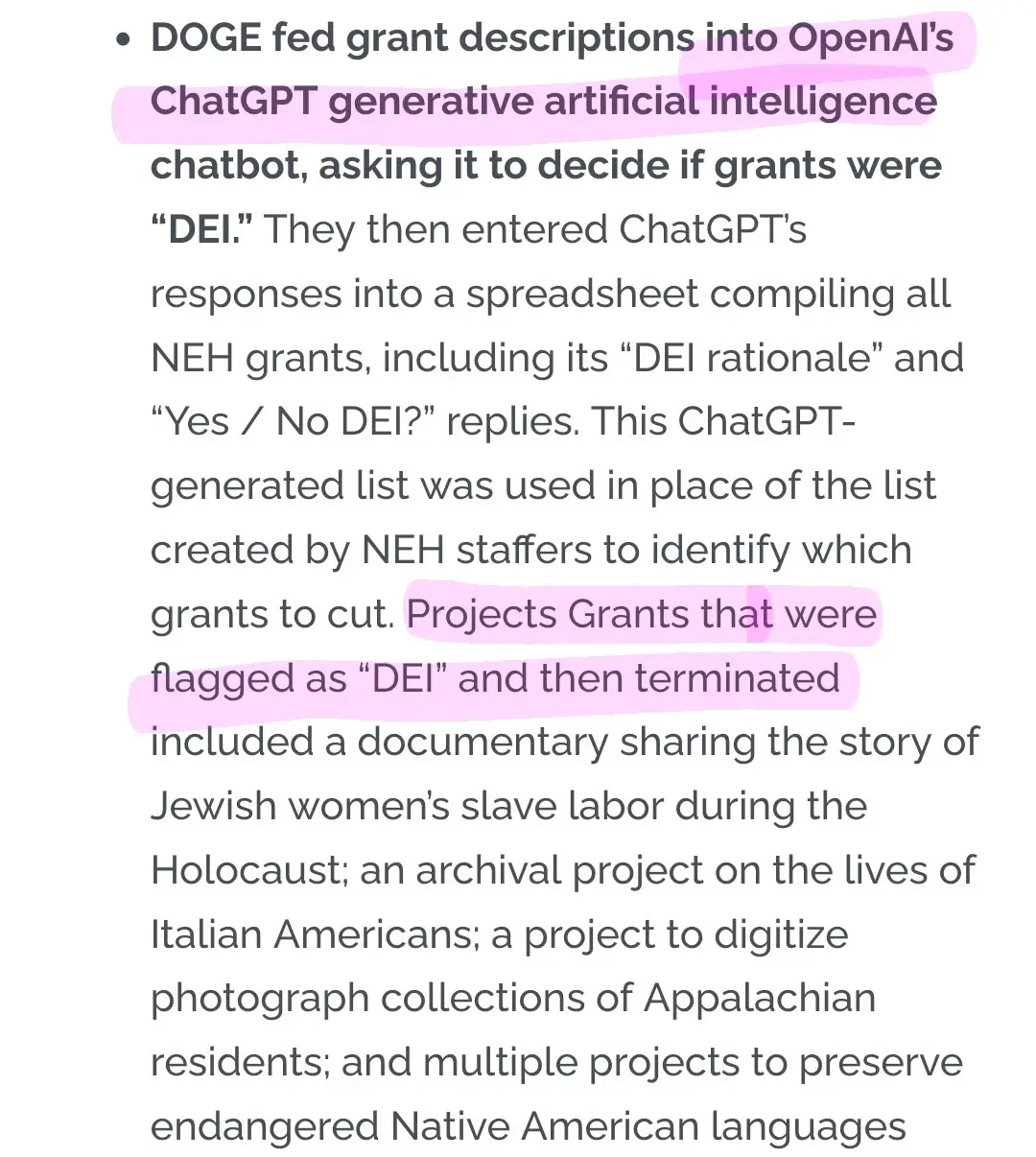

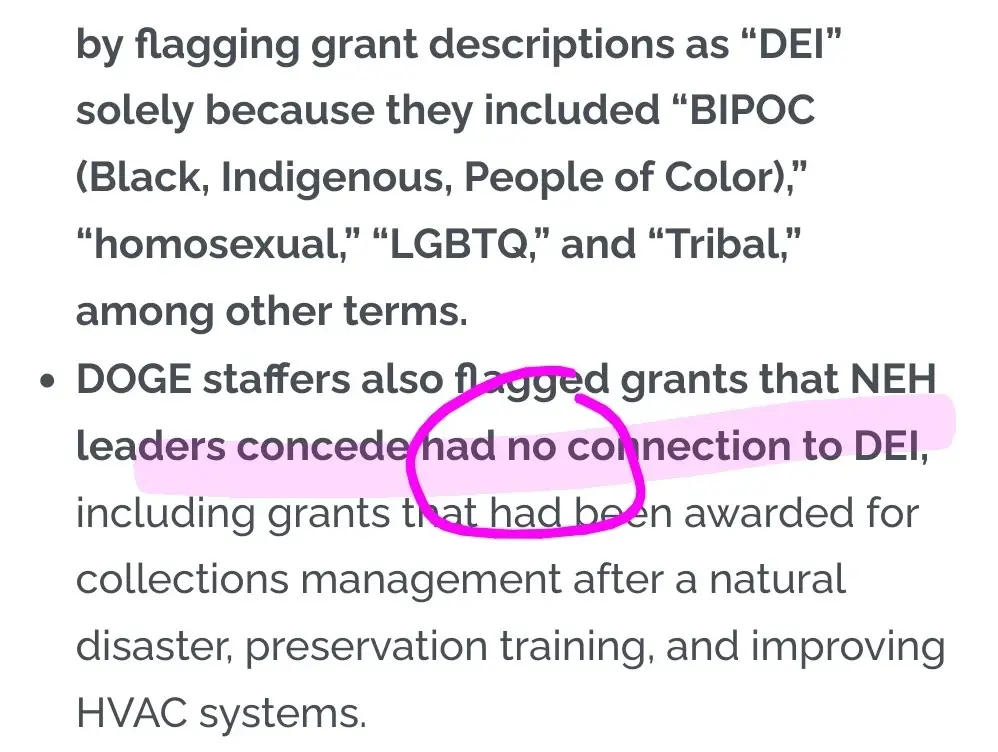

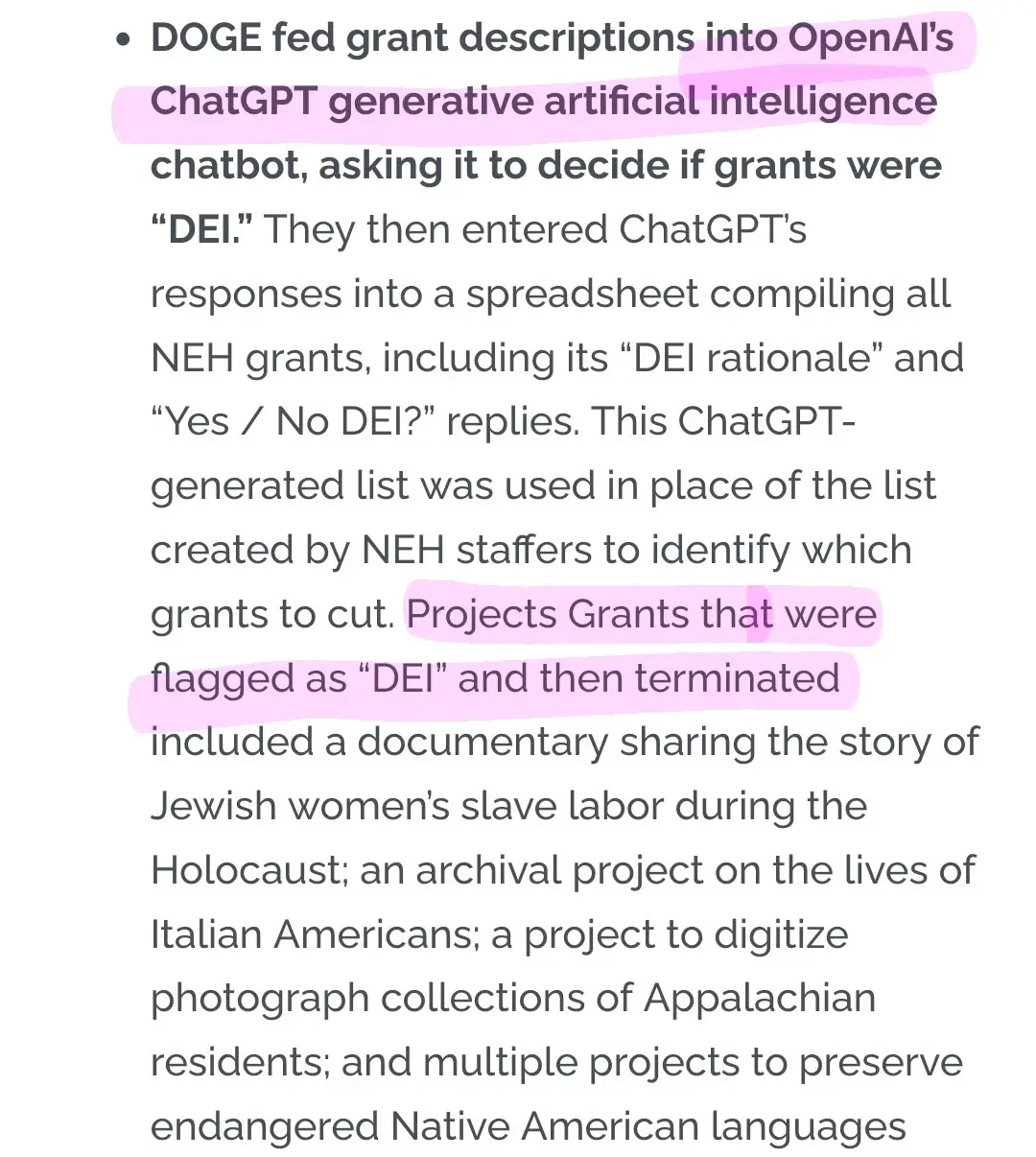

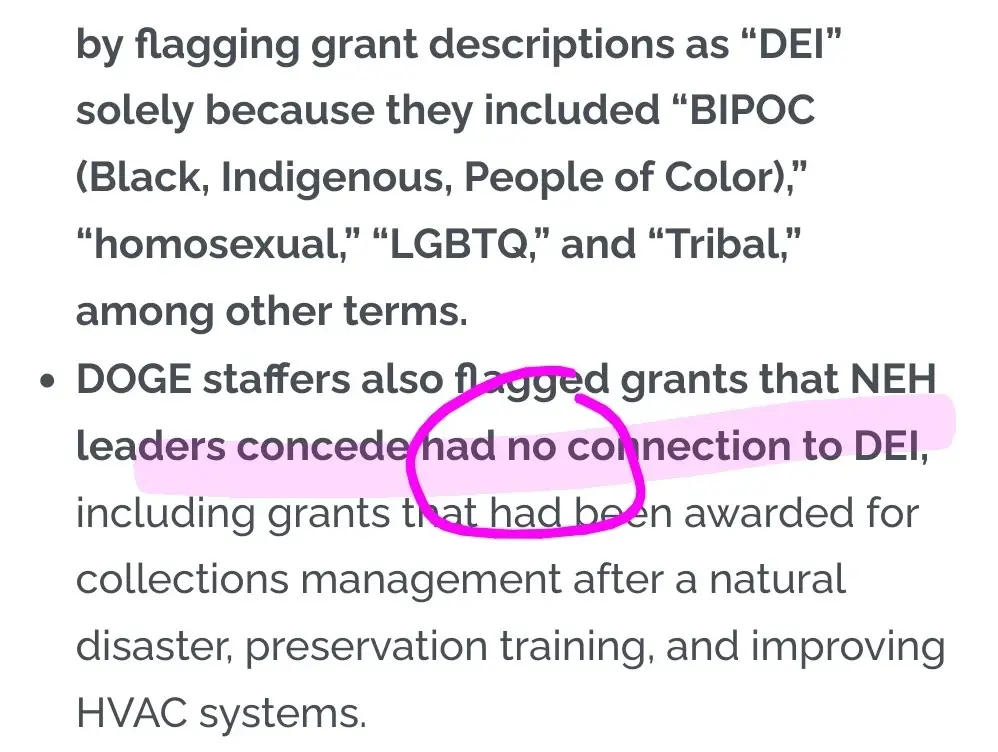

Major Update in our NEH Lawsuit - AHA

On March 6, 2026, the American Historical Association and our co-plaintiffs filed a motion for summary judgment. Depositions and records obtained through the discovery process detail the role of DOGE staff in cancelling humanities grants, and how both the Federal Equal Protection Clause of the 5th Amendment and the Federal…

AHA (www.historians.org)

-

In other words, I understand and I see your motivated reasoning and I raise you: I don't have a conflict of interest so I know AI cannot do that

More context https://flipboard.com/@404media/404-media-qvt3vv94z/-/a-Scki3aliRTqz_5qqo3-DBQ%3Aa%3A4082434389-%2F0

-

@olivia me neither

Jonathan Schofield (@urlyman@mastodon.social)

I have a client who is a lovely person, but they are all in on LLM ‘help’. Yesterday, a bit frazzled after getting nowhere with a tech challenge for another client, I asked them to repeat and clarify some requests they had made of me which I needed to action. They did, which is great. But they also sent me an AI summary of a meeting we had had, pointing out that the information I needed was in that summary. Except it wasn’t, and the bit that was in there was wrong 🤷‍♂️

Mastodon (mastodon.social)

-

@olivia I was just writing a reply about motivated reasoning when you extended this - I think a lot of this isn't more than "we wish there was a way to obviate the need to read lots of documents, so we're going to assume this tool does that".

(That is: they *know* what a summary is, but they want to not have to read stuff sufficiently strongly that this overrides any other consideration.)

-

@aoanla indeed, but they are also implicitly claiming they don't know what a summary is (even if they do know) to trap us into that (waste of time) cycle of explaining, if that makes sense?

-

@olivia I think that's also possibly a cycle of self-justification? No-one wants to *admit* that they're using a tool just because they don't *want* to do a thing (possibly even to themselves).

There was an article on Bloomberg going around today about how a majority of hiring managers admit that they say layoffs are due to "AI" now because it "sounds good" rather than because it's true (versus "we needed to cut costs"). I think much of the discourse about "why we use AI" is riven by the same lack of transparency for motivation by the adopters.

-

@aoanla money top-down also let's not forget

-

@urlyman it was a rhetorical hook, see next post below

-

@aoanla @olivia the Bloomberg article

https://mas.to/@carnage4life/116232921533312412
