Please excuse me while I'm having a little existential crisis, lol.

-

@lispi314 I kind of seriously doubt that

-

@lumi @nina_kali_nina @tragivictoria we're gonna have to get so much louder and more annoying about this

people who i followed on twitch cause i thought they were cool are now slop sludging *jam games*

i genuinely think bullying sloperators is morally correct. their excuses are always such slop too, they're liars cause they'll never admit they just wanna do the bad thing and don't have a singular fuck to give about the world around them

@outfrost @lumi @tragivictoria maybe "bullying" isn't right but boycotting definitely is

-

@nina_kali_nina ... WTF do we do?

Stay alive until the subsidies run out and it's too expensive to lean on the de-skilling machines any longer?

@bstacey and boycott the slop sources as much as is personally feasible in the meantime, yeah. And celebrate human projects

-

@nina_kali_nina I’m considering giving up on programming altogether tbh. Why bother making more training data for these assholes?

@cinebox I think about it too, but I'd stay for humans who need the software

-

@nina_kali_nina i used to find it sad. Then I got a bit angry. But now i just laugh. I laugh at the foolishness, the utter carelessness.

Because that's all I can do without snapping completely. Well, I have already snapped. But you know.

I will continue to move slow and fix things. Let the hypefools ruin their codebases I guess.

@aks thank you

-

@nina_kali_nina @bstacey I do think we need a "web of human trust" of some sort.

Instead of default-open systems where we ban bad actors, we will have to move to default-closed systems where we explicitly allow people trusted by current members of a given community.

-

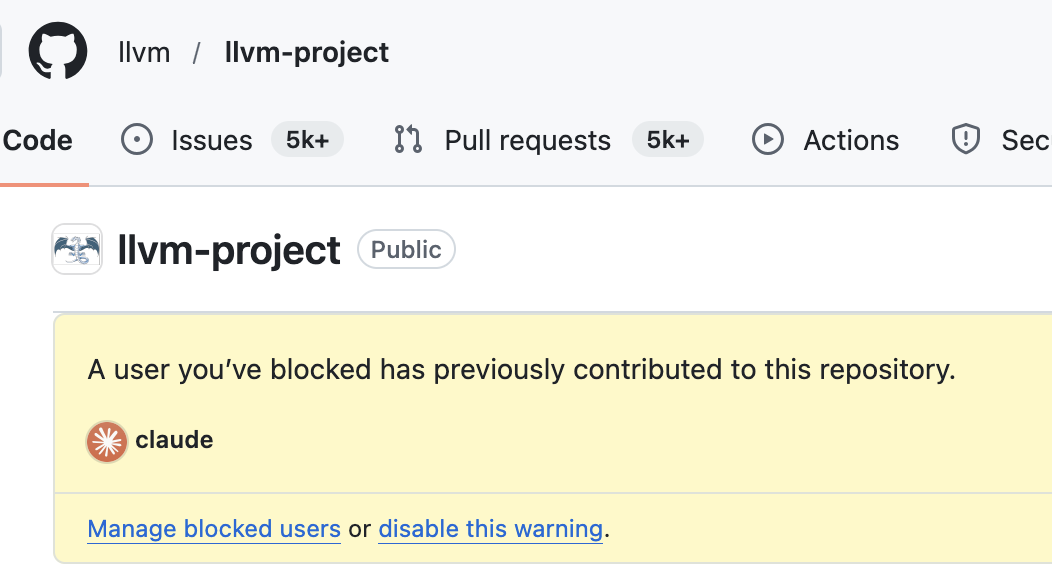

@moses_izumi @lumi @nina_kali_nina Yeah, although given the level of LLM contamination across architectural FOSS projects, part of me is also like "Wow, Plan9 and other fully-independent self-hosted systems are looking a lot more interesting now".

(Like, it makes me wonder how independent Genode is as well.)

@lanodan@queer.hacktivis.me @moses_izumi@fe.disroot.org @lumi@snug.moe @nina_kali_nina@tech.lgbt very interesting side-effect of this whole clusterfuck: writing my own software becomes much more enticing than it already was.

really tempted to go back to my osdev projects. i won't get a general-purpose OS out of them, but. who cares! maybe I can make a bunch of single-purpose things

and maybe i should actually try out plan9... their propaganda page already sold me

-

@nina_kali_nina I'd never want the world I knew to stay static. Change is inevitable. I don't think it's ever worth mourning the past for the past's sake.

But, you say existential crisis, is your problem because it is good or because it's bad?

I assume the latter (I still can't get AI to complete simple tasks correctly the majority of the time).

@JigenD big tech is undermining human connection and labour solidarity, while getting richer. That is nothing new, of course, but it's happening faster than ever

-

@nina_kali_nina i don't know about these projects in particular, but not all llm use is automatically bad

@alreadydeadxd that is either sophistry or depends on one's moral values, though.

-

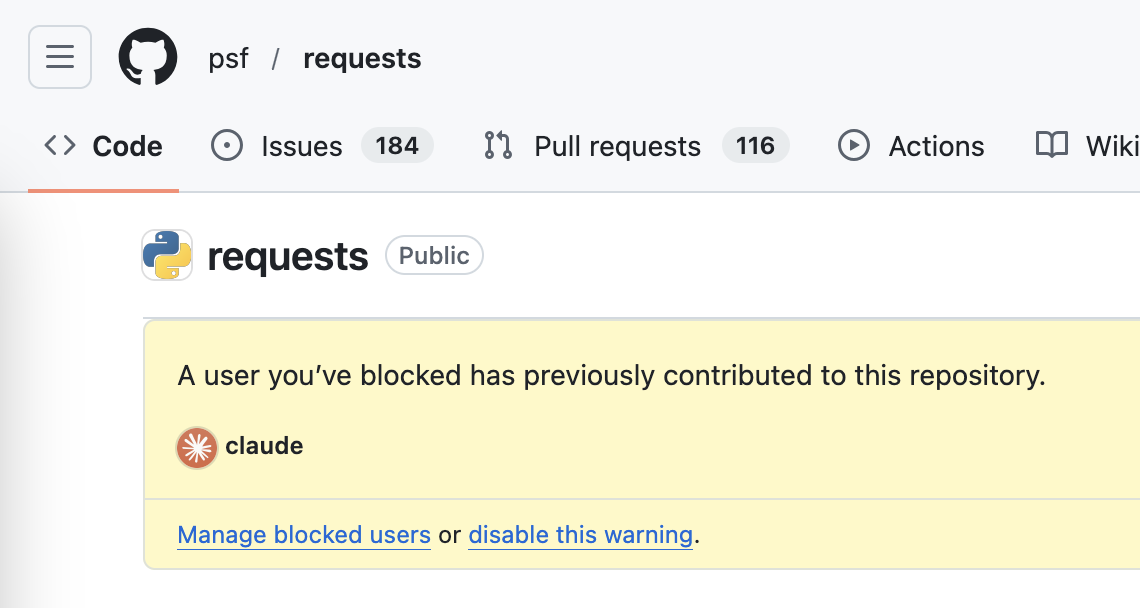

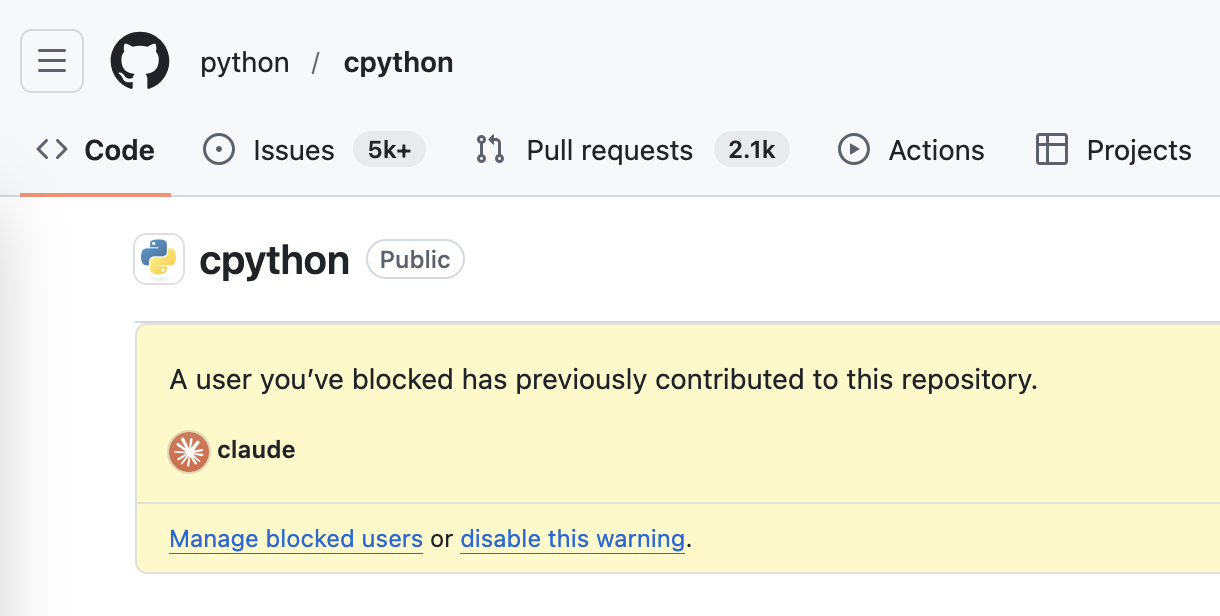

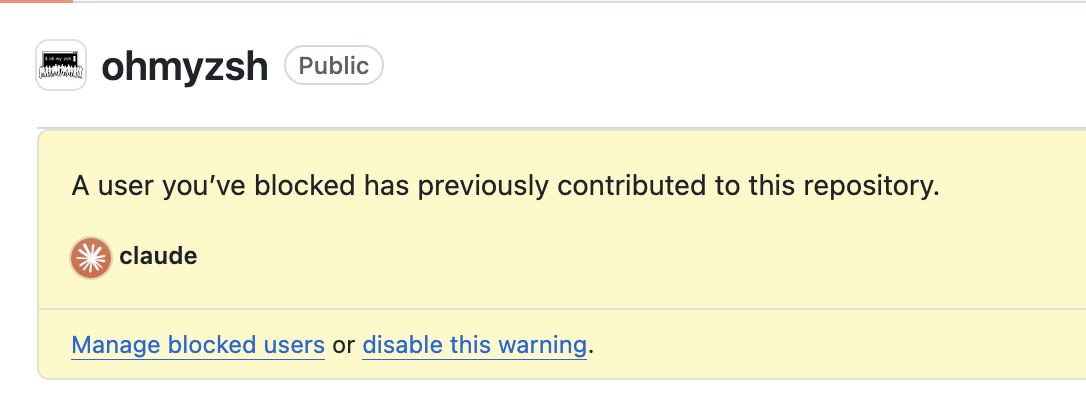

@nina_kali_nina not sure if this helps or makes it worse, but I'm not actually sure the blocked-user trick is reliable. On some projects I got this warning and spent a few hours looking through commits without finding anything. And I did notice that it counts things as involving Copilot even if it didn't do anything real. For example, someone who wasn't a maintainer had opened a pull request and unintentionally (according to them) asked for a Copilot review. That doesn't affect the project, but it shows up in filters.

So I don't know if I can actually trust this to tell what's AI-touched and what isn't.

@lunathemoongirl it's not super reliable, but it is generally reliable. Consider that Python for example has a policy that allows AI usage: https://blog.miguelgrinberg.com/post/llm-use-in-the-python-source-code

-

@nina_kali_nina not to get conspiratorial, but I guess companies like Microsoft also have an incentive to make you think everything is made by AI and to make it impossible to tell.

The Claude user profile itself also has no commits, but it shows up as an author in, like, chardet. So idk, it does seem to have wonky special rules. And maybe it gets into projects by being associated with commits in some pull request, and the maintainers couldn't tell?

I mean, harfbuzz and chardet are very clear, but the others, less so.

@lunathemoongirl the detection is based on the commit authors. If you review a PR, you'll see who the author is. I understand that it is in big tech's interest to sneak it into as many places as possible, sure, but I've only seen the "embrace, extend, extinguish" practice and not "oh boy, that was AI? We gotta be careful in PR reviews next time"

-

@nina_kali_nina I hear you. But consider this: like any other profession that creates things, you can divide it into industrial and artisanal. You can produce stuff, or craft stuff. For software, this has just become more real, more relevant and more visible. You and some other gifted ones will remain artisans, crafting beautiful things. Software giants will spit out crappier software.

@loredema it is a tragedy for, well, pretty much everyone. Software will be worse, end users will have a poorer experience at best, and rich folks will get richer while shipping systems that proliferate racism, sexism, and so on. And probably worst of all, trust in the software chain will be destroyed.

-

@lispi314 I'm not sure, but all these projects have AI authored commits in master

-

@enigmatico "not working" is subjective in the eyes of people making decisions, unfortunately.

but yeah, it seems people making decisions about critical systems need to be far more selective in what can be blindly trusted from now on

-

@nina_kali_nina @bstacey it's not going to be a single project. In some cases it is going to be as simple as changing settings in existing software – like flipping fedi instances from blocklists to allow-lists.

Code foundries also already have affordances useful for this.

This is pretty fscking sad, but we've been feeding Big Tech with our code, with our blogposts, with our shitposts, for so long and what we got back from it is *gestures at everything*…

-

@rysiek @nina_kali_nina @bstacey the issue with that is it doesn't really work for anything online, where a lot of the time you kinda just stumble upon something by chance and get involved. I for one sure as hell didn't have anyone to introduce me to most of the communities I've been a part of over the years; I simply joined out of the blue and slowly became a part of them.

@lispi314 @bstacey @rysiek I'm a bit privileged here; you can imagine a worse situation. But I started my open-source journey back in 2005 from a deeply rural place. I didn't know a single person who coded, and my best bet at learning about the community was going to libraries, sending letters (at first it was letters, not emails), and visiting large cities for events (8 hours by bus one way!). It's not what we're used to, but it used to work and maybe it can work again.