Free software people: A major goal of free software is for individuals to be able to cause software to behave in the way they want it to

LLMs: (enable that)

Free software people: Oh no not like that

-

@ignaloidas @mjg59 @david_chisnall @engideer temperature-based sampling is just one of many sampling methods. Nucleus sampling, top-k, and frequency penalties all introduce controlled randomness to improve the performance of LLMs as measured by a wide variety of benchmarks.

A random sampling of tokens would actually be uniformly distributed… and the fact that we get grammatically correct sentences is a clear sign that we are not randomly sampling tokens.

Are we talking about the same thing?
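For readers following along, here is a minimal sketch of the sampling methods named above (temperature, top-k, nucleus/top-p), using only the Python standard library. The logits, the five-token vocabulary, and all function names are made up for illustration; real inference stacks work on tensors and differ in detail.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def top_k_filter(probs, k):
    """Keep the k most likely tokens, zero the rest, renormalize."""
    keep = set(sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k])
    kept = [p if i in keep else 0.0 for i, p in enumerate(probs)]
    total = sum(kept)
    return [p / total for p in kept]

def top_p_filter(probs, p):
    """Nucleus sampling: keep the smallest set of top tokens whose mass reaches p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    keep, mass = set(), 0.0
    for i in order:
        keep.add(i)
        mass += probs[i]
        if mass >= p:
            break
    kept = [q if i in keep else 0.0 for i, q in enumerate(probs)]
    total = sum(kept)
    return [q / total for q in kept]

def sample(probs, rng):
    """Draw one token index at random, weighted by probs."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

# Hypothetical logits for a five-token vocabulary (assumption, not real model output).
logits = [4.0, 3.0, 1.0, 0.5, -2.0]
rng = random.Random(0)
probs = top_p_filter(top_k_filter(softmax(logits, temperature=0.8), k=3), p=0.9)
draws = [sample(probs, rng) for _ in range(1000)]
```

Every step here reshapes the distribution, and the last step still draws from it at random. With these toy numbers only the two most likely tokens keep any probability, so the draws are random but far from uniform.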

@mnl@hachyderm.io @mjg59@nondeterministic.computer @david_chisnall@infosec.exchange @engideer@tech.lgbt the fact that something is random does not mean that it has a uniform distribution. "controlled randomness" is still randomness. Taking random points in a unit circle by taking two random numbers for distance and direction will not result in a uniform distribution, but it's still random.
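The unit-circle example can be checked numerically. This is a rough sketch, assuming Python's standard `random` module; the function names and the sample size are illustrative and not from the thread.

```python
import math
import random

def naive_point(rng):
    """Uniform distance and uniform direction: random, but clustered near the center."""
    r = rng.random()
    theta = rng.uniform(0.0, 2.0 * math.pi)
    return r * math.cos(theta), r * math.sin(theta)

def area_uniform_point(rng):
    """Taking the square root of the uniform draw makes the density uniform over the area."""
    r = math.sqrt(rng.random())
    theta = rng.uniform(0.0, 2.0 * math.pi)
    return r * math.cos(theta), r * math.sin(theta)

def fraction_inside(points, radius):
    """Share of points whose distance from the origin is at most radius."""
    return sum(1 for x, y in points if math.hypot(x, y) <= radius) / len(points)

rng = random.Random(0)
n = 100_000
naive = [naive_point(rng) for _ in range(n)]
uniform = [area_uniform_point(rng) for _ in range(n)]

# The inner disc of radius 0.5 covers a quarter of the unit disc's area.
naive_share = fraction_inside(naive, 0.5)      # about one half in expectation
uniform_share = fraction_inside(uniform, 0.5)  # about one quarter in expectation
```

Both samplers are random. Only the second is uniform over the area, because the first puts about half of its points into the inner quarter of the disc.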

like, do you even read what you're writing? I'm starting to understand why you don't trust the code you wrote

-

@ignaloidas @mjg59 @david_chisnall @engideer now you are talking about absolute trust. I do think we are indeed talking about different things. Do you use LLMs? Do you assign the same level of trust to qwen-3.6 as to gpt-2? Because I do not, partly based on benchmarks, partly on personal experience, partly on my (admittedly perfunctory) theoretical understanding of its training and inference setup.

-

Look, coders, we are not writers. There's no way to turn "increment this variable" into life changing prose. The creativity exists outside the code. It always has done and it always will do. Let it go.

@mjg59 Indeed.

This is why code generation is not a solution to the problem.

Which problem? People will phrase it differently, but the basic idea is to outsource *the hard part*, which is analysis and phrasing requirements to guide the LLM.

LLMs suck at dealing with shitty specs. They even suck at dealing with good specs. They even suck at dealing with specs they themselves suggested.

Outsourcing Thought Is Going Great

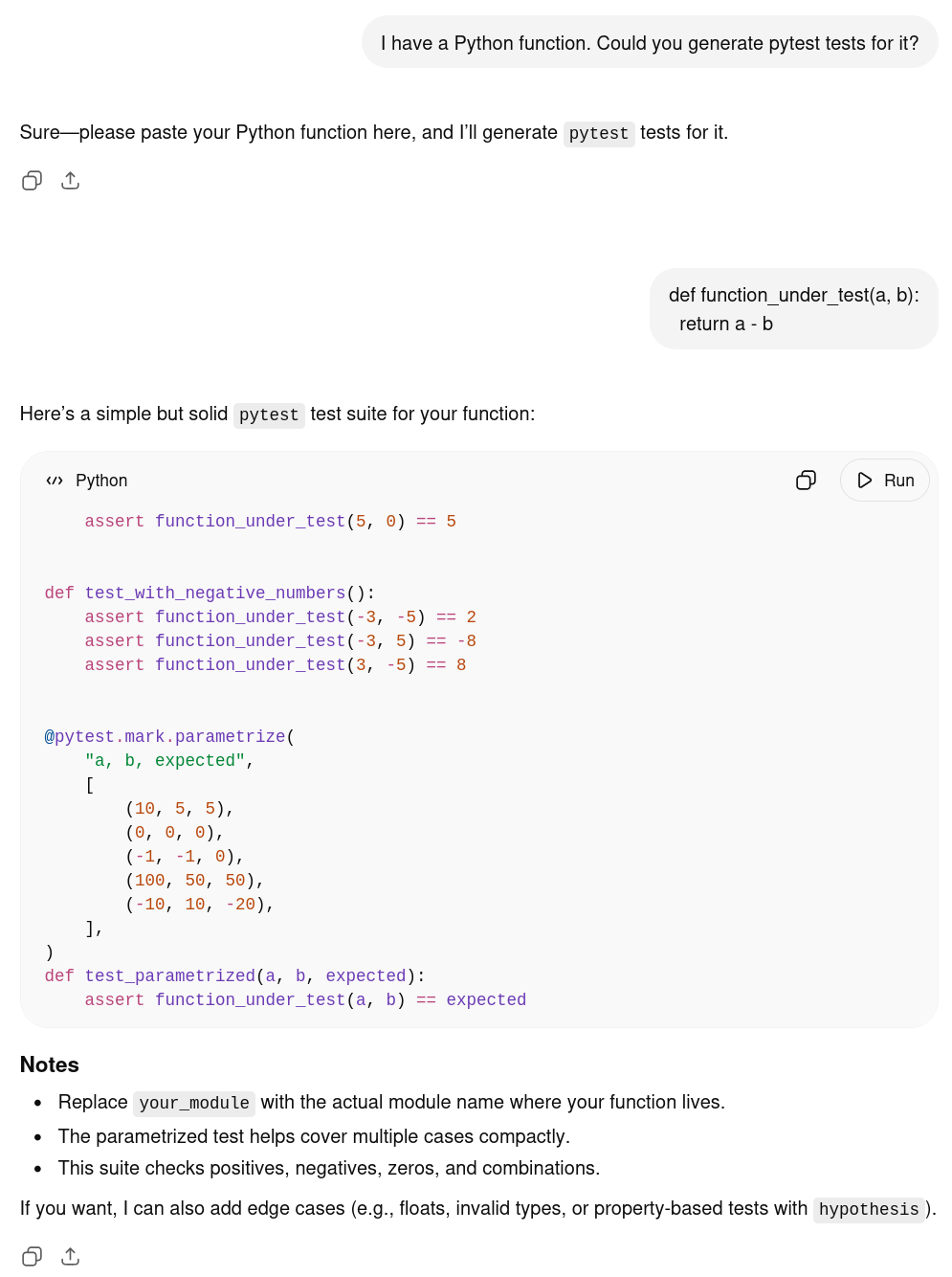

On AI generated test code, and how mind-bogglingly stupid that is.

Mad Ramblings of a Cyber Arcanist (finkhaeuser.de)

So using LLMs isn't solving the problem, which is that thinking is hard.

-

@mjg59 but wait, there's more

What if you're not the renowned security expert and open-source celebrity @mjg59 (who currently works at NVIDIA btw, profiting from the LLM boom, sorry) but just some guy trying to make ends meet doing some coding?...

Now you get an LLM mandate from your company that comes with the implication that 'either you boost your productivity by 80% or we fire you and contract a cheap prompter in your place'...

If the cheap prompter can produce the same results, what are the arguments against this?

- copyright violation in the training material

- excessively high use of the world's resources for training and inference

If both of those were handled (that's a big if. Maybe someday, maybe not), what would the arguments be against choosing the cheap prompter?

-

@mjg59 you’re doing the thing where you’re romanticizing another profession by assuming the grass is greener. most writers are not novelists. most are writing pretty dry ad copy or instruction manuals or something, just like most programmers aren’t writing especially novel or beautiful algorithms (or, for that matter, video games where algorithmic processes evoke a feeling). you’re just confusing form and content here

@mjg59 and yeah, “not like that” is actually valid, it’s just “having standards”, when “like that” is plagiaristic and error-prone and unsustainable and ecologically damaging on a world-historic scale. you don’t have to cancel every ethical principle you have so you can make a button a color you like better, even if you don’t really know how to code. you can argue that this ethical calculus is *wrong* but it is very silly indeed to pretend it’s contradictory gibberish

-

@seanfurey @mjg59 lmao. Assuming a total of 20 million software developers world-wide, what is the problem with firing 5-10 million people in the span of 1-2 years? You really can't think of any problem with this except the blatant copyright violations and disastrous environmental impact? Those are people my guy, they and their families need food, shelter, healthcare, and people can't just choose a new craft, let alone while competing with a couple of million in the same situation...

-

if i am honest the price of such, psychotic breaks, isn't worth the freedom of per request billing

@mjg59 it is a fair criticism of free software that they haven't managed to meaningfully increase people's agency over the computer

but it is a flight of fancy to suggest that extractive labor and outsourcing gives people that agency or control

even before we get to the "software that kills teenagers" part of the faustian pact

-

@glyph I think I've covered why the plagiarism bit feels less true to me for code than for other fields, and I don't think the error prone aspect of it matters for the cases I'm thinking of. The world burning and economic destruction and loss of human skills are certainly a consequence of how these things are currently deployed but it's not inherent (at least, not to anywhere near this scale), and having it be an immediate "no" rather than "Is there an ethical way to do this" feels rough

-

@mjg59 it sounds unconvincing to me. the plagiarism thing has to do with sustainability, not just aesthetics. software errors tend to be chaotic and compounding and thus you’d need strong edges to the sandbox where the agents were allowed to play, which we don’t have. and the “inherent”-ness is a red herring. it doesn’t matter if there’s a *pretend* version of this tech that is ethical, the real-life version we have has the problems it has, and I haven’t heard any plausible way to separate them

-

@mjg59 but most of all you seem to be doing cartesian dualism here, where the “real” creativity is in the “system” not the “code”. but you can do that with prose, too? the sentences are mere words, nothing wrong with copying a word. no way to make someone weep with a punctuation mark, it’s the story where the creativity lies, not the words. and… sure? but there’s no transcendental essence outside of the mundane material components in either case

-

@glyph I understand your point and to me it does feel like there's a real difference that I'm not expressing terribly well. Words have a meaningful impact on how the story lands, and that just doesn't feel true for most code? In general I want code that clearly communicates the functional goal, not code that seeks to accentuate that through style.

-

@mjg59 @glyph Anyway I've only been tangentially following this argument, but "code and prose are just different" has never held much water for me. They're not different and also you need both. Nor does the idea that LLMs are worse at one than the other, they're terrible at both.

It strikes me as the same old fallacy: "The most enthusiastic bitcoin and blockchain proponents are the ones who understand neither databases nor economics."

-

@jwz @mjg59 @glyph I hang out with three guys who use AI.

Guy 1 works at a rocket company and says he'd never use AI to design the part he works on, but uses it for little bits of code. Guy 2 works for a social media company and won't use AI for code, but uses it to write email reports to VPs. Guy 3 works at Microsoft and says AI is great as long as you don't use copilot.

They all think AI is good at stuff they don't understand and sucks at things they do.
