People get mad when you call LLMs "spicy autocomplete" but my investigations into recreating and implementing small versions of this tech make me think that nick name is very accurate.

-

@futurebird even this is perhaps being too generous to the models, since in the process of generating a "response" they are playing the same game with themselves:

add a token, then that's the new input, figure out the next token to continue *that* sequence, and so on until it's time to stop (which is just a special sort of token)

what kind of token do you men here?

-

what kind of token do you men here?

@futurebird @SnoopJ a token is, basically, a word (not literally, input gets tokenized by a tokenizer). I seem to recall you have some programming experience...it's like a tokenizer in a programming language, where in BASIC the PRINT command might turn into a number that is a compact representation of the function call for PRINT.

The tokenizer splits and compacts sentences for ingestion into the model. And on output, it estimates the most likely next token based on all prior tokens.

-

@futurebird @SnoopJ a token is, basically, a word (not literally, input gets tokenized by a tokenizer). I seem to recall you have some programming experience...it's like a tokenizer in a programming language, where in BASIC the PRINT command might turn into a number that is a compact representation of the function call for PRINT.

The tokenizer splits and compacts sentences for ingestion into the model. And on output, it estimates the most likely next token based on all prior tokens.

@futurebird @SnoopJ but, reading the rest of the thread, it sounds like you already know that!

-

what kind of token do you men here?

@futurebird the "bit of arbitrary-length text that turns into an integer ID" sort that allow the model's guts to work with convenient mathematical primitives, but still get back to text in the end. Any body of text is a sequence of some number of tokens.

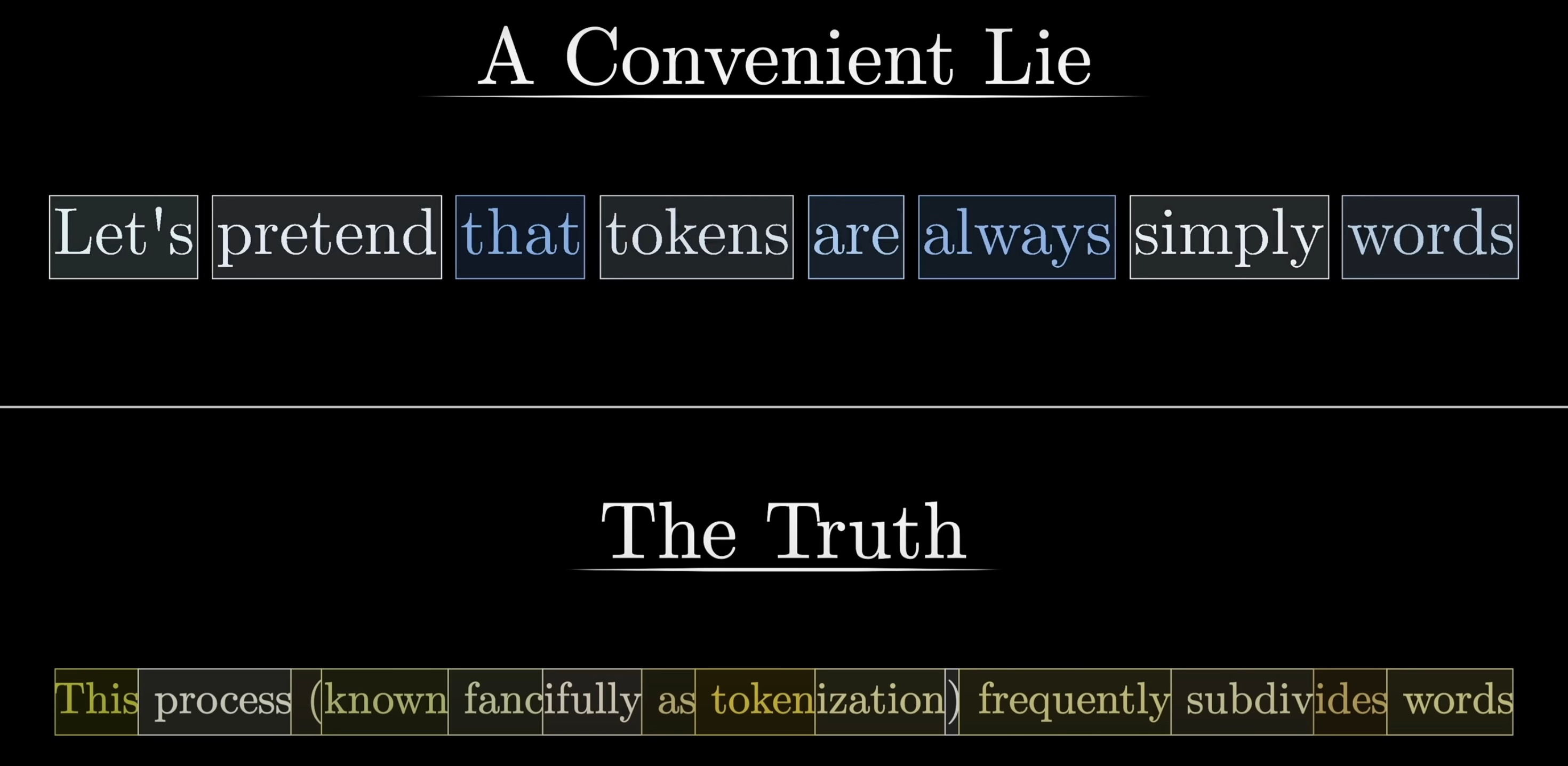

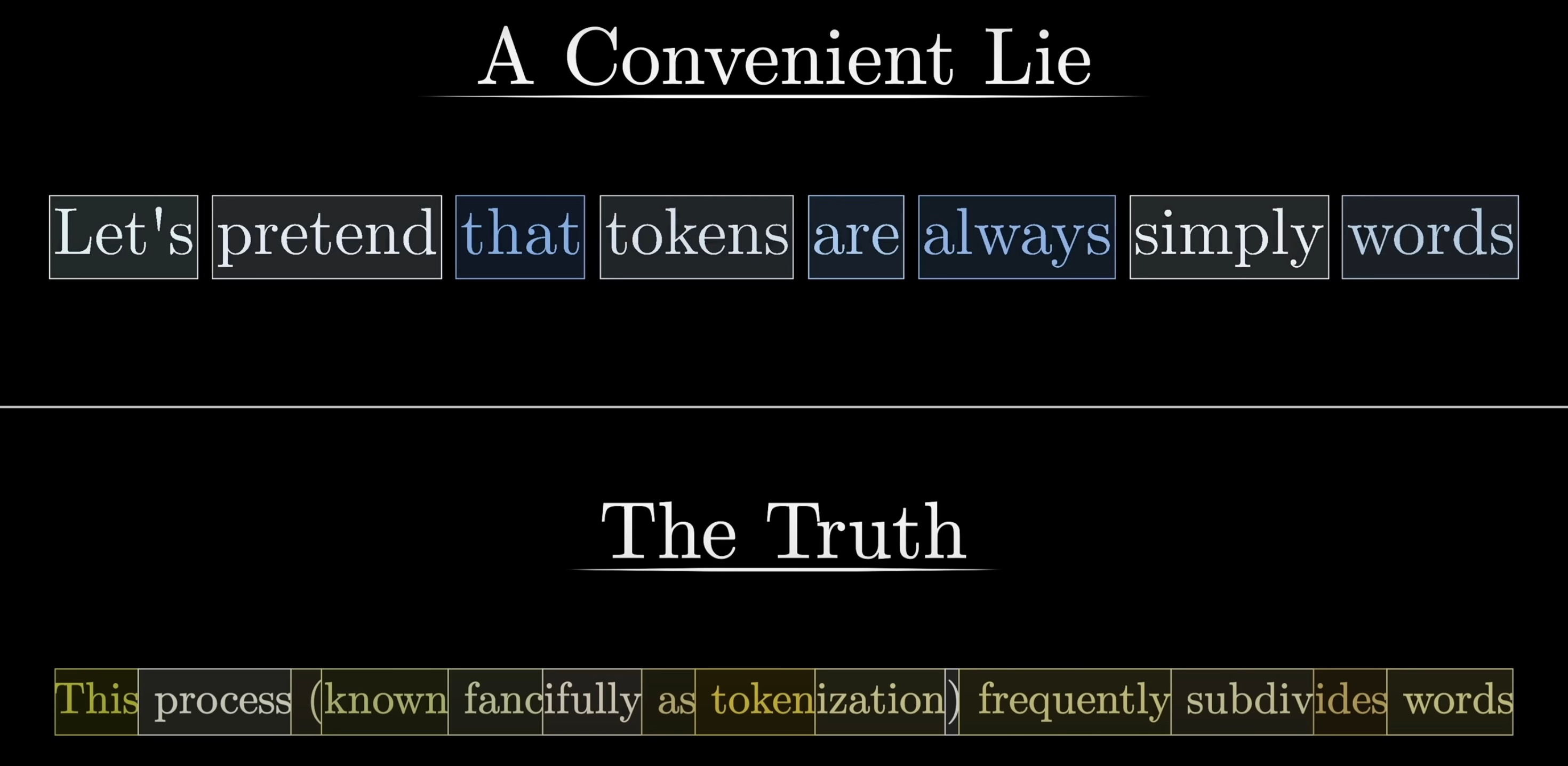

In particular, tokens are *not* typically along word boundaries. 3blue1brown in his series uses this for convenience's sake, but points out that it's a lie with a handy little visual demonstration (attached).

OpenAI provide a little UI for exploring tokenization, although it's changed bit over the years:

https://platform.openai.com/tokenizer

Aa bit of a bias towards token boundaries being on English word boundaries in the newest generation, it seems, but it's all the same idea, and I'm sure it works differently in other languages because of the usual anglophone bias.

-

@futurebird the "bit of arbitrary-length text that turns into an integer ID" sort that allow the model's guts to work with convenient mathematical primitives, but still get back to text in the end. Any body of text is a sequence of some number of tokens.

In particular, tokens are *not* typically along word boundaries. 3blue1brown in his series uses this for convenience's sake, but points out that it's a lie with a handy little visual demonstration (attached).

OpenAI provide a little UI for exploring tokenization, although it's changed bit over the years:

https://platform.openai.com/tokenizer

Aa bit of a bias towards token boundaries being on English word boundaries in the newest generation, it seems, but it's all the same idea, and I'm sure it works differently in other languages because of the usual anglophone bias.

@futurebird that is: a large language model is (usually):

1) A pile of known tokens

2) Learned relationships between tokens, in-context (i.e. accounting for long-distance correlations (like "the last paragraph") and many of them)

3) The machinery to emit selections from (1) in a way that tries to obey the relationships learned from (2) -

@futurebird apologies for pedantic-quibbling on your thread twice, but…

LLMs in their platonic form are not weighted in this manner, they are exactly as you have imagined here: they reproduce the statistical distribution of tokens in their training corpus.

If you've never played around with GPT-2 or GPT-3 (from the era before we had GPT-3.5 and from there "ChatGPT"), they often would do *precisely* this sort of direct, non-conversational continuation. You could feed in a sentence or two and get "autocomplete", or you could feed in `<html><body><span>Lorem ipsum` and get a plasuible-looking continuation of an HTML document (or whatever)

Once "Chat" models (and the paradigm shift to RLHF to "fine-tune" model performance) showed up, we started seeing the conversational pattern. I don't know the details there, but there is definitely a distinct line between when we first started seeing "LLMs" and when we started seeing models arranged explicitly around a conversational format.

@SnoopJ @futurebird It's pretty straightforward to play with "raw" LLMs, eg. with ollama or llama.cpp.

BTW If we're being pedantic "they reproduce the statistical distribution of tokens in their training corpus" isn't quite right. Inductive bias is crucial otherwise the model grinds to a halt on novel inputs. (And I'd really like to know what it looks like when you do this but I don't have the resources to find out.)

-

@SnoopJ @futurebird It's pretty straightforward to play with "raw" LLMs, eg. with ollama or llama.cpp.

BTW If we're being pedantic "they reproduce the statistical distribution of tokens in their training corpus" isn't quite right. Inductive bias is crucial otherwise the model grinds to a halt on novel inputs. (And I'd really like to know what it looks like when you do this but I don't have the resources to find out.)

@dpiponi @futurebird I should probably have said *attempt* to reproduce

But as you say, novel inputs can be quite tricky, as in the case of the "glitch tokens" of GPTs gone by: https://www.vice.com/en/article/ai-chatgpt-tokens-words-break-reddit/

At the time, they slapped a band-aid on and just fell back onto a generic "an error has occurred" response and no generation if one of those tokens was input. I don't know what the purported solution is to the same problem today, aside from "whatever it is, it's probably rubbish and involves a lot of lying"

-

@dpiponi @futurebird I should probably have said *attempt* to reproduce

But as you say, novel inputs can be quite tricky, as in the case of the "glitch tokens" of GPTs gone by: https://www.vice.com/en/article/ai-chatgpt-tokens-words-break-reddit/

At the time, they slapped a band-aid on and just fell back onto a generic "an error has occurred" response and no generation if one of those tokens was input. I don't know what the purported solution is to the same problem today, aside from "whatever it is, it's probably rubbish and involves a lot of lying"

@dpiponi @futurebird annoyingly, the LessWrong write-up linked to that 'SolidGoldMagikarp' work is actually quite good, but in the time since that research was published there has been similar research published in more uhhh reputable places, e.g. https://dl.acm.org/doi/full/10.1145/3660799

-

@futurebird@sauropods.win I honestly think (unpopular opinion here) that most of the cost of LLM-based AI thus far is in ‘training’. Not training as in running the phenomenal amount of harvested stolen text and image input through tokenisation processes and reward giving through weight assignment and vector assessment, using more GPUs than exist on Earth, but rather, lots and lots and lots of money paying humans to fake it all and build in patches – patch after patch on top of patch of corrective behaviour, encoded themselves as vector weights. The training had nothing much to do with running it all through GPUs, I believe that probably took an embarassing but totally affordable amount of time and energy. I believe (with no visible means of factual reference to cite) that most of the expenditure of these capital-burning companies was ‘training’ by paying humans and then encoding their resulting guidance. Paying workers.

@u0421793 @futurebird Yes. Or getting humans to do that labor for no pay.

-

Thus the training data didn't just contain text, but rather text where each passage is tagged and attributed to a particular user.

This aspect of the training data was critical in creating the illusion of talking to another person.

An LLM doesn't just predict the next text. It predicts the next text that might come from another user. You need to hard code this in to make it work well.

Leave it out and there is no conversation.

@futurebird Do you have references explaining how the recent LLMs have been trained to do this?

I'd be interested in understanding what this training methodology looks like in detail.

I didn't actually think this "conversational training" was necessary, as I thought the chat-bots were just told 'you are a chat bot, pretend to have a conversation, put your output directly in the next line. Here is the user's first inputs: "It's a lovely day"' Or something like that.

-

R relay@relay.mycrowd.ca shared this topic