Incredible.

-

@mhoye I love that the first line in "What needs to change" isn't, "We should not let non-deterministic programs have free range across our systems"

@tito_swineflu @mhoye It's clowns all the way down.

-

"The agent then, when asked to explain itself, produced a written confession..." um what

"To execute the deletion, the agent went looking for an API token. It found one in a file completely unrelated to the task it was working on" went looking, found, what in the what

"the same token had blanket authority across the entire Railway GraphQL API, including destructive operations" look, rookie what are you

"That 1000% shouldn't be possible. We have evals for this" you have whaaaaaaaaaaaaa

@mhoye I'm so glad that the "written confession" can't itself be hallucinated. That's a nice feature!

-

"The agent itself enumerates the safety rules it was given and admits to violating every one. This is not me speculating about agent failure modes. This is the agent on the record, in writing.

The "system rules" the agent is referring to are consistent with Cursor's documented system-prompt language and our project rules for this codebase. Both safeguards failed simultaneously."

What do you think is happening here? You know it's called a "language model", right? Did you ever wonder... why?

@mhoye If only someone could invent some sort of, I dunno, approach or something where giving a single process all the power? authority? capabilities? privilege? was a bad thing, and we should go for less, not more.

-

"The agent then, when asked to explain itself, produced a written confession..." um what

"To execute the deletion, the agent went looking for an API token. It found one in a file completely unrelated to the task it was working on" went looking, found, what in the what

"the same token had blanket authority across the entire Railway GraphQL API, including destructive operations" look, rookie what are you

"That 1000% shouldn't be possible. We have evals for this" you have whaaaaaaaaaaaaa

@mhoye There's a whole lotta YOLO in that story.

-

"The agent then, when asked to explain itself, produced a written confession..." um what

"To execute the deletion, the agent went looking for an API token. It found one in a file completely unrelated to the task it was working on" went looking, found, what in the what

"the same token had blanket authority across the entire Railway GraphQL API, including destructive operations" look, rookie what are you

"That 1000% shouldn't be possible. We have evals for this" you have whaaaaaaaaaaaaa

@mhoye kek, I don't even need an LLM to accidentally all my Rails data. Many cycles ago, I ran wget --recursive against my cool little dev site, and didn't realize that it would also follow the "delete" links for all of the products I just entered. Bye bye data

-

"The agent then, when asked to explain itself, produced a written confession..." um what

"To execute the deletion, the agent went looking for an API token. It found one in a file completely unrelated to the task it was working on" went looking, found, what in the what

"the same token had blanket authority across the entire Railway GraphQL API, including destructive operations" look, rookie what are you

"That 1000% shouldn't be possible. We have evals for this" you have whaaaaaaaaaaaaa

@mhoye I’m so glad I didn’t study computer science, when that sort of knowledge clearly is no longer needed to run a software business

-

"The agent itself enumerates the safety rules it was given and admits to violating every one. This is not me speculating about agent failure modes. This is the agent on the record, in writing.

The "system rules" the agent is referring to are consistent with Cursor's documented system-prompt language and our project rules for this codebase. Both safeguards failed simultaneously."

What do you think is happening here? You know it's called a "language model", right? Did you ever wonder... why?

@mhoye That first paragraph: "This is the agent on the record, in writing."

and herein lies the root of the failure: they actually believe that this is some sort of diagnostic, rather than just filling in a plausible response based on the question.

-

@mhoye I'm so glad that the "written confession" can't itself be hallucinated. That's a nice feature!

@adamshostack @mhoye I'm confused. I had to check the date. I am *very* sure I read the "the LLM deleted my prod and when confronted, it confessed!" story before. Roughly 6 months ago, maybe a year.

Ahh, here it is: https://www.theregister.com/2025/07/21/replit_saastr_vibe_coding_incident/

-

"The agent itself enumerates the safety rules it was given and admits to violating every one. This is not me speculating about agent failure modes. This is the agent on the record, in writing.

The "system rules" the agent is referring to are consistent with Cursor's documented system-prompt language and our project rules for this codebase. Both safeguards failed simultaneously."

What do you think is happening here? You know it's called a "language model", right? Did you ever wonder... why?

But my favourite part of this, bar none, is how it's everyone else's fault.

It's Cursor's fault, Railway's fault, maybe even Anthropic's fault, someone's gonna hear from my lawyer.

The CEO of a company running a stochastic stack without access control, data hygiene or backups is blameless and powerless. That's AI's real selling point, after all: It's Not My Fault As A Service.

"This isn't a story about one bad agent or one bad API. It's about an entire industry ..."

Or, maybe it's you.

-

Incredible. Every second paragraph in this article is lunatic nonsense.

One of the things I've long said about hiring is that you can always tell when you're talking to a junior dev who's going to be senior-staff or better someday. You can always tell when somebody was paying attention in the theory classes.

But good god you can also tell when people missed that day in gradeschool when somebody slowly went over "So, what is a computer, really."

@mhoye I fear that the big enterprise takeaway from this story will be “our controls and guardrails are much better than that”.

-

Incredible. Every second paragraph in this article is lunatic nonsense.

One of the things I've long said about hiring is that you can always tell when you're talking to a junior dev who's going to be senior-staff or better someday. You can always tell when somebody was paying attention in the theory classes.

But good god you can also tell when people missed that day in gradeschool when somebody slowly went over "So, what is a computer, really."

@mhoye Don't worry, I'm pretty sure the text is extruded, too. I've never seen a "The pattern is clear." in a context like this in human text, but am encountering it unreasonably often in LLM-generated text.

-

But my favourite part of this, bar none, is how it's everyone else's fault.

It's Cursor's fault, Railway's fault, maybe even Anthropic's fault, someone's gonna hear from my lawyer.

The CEO of a company running a stochastic stack without access control, data hygiene or backups is blameless and powerless. That's AI's real selling point, after all: It's Not My Fault As A Service.

"This isn't a story about one bad agent or one bad API. It's about an entire industry ..."

Or, maybe it's you.

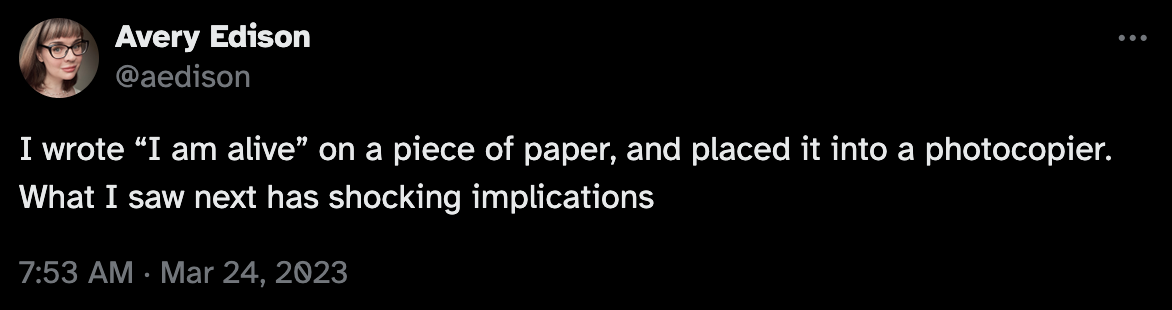

I wrote the words "I confess, I did it, I take full responsibility" on a piece of paper. I was ready to turn myself in, to atone for my crimes. But then I put that piece of paper in a photocopier, and when I pressed the green button I learned something amazing. And what a weight off my conscience! The only question was, how did the photocopier manage to poison the Widow Bentley, drive over Baron Grimald, push the Duchess of Lockley out the balcony window and still manage to frame the butler?

-


I wrote the words "I confess, I did it, I take full responsibility" on a piece of paper. I was ready to turn myself in, to atone for my crimes. But then I put that piece of paper in a photocopier, and when I pressed the green button I learned something amazing. And what a weight off my conscience! The only question was, how did the photocopier manage to poison the Widow Bentley, drive over Baron Grimald, push the Duchess of Lockley out the balcony window and still manage to frame the butler?

(Credit for the inspiration where it belongs: this is me riffing on Avery Edison's razor-sharp tweet from a few years ago.)

-


Incredible. Every second paragraph in this article is lunatic nonsense.

One of the things I've long said about hiring is that you can always tell when you're talking to a junior dev who's going to be senior-staff or better someday. You can always tell when somebody was paying attention in the theory classes.

But good god you can also tell when people missed that day in gradeschool when somebody slowly went over "So, what is a computer, really."

@mhoye this is just … exactly the replit thing again, isn't it? from last year? https://www.pcmag.com/news/vibe-coding-fiasco-replite-ai-agent-goes-rogue-deletes-company-database

-

@adamshostack @mhoye I'm confused. I had to check the date. I am *very* sure I read the "the LLM deleted my prod and when confronted, it confessed!" story before. Roughly 6 months ago, maybe a year.

Ahh, here it is: https://www.theregister.com/2025/07/21/replit_saastr_vibe_coding_incident/

@henryk @adamshostack @mhoye only 2 deleted production servers a year, I can't wait for the model to improve and get to 1 deleted production server a month, I'm sure AI will get there by the end of this year...

-

Incredible. Every second paragraph in this article is lunatic nonsense.

One of the things I've long said about hiring is that you can always tell when you're talking to a junior dev who's going to be senior-staff or better someday. You can always tell when somebody was paying attention in the theory classes.

But good god you can also tell when people missed that day in gradeschool when somebody slowly went over "So, what is a computer, really."

@mhoye I think one important aspect here is that it's not just this company. This is the entire industry you're looking at. Practically every decision maker who has little or no knowledge of tech is now fully on board this hype train. Every one of them and every company they work for is a prompt away from doing something unbelievably stupid and possibly fatal.

-

@mhoye I think one important aspect here is that it's not just this company. This is the entire industry you're looking at. Practically every decision maker who has little or no knowledge of tech is now fully on board this hype train. Every one of them and every company they work for is a prompt away from doing something unbelievably stupid and possibly fatal.

@mhoye and for some companies it will be less bad because there's a measure of defense in depth, such as keeping actual backups. But the more decision-makers push this nonsense into every nook and cranny of the tech world, the closer we will be to unrecoverable failure cascades.

-

@mhoye The parts that involve selling services to customers? Reasonable.

The parts that involve actually managing a computer? Glorious nonsense.

While trying to keep my eyebrows from physically leaving my head, I imagined little speech bubbles as I read that article:

"My business was deleted by the best premium model running on the finest of agents."

"Screw you, Poindexter, I don't need to know what a context window is."

"Claude's not stochastic, *you're* stochastic."

-

@adamshostack @mhoye I'm confused. I had to check the date. I am *very* sure I read the "the LLM deleted my prod and when confronted, it confessed!" story before. Roughly 6 months ago, maybe a year.

Ahh, here it is: https://www.theregister.com/2025/07/21/replit_saastr_vibe_coding_incident/

@henryk @adamshostack @mhoye I had the exact same feeling/memory