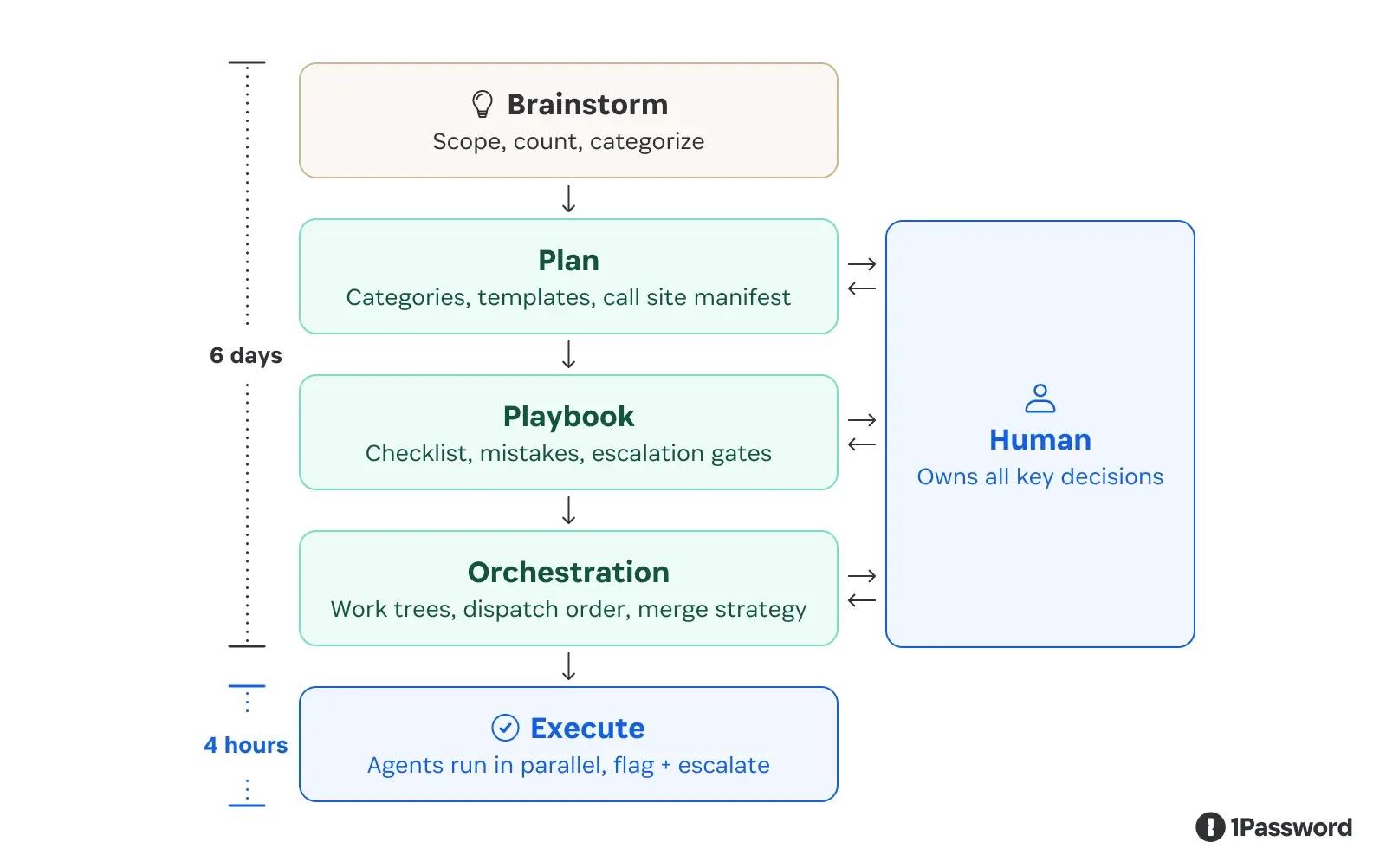

"The pattern that works is using agents to produce deterministic artifacts, then forcing execution through those constraints."

-

"The pattern that works is using agents to produce deterministic artifacts, then forcing execution through those constraints." Tido Carriero, VP of Engineering at Cursor.

At 1Password, we applied agentic tooling to B5, our multi-million-line Go monolith, to help plan and execute a production refactor. Here's what we learned: https://1password.com/blog/what-we-learned-using-ai-agents-to-refactor-a-monolith

@1password Oh FUCK no.

-

@1password WTF? Just recently I decided to stay and accept your price increase, but THIS is definitely not acceptable.

Just canceled our family plan.

-

@1password In retrospect I'm so glad I didn't shell out for a 1Password account. The product looked solid, but clearly the company cannot be trusted with sensitive information.

-

Actually, a good summary of the lessons. From the business and engineering perspectives, I have a few questions: How do you measure ROI? When is it advantageous for engineers to leverage LLMs, and when would it be more beneficial to hire a new FTE?

Finally, how do you maintain engineer motivation, especially when LLMs can handle a significant portion of their work? And how do you ensure a consistent influx of junior engineers while also fostering their continued learning?

At the end of the day, LLMs are trained on data created by engineers. No engineers left == no data for LLMs to train on.

Compared to others in the comments, I'm actually happy to see how you think about using LLMs within the organization.

-

@1password sounds to me like I need to re-evaluate storing sensitive information with 1Password… disappointing!

-

@1password I'm sad that I can't trust you any more and need to find a new password manager.

At no point in this blog post did I see a serious justification for generating code with a system that you know makes mistakes.

Managerial FOMO isn't actually a good enough justification for your users to accept this.

-

@1password my subscription would be up to renew, but now i have six days to find another password manager. thanks for that, i guess.

-

@1password I was a loyal customer who recommended you to everyone. I read about you in the physical Macworld magazine. But I guess I have to rescind that recommendation and cancel my subscription.

-

@1password I'm pretty sure that you're talking nonsense, because not only do I not understand what this means, but my partner, who is a software developer, also doesn't understand what this means. Telling agents to produce code using fixed seed values in the LLM, I suppose.

Using LLMs to produce code is just a bad idea. But using LLMs to produce code that people trust to be secure is a TERRIBLE idea.

A human CANNOT own all key decisions in the process unless the human is a developer who is free to develop the code independently, and however they see fit. When a developer's LLM use is mandated from the top down, the dev is forced to become 'responsible' for the LLM's mistakes. The dev is 'replaceable' after all, right?

My partner, who is a developer, is cancelling your service. I've never used your service myself, but I'll be warning everyone against you.

-

@1password Pfuh… lucky me that I recently switched to @protonprivacy Pass. However, this now forces me to also cancel my subscription for my family. Yayy now I can move all my family members to something else, that means many hours of explaining and work.

-

@1password I'm so glad I don't depend on your software.

-

Unfortunately, they too are doing this:

GitHub - bitwarden/ai-plugins: AI plugin marketplace. (github.com)

-

@1password

Please don't. As a 10+ year customer, I'm asking you: please don't use genAI / LLMs / "AI" in your product.

-

@1password many posters apparently think otherwise but AI also catches many bugs.

-

@karl @1password Alas, AI hyping and snake oil salesmanship by tech bros, as well as general misuse of AI in software development, have left a very bad taste in everyone’s mouth.

-

Actually, a good summary of the lessons. From the business and engineering perspectives, I have a few questions: How do you measure ROI? When is it advantageous for engineers to leverage LLMs, and when would it be more beneficial to hire a new FTE?

Finally, how do you maintain engineer motivation, especially when LLMs can handle a significant portion of their work? And how do you ensure a consistent influx of junior engineers while also fostering their continued learning?

At the end of the day, LLMs are trained on data created by engineers. No engineers left == no data for LLMs to train on.

Compared to others in the comments, I'm actually happy to see how you think about using LLMs within the organization.

No. LLMs cannot do those jobs; those folks are paid to make software that works right.

-

No. LLMs cannot do those jobs; those folks are paid to make software that works right.

If you're still clinging to the idea that LLMs are a bad tool for engineers, you're going to get left behind.

-

@1password So where do I switch to that does not use LLMs for this? So sad that so much once-great software gets worse these days.

@yatil @1password There's chipass.

ChiPass (codeberg.org)

"KeePassXC asks us to be skeptical of them if we are skeptical of LLMs. This is a convincing argument."

-

@1password Fucking hell. You are using LLM slop code now? Great, now I need to migrate.