AI bros are just loving open source — loving it to death... maybe quite literally!

-

AI bros are just loving open source, loving it to death... maybe quite literally! (Godot being the latest popular example[1])

More and more projects are impacted by floods of bogus AI pull requests and the resulting discussions, stealing precious time and nerves from maintainers who would otherwise be doing actual productive work. More buggy and insecure software (incl. commercial offerings) due to slopcoding, more websites getting attacked daily by AI crawlers in desperate search of any new bits (literally) to add to their already astronomically large training data sets, never mind copyright, other licensing terms or IP laws...

Yet our politicians, regulators, media and even many people in academia are continually shoving these issues, and any other more urgent criticism of this tech (and its real impacts), to the side, always deeming them irrelevant and a distraction from all the "amazing" daily breakthroughs being achieved and from the ridiculous amounts of money being made... Blind FOMO and salivation is all there is, without ever publicly questioning/acknowledging/debating where & from whom this data and its monetary and cultural value have been extracted/stolen... Where is the public framing and balanced discussion of this entire industry as the largest wealth & resource transfer and deskilling exercise in our history? Where are the responsible adults with a modicum of critical thinking and foresight in these rooms of power?

Honestly, I still don't fully understand how we got here, with so many morally weak, corrupt, gullible and quite frankly _unreasonable_ and unqualified people in charge in literally every field which matters for some form of just & healthy society to continue (or rather, to still aim to get there in the first place)...

What a house of cards we're all living in, and the winds are rising...

There are so many techniques in the field of machine learning which are truly outstanding innovations, able to provide genuine quality of life improvements for the sciences, for the arts, for accessibility/disability etc. However, these developments have not been part of the public AI discourse in the past few years (which has been almost exclusively focused on LLMs and has now shifted to their "agentic" facades/wrappers), nor do these techniques require any planetary-scale infrastructure or intellectual/cultural/physical resource theft just to be barely operational... I think it's still important to stress these major conceptual differences, even if it often feels like that train left a long time ago and the language/semantics have been co-opted by now...

-

@toxi It's the topic of the day: https://www.joanwestenberg.com/the-case-for-gatekeeping-or-why-medieval-guilds-had-it-figured-out/

-

@toxi sums it up. back to the woods...

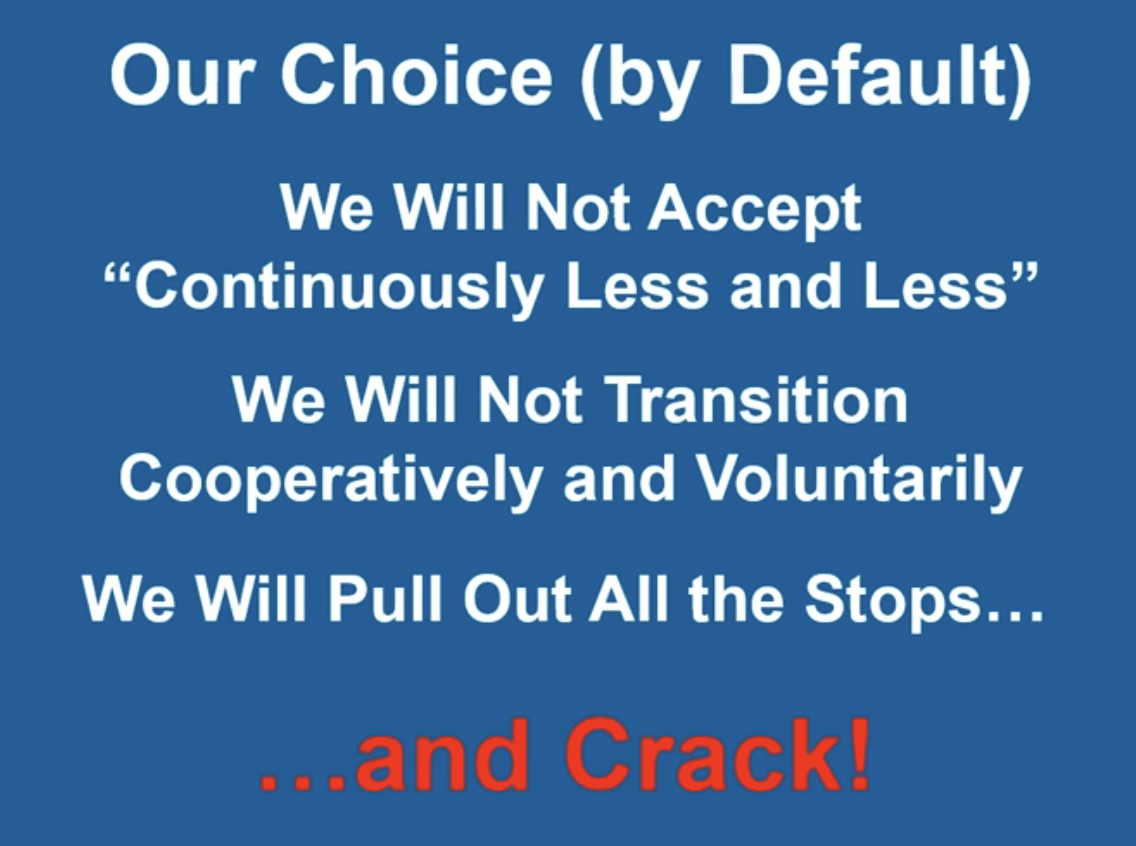

@mapsquatch I liked how this presentation[1] framed it: "Sustainability _will_ be achieved. Voluntarily or involuntarily."

Karsten Schmidt (@toxi@mastodon.thi.ng)

Already 6 years old, so not even taking into account post-2022 hyperscaling, this is a sobering, very rational and well argued 20 min presentation for some cold flush reality check of the hot fever dreams of AI proponents (and all YOLO energy/resource guzzlers of any walk/standing): Blip (2020) https://www.youtube.com/watch?v=cdXdaIsfio8 "Nature doesn't grant pardons", Chris Clugston (via @urlyman@mastodon.social, who I warmly recommend to read/follow if you're interested in these topics!) #AI #Degrowth #Collapse #Energy #BlipEconomy #PermaComputing

-

@toxi It's the topic of the day: https://www.joanwestenberg.com/the-case-for-gatekeeping-or-why-medieval-guilds-had-it-figured-out/

@felix Thank you! Will read tonight, I usually love their writing!

-

To make some of these points more concrete:

A choice is being made when governments & municipalities provide tax rebates and subsidized deals on land and/or energy/water for AI data centers, often to the detriment of the local population. Data centers provide little in terms of employment, and the rising energy prices are carried by everyone else, also impacting other existing businesses. This is not counting other important aspects like re-zoning, noise pollution, construction of supply roads/lines and (dirty) energy sources, all of which (in total) should be weighed against any promised gains from mass building such infrastructure. Many of these data centers are built in areas already suffering water stress, which is only going to get more intense!

A choice is being made when education policymakers and universities decide to become AI salespeople and to constrain AI education to turning students into effective/uncritical users of AI tech, rather than using higher education as a platform to critically/objectively research and examine this technology and its costs/impacts on many different aspects of society (energy, ethics, inequality, legal issues, security, sovereignty...).

A choice is being made when company bosses force staff to adopt AI or be pushed out, even if this adoption arguably carries, at the very least, a considerable risk of damaging the health of the company and its employees long term (via various well-documented risk factors, incl. the introduction of company-wide core dependencies on external, unsustainable, VC-backed subscription-based infrastructure/services, whose fees are almost guaranteed to sky-rocket in the foreseeable future). Also worth mentioning here are two new buzzword terms currently surfacing: Cognitive Debt and Semantic Ablation.

A choice is being made by governments adopting AI for policing and preparing for social unrest, investing billions into surveillance instead of lowering inequality and improving social services, social mobility & cohesion (e.g. financed via higher taxes obtained from the super rich).

A choice is being made when investment & grant opportunities for anything but AI-related businesses are deemed too risky and not worthwhile, essentially forcing AI features into any new business idea which requires external financing, and thereby funneling more and more people/infrastructure into this growing spiral of dependencies and this pyramid system of subscription-based computing, with less than a handful of companies at the very top.

Choices...
