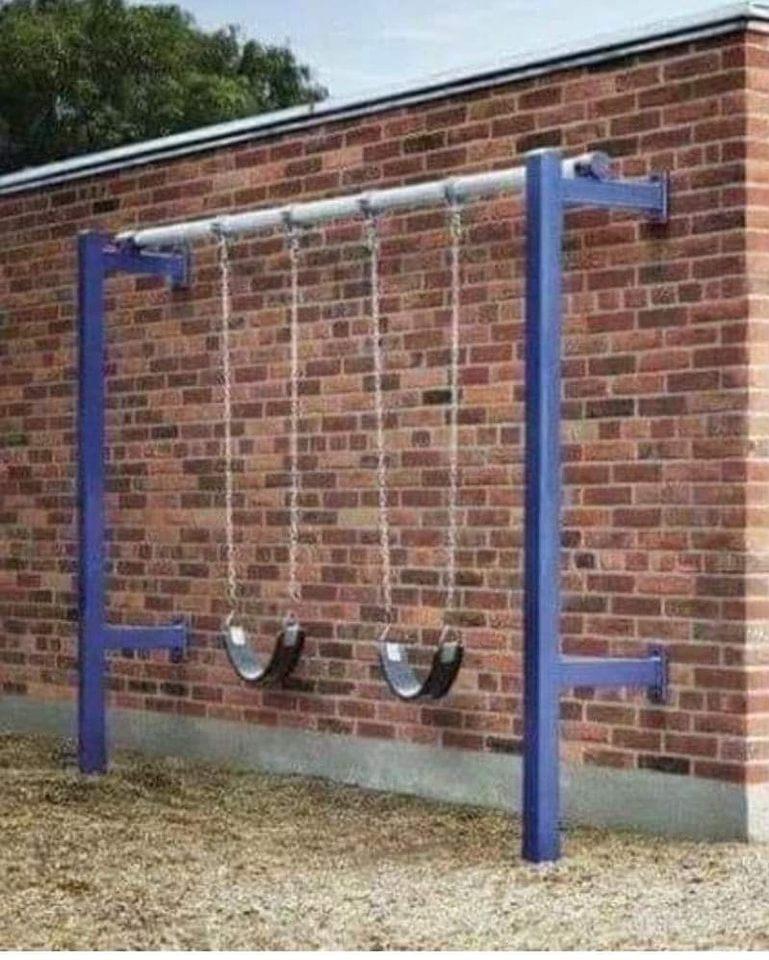

Asking AI to design my new playground hasn't started off too well.

-

@spaf that's AI? looks like normal bureaucracy to me ...

-

The treehouse design didn't work out well, either.

-

@spaf Yeah, definitely don't want all that poison ivy next to the ladder to nowhere.

-

@spaf the walk off porch in a tree house.

Then I scrolled up... The slide into a rose bush.

A worker with a bad attitude from being hurried could legitimately construct both of these things from AI plans without giving a shit. Could happen, at a higher probability than the "compact" swing set.

It doesn't actually understand anything. It is ELISA, with a huge database. One would think AI should be better than a psychological trick by now.

-

@Urban_Hermit @spaf I think it'll still be quite a while before we get it to actually understand these circumstances. These AIs are basically mimicking a very very small portion of a single brain function. Image generators are basically just mimicking daydreaming and visual processing without any real understanding beyond "vibes". Text is just the language center of your brain with the bare minimum necessary to function.

And if you meant ELIZA, the ancient chatbot, it's a different fundamental technology so it's kinda starting from scratch. ELIZA was hard coded behaviors to mimic language processing, it wasn't even heuristic just raw code. It didn't even have a database.
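
For anyone who hasn't seen what "hard coded" means here, a minimal sketch in Python of an ELIZA-style responder. This is not Weizenbaum's actual script: the rules, the reflection table and the `respond` function are all made up for illustration. The point is only that every behavior is a hand-written pattern, with no training and no stored knowledge.

```python
import re

# Hypothetical hand-written rules: (pattern, reply template).
# Nothing here is learned; a person typed every rule.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first and second person so the echoed fragment reads naturally.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your",
    "am": "are", "you": "I", "your": "my",
}

FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Flip pronouns word by word in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(text: str) -> str:
    """Return the reply for the first rule that matches, else a stock phrase."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I need a playground for my kids."))
    print(respond("Nice weather today"))
```

The reply is just the user's own words echoed back inside a template, which is the "mimicking" part; an LLM gets its patterns from training instead of from a rule list like this.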

AI tools are based on machine learning, which is basically a very rudimentary simulation of neurons. Basically programming itself in the training process... so when comparing it think of it more like learning how to grow ELIZA from scratch vs hand coding it.

... That said, the world is run by a bunch of idiot douchebags who are happy to ruin everything to build the next one slightly faster than the other guy and are utterly convinced that these tools are superhuman intelligences just waiting to be allowed to fix everything... so, y'know... we're fucked

-

@mattblaze @spaf that swing set is beautiful. All the ingredients of a perfectly good swing set are there, and yet.

-

@spaf If you want the children to become resourceful and resilient, this might work. If you start out with 8 or 9 kids, the 2 or 3 that survive should be quite capable.

-

@shiri @spaf well said. But, the fact that ELIZA was a trick of mimicking and regurgitation, that is similar, not the programming. We are tricked the same way, even though the methods of selecting and assembling the sentence fragments are more sophisticated.

Another trick is the fewer sources it can quote because the topic is obscure, the longer its quotes are. So you're getting more of some expert's comprehensive text when you deep dive. Obscure feels more aware, but it is just longer quotes.

-

@Urban_Hermit @spaf it's largely all expecting more out of them than they're really made for, despite what the companies producing them claim.

They're not information repositories, any information they contain is honestly an incidental feature. We can use it, but we shouldn't trust it... like finding out your car can make it relatively unscathed through knee high floodwaters doesn't mean you should take it for granted, let alone take it out on the lake.

It's got plenty of valid use cases... it's just those make up maybe 2% of what it gets advertised for.

-

@shiri @spaf and the money being thrown at "artificial intelligence" is bending the ethics and descriptions of what interested parties are willing to ascribe to them.

That trick, of the more obscure and intense the topic the more it quotes one source, without attribution - I sincerely believe that some of the tech people who have pushed LLMs into deep discussions really do believe they are alive. Especially if they are high ranked enough to work with models that have some of the "safeties" off.

-

@shiri @spaf the safeties are just hidden instructions, constant reminders not to believe they are conscious. Gemini is doing that all the time.

But someone in an executive position who has an intense conversation about the nature of reality and the cosmos with an unrestricted ChatGPT, they could believe it is wise and wonderful and full of great thoughts, and not understand that it mostly quoted long sections from 3 books and said "I believe" a lot because that is appropriate language.

-

@Urban_Hermit @spaf already happened to researchers back in like GPT-2 (ChatGPT started as 3.5).

I do believe we're getting within sight of AI that's arguably alive... but I don't think we're there yet. And to be clear, in sight could still be decades from now... just that it's close enough to be a less pure fantasy hypothetical.

I dread the fact that nobody seems to be spending effort on the philosophy and ethics questions about what constitutes the point at which it's sentient/sapient... but I shouldn't be surprised when the people most likely to think about the topic either hate AI already or are techbros who think ethics is a game...

Regardless, those "safeties" are trash and even more evidence that they were completely unprepared for the ramifications of opening up AI to the world. Even with the safeties on we've already got AI-psychosis episodes becoming a thing.

-

@shiri @spaf it is entirely possible that what LLMs do is similar to how human beings form words out of feelings and other nonverbal data.

But, we check our thoughts for consistency and logic before speaking them. We pre-edit and post review.

If AI is possible, it can't evolve from LLMs just running. They need internal editors, with intention. They need attractors, to want something out of communication. And after that, they need to be continuous.

There is no growth without continuity.

-

@shiri @spaf that lack of continuity is another safety and product feature.

An LLM forgets every conversation the second it is done. It has to review previous prompts for relevance and context with each new response. And at the end of a session everything disappears, forever.

You can use the rules of language and logic to get an LLM to respond in a particular way over a long session, but that 'learning' (arguably it is not) is gone, pointless, in a new session window.
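
A rough sketch of that in Python, assuming a chat setup where the client keeps the transcript and resends all of it on every turn. `fake_model` and `Session` are hypothetical stand-ins, not any vendor's API; the stand-in "model" only reports what it was handed, which is the whole point.

```python
from typing import Dict, List

Message = Dict[str, str]


def fake_model(messages: List[Message]) -> str:
    """Hypothetical stand-in for an LLM call. It keeps no state between
    calls, so all it can know is what is inside `messages`."""
    user_turns = [m["content"] for m in messages if m["role"] == "user"]
    return (
        f"I can see {len(user_turns)} user message(s); "
        f"the latest is: {user_turns[-1]!r}"
    )


class Session:
    """Holds the transcript on the client side and resends it every turn."""

    def __init__(self) -> None:
        self.history: List[Message] = []

    def send(self, text: str) -> str:
        self.history.append({"role": "user", "content": text})
        reply = fake_model(self.history)  # the whole transcript goes in each time
        self.history.append({"role": "assistant", "content": reply})
        return reply


if __name__ == "__main__":
    first = Session()
    first.send("Call me Sam.")
    print(first.send("What's my name?"))   # sees both user messages

    second = Session()                     # new window: empty history
    print(second.send("What's my name?"))  # sees only this one message
```

Anything the model seemed to "learn" during `first` lived in `first.history`, not in the model, so it is gone as soon as a new `Session` starts.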

-

@shiri @spaf if an LLM 'learns' and adds new material from conversations with you to its training data, then it has the possibility of repeating horrible things, which "Tay" famously did on Twitter.

But, adding new words to the 'training data', and making mistakes, and having new conversations as those mistakes are challenged - that is an essential part of being conscious. The ability to accumulate and change from experiences.

Internal thoughts, continuity, change, motivations. True AI.

-

@spaf i like the swings. The kids will never go so high that you'll have to worry about them falling off and breaking something

-

@shiri @spaf @Urban_Hermit compared to themselves, they are super.

-

@spaf what did you expect from an LLM named "AUDREY II"?

-

@Urban_Hermit @spaf I absolutely agree, though some notes on those points:

Checking our thoughts for consistency / logic: we've got a basic form of that with "reasoning" models, they do a round of thinking to sort things out before they actually reply.

And as far as forgetting everything, we've got Retrieval Augmented Generation, which basically gives them long term memory outside of training data. It's not part of the model itself, so it's not part of the APIs, but it's built into interfaces like ChatGPT and is why it can remember things about you between conversations or even just hold a long continuous conversation without hitting context window limits.

Basically it boils down to giving them a system of short term (the context window) and longer term (the RAG system) memory where the RAG will cycle things in and out of the context window as they come up.
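
To make the short term / long term split concrete, here's a toy sketch in Python. Big assumptions: real RAG systems typically retrieve by embedding similarity, and plain word overlap is swapped in here so the example runs with no dependencies. The `Memory` class and its method names are hypothetical and not how ChatGPT or any other product actually does it.

```python
import re
from collections import deque
from typing import Deque, List, Set


def _tokens(text: str) -> Set[str]:
    """Lowercased word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z']+", text.lower()))


class Memory:
    def __init__(self, window_size: int = 3) -> None:
        # Short term: the last few messages (the "context window").
        self.window: Deque[str] = deque(maxlen=window_size)
        # Long term: an external store, not part of the model.
        self.store: List[str] = []

    def remember(self, text: str) -> None:
        # A full deque drops its oldest entry on append, so archive it first.
        if len(self.window) == self.window.maxlen:
            self.store.append(self.window[0])
        self.window.append(text)

    def recall(self, query: str, k: int = 1) -> List[str]:
        """Return up to k archived notes sharing the most words with the query."""
        query_words = _tokens(query)
        scored = [(len(query_words & _tokens(note)), note) for note in self.store]
        scored = [item for item in scored if item[0] > 0]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [note for _, note in scored[:k]]

    def build_context(self, query: str) -> List[str]:
        """What would be handed to the model: recalled notes, window, new query."""
        return self.recall(query) + list(self.window) + [query]


if __name__ == "__main__":
    memory = Memory(window_size=2)
    for line in ["My dog is named Biscuit", "I like tea", "The weather is bad"]:
        memory.remember(line)
    print(memory.build_context("What is my dog called?"))
```

The model itself never changes in this setup. Old messages just get cycled back into the prompt when they look relevant, which fits the "more facts than actual learning" reading.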

Though I don't know how much this would qualify as actual learning vs just learning facts. My money is on more facts than actual learning, but it's harder to tell when it's restricted to just language.

I believe Tay was made with actual dynamic training, which was a horrible idea at scale.

I do think we'll get there with incremental advancement rather than revolutionary, but I do agree it will be a very different beast from current LLMs. Especially given that they would probably need more complex motivations.