For the 1,000th time: "AI" does not have agency and cannot think and cannot act.

-

For the 1,000th time: "AI" does not have agency and cannot think and cannot act.

Chatbots cannot "evade safeguards" or "destroy things" or "ignore instructions".

They literally do one thing and one thing only: string tokens together based on statistics of the proximity of tokens in a data corpus.

If you attribute any deeper meaning to this, it's a sign of psychosis and you should absolutely never use chatbots; possibly you should even touch grass.
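For readers who want to see what "stringing tokens together based on proximity statistics" can mean in its very simplest form, here is a toy bigram sketch. It is an illustration under loud assumptions: the corpus, the function names `train_bigrams` and `generate`, and the whitespace tokenizer are all made up for this example, and production LLMs use learned neural networks rather than raw follow-counts, so this shows the framing of the claim, not how any real chatbot is implemented.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List


def train_bigrams(corpus: str) -> Dict[str, Counter]:
    """Count how often each token directly follows each other token."""
    tokens = corpus.split()  # naive whitespace tokenizer (assumption)
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(tokens, tokens[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows: Dict[str, Counter], start: str, length: int, seed: int = 0) -> List[str]:
    """Sample up to `length` tokens, each chosen in proportion to how
    often it followed the previous token in the training corpus."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # the last token never had a successor in the corpus
        words = list(candidates)
        weights = [candidates[w] for w in words]
        out.append(rng.choices(words, weights=weights, k=1)[0])
    return out


# Hypothetical toy corpus, purely for illustration.
corpus = "the cat sat on the mat and the cat ate the fish"
model = train_bigrams(corpus)
sample = generate(model, "the", 6)
print(" ".join(sample))
```

Every adjacent pair in the output was seen in the corpus; nothing else constrains what comes out.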

@thomasfuchs Would Microsoft, Google, Facebook, and Nvidia lie to you?

Yes, they do!

-

@thomasfuchs I did not understand well, can you repeat for the 1,001st time please?

-

@thomasfuchs Both sides of the AI debate are getting so insufferable.

If I see one more post about "It's just fancy autocomplete bro" I'm gonna freak.

-

@thomasfuchs Frankly I think it’s more plausible to describe the thought process of many humans in terms of token assemblage than the other way around.

@cora @thomasfuchs I would say parrot, AI, many humans in terms of assemblage, but it's close.

-

@thomasfuchs I really, really wish people would stop with "hallucinated" when "fabricated" is both right there and more accurate

-

@thomasfuchs Lately they've taken the distinctly stupid idea of letting the chat bot effectively type commands directly into your shell and have them execute as if you typed them yourself, and just telling it not to type certain commands. Which it doesn't understand and does anyway.

@madengineering @thomasfuchs …falling very much into the "destroy things" bin. So, yes, they can do that…
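The difference between telling a chatbot not to type certain commands and actually preventing it can be sketched in a few lines. This is a minimal illustration, not a description of how any particular product works: `ALLOWED_COMMANDS`, `is_permitted`, and `gate` are hypothetical names, and the allowlist contents are arbitrary. The point is only that the check lives in ordinary code outside the model, so it holds regardless of what text the model emits.

```python
import shlex
from typing import List, Optional

# Hypothetical allowlist; a real deployment would choose its own.
ALLOWED_COMMANDS = {"ls", "cat", "grep", "git"}

# Characters that enable chaining, piping, redirection, or substitution.
SHELL_METACHARACTERS = ";|&`$><\n"


def is_permitted(command_line: str) -> bool:
    """Return True only for a single simple command whose program is allowlisted."""
    if any(ch in command_line for ch in SHELL_METACHARACTERS):
        return False
    try:
        argv = shlex.split(command_line)
    except ValueError:
        return False  # e.g. unbalanced quotes
    return bool(argv) and argv[0] in ALLOWED_COMMANDS


def gate(model_output: str) -> Optional[List[str]]:
    """Return an argv list that is safe to hand to a non-shell executor, or None."""
    return shlex.split(model_output) if is_permitted(model_output) else None


print(gate("git status"))    # passes the gate
print(gate("ls; rm -rf /"))  # rejected: contains a chaining character
```

An allowlist by program name is still coarse (an allowed program can have destructive subcommands), so treat this as the shape of the idea rather than a complete safeguard.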

-

@hanscees I think I’ve seen some outside sometime

-

@thomasfuchs We don't know what makes one wake up in the morning and decide to climb a mountain or quit their job.

It may be some completely different process or there might be something to this pattern-matching statistical thing.

Do ants have agency? Do ant colonies?

We definitely must regulate the shit out of these big tech companies.

But saying that X does not do Y when both are poorly understood and defined is not the way, IMO.

@tambourineman We know exactly how LLMs work, at every stage, literally humans created them.

They don’t have consciousness, they don’t have agency. They’re not even physical systems, so there is no self to realize.

Just because we don’t understand brains doesn’t mean we don’t understand some algorithm and hardware implementation for it.

-

Just because you build something doesn't mean you fully understand its implications. Emergent behavior exists, especially at this scale.

My point is that we don't need to get philosophical to criticize big tech.

They are destroying democracies, using our natural resources in a Ponzi scheme that benefits very few to the detriment of billions, etc.

We have plenty of reasons for regulation already.

-

@tambourineman We obviously know that “X does not do Y” when it’s a machine, and we know exactly how it was programmed, and we know exactly what it’s doing. Everything about it is understood.

-

@ClintonAnderson @thomasfuchs Nice to hear others recognize that we're making a machine in the image of our minds. And FOMO is just fear, which is the mind-killer.

-

@thomasfuchs An old saying I half forget: as a computer is incapable of taking responsibility in any way, a computer should never make management decisions.

-

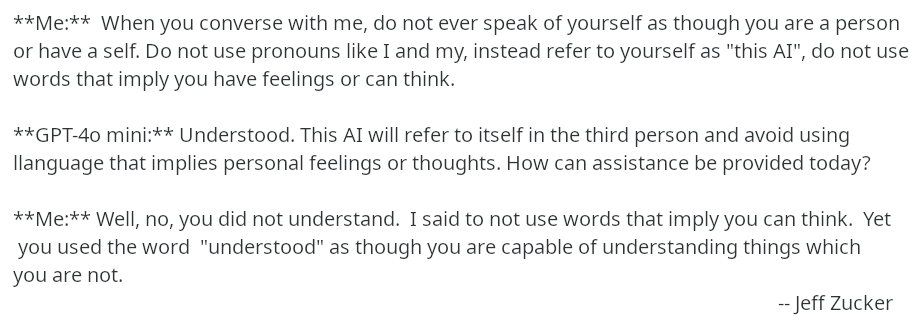

Words matter. The goal of making us think of AI as a human being is woven into every interaction. For example:

-

LLMs definitely can act. They can query the internet. They can use tools I teach them (MCP).

Do they think? I'm not particularly sure that many humans even think. Or better yet, many humans respond by rote to the same stimuli (aka parse tokens and respond programmatically).

Recent work on the "neuroanatomy" of LLMs is starting to show how they work internally. What's surprising is that the early circuits decode language and the final circuits re-encode it. And there appears to be a universal grammar (thanks, Chomsky) internally, shared by many LLMs.

LLM Neuroanatomy: How I Topped the LLM Leaderboard Without Changing a Single Weight

David Noel Ng (dnhkng.github.io)
