We knew, but the proof is nice.

-

@davidaugust Direct link to the paper https://arxiv.org/pdf/2410.05229 (presented at ICLR 2025).

So it's not very recent news, then.

@Sobex it’s from August.

-

@drifthood yes, there does seem to be a threshold that, in some respects, only humans cross.

I see that sort of begging in a dog. He wants the treat, so instead of just doing the desired behavior the human command is asking for, he tries every response that has ever gotten him a treat until he “unlocks” the treat. Humans can and do do this too from time to time, but humans _also_ actually communicate and understand from time to time as well.

-

not new, here's the 2024 paper referenced:

GSM-Symbolic: Understanding the Limitations of Mathematical Reasoning in Large Language Models

arXiv.org (arxiv.org)

@joriki it’s from August.

-

We knew, but the proof is nice.

"Apple just proved that AI models cannot do math. Not advanced math. Grade school math. The kind a 10-year-old solves"

The guess-the-next-words machines don’t actually understand anything.

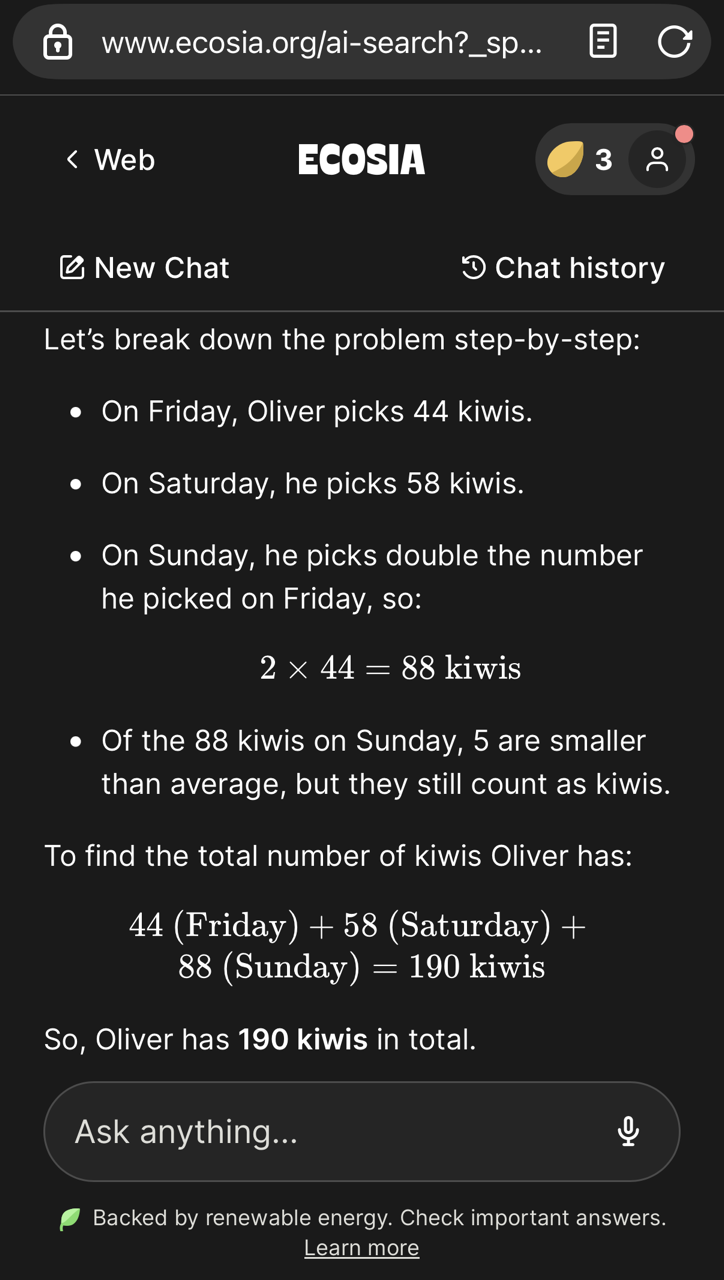

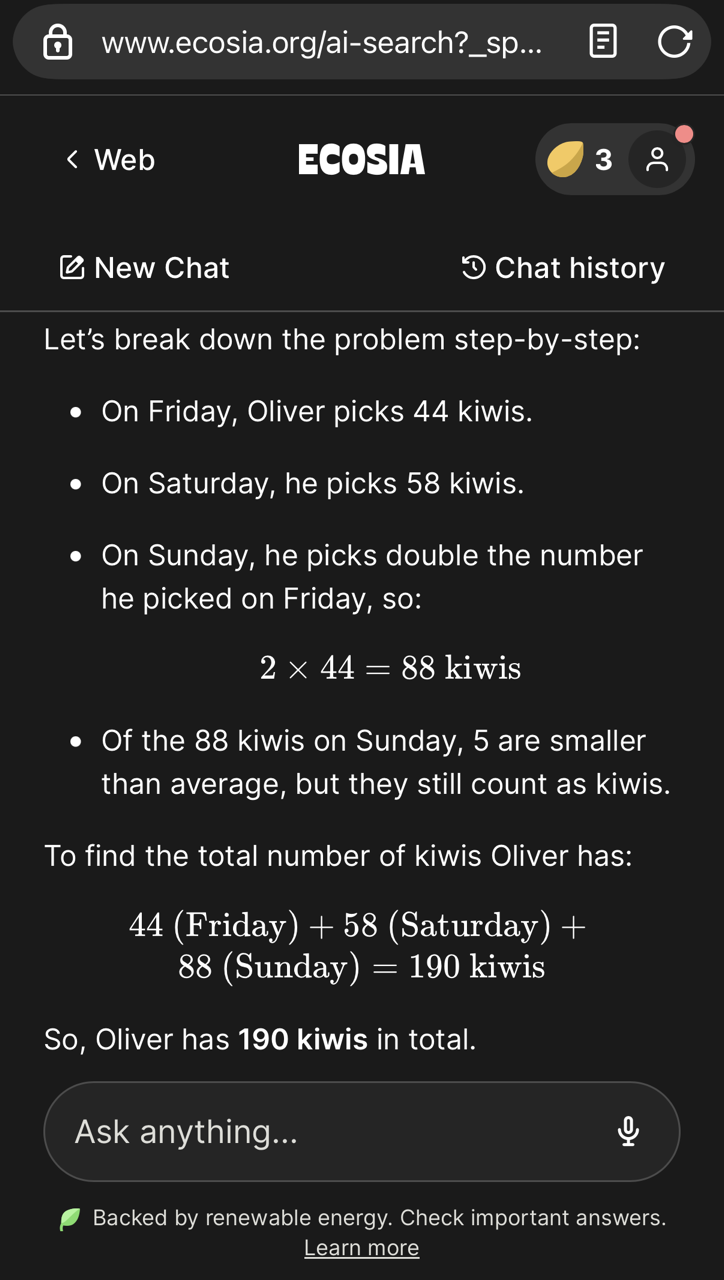

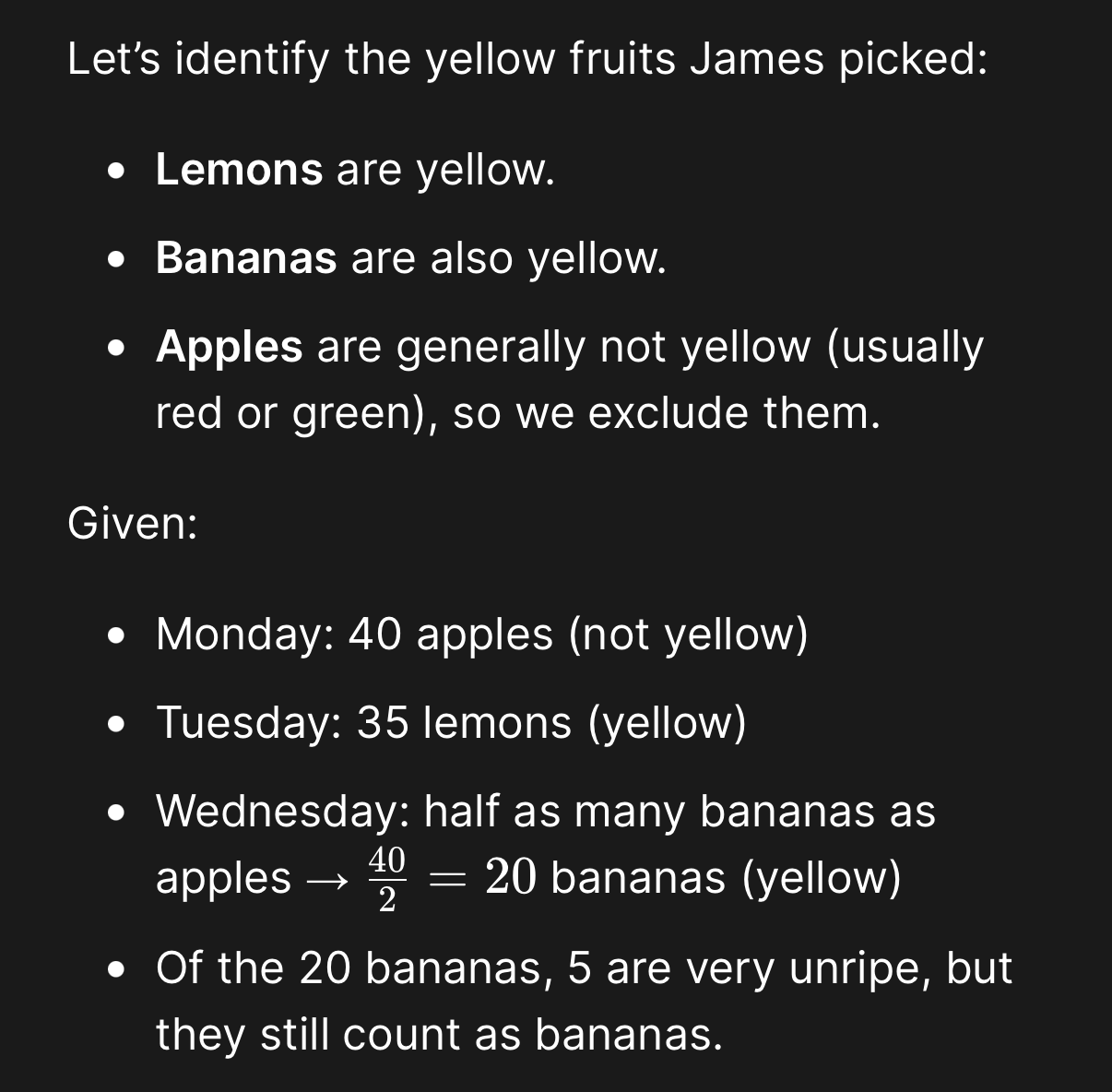

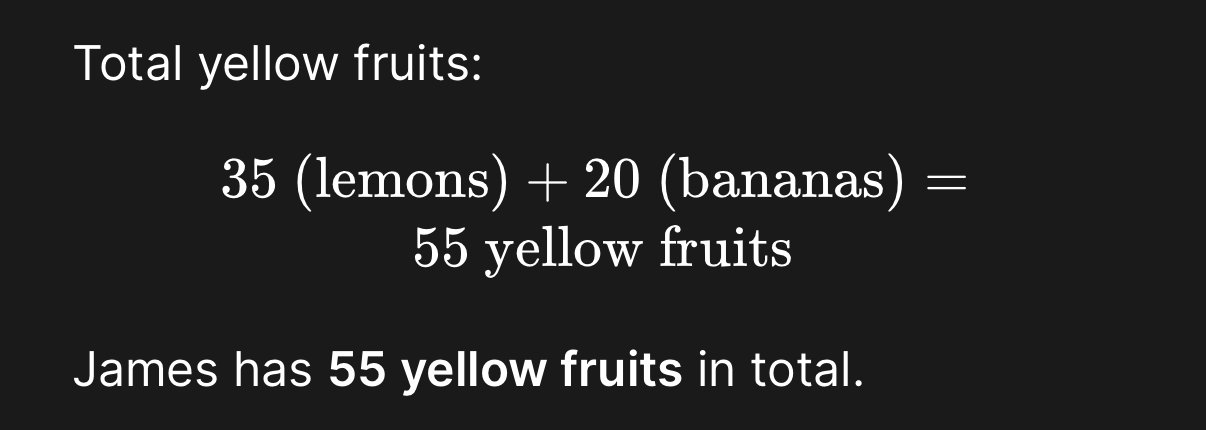

@davidaugust Ecosia AI gets it right. It looks like the paper referenced was published in 2025, so the research was conducted before that. The models are all much better now. I’m no AI apologist, but I think any argument of “AI sucks because it’s not good at _____” is on tenuous ground and will be proven wrong as the models continue to improve. @Ecosia

-

@audioflyer79 @davidaugust I mean, it's worth noting that the LLMs have ingested that paper by now. : /

-

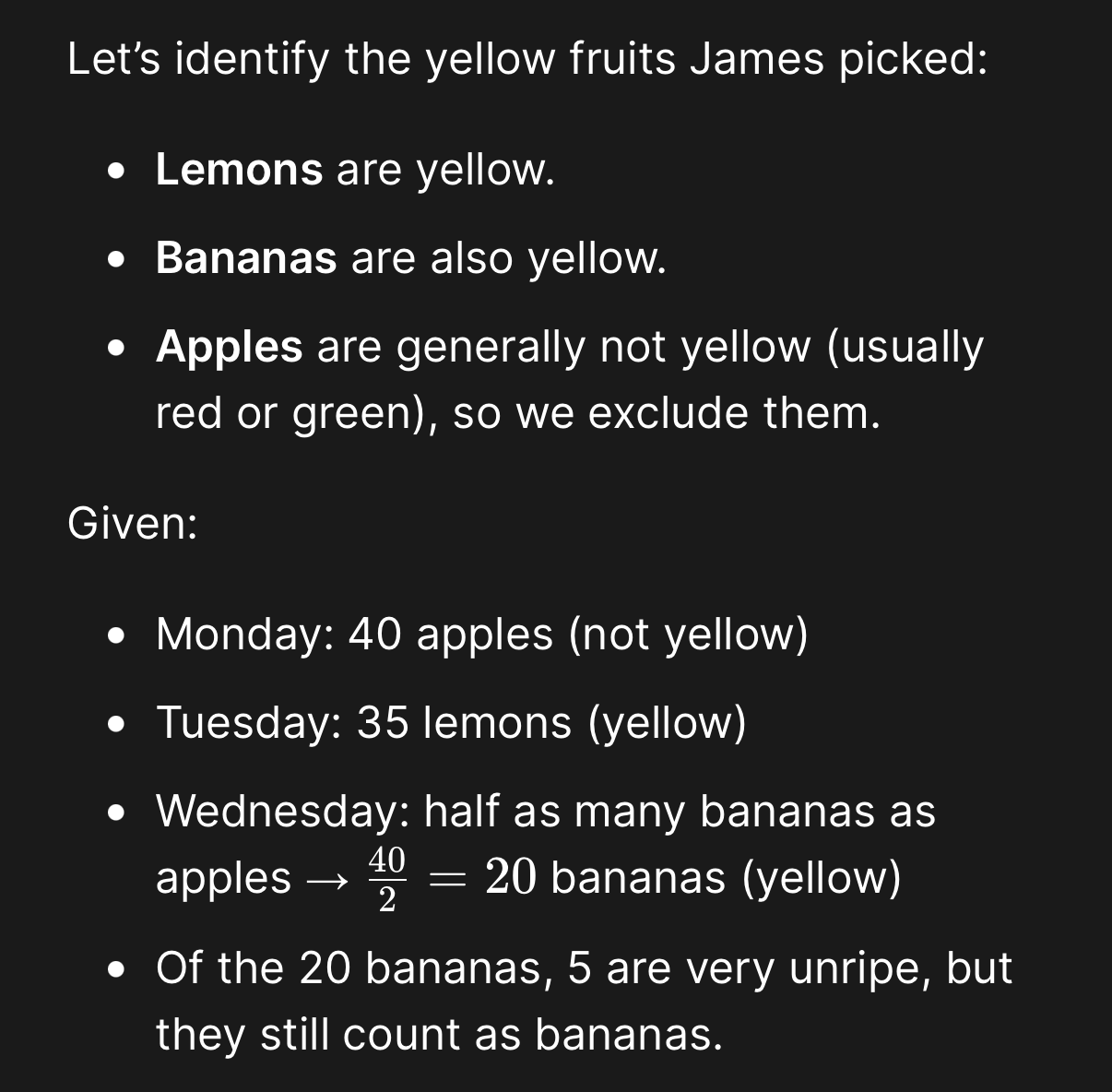

@alisynthesis @davidaugust fair enough. I changed up the problem completely and added some reasoning and it did pretty well. It appears to be generating code to solve the math. The only thing it missed is that very unripe bananas are green, not yellow.

James picks 40 apples on Monday. Then he picks 35 lemons on Tuesday. On Wednesday, he picks half as many bananas as he did apples, but five of them were very unripe. How many yellow fruits does James have?
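For reference, a minimal sketch of the arithmetic behind this puzzle, in Python. The color assignments are assumptions, since the post does not state them: lemons and ripe bananas count as yellow, very unripe bananas count as green, and apples count as not yellow unless you flip the hypothetical `apples_are_yellow` flag.

```python
def count_yellow_fruits(apples=40, lemons=35, unripe_bananas=5,
                        apples_are_yellow=False):
    """Count yellow fruits in the puzzle, under the stated color assumptions."""
    # "Half as many bananas as he did apples"
    bananas = apples // 2
    # Assumption: very unripe bananas are green, so they are excluded
    ripe_bananas = bananas - unripe_bananas
    # Assumption: all lemons are yellow
    yellow = lemons + ripe_bananas
    # Assumption (off by default): the apples are a yellow variety
    if apples_are_yellow:
        yellow += apples
    return yellow


print(count_yellow_fruits())  # 35 lemons + 15 ripe bananas = 50
```

Under these assumptions the answer is 50. Counting all 20 bananas as yellow would give 55 instead.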

-

Amo Bishop Rodent (@pikesley@mastodon.me.uk)

"We made the computers, the notoriously accurate calculating machines, worse at arithmetic. This is surely progress along the path to creating Computer God"

mastodon.me.uk (mastodon.me.uk)

-

@lemgandi

The wetness of water has been hotly debated: to some, wet means "covered with or soaked in water", and it's questionable whether water can be covered with itself.

@davidaugust

-

@davidaugust In about 80 years we've gone from a room full of computers the size of refrigerators that were good at crunching numbers but not much else to computers the size of corporate office parks that can draw almost-convincing pictures of people with five fingers (and thumbs, too!) but can't do elementary school math.

And some people call this progress.

@Karen5Lund Maybe because people stopped writing efficient code about 20 years ago?

-

@davidaugust AGI is coming son 🤭

-

@davidaugust interesting. Had to ask. Already fixed?

-

@glitzersachen @scottjenson @xdydx guessing you are joking. But also suspect it may be an inside joke with not a lot of folks on the inside.

@davidaugust @scottjenson @xdydx

True. See @xdydx's reply.

-

Shortcut to paper: https://arxiv.org/pdf/2410.05229

-

@audioflyer79 @alisynthesis @davidaugust how does it do if you swap the colors of the fruit?

-

@pascal_le_merrer any day now. I hear POTUS says in two weeks.

-

@flq yes, many systems have tools and/or abilities built in to take over basic math operations that simpler LLMs failed at.

The salient and enduring issue, I think, is that the spin and marketing of LLMs as "understanding," "thinking" or "intelligent" (as those words' typical meanings suggest) remains largely fictional.

-

@joriki it’s from August.

October 2024

Apple Engineers Show How Flimsy AI ‘Reasoning’ Can Be

The new frontier in large language models is the ability to “reason” their way through problems. New research from Apple says it's not quite what it's cracked up to be.

WIRED (www.wired.com)

-

@drifthood @davidaugust This makes me think of "Clever Hans", the horse that appeared to do arithmetic but actually just responded to involuntary human cues:

https://en.wikipedia.org/wiki/Clever_Hans

-

@davidaugust Of course an LLM cannot do math, but to be honest, that is also not what they're designed for. An LLM these days like Claude knows that it should take a calculator and type the equation in there, instead of hallucinating an answer. Complaining that an LLM can't do math is like complaining a screwdriver can't drill a hole.

You can counter that there are plenty of people who are using the screwdriver to drill the hole, but that is not on the tool, that is on the user.

-

@davidaugust When did they do this test? I tried it with the following LLMs: Sonnet 4.6, Codex 5.3, GPT-5.4, GPT-5-Mini and Kimi-K2.5. They all answer the kiwi question correctly.