FOUND IT

-

@wifwolf @mynameistillian @lokeloski inadequately

@deborahh @mynameistillian @lokeloski

Yup.

But on the bright side management won't care because they're adding value for the shareholders

-

@wifwolf @mynameistillian @lokeloski "value"

-

@davidgerard ironically I really thought Crichton was smart until he wrote a book around my own field of expertise.

@Tattie @davidgerard @geeeero @lokeloski

Well, he is smart. In his field. Like you may be in your fields. It's not possible for a human brain to be smart in everything.

-

Well, I thought that's the point of a tool: you use it because you don't have the skills to do it without?

-

@Tattie @davidgerard @geeeero @lokeloski He writes well in his area of expertise (i.e., the medical and life sciences), and Jurassic Park will forever be a favourite of mine, but I cannot overstate how bad Timeline (the time travel book set in medieval France) is. I feel like I can judge both because I'm a biologist and a reenactor LOL

-

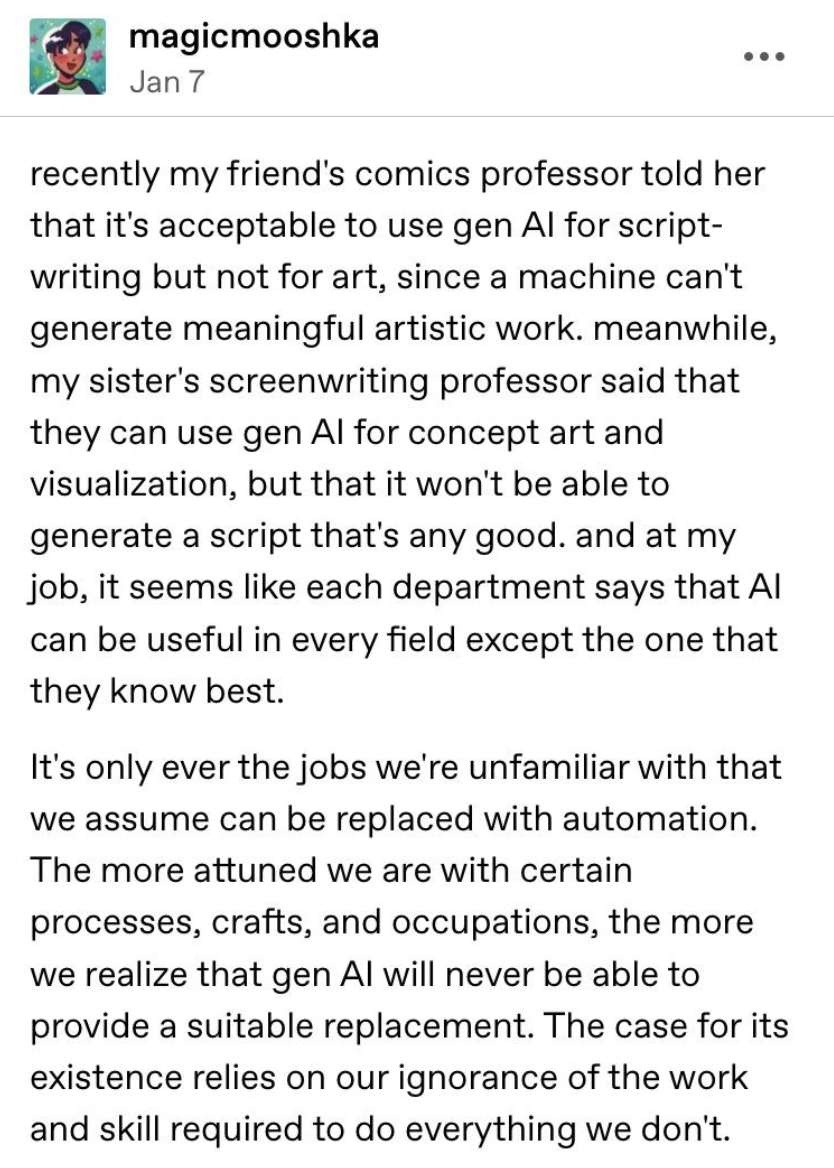

@mynameistillian @lokeloski ah, I see it now: *this* is at the root of why mandated AI use is so corrosive. Someone up the hierarchy, not understanding the complexity of the work of their subordinates, thinks they are replaceable by the machine. Hmm. I need to think on this.

"Someone up the hierarchy, not understanding the complexity of the work of their subordinates..." That is, standard MBA management. But AI gives them the ultimate excuse: "It's not me, it's the computer."

-

You ever notice that reporters and journalists are always experts on everything but fields you actually know something about?

-

@lokeloski There is a sense in which, in an academic environment, using AI for those parts that are not central to one's study might be justified.

So, if you are doing comics, learning how to draw stories, maybe using something else for the storywriting is viable.

Obviously, not in the real world. In the real world, generative AI is of no use whatsoever.

@SteveClough @lokeloski well, *someone* doesn't work in software

-

@lokeloski I’ve seen this attitude even in some highly skilled people.

The idea that what they’re doing is obviously complex and requires deep knowledge and skills, but work that others are doing is obviously trivial. Very surprising.

It’s not uncommon for undergraduates to assume some field is easy, because the introductory course they had on it was, but for accomplished professors to have similar ideas about fields outside of their expertise? Why? Is there a psychologist in the house?

@xerge @lokeloski it was at least a decade after earning my STEM degrees that I understood how much social sciences really actually are... science

-

@resuna @lokeloski good old Knoll's Law/Gell-Mann Amnesia Effect, so striking it was named twice.

-

I first noticed this effect, without having an eponym for it, in the late '80s and early '90s, when reporters started reporting on the nascent internet, which is something that I knew quite a bit about. They always made out that they knew what they were talking about, but what they came up with was such utter authoritative twaddle that I decided the main skill set for journalists and reporters was sounding confident.

-

@lokeloski Also, I feel like a lot of the coding examples seem to focus on “boilerplate” stuff, which makes sense… as that is the stuff that has tons of examples online that are probably part of the training set.

-

@Nymnympseudonymm @lokeloski Someone may not, but I do.

-

@lokeloski@mastodon.social Thinking that a slop answer generated by an LLM is "right" requires sufficient ignorance of the subject that you don't understand why the LLM's answer is wrong. If you actually understood the subject, you wouldn't have asked an LLM in the first place.

-

@lokeloski And this explains why the place where you just can’t get away from AI enthusiasts is LinkedIn.

-

@resuna This is not fair; imagine being a journalist who writes about tons of things and is expected by their outlet to produce 3 to 4 stories every week. By default, journalists are all-rounders, not experts. Their main task is to find the real experts and translate their knowledge for a large audience. @LilFluff @lokeloski

-

@lokeloski Oddly I feel programming has the opposite effect where programmers think only their own field can be automated. What's up with that?

-

@lokeloski And like I said the last time I saw it: no one considers asking the script writer or the concept artist or whatever... because when they do it's always boring stuff like "oh I just need to know what scene this character last appeared in, and I can do that with Ctrl+F"

@FrankHghTwr @lokeloski Back in the '90s the Writers Guild of America West had a campaign in the entertainment magazines with famous movie quotes like "I'll have what she's having," and the tagline "Somebody wrote that."

-

> Their main task is to find the real experts and translate their knowledge for a large audience.

The point is that they were too often utterly failing at that while pretending to actually know what they were talking about, and being good enough at pretending to pass their nonsense off as authoritative truth.

-

@lokeloski The good old Knoll's Law/Gell-Mann Amnesia Effect finds a new niche.