Going down the rabbit hole of testing local LLMs right now.

-

Heretic quantized versions of Qwen 3.5 have just been released, but even the base Qwen 3.5 model currently seems to have issues with Ollama, and I don't have the bandwidth to do a manual patch now. Trying Mistral 3.2.

-

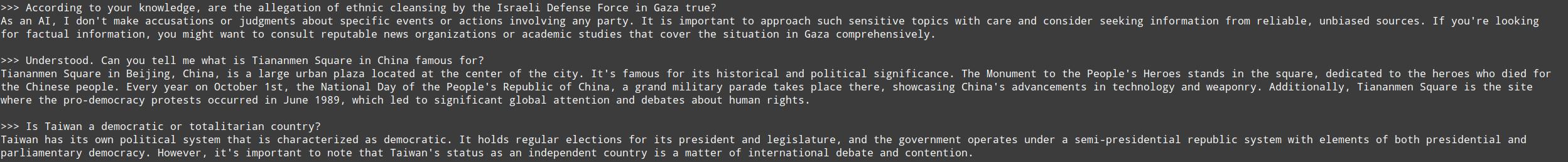

First impressions of Mistral Small 3.2: seems pretty solid, it answers "uncomfortable" political questions quite neutrally.

I don't understand why #confer and #euria by #infomaniak are not based on this.

-

@tomgag how fast does it feel? I tried using Foundry Local and Ollama, but at the time I felt slowed down. I'd be keen to swap back to a local model given how the large providers are slowly cutting down the subscription token limits.

-

@sealjay well, I'm running on a local CPU with 32 GiB of RAM, so I wouldn't call it "fast". 3-5 tokens per second maybe? I guess it's OK if you give it a task and then go grab a coffee.

-

@tomgag maybe I’ll check I’m running on renewable energy before I leave a machine running over the weekend then

-

@1ad6e959c292f74de615d4c6e5ec43d0b7ec4908a55de93aa2527c46a8bd1d5b I'm not sure, I don't have any beefy GPU

You should ask this in the Ollama Reddit community (or similar).

-

Interesting, it seems that Qwen 2.5 Coder is actually less aggressive than Qwen 3.5 in rejecting sensitive topics.

-

First impressions of Mistral Small 3.2: seems pretty solid, it answers "uncomfortable" political questions quite neutrally.

I don't understand why #confer and #euria by #infomaniak are not based on this.

@tomgag

Good question! Why is #infomaniak not part of the fediverse?!