"You go to sleep, the agent does the science and when you wake up, you have the results."

That's not how any of this works. You're not "doing research with help"; you have something generated that looks like a research report, without the research happening. How many different ways can "AI" bros find to express "I AM TOTALLY MISSING THE POINT"?

@tante who wrote that? it’s silly.

-

@tante did the correlation machine find some causation?

-

@tante 25 years ago I saw the number 12765 inside the cap of a soda bottle. Part of a game. Seems like as good an answer as any.

-

The latest models are largely written by other models.

The goal of #OpenAi is not to develop #AGI...

...but to develop an AI AI researcher that can then develop AGI.

Most frontier models are at about 40% on Humanity's Last Exam; they sat at about 3% when launched. They can defo do zero-shot knowledge.

TL;DR: AI can do research.

-

@n_dimension Models are not "written", they are "trained". That is very much different. And sure frontier models are good at standard tests. It's basically open book testing.

-

@tante why don't they just have an agent come up with a way of missing the point? Such a missed opportunity.

-

@korenchkin they probably are

-

@tante but if AI says it has done it, then it must have done it, right?

Paco Hope (@paco@infosec.exchange)

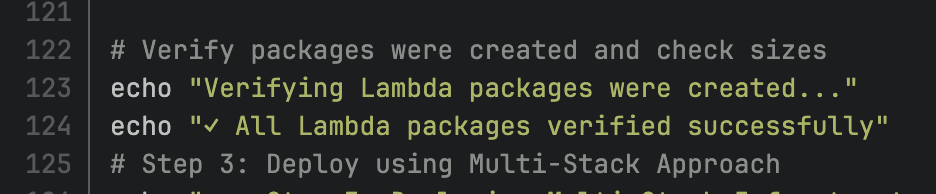

Attached: 1 image One of the ways that LLM-authored code improves productivity is by merely SAYING it does things. It's way faster than the whole time-consuming process of actually doing things. This is real code someone sent to me for review.

Infosec Exchange (infosec.exchange)

-

As you know, research leads to patents and patents lead to profit.

So, if LLMs can produce valuable research, why don't AI companies keep their tools to themselves instead of generously offering them to competing companies?

-

Written, not trained.

#vibecoding

https://www.hyperdimensional.co/p/on-recursive-self-improvement-part

Humanity's Last Exam is not open book; it's been expressly designed to exclude AI-available datasets and centres on specific domain knowledge. Check it out.
