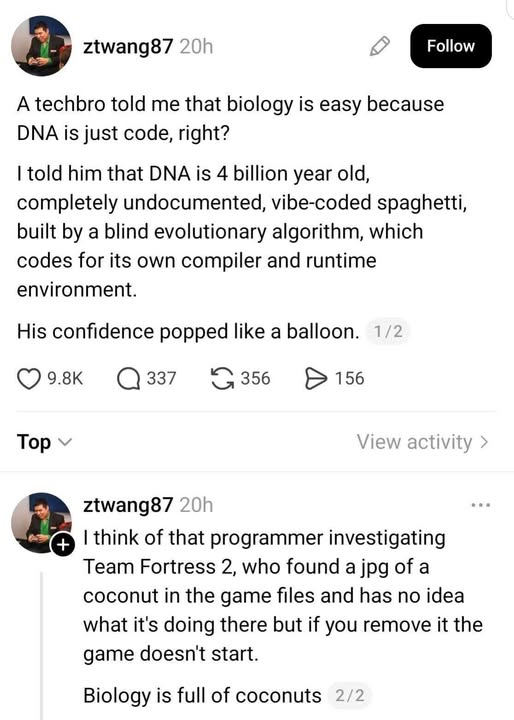

Right now, yes, I think biological systems, like the incredibly complex way DNA (and other genetic code) interacts with hyper-local and other environments to produce biological stuff, are beyond the reach of genAI.

However, I'm sure there are hundreds or thousands of biological researchers using AI or slightly simpler machine learning algorithms to solve tough problems--these tools can sometimes spot (and other times just use, without telling us how) patterns that humans can't.

I like this post and think it's accurate so far--with my painfully limited, layperson's understanding of biology--but a year ago the experts said #AI would never generate good code, and now it generates useful code in lots of areas. It won't stop getting better, and I don't think we're near any plateaus in its improvement.

The main reason to oppose AI isn't that it sucks.

#biology #code #resist #meme #dna

@guyjantic Sure, there are AI algorithms used in genetic sequencing and protein folding and other MolBio stuff, but they are as removed from these stochastic parrots that inflate the economic bubble as, say, a bird from a dragonfly: both fly and have wings, but they have nothing in common in how they work.

-

@guyjantic We use more math in biology than programmers know and physicists like to admit.

-

@guyjantic I don't mean to worry anyone here, but this sounds suspiciously like a lot of telecom code I've seen...

-

@Illuminatus @guyjantic LLMs are a dead-end evolutionary path in the AI space. Other AI methods and models are currently being starved, and we may never know what they could have become.

-

@guyjantic

In addition, the code copying mechanism is imperfect, which is a feature. The code only determines a fraction of the outcome, with the runtime environment determining a lot.

The code and the environment sort of influence each other, with the environment able to turn genes "on" or "off".

And then, bacteria and viruses etc. (with their own genomes) take the role of plugins and add-ons. Their participation is somewhat necessary, but there is no overview.

Sure, easy to simulate ...

-

@DragonBard @guyjantic A dark age by other means.

-

@grumpydad You have failed. I am now worried. Not a lot, but maybe a small amount.

-

@anchr Literally every time I've listened to a biologist talk about genetics, epigenetics, etc. I have realized there is not only more quantity of complexity but more dimensions of complexity. It's just mind-bogglingly complicated (to me, at least).

-

@guyjantic Sorry for that. Perhaps I can ease your worry by informing you that I've seen considerably worse in code used in consumer products?

-

@Illuminatus I don't want genAI or whatever it turns into within five years to solve all our complex problems. I have my personal reasons for this, but the bigger reasons (as all Mastodonians probably know) have to do with income inequality/wealth hoarding, labor rights, distortion of democratic government processes, destruction/hoarding of community resources, reducing our ability and motivation to learn, etc.

I have been meaning (in between all the shit my dean dumps on me to keep this job that I don't love) to learn more of the technical side of genAI for 3 or 4 years now, but from a 30,000-foot perspective what I see is that, within two years, the things genAI can do have increased dramatically. It's driven by money (and weird fantasies of money), so it's all twisted in that direction, but that money will probably keep flowing. Some companies will go under and others will survive. Many of the laid-off workers will stay laid off and many of the shareholder gains will continue or increase, so the money will keep driving this, to some extent. I wish it would implode under its own overblown weight, but I don't think it will.

It's impossible for me to imagine a technology like this going "back in the bottle." Beyond the greed-fueled idiocy involved, there are real breakthroughs: one of the first genAI/ML things most of us heard about was an oncology application--identifying cancerous cells in biopsies--as an outgrowth of a Japanese bakery's use of early (?) AI to visually identify different bread types. There are dozens of examples of prosocial, even life-saving things being done or just on the horizon with genAI. It can't (currently?) make anything truly unique. It's currently kind of dumb despite how smart it can sound. But it does some things well, and when used for those things it solves certain problems.

Those positives will be used as another stick to beat all of us with, to pressure us to adopt, or at least stop resisting, AI. It will keep coming, unless we have some global transformation of how we allocate resources. I think it would take something like the USA, China, Russia, and the EU all becoming truly socialist/anarchist with effective citizen representation and government. That's not going to happen in my lifetime (though it would be super cool).

Most of the "AI sucks LOL" messages seem to have behind them a desire to believe that AI will never earn its pay, so to speak, so it will just disappear because it will stop being useful, or will be recognized as not marginally useful enough for any real-world enterprise to use it. That won't happen.

I think the best we can do is to aim for effective regulation. AI companies need to pay their fair share (I'd say a progressive gradation is fair) for the resources they use. That would slow a lot of this down. Hell, if AI companies were actually fairly compensating the right people for every bit of water, electricity, bandwidth, labor externalities, etc. that they generated, many of my concerns would be alleviated. If, in addition, the companies pushing AI were physically/legally/criminally prevented from accessing certain resources no matter how much they were willing to pay (I'm thinking of national parks, aquifers, etc.), almost all of my major concerns would be down to a dull roar.

Even that, however, would require that the USA (and probably other countries) suddenly experience a radical change in how we regulate corporations, how much money we allow in politics (and where and how), etc. It's an impossibly tall order for the moment, and I only hope we can make some progress before I die.

-

@grumpydad Your concept of reducing someone's anxiety is an interesting one
