There are millions of these single query scrapers.

-

RE: https://social.treehouse.systems/@mgorny/116465292654071299

There are millions of these single query scrapers. As open projects we should band together and block them preemptively. This is the only way we can put a stop to this.

Here are 91,372 IP addresses pretending to be normal browsers, while sending a *single* query to me today. http://berthub.eu/tmp/singlequery-2026-04-26.csv - feel free to block them all!

@bert_hubert how did you generate that list? Perhaps we can do the same on ftp.nluug.nl and on mastodon.nl and so on?

@koen This is based on a modified version of https://github.com/berthubert/audience-minutes/ - it needs a bit of work to be better suited for this purpose.
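The modified audience-minutes code is not reproduced in the thread. As a rough sketch of the idea only (not audience-minutes itself), assume a web server writing the common "combined" access-log format. Also assume a hypothetical heuristic: any user agent starting with `Mozilla/` counts as "pretending to be a normal browser". Under those assumptions, you can count requests per IP over a day and keep the browser-looking IPs that made exactly one request:

```python
import re
from collections import Counter

# Matches the "combined" access-log format (assumed here):
# ip ident user [time] "request" status bytes "referer" "user-agent"
COMBINED_RE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def looks_like_browser(user_agent: str) -> bool:
    # Hypothetical heuristic: mainstream browser UAs start with "Mozilla/".
    return user_agent.startswith("Mozilla/")


def single_query_ips(log_lines):
    """Return sorted IPs that made exactly one request with a browser-like UA."""
    counts = Counter()
    first_ua = {}
    for line in log_lines:
        m = COMBINED_RE.match(line)
        if m is None:
            continue  # skip lines in other formats
        ip = m.group("ip")
        counts[ip] += 1
        first_ua.setdefault(ip, m.group("ua"))
    return sorted(
        ip for ip, n in counts.items() if n == 1 and looks_like_browser(first_ua[ip])
    )


SAMPLE = [
    # one request, browser UA: flagged
    '198.51.100.7 - - [26/Apr/2026:10:00:00 +0000] "GET /blog/post HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    # a real page view: HTML plus a stylesheet, so two requests
    '203.0.113.5 - - [26/Apr/2026:10:01:00 +0000] "GET / HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    '203.0.113.5 - - [26/Apr/2026:10:01:01 +0000] "GET /style.css HTTP/1.1" 200 900 "https://example.org/" "Mozilla/5.0 (Windows NT 10.0)"',
    # one request, but an honest non-browser UA: not flagged
    '192.0.2.9 - - [26/Apr/2026:10:02:00 +0000] "GET /feed.xml HTTP/1.1" 200 700 "-" "curl/8.5.0"',
]

if __name__ == "__main__":
    print(single_query_ips(SAMPLE))  # ['198.51.100.7']
```

The second IP is excluded because a real browser page view also fetches CSS, JS and images. This remains a heuristic: a returning visitor with those assets already cached may only request the HTML.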

-

Here are 91,372 IP addresses pretending to be normal browsers, while sending a *single* query to me today. http://berthub.eu/tmp/singlequery-2026-04-26.csv - feel free to block them all!

@bert_hubert residential proxies are a problem that cannot be easily solved, unless you block the entire Internet.

@brown I’m fine with blocking residential proxy enablers.

@bert_hubert @brown time for a second Internet, I feel. The first one is about to become useless.

-

Here are 91,372 IP addresses pretending to be normal browsers, while sending a *single* query to me today. http://berthub.eu/tmp/singlequery-2026-04-26.csv - feel free to block them all!

@bert_hubert Curious: would I be blocked if I left open a tab and restarted my browser that day but didn't use the website otherwise?

@hashbang your browser would still send multiple queries, for CSS and JS and images etc.

-

@brown I’m fine with blocking residential proxy enablers.

@bert_hubert @brown how will you know who they are, since each IP is used only once? Also, since most ISPs use NAT or CGNAT, there would be significant collateral damage.

Eventually, any URL that can cause significant CPU expenditure, like Git history or search, will have to be put behind an authenticated-user wall. For sites without a real user base, we could imagine something like PrivacyPass, except as a means to identify human users. The residential proxy providers don't usually have access to the user's browser and its cookies.
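PrivacyPass-style tokens rest on one idea: an issuer can vouch for a human without being able to link that vouching to later site visits. Below is a minimal sketch of that idea using textbook blind RSA with a deliberately tiny toy key. This is an illustrative assumption, not the Privacy Pass protocol itself. The standardized protocols use full-size keys and proper padding, and this toy is insecure.

```python
import hashlib
import math
import secrets

# Toy RSA key for illustration only: far too small (and unpadded) for real use.
P, Q = 1_000_003, 999_983
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))


def h(token: bytes) -> int:
    # Map the token to an integer modulo N.
    return int.from_bytes(hashlib.sha256(token).digest(), "big") % N


def blind(token: bytes):
    # User: hide the token behind a random factor r before sending it to the issuer.
    while True:
        r = secrets.randbelow(N - 2) + 2
        if math.gcd(r, N) == 1:
            break
    return (h(token) * pow(r, E, N)) % N, r


def issuer_sign(blinded: int) -> int:
    # Issuer: after checking the user is human (somehow), sign the blinded value.
    # It never sees the token itself, so it cannot link it to a later visit.
    return pow(blinded, D, N)


def unblind(blind_sig: int, r: int) -> int:
    # User: strip the blinding factor, leaving a plain signature on h(token).
    return (blind_sig * pow(r, -1, N)) % N


def verify(token: bytes, sig: int) -> bool:
    # Website: check the signature with the issuer's public key only.
    return pow(sig, E, N) == h(token)


token = secrets.token_bytes(32)
blinded, r = blind(token)
sig = unblind(issuer_sign(blinded), r)
print(verify(token, sig))  # True
```

The website only needs the issuer's public key `(N, E)`. A scraper without an issued token has nothing to present, which turns the blocklist problem into an allowlist.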

@fazalmajid @brown I worded it carefully - we need to work together so we can spot "only once" queries across sites. The collateral damage is fine, will make people care about this stuff.
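One way to read "spot 'only once' queries across sites" is sketched below. This is an interpretation, not a scheme anyone in the thread specified. Each participating site shares its daily single-query list. An IP that shows up as single-query at several unrelated sites becomes a candidate for everyone's blocklist. The site names and the threshold are hypothetical:

```python
from collections import Counter


def cross_site_suspects(single_query_by_site, min_sites=2):
    """Return IPs that appear in the single-query list of at least `min_sites` sites.

    single_query_by_site maps a (hypothetical) site name to the set of IPs
    that made exactly one request there during the same period.
    """
    seen = Counter()
    for ips in single_query_by_site.values():
        seen.update(set(ips))  # count each IP at most once per site
    return {ip for ip, n in seen.items() if n >= min_sites}


reports = {
    "site-a.example": {"198.51.100.7", "203.0.113.20"},
    "site-b.example": {"198.51.100.7", "192.0.2.44"},
    "site-c.example": {"198.51.100.7", "192.0.2.44", "203.0.113.99"},
}
print(sorted(cross_site_suspects(reports)))  # ['192.0.2.44', '198.51.100.7']
```

Raising `min_sites` lowers the chance of blocking an innocent user behind the same NAT or CGNAT address. The trade-off is that fewer proxy IPs get caught.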

-

@hashbang your browser would still send multiple queries, for CSS and JS and images etc.

@bert_hubert True, I was just unsure if that is what you meant. Phew. My tab-saving strategy can continue, then.

-

@fazalmajid @brown I worded it carefully - we need to work together so we can spot "only once" queries across sites. The collateral damage is fine, will make people care about this stuff.

@bert_hubert @brown what, like spam traps deliberately placed in robots.txt that LLM crawlers will be unable to resist? I shudder to think about how much bandwidth would be required to synchronize the lookup tables of the LLMbot-RBLs, not to mention the query traffic.

As for the collateral damage, the ordinary user of an ISP has no control over whether their neighbor signs up with a residential proxy for a couple of bucks per month, nor any way to figure out what happened. As for pressuring ISPs, the media groups have been trying to do that for decades with copyright infringers, to no avail.
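Email RBLs (DNS blocklists) are queried over DNS. The client reverses the address, looks it up under the list's zone, and treats an address-record answer (conventionally in 127.0.0.0/8) as "listed". Below is a sketch of a per-IP lookup against a hypothetical LLMbot-RBL zone, `llmbots.example`, which is made up here. Every uncached check is one DNS query, which is the query traffic the post worries about.

```python
import ipaddress
import socket


def dnsbl_query_name(ip: str, zone: str) -> str:
    """Build the DNSBL lookup name: reversed octets (IPv4) or nibbles (IPv6) + zone."""
    addr = ipaddress.ip_address(ip)
    # reverse_pointer yields e.g. "1.2.0.192.in-addr.arpa"; swap that suffix for the zone.
    suffix = ".in-addr.arpa" if addr.version == 4 else ".ip6.arpa"
    return addr.reverse_pointer[: -len(suffix)] + "." + zone


def is_listed(ip: str, zone: str) -> bool:
    """True if the zone answers with an address record; a lookup failure means not listed."""
    try:
        socket.gethostbyname(dnsbl_query_name(ip, zone))
        return True
    except socket.gaierror:
        return False


print(dnsbl_query_name("192.0.2.1", "llmbots.example"))  # 1.2.0.192.llmbots.example
```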

@fazalmajid @brown the alternative is no services. The pain is here or there, and I'm fine with the pain being somewhere else.

@bert_hubert @brown what I am saying is an allowlist with some robust yet privacy-preserving authentication is more likely to succeed than a whack-a-mole blocklist.

-

@brown I’m fine with blocking residential proxy enablers.

@bert_hubert @brown Have you considered putting something like Anubis in front of your site, to make it too costly for AI-bots to visit your site? https://anubis.techaro.lol

@kerfuffle @brown I personally do not suffer from this problem since my site can deal with the traffic. The problem is more for projects that burn more CPU. I'm told Anubis is a solved problem for these scrapers - they have sufficient CPU power...

@bert_hubert @kerfuffle @brown As far as I'm aware Anubis still works just fine. However the reason it does is not that the needed CPU power would be too expensive for the scrapers, it's just that they haven't bothered to bypass it yet.

-

Here are 91,372 IP addresses pretending to be normal browsers, while sending a *single* query to me today. http://berthub.eu/tmp/singlequery-2026-04-26.csv - feel free to block them all!

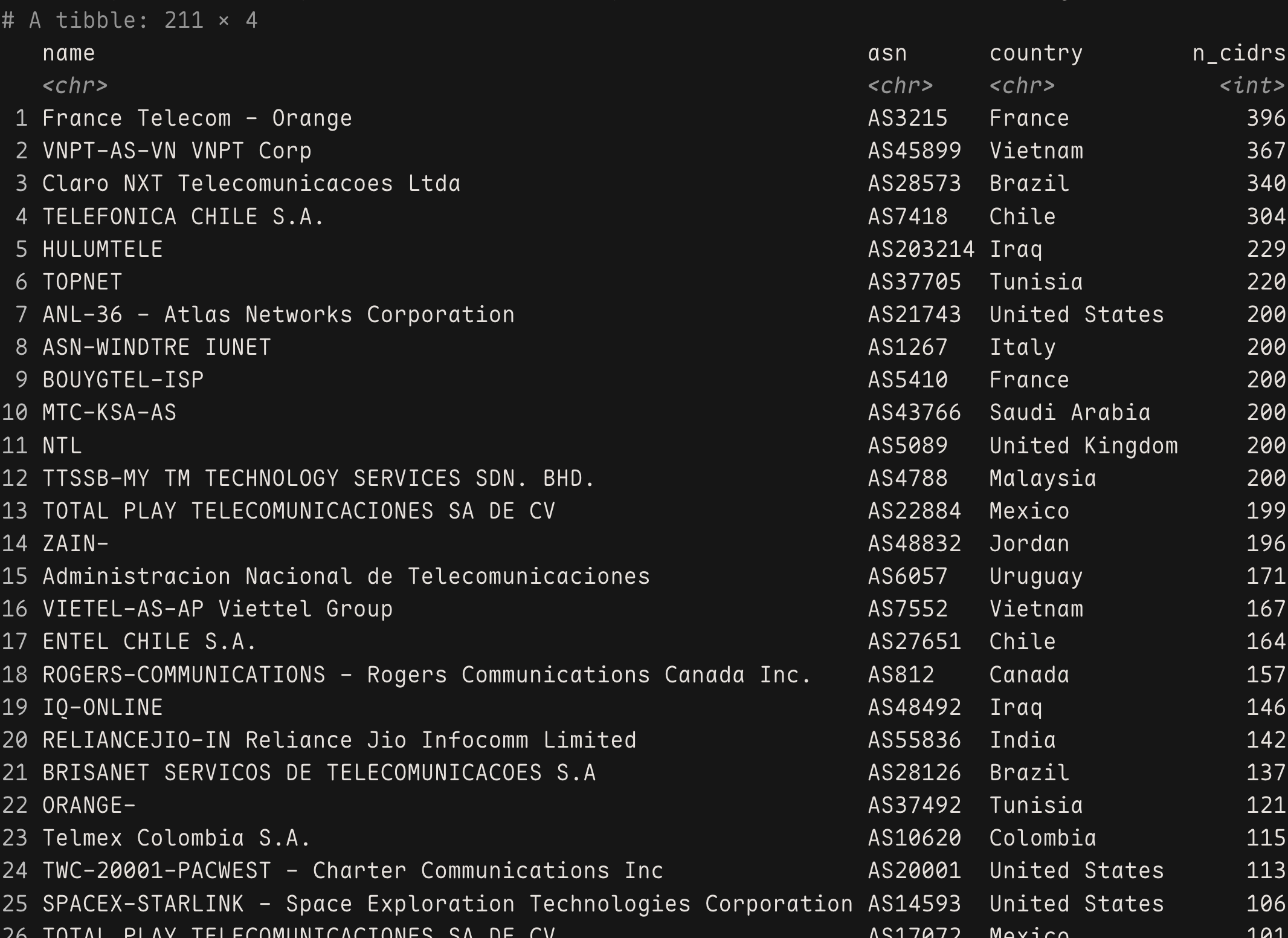

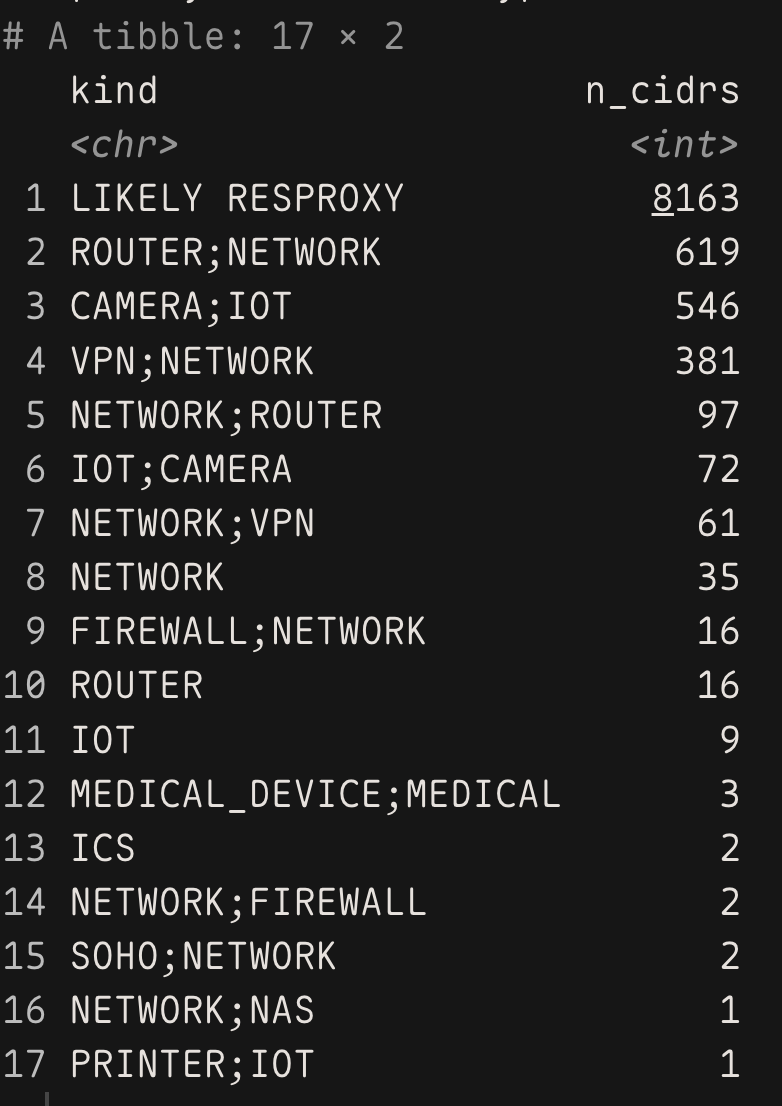

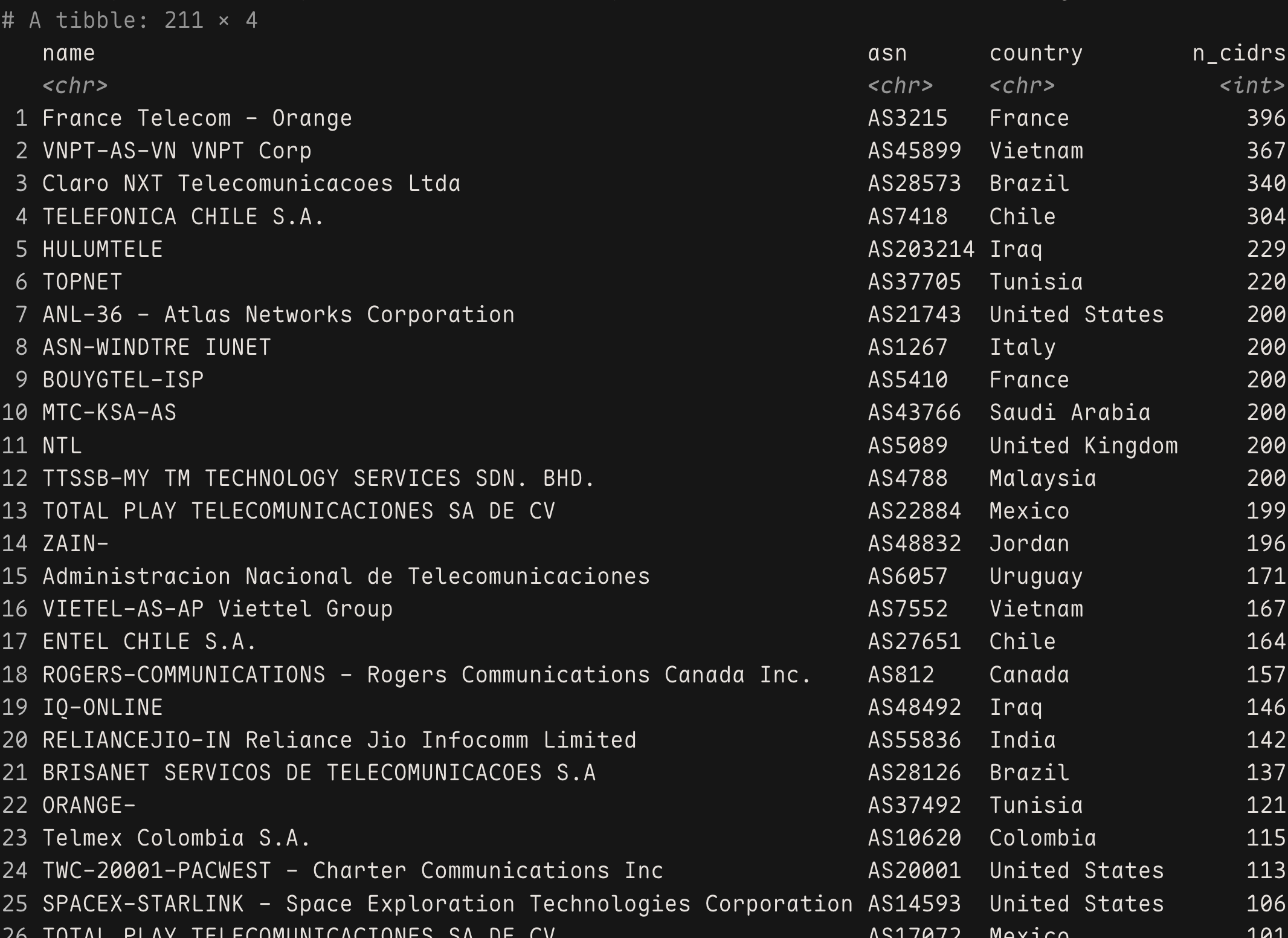

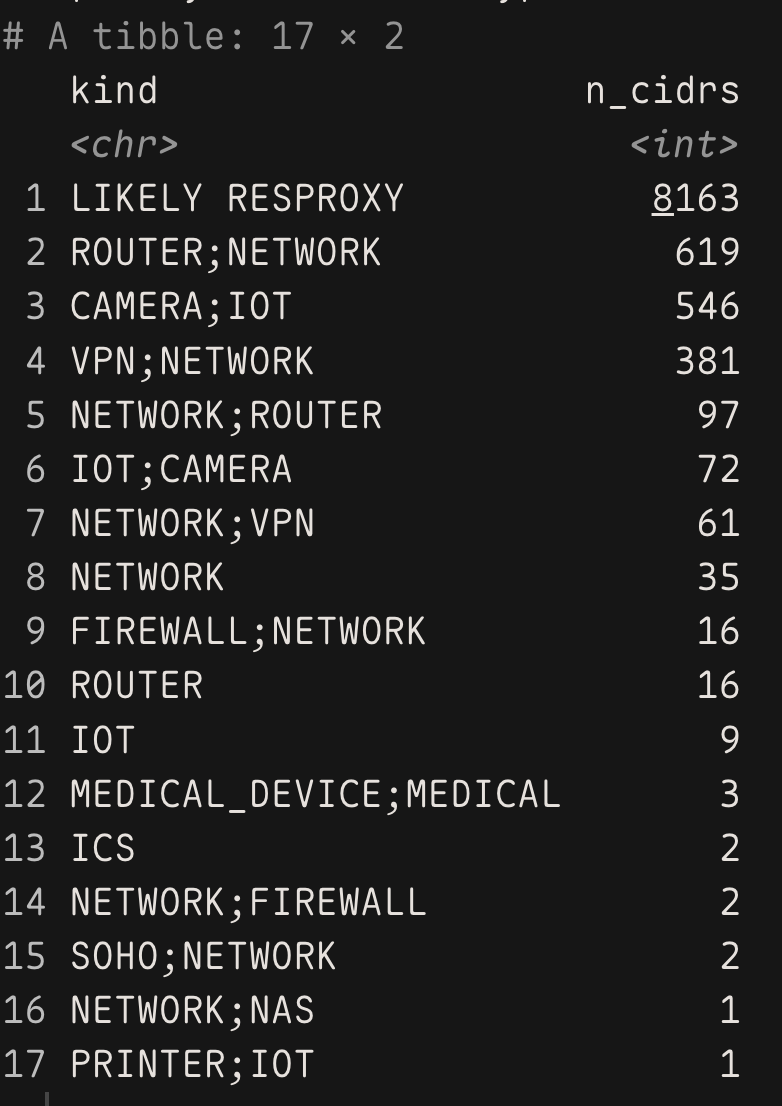

@bert_hubert oof. Tons of residential proxies, but also infected routers and IoT/ICS garbage. Mix of AI scrapers using res proxies and Mirai-adjacent. Worst of both worlds.

@hrbrmstr @bert_hubert huh, a little surprised SpaceX isn't higher on that list.