The first ten minutes I spent on social media this morning made me feel all kinds of things.

-

The first ten minutes I spent on social media this morning made me feel all kinds of things. Why is it that people who routinely use LLMs are so loud and brash and proud, making these tools appear as essential and inevitable?

A post by a dev whose app I use said something along the lines of: "no use exercising your coding skills, AI is too good now, you can't compete with it anyway".

Another post, by a user on an instance I try to engage with, read - literally: "tired of overthinking every decision?" and then disclosed he had created an AI that will "run a weighted decision matrix so you don't have to." In all seriousness.

What is this dystopian world where human qualities are devalued, critical thinking is discarded and surveillance capitalism is ignored at the altar of AI worship?

If they are loud and proud, maybe I can be too... but in the opposite direction.

This weekend I will start MIT's Missing Semester class (the 2020 lectures, so pre-AI) because in this brave new world hyping up techno-fascist LLMs, knowing the basics of code is essential IMHO.

So my March "project" will be a deep dive into MIT's Missing Semester and my April project will be off-grid mesh radio communication.

What about you, what are you doing to resist?

Special props to @emilymbender @cwebber and @tante for being outspoken on these issues... you're my beacons of hope

#NoAI

-

@elena @emilymbender @cwebber @tante

Everything possible. Engaging with other like-minded individuals helps, but AI feels like a massive landslide to me. Not great.

-

@elena@aseachange.com @emilymbender@dair-community.social @tante@tldr.nettime.org @cwebber@social.coop

I endeavour to make my own tools. Code, write and make graphics/images where I can, regardless of the quality of the result.

-

@elena @emilymbender @cwebber @tante

I completely understand your frustration. It’s true that AI has made the sheer act of "writing code" incredibly easy, but those claiming coding is dead are missing the entire point of software engineering. Writing code is merely the most basic layer of any development project.

If you look at how top-tier dev teams (like at Google) operate, the very first thing they do isn't writing code. It's System Architecture Design. How will the system run? How do the components interact to achieve the ultimate goal? They spend massive amounts of time deeply conceptualizing the core workflows.

Once that overarching architecture is established, everything else—regardless of what programming language, algorithm, or AI tool you use—is just flesh serving the skeleton. Code simply executes the workflow that has already been defined.

This is exactly why AI cannot replace human engineers right now. AI generates code based on your instructions. And those instructions are actually the distillation of your core architecture and thought process. The AI is just an executor; it cannot invent a complex workflow from thin air.

The scarcest and most valuable skill right now isn't writing syntax; it's the ability to conceptualize a complete project and its entire logical workflow from scratch. That is where the true human value lies, and that is the real core intellectual property (IP).

Learning the foundational layers like the MIT Missing Semester is exactly what builds this architectural mindset. Keep pushing!

-

@emilymbender@dair-community.social @cwebber@social.coop @tante@tldr.nettime.org @elena@aseachange.com my family is now LLM free

-

@elena @emilymbender @cwebber @tante

"no use exercising your coding skills, AI is too good now, you can't compete with it anyway"

"tired of overthinking every decision?"

It's a dumbing down and makes stupidity acceptable. Given that the code generated by AI needs to be checked and often still contains errors or unnecessary code, claiming that AI is so powerful that there's no need for skills is completely false. If you code using AI but don't understand the code, it's useless.

-

@LucasAegis @elena @emilymbender @cwebber @tante One of the things top-tier devs at Google are doing *today* is using GenAI to reverse-engineer the design and architecture of legacy systems. Big systems, 100k+ LoC, where the original authors have long ago moved to other teams and the expertise on the internals is missing. GenAI is very helpful in reviewing and synthesizing that legacy code, giving reasonable answers on system components and data flows. It needs to be an iterative process, of course, with a senior engineer really thinking about the hypotheses that the LLM is generating, validating against the code, and steering appropriately. But damn if it isn't effective at helping create real human understanding.

-

@zenkat @elena @emilymbender @cwebber @tante

To be fair, I’ve never been part of a massive, Google-scale team project, so I can't really speak to their specific internal workflows or the pain of navigating 100k+ lines of legacy code. That said, I have reverse-engineered my fair share of other projects, and I usually take a slightly different approach. I actually never try to decipher or crack their original code.

Instead, I focus purely on the "physical phenomena" of the system. I look at what exact observable behavior or state change happens at each critical node and branch. Once I map out those phenomena, I just ask myself: "If I needed to achieve this exact same phenomenon today, how would I architect it from scratch?"

It's fascinating how different scales of engineering require entirely different survival tactics!

-

@elena @emilymbender @cwebber @tante

Remember when people valued the right to think more highly than the convenience of not having to think and just living with the consequences?

-

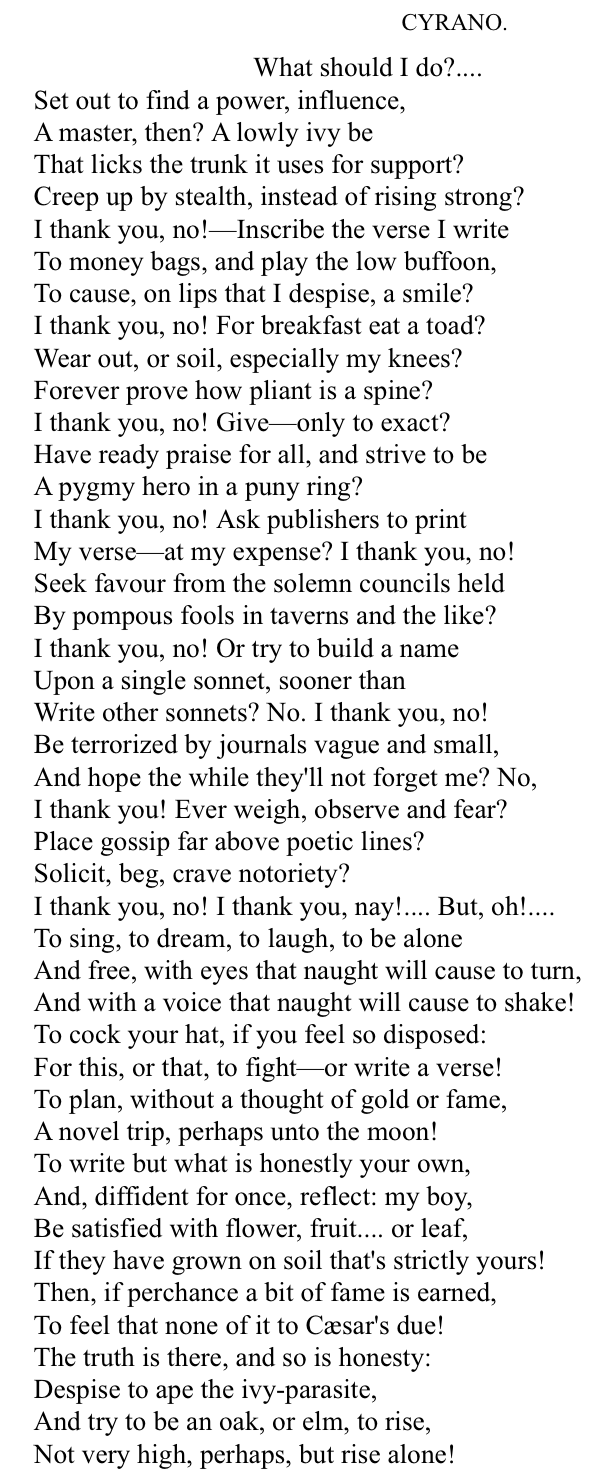

@elena @emilymbender @cwebber @tante I think I’ll do some laundry, play with my kiddo, and reread Rostand’s Cyrano - whom I continue to find to be a good guide when facing ethical questions in technology and conflict.