Happy Mainframe Day

-

@mappingsupport @aka_pugs What sort of terminals? Teletypes? And they had leased lines to the university?

360/91 was the high-end mainframe at the time; that thing could have megabytes of RAM made by people manually stringing cores on wires.

@mike805 @mappingsupport Princeton's 360 was purely batch - no terminals other than the operator console (IBM 3215?). But there was a 370 in the same room running time-sharing. IBM's native terminals were the 3277 (weird-ass CRT) and the 2741 (printer/keyboard based on the Selectric typewriter). But as time went on, ASCII CRTs were dominant.

And the model 91 had a whopping 2 (two!) megabytes of core.

-

@aka_pugs @mappingsupport So you could use ASCII character terminals with a mainframe?

I know the 3270s were more like a browser where you got a whole screenful at a time, and the response was only sent back when you pressed enter or a function key.

I ran into one of those IBM block terminals at a university library once, and it's still one of the fastest interactive query systems I've ever seen. They had that optimized.

-

Happy Mainframe Day!

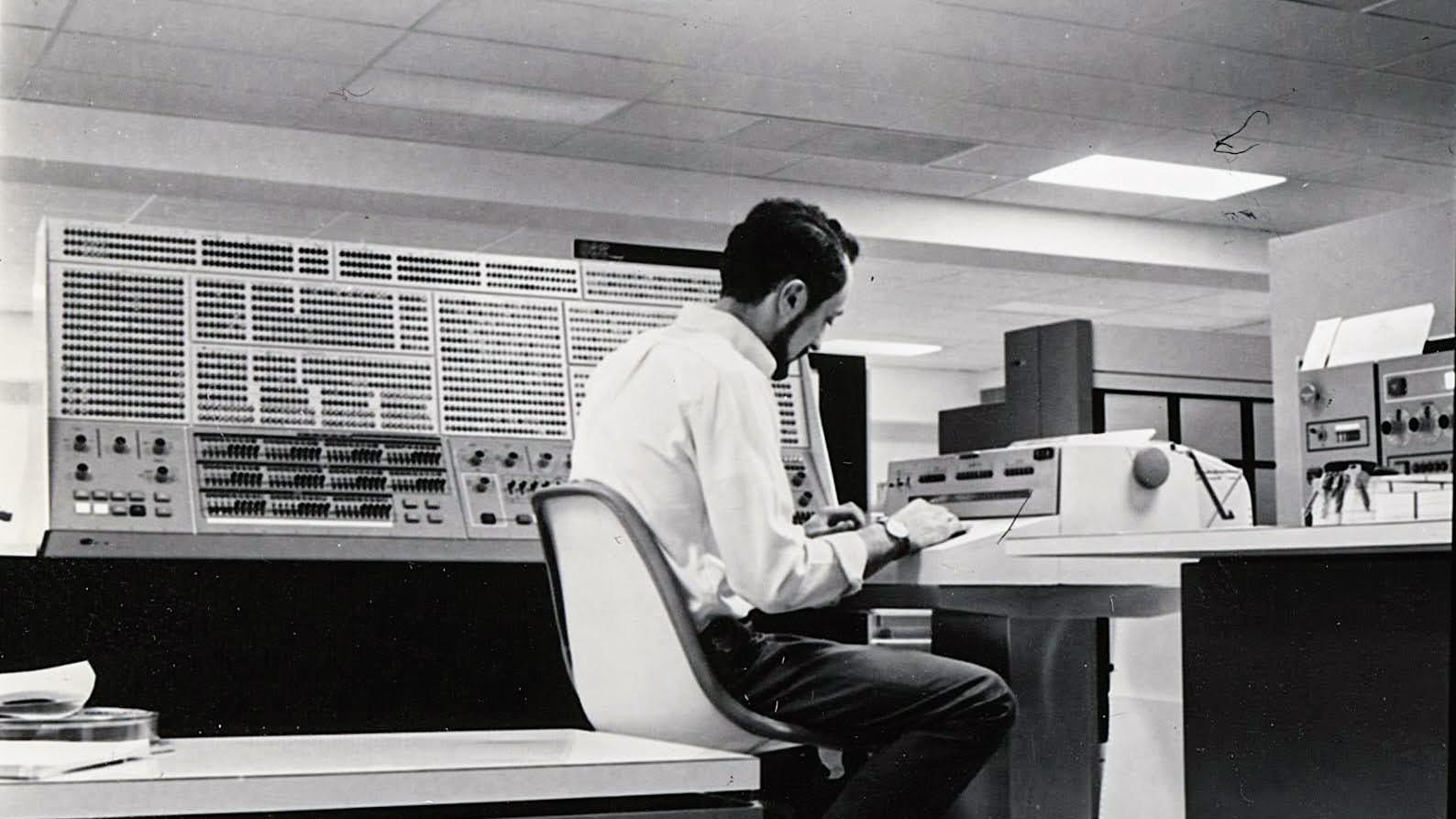

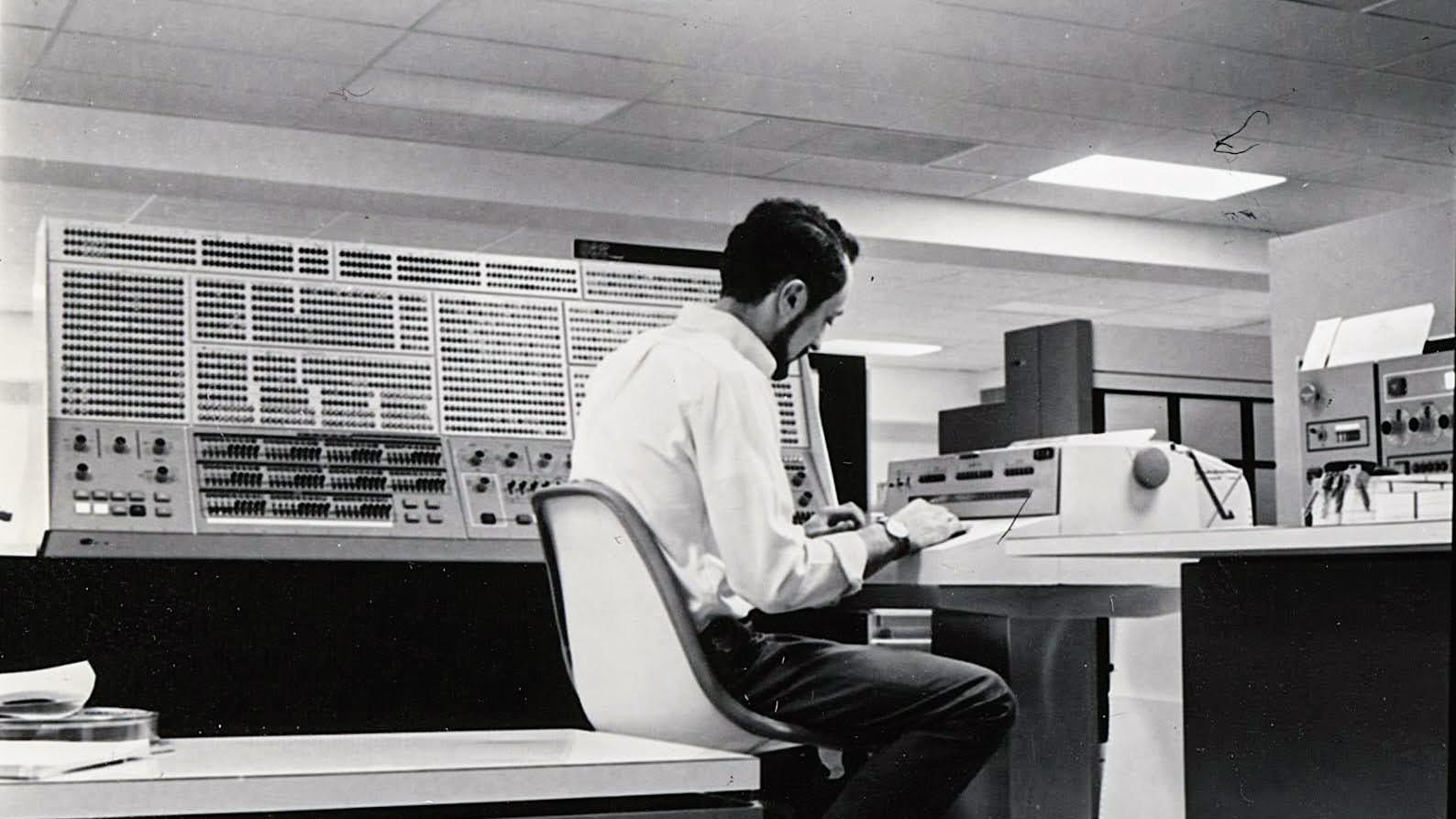

OTD 1964: IBM announces the System/360 family. 8-bit bytes ftw! Shown: operator at the console of Princeton's IBM System/360 Model 91.

@aka_pugs Really was the beginning of the modern era of computing, starting with the normalisation of 8-bit bytes and character-addressable architecture.

Well, that's all true so long as we don't mention EBCDIC.

-

@markd They had ASCII mode, but the peripherals never got the memo.

-

@aka_pugs Only 8 bits per word? That will never be enough for anyone! 18 bit words like the PDP-1 or 12 bit words like the PDP-8, now that's serious computing! Also, 8 bit words make octal representation quite pointless…
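The octal quip comes down to digit alignment: an octal digit covers 3 bits and a hex digit covers 4, so only hex digits tile an 8-bit byte exactly. A small Python sketch (the `per_byte` helper is hypothetical) that formats a 16-bit value whole and byte by byte:

```python
def per_byte(word: int, width_bytes: int, fmt: str) -> str:
    """Format each byte of `word` separately (most significant first) and concatenate."""
    parts = []
    for i in reversed(range(width_bytes)):
        parts.append(format((word >> (8 * i)) & 0xFF, fmt))
    return "".join(parts)

word = 0x41C1  # a 16-bit value made of the bytes 0x41 and 0xC1

# Hex: 4 bits per digit divides 8, so the whole-word digits are just the byte digits.
print(format(word, "04x"), per_byte(word, 2, "02x"))  # 41c1 41c1

# Octal: 3 bits per digit does not divide 8, so digits straddle byte boundaries.
print(format(word, "o"), per_byte(word, 2, "03o"))    # 40701 101301
```

With the PDP-8's 12-bit or the PDP-1's 18-bit words the situation reverses: both widths are multiples of 3, so a word is exactly 4 or 6 octal digits.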

-

@aka_pugs @markd ASCII mode only affected how some of the decimal arithmetic instructions behaved. For the printers, the character set was pretty arbitrary, and the Translate instruction would have allowed for easy compatibility no matter what. The real EBCDIC issue was the card reader; per Fred Brooks, IBM wanted to go with ASCII, but their big data processing customers talked them out of it. But that's a story for another post. (And 8-bit bytes? Brooks felt that the choice of 8-bit bytes and 32-bit words was one of the most important innovations in the S/360 line. It wasn't a foregone conclusion: many scientific computing folks really wanted to stick with 36-bit words for extra precision. IBM ran *lots* of simulations to assure everyone that 32-bit floating point was OK.)

Why yes, in grad school I did take computer architecture from Brooks…
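The Translate point is easy to see in miniature: a translate is just a 256-byte lookup table indexed by each input byte. The sketch below builds such a table in Python and applies it with `bytes.translate`, which has the same table-driven shape. It assumes Python's `cp037` codec as a stand-in for the original S/360 EBCDIC (cp037 is a later code page), and `build_tr_table` and `tr` are hypothetical names.

```python
def build_tr_table(codec: str = "cp037", fill: int = ord("?")) -> bytes:
    """Build a 256-byte translate table from an EBCDIC code page to ASCII.

    Entry i is the ASCII code for EBCDIC code point i, or `fill` when the
    character has no 7-bit ASCII equivalent.
    """
    table = bytearray()
    for code in range(256):
        ch = bytes([code]).decode(codec, errors="replace")
        table.append(ord(ch) if ord(ch) < 128 else fill)
    return bytes(table)

EBCDIC_TO_ASCII = build_tr_table()

def tr(data: bytes, table: bytes) -> bytes:
    """Table-driven byte translation, in the spirit of the S/360 TR instruction."""
    return data.translate(table)

record = "IBM SYSTEM/360".encode("cp037")   # EBCDIC bytes, as read from a card image
print(record.hex())
print(tr(record, EBCDIC_TO_ASCII))          # b'IBM SYSTEM/360'
```

Swapping in a different 256-byte table is all it takes to target another character set, which is why the printer side was not the hard part.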

-

@markd a former colleague of mine used to joke that EBCDIC was the first strong crypto algorithm to be exported from the US

-

@SteveBellovin @aka_pugs If you were on the non-EBCDIC side of the fence you got the impression that IBM sales pushed EBCDIC pretty hard as a competitive advantage - even if their engineering covertly preferred ASCII.

The 32-bit word must have been a harder sell for the blue suits, since the competition was selling 60-bit and 36-bit machines, among other oddballs.

Fortunately the emergence of commercial customers marked the declining relevance of scientific computing... Did IBM get lucky or were they prescient?

But yeah, the S/360 definitely marked the end of the beginning of computing in multiple ways.

-

@markd @SteveBellovin IBM had a huge lead in commercial data processing because of their punch card business. And that world did not care about floating point. The model 91 was an ego-relief product, not a real business. IMO.

Data processing and HPC markets never converged - until maybe AI.

-

@aka_pugs @markd @SteveBellovin

But still, FORTRAN IV got lots of use, especially on the 360/50…85 in universities & R&D labs. I suspect not much on the /30 or /40.

I still think of the 360 as a huge bet to consolidate the chaos of the 701…7094 36-bit path and the 702…7074 & 1401 variable-string paths.

And for fun: I asked both Gene Amdahl & Fred Brooks why they used 24-bit addressing, ignoring the high 8 bits… which caused a lot of problems/complexity later.

A: to save hardware on the 360/30, with its 8-bit data paths.

-

@JohnMashey @markd @SteveBellovin I love this quote from Boeing about the 360.

See https://drive.google.com/file/d/1Zb6s_Ti7ON-6DNGg8VB2VvLSbh72_bgM/view?usp=sharing

-

@aka_pugs @JohnMashey @markd @SteveBellovin Huh, interesting comment on hex floating point. I’ve long thought that a controversial choice. I remember hearing an IBM numerical analyst claim that the hex floating point was “cleaner” than competing formats (this predated IEEE 754) but much literature today echoes the criticism given here that the hex format effectively shortens the significand.
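A minimal sketch of the 32-bit ("short") S/360 hex floating-point format. It assumes the layout of 1 sign bit, a 7-bit excess-64 exponent of 16, and a 24-bit fraction, and makes the criticism concrete: normalization only guarantees a nonzero leading *hex* digit, so up to three leading fraction bits can be zero and the usable precision wobbles between 21 and 24 bits. The helper names are hypothetical, and the encoder truncates and ignores overflow/underflow.

```python
def to_ibm_short(x: float) -> int:
    """Encode x as a 32-bit hex float: sign | 7-bit excess-64 exponent | 24-bit fraction."""
    if x == 0.0:
        return 0
    sign = 0x80000000 if x < 0 else 0
    m, exp = abs(x), 0
    # Normalize so 1/16 <= m < 1, i.e. the leading hex digit of the fraction is nonzero.
    while m >= 1.0:
        m /= 16.0
        exp += 1
    while m < 1.0 / 16.0:
        m *= 16.0
        exp -= 1
    fraction = int(m * (1 << 24))  # truncate to 24 fraction bits
    return sign | ((exp + 64) << 24) | fraction

def from_ibm_short(word: int) -> float:
    """Decode a 32-bit hex float back to a Python float."""
    sign = -1.0 if word & 0x80000000 else 1.0
    exp = ((word >> 24) & 0x7F) - 64
    fraction = (word & 0xFFFFFF) / float(1 << 24)
    return sign * fraction * 16.0 ** exp

def effective_bits(word: int) -> int:
    """Significant bits actually available: 24 minus the fraction's leading zeros."""
    return (word & 0xFFFFFF).bit_length()

for v in (1.0, 2.0, 4.0, 8.0):
    w = to_ibm_short(v)
    print(v, hex(w), effective_bits(w))
# 1.0 0x41100000 21
# 2.0 0x41200000 22
# 4.0 0x41400000 23
# 8.0 0x41800000 24
```

A binary format with a hidden leading 1, by contrast, keeps the same precision for every value.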

-

@JohnMashey @aka_pugs @markd @SteveBellovin sounds like Motorola copied their reasoning years later, with the MC68000.

For us UMich folks, the 360/67 was the machine that mattered...

-

@stuartmarks @aka_pugs @JohnMashey @markd There are many different points here to respond to; let me first address the EBCDIC/ASCII issue, and why IBM's sales reps pushed it.

As @aka_pugs pointed out, IBM had a huge commercial data processing business dating back to the pre-computer punch card days. (IBM was formed by the merger of several companies, including Hollerith's own.) Look at the name of the company: International *Business* Machines. You could do amazing things with just punch card machines; Arthur Clarke's classic 1946 story Rescue Party (https://en.wikipedia.org/wiki/Rescue_Party) referred in passing to "Hollerith analyzers". But punch cards had a crucial limit: your life was much better if all of the data for a record fit onto a single 80-column card. This meant that space was at a premium, so it was common to overpunch numeric columns, e.g., age, with a "zone punch" in the 11 or 12 rows. Thus, a card column with just a punch in the 1 row was the digit 1, but if it had a row 12 punch as well, it was either the letter A *or* the digit 1 plus a binary signal for whatever was encoded by the row 12 punch. The commercial computers of the 1950s, which used 6-bit "bytes" to hold decimal digits in BCD (binary-coded decimal), mirrored this: the two high-order bits could carry encoded data.
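EBCDIC kept that card structure visible: each byte splits into a zone nibble and a digit nibble, so "A" is the 12-zone plus digit 1, "J" is the 11-zone plus digit 1, and a plain "1" has no zone. The sketch below shows the split and a zoned-decimal encoder that puts the sign in the last digit's zone. It assumes Python's `cp037` code page as a stand-in for S/360 EBCDIC and the common zoned-decimal convention (C = positive, D = negative, F = unsigned); all helper names are hypothetical.

```python
ZONES = {0xC: "12-zone", 0xD: "11-zone", 0xE: "0-zone", 0xF: "no zone (plain digit)"}

def split_zone_digit(ch: str, codec: str = "cp037") -> tuple[int, int]:
    """Return the (zone, digit) nibbles of one character's EBCDIC byte."""
    (byte,) = ch.encode(codec)
    return byte >> 4, byte & 0x0F

def join_zone_digit(zone: int, digit: int, codec: str = "cp037") -> str:
    """Rebuild a character from a zone nibble and a digit nibble."""
    return bytes([(zone << 4) | digit]).decode(codec)

def zoned_decimal(n: int) -> str:
    """Encode an integer as zoned decimal, overpunching the sign into the last zone."""
    digits = str(abs(n))
    out = [join_zone_digit(0xF, int(d)) for d in digits[:-1]]
    out.append(join_zone_digit(0xD if n < 0 else 0xC, int(digits[-1])))
    return "".join(out)

for ch in "A1J":
    zone, digit = split_zone_digit(ch)
    print(ch, hex(zone), digit, ZONES[zone])

print(zoned_decimal(123), zoned_decimal(-123))  # 12C 12L
```

The same byte reads as a letter or as a signed digit depending on context, which is the card-column ambiguity in the post above.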

The essential point here is that with BCD, it was possible to do context-free decomposition, in a way that you couldn't do with ASCII. The IBM engineers wanted the S/360 to be an ASCII machine, but the big commercial customers pushed back very hard. IBM bowed to the commercial reality (while still providing the ASCII bit for dealing with "packed decimal" conversions), and marketed the machine that way: "You don't have to worry about your old data; because of EBCDIC (Extended BCD Interchange Code), your old files are still good." That's why the sales people talked it up: they saw this as a major commercial advantage.

-

@hyc @aka_pugs @markd @SteveBellovin

16 MB addressing in S/360 & 68K, with the high bits ignored, meant clever programmers used the high 8 bits for flags, as I did when writing ASSIST in 1970 (still running as late as 2015, likely still).

The 68000-to-68020 move from 24- to 32-bit addressing caused trouble for Mac II software.
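Here is a minimal sketch of why the flag trick worked and then broke: the same 32-bit pointer word resolves to the intended location when the hardware decodes only 24 address bits, and to a different, bogus location once it decodes all 32. `make_tagged`, `effective_address`, and the toy `memory` dict are hypothetical.

```python
ADDR_BITS_24 = 24  # S/360 and 68000: only the low 24 bits of a pointer word address memory

def make_tagged(addr: int, flags: int) -> int:
    """Pack 8 flag bits into the high byte of a 32-bit pointer word."""
    if not (0 <= addr < (1 << ADDR_BITS_24) and 0 <= flags <= 0xFF):
        raise ValueError("address must fit in 24 bits and flags in 8 bits")
    return (flags << ADDR_BITS_24) | addr

def effective_address(word: int, address_bits: int) -> int:
    """The address the hardware actually uses, given how many low bits it decodes."""
    return word & ((1 << address_bits) - 1)

memory = {0x001000: "record"}        # toy stand-in for main storage
ptr = make_tagged(0x001000, 0x80)    # flag byte 0x80 in the "unused" high byte

print(memory.get(effective_address(ptr, 24)))  # record (flags ignored)
print(memory.get(effective_address(ptr, 32)))  # None (flags are now address bits)
```

Nothing in the tagged code itself changes between the two cases; only the width of the address decode does, which is what made the breakage so hard to find.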

I wrote about this in BYTE in 1991; see the section

“The mainframe, minicomputer, and microprocessor”

https://www.bourguet.org/v2/comparch/mashey-byte-1991

MIPS R4000 was released later in 1991. It translated 40 bits, but trapped if the high-order bits were not all 0s or all 1s.

-

@hyc @aka_pugs @markd @SteveBellovin

Some users who knew about R4000 wanted to use the high bits as tag bits. I said NO!
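Here is a simplified sketch of the stricter rule from the R4000 post. It treats the low 40 bits of a 64-bit address as the translated field and traps unless every bit above it is 0 or every bit above it is 1. This is a reading of the rule as stated in the thread, not the R4000's full address-error logic. `check_address` and `AddressError` are hypothetical names. The sketch shows why tag bits and this rule do not mix.

```python
VA_BITS = 40  # width of the translated field, per the post

class AddressError(Exception):
    """Raised when the untranslated high-order bits are not all 0s or all 1s."""

def check_address(va: int) -> int:
    """Trap on non-uniform high bits; otherwise return the field handed to translation."""
    high = va >> VA_BITS                   # the 24 bits above the translated field
    all_ones = (1 << (64 - VA_BITS)) - 1
    if high not in (0, all_ones):
        raise AddressError(hex(va))
    return va & ((1 << VA_BITS) - 1)

print(hex(check_address(0x1000)))               # 0x1000
print(hex(check_address(0xFFFFFF0000001000)))   # 0x1000 (high bits all 1s: allowed)

tagged = (0x80 << 56) | 0x1000                  # a tag byte in the top of the word
try:
    check_address(tagged)
except AddressError as e:
    print("trapped:", e)                        # trapped: 0x8000000000001000
```

Because any stray tag makes the address fault immediately, software cannot quietly depend on the high bits, so widening the translated field later does not break it.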

-

@JohnMashey @hyc @aka_pugs @markd Brooks said that the 24-bit address decision was an economic one. But he also recognized, and stated, that "every successful architecture runs out of address space." (Aside: that's one reason why IPv6 addresses are 128 bits instead of 64—I and a few others insisted on it, and I specifically quoted Brooks' observation.) But there was one really crucial error in the S/360 architecture: the Load Address instruction was defined by the architecture to zero the high-order byte, making it impossible to use that instruction on 32-bit address machines. Since LA was the most common instruction used, per actual hardware traces, this was a serious issue. (It wasn't only used for addresses; indeed, many of the instances were to provide what Brooks called the "indispensable small positive constant".) The I/O architecture was also 24-bit, but that didn't bother the architects—they figured it would be replaced with something smarter later on anyway.

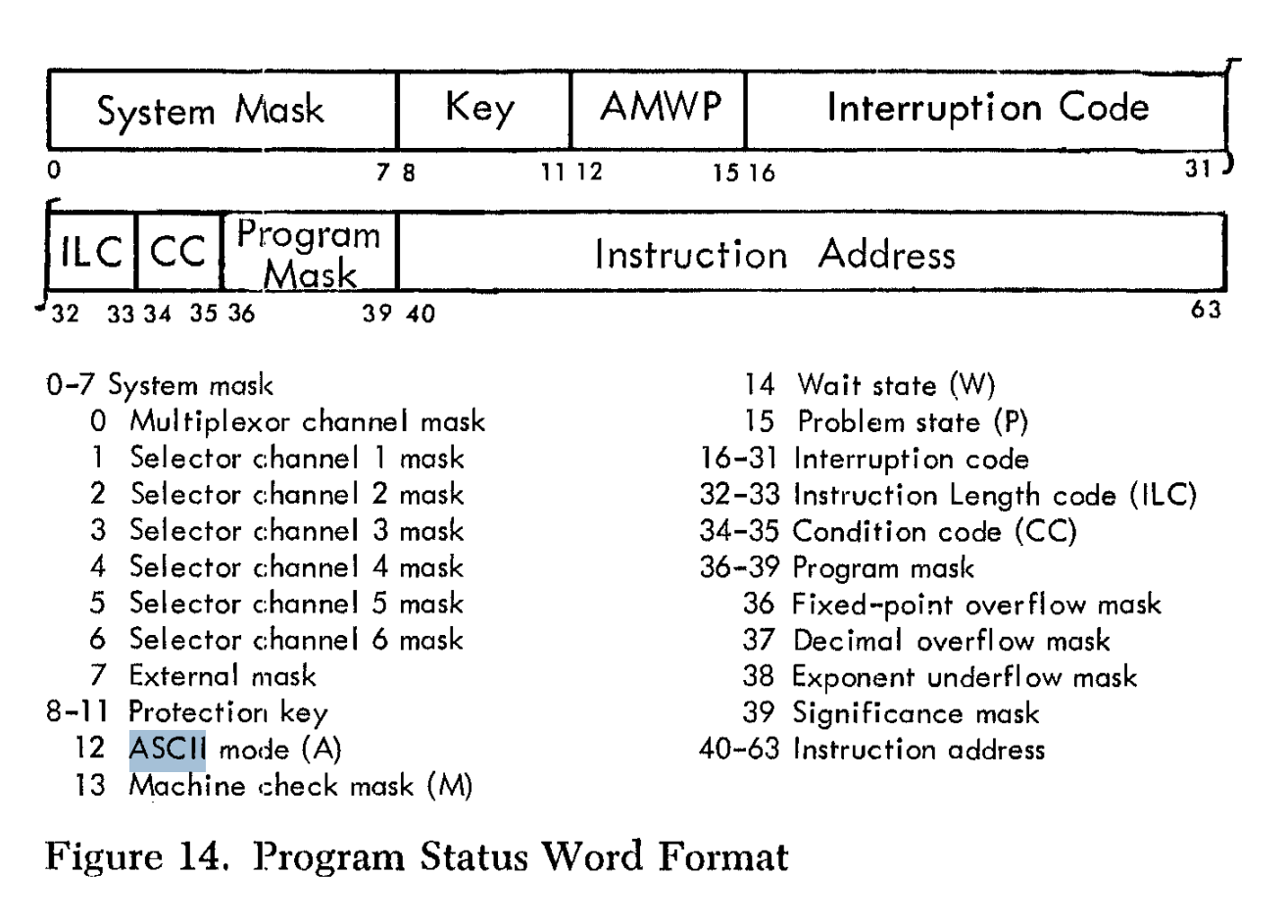

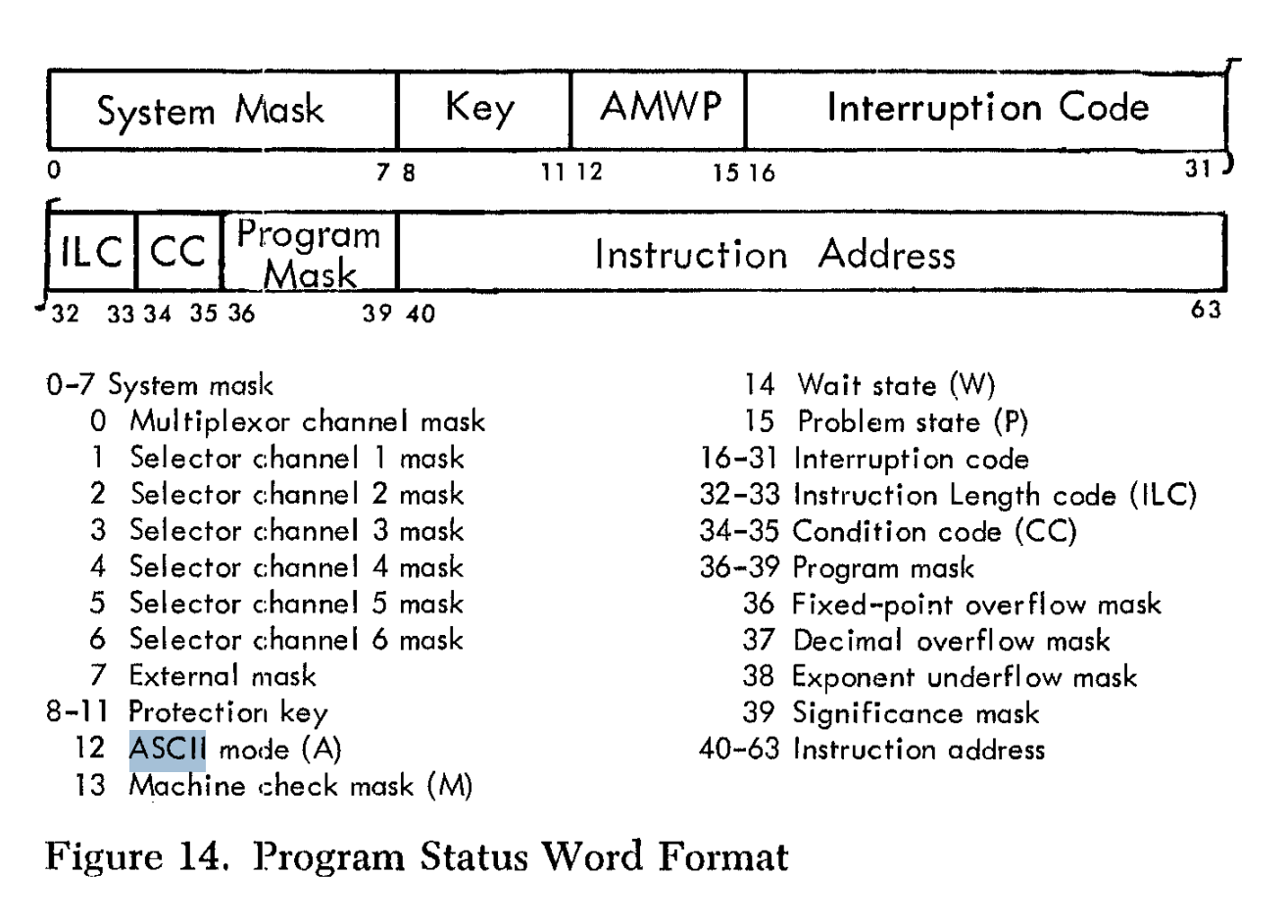
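To make the Load Address problem concrete, here is a minimal model of LA R1,D2(X2,B2) as described above: index plus base plus a 12-bit displacement, with the high-order byte of the result zeroed. It also assumes the usual S/360 rule that register number 0 in the X2 or B2 field means "no register." `load_address` and the `regs` list are hypothetical. This is not an emulator.

```python
def load_address(regs: list[int], r1: int, d2: int, x2: int, b2: int) -> None:
    """Model LA R1,D2(X2,B2): R1 <- (X2 + B2 + D2) with the high-order byte zeroed."""
    if not 0 <= d2 <= 0xFFF:
        raise ValueError("displacement is a 12-bit unsigned field")
    index = regs[x2] if x2 else 0   # register 0 as index means "no index"
    base = regs[b2] if b2 else 0    # register 0 as base means "no base"
    regs[r1] = (index + base + d2) & 0x00FFFFFF

regs = [0] * 16

load_address(regs, 1, 5, 0, 0)      # LA 1,5(0,0): the small-positive-constant idiom
print(regs[1])                      # 5

regs[2] = 0x01000000                # an address just past 16 MB
load_address(regs, 3, 8, 0, 2)      # LA 3,8(0,2)
print(hex(regs[3]))                 # 0x8: the high byte is discarded
```

The first use is harmless on any machine. The second one cannot be made correct for wider addresses without changing what the instruction is defined to do.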

Update: I forgot about the Branch and Link instructions, which were used for subroutine calls. Per the Principles of Operation manual, "The rightmost 32 bits of the PSW, including the updated instruction address, are stored." The high-order 8 bits of the PSW included the "condition code", used for conditional branches, and the "program mask", which could be and was changed by application programs to disable some software-related interrupts, e.g., fixed-point overflow. This instruction was also not 32-bit-address compatible. (In Blaauw and Brooks, they note that extension to 32-bit addressing was seen as desirable and necessary from the very beginning.)
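Here is a sketch of the word BAL leaves in the link register. It assumes the S/360 layout of the rightmost 32 PSW bits: a 2-bit instruction-length code, the 2-bit condition code, the 4-bit program mask, then the 24-bit instruction address. The helper names are hypothetical. The top byte is already occupied, so a return address wider than 24 bits has nowhere to go.

```python
def bal_link_word(ilc: int, cc: int, program_mask: int, next_addr: int) -> int:
    """Pack ILC (2 bits) | condition code (2) | program mask (4) | address (24)."""
    return (ilc << 30) | (cc << 28) | (program_mask << 24) | (next_addr & 0xFFFFFF)

def split_link_word(word: int) -> dict[str, int]:
    """Unpack the fields again, as a callee or debugger would see them."""
    return {
        "ilc": (word >> 30) & 0b11,
        "cc": (word >> 28) & 0b11,
        "program_mask": (word >> 24) & 0xF,
        "address": word & 0xFFFFFF,
    }

# ILC 2 for a 4-byte BAL, condition code 1, one program-mask bit set (assumed: fixed-point overflow)
link = bal_link_word(ilc=2, cc=1, program_mask=0b1000, next_addr=0x00A004)
print(hex(link))                           # 0x9800a004
print(split_link_word(link))

# A return address above 16 MB loses its high bits when packed.
print(hex(split_link_word(bal_link_word(2, 0, 0, 0x01000004))["address"]))  # 0x4
```

A caller that returns through this register gets back only the low 24 bits, which is why BAL had the same problem as LA.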

-

@SteveBellovin @hyc @aka_pugs @markd

Agreed, I wrote a lot of S/360 assembly code & LA was very useful.

Although it was a software convention rather than an architectural one, using the high-order bit to mark the last pointer in an argument list persisted.

On economics, I do wonder how much money the 360/30-based decision cost IBM in the long term, in terms of software/hardware complexity.
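The argument-list convention can be sketched like this: the caller passes a list of 32-bit address words and sets the high-order bit on the last one, and the callee walks the list until it sees that bit. The function names are hypothetical. The sketch assumes only that argument addresses leave the high-order bit free.

```python
END_OF_LIST = 0x80000000  # high-order bit flags the last address in the list

def build_arg_list(addresses: list[int]) -> list[int]:
    """Caller side: copy the argument addresses and flag the final one."""
    if not addresses:
        raise ValueError("need at least one argument address")
    if any(a & END_OF_LIST or a < 0 for a in addresses):
        raise ValueError("addresses must leave the high-order bit free")
    words = list(addresses)
    words[-1] |= END_OF_LIST
    return words

def read_arg_list(words: list[int]) -> list[int]:
    """Callee side: collect addresses until the word with the high-order bit set."""
    result = []
    for w in words:
        result.append(w & ~END_OF_LIST & 0xFFFFFFFF)
        if w & END_OF_LIST:
            break
    return result

args = build_arg_list([0x1000, 0x2000, 0x3000])
print([hex(w) for w in args])                 # ['0x1000', '0x2000', '0x80003000']
print([hex(a) for a in read_arg_list(args)])  # ['0x1000', '0x2000', '0x3000']
```

Because the flag lives in the pointer word itself, the callee needs no separate count. That is also why the convention depends on addresses never using that bit.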