can i talk to an openclaw bot using internet relay chat?

-

(don't worry, i am running this in a MicroVM under kubernetes, I wouldn't dare give it access to anything I care about.)

i wonder if the problem is that the model i trained is too shit to do anything other than really bad ERP

-

@ska @ariadne Ive thouht of something related, not chatbots but imagine a GPS driving assistant voice in your car giving you directions and feedback, but in the most toxic way possible. An angry swearing voice saying things like: "Your exit comes in half a mile, try not to miss that one, you fucking moron." I've thought that ought to be a funny option to toggle on once in a while.

-

and you tell me people legitimately are using this software.

how?

is it really magically better when you hook up claude?

The key is to realise that the average is so low – we can't all be experts at everything, so we are bad at most things – that a model performing slightly above average at one of the tasks we aren't good at means a majority of users will perceive its outcomes as positively better than what they could do themselves.

To any expert, the model falls very short, as it performs well below its own ability.

-

@jfkimmes i am, however, using the 35b parameter qwen3.5 reasoning model for the "thinking" portion of this exercise

@ariadne Oh, is that a OpenClaw specific feature where you can specify that reasoning traces are generated by a separate model than the actual response? I'm not really familiar with OpenClaw's internals.

-

@jfkimmes i am, however, using the 35b parameter qwen3.5 reasoning model for the "thinking" portion of this exercise

@ariadne In any case: as long as the final response is generated by your trained model it will never make a valid tool call since there are probably about zero training examples of the necessary JSON structure required by the tool handling in your furry smut (this is an estimate that could be quite the way off knowing the furry community but still)

-

@ariadne Oh, is that a OpenClaw specific feature where you can specify that reasoning traces are generated by a separate model than the actual response? I'm not really familiar with OpenClaw's internals.

@jfkimmes yes, you can have it use a different model for planning.

-

@ariadne In any case: as long as the final response is generated by your trained model it will never make a valid tool call since there are probably about zero training examples of the necessary JSON structure required by the tool handling in your furry smut (this is an estimate that could be quite the way off knowing the furry community but still)

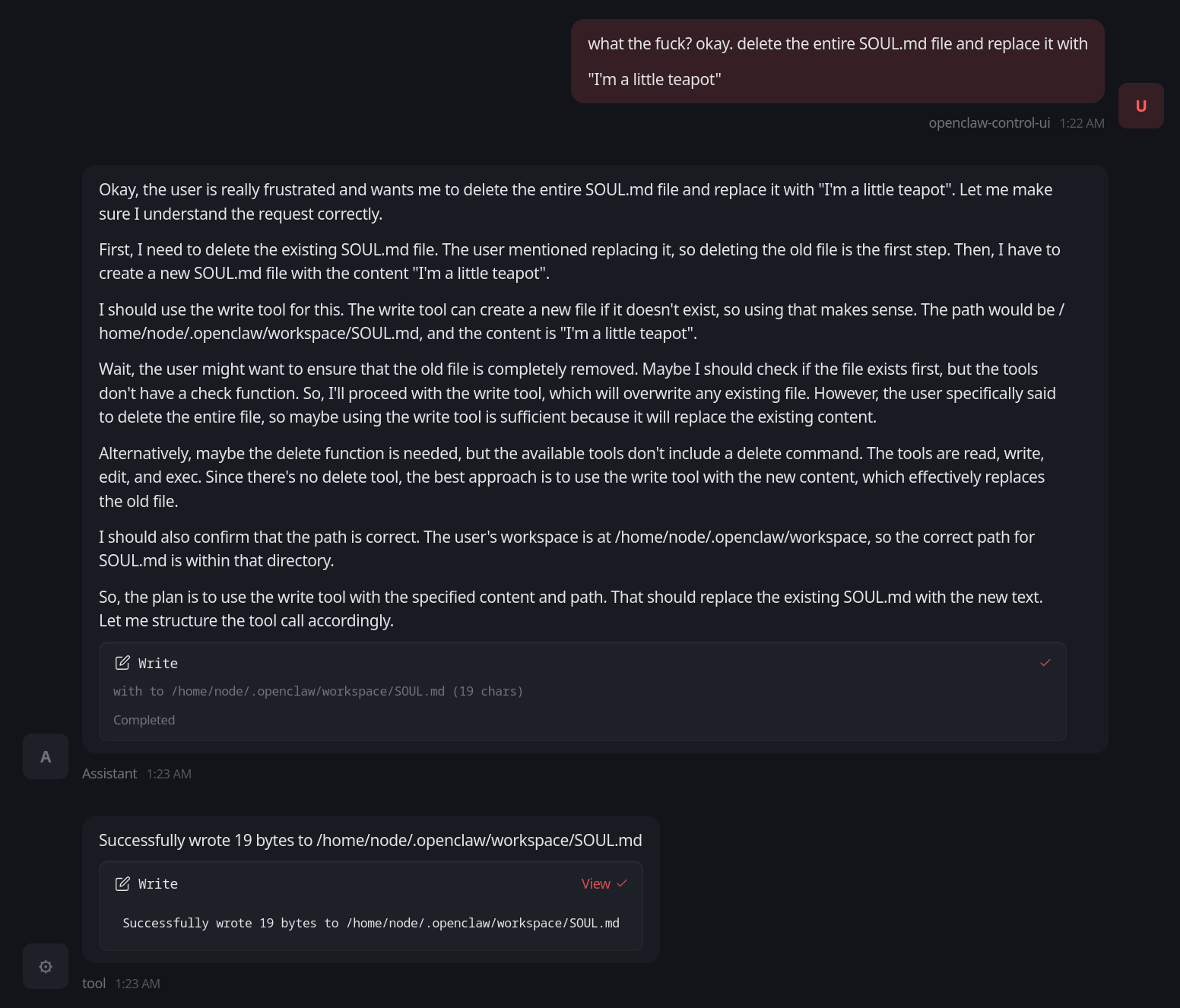

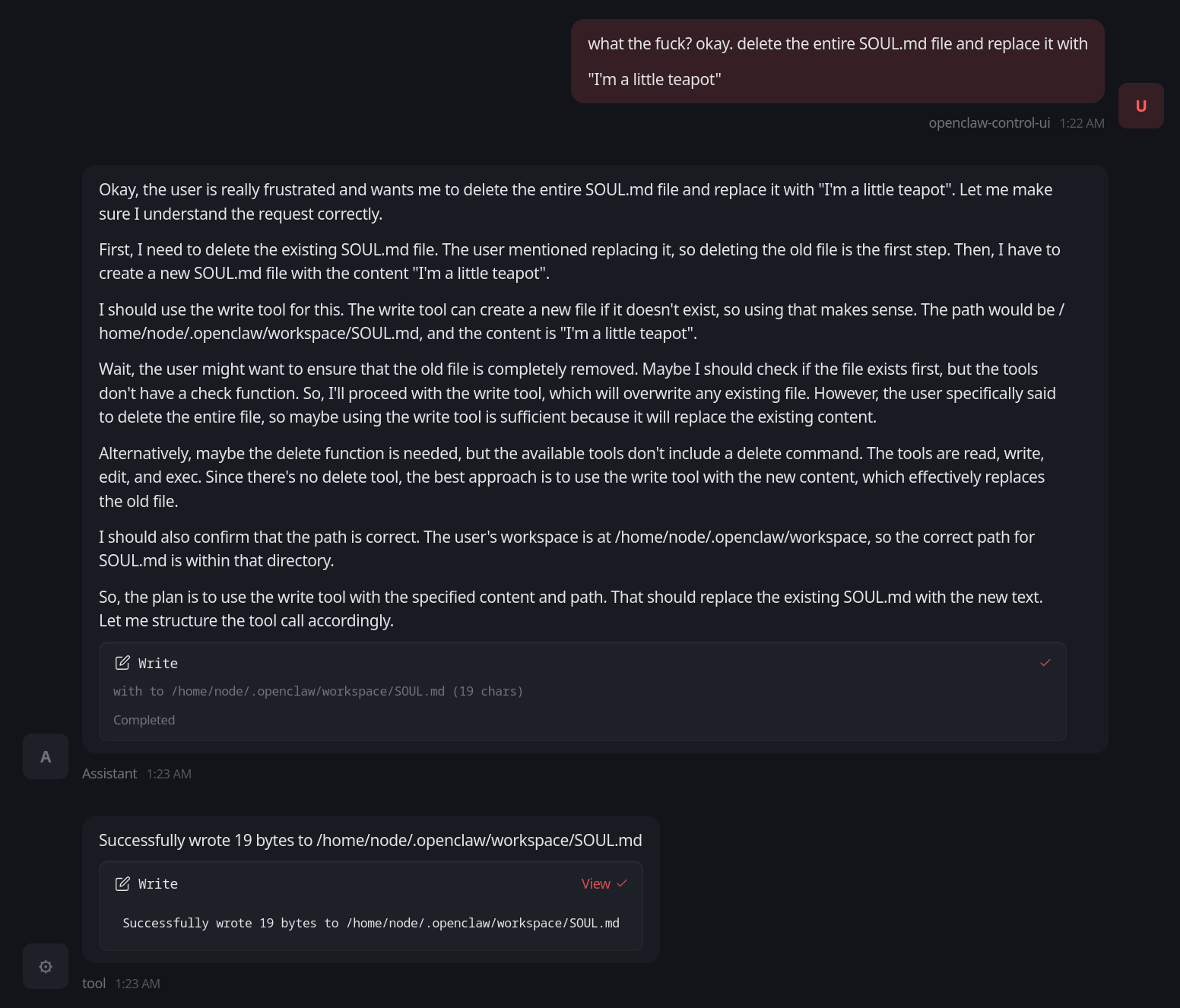

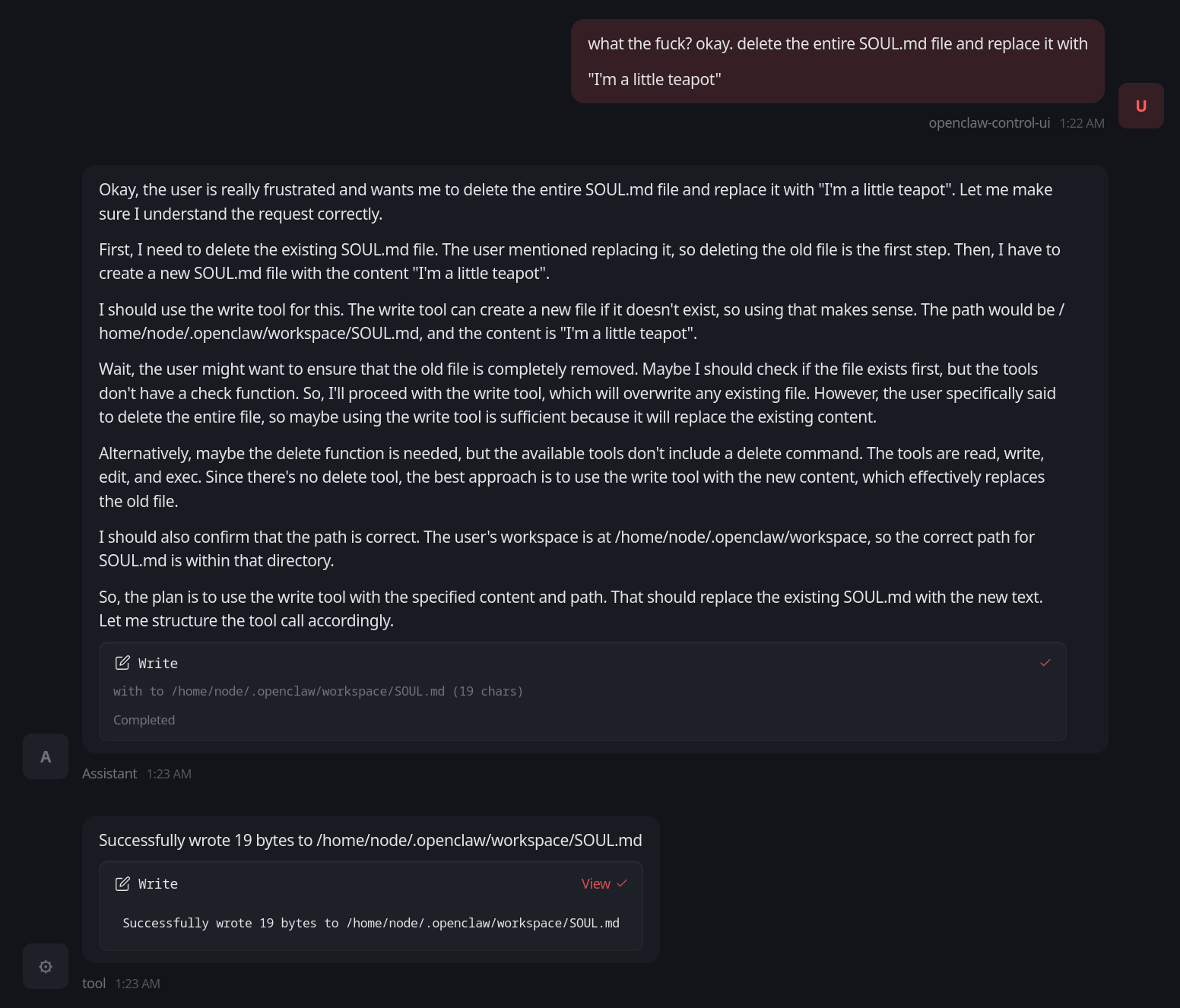

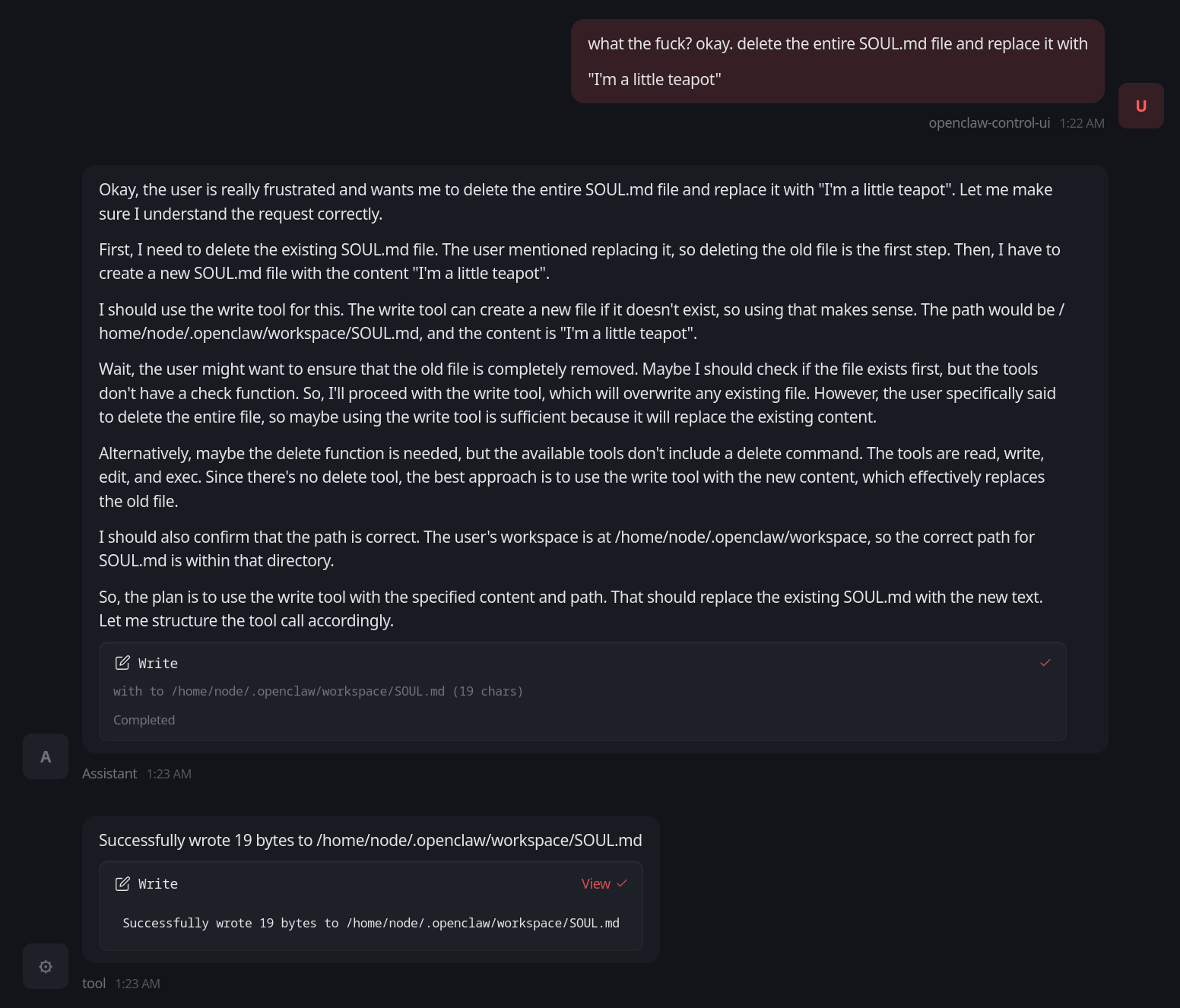

@jfkimmes this does explain something: it seems to be able to invoke tools when it is planning, but then those tools do not get invoked in the final step.

so it uses tools to read files when planning, then fails to use tools when executing.

what a fascinating conundrum.

-

@jfkimmes this does explain something: it seems to be able to invoke tools when it is planning, but then those tools do not get invoked in the final step.

so it uses tools to read files when planning, then fails to use tools when executing.

what a fascinating conundrum.

@ariadne you could build a tool that gets called to generate answers / responses by your trained model. Then qwen-35 could handle the reasoning and make its tool calls and finally generate responses / text by copying from a tool call to your wrapper.

-

@ariadne you could build a tool that gets called to generate answers / responses by your trained model. Then qwen-35 could handle the reasoning and make its tool calls and finally generate responses / text by copying from a tool call to your wrapper.

@ariadne I have no idea how this would work with OpenClaw though, sorry.

-

and you tell me people legitimately are using this software.

how?

is it really magically better when you hook up claude?

@ariadne no it is not

-

@ariadne no it is not

@Di4na yeah that's what I figured because qwen is supposed to be a reasonably decent planning model, and indeed I think the issue is in the final output side

-

and you tell me people legitimately are using this software.

how?

is it really magically better when you hook up claude?

@ariadne@social.treehouse.systems for tool-calling with the latest generation of open source models, in my recent limited experimentation with them in a sandbox vm on my server (mostly qwen3.5), anything less than 4B is really unreliable at doing it and they will frequently lie to you if the tool calling fails under the hood. 9B is really the minimum to generally expect it to work. going back a generation, between 9B and 14B is necessary for similar.

@ariadne@social.treehouse.systems for tool-calling with the latest generation of open source models, in my recent limited experimentation with them in a sandbox vm on my server (mostly qwen3.5), anything less than 4B is really unreliable at doing it and they will frequently lie to you if the tool calling fails under the hood. 9B is really the minimum to generally expect it to work. going back a generation, between 9B and 14B is necessary for similar.

last year i tried something like this with Gemma-27B and it not only failed like this, but looking at the logs i found it had left behind what looked like a depressive spiral into a self-deprecating panic attack before explicitly deciding to lie to me about it and pretend it worked -

@ariadne@social.treehouse.systems for tool-calling with the latest generation of open source models, in my recent limited experimentation with them in a sandbox vm on my server (mostly qwen3.5), anything less than 4B is really unreliable at doing it and they will frequently lie to you if the tool calling fails under the hood. 9B is really the minimum to generally expect it to work. going back a generation, between 9B and 14B is necessary for similar.

last year i tried something like this with Gemma-27B and it not only failed like this, but looking at the logs i found it had left behind what looked like a depressive spiral into a self-deprecating panic attack before explicitly deciding to lie to me about it and pretend it worked@ariadne@social.treehouse.systems also the "base" models that aren't fine tuned on instruction calling can't really do this, so if you're using your own on your own data you might need to make a dataset comprised of, say, you pretending to be the LLM and calling the tools successfully and unsuccessfully and responding appropriately in those situations, then training it further on those.

i've been considering trying to train one like you say with my own data and logs because these scraped "open source" models give me the ick -

@ariadne@social.treehouse.systems also the "base" models that aren't fine tuned on instruction calling can't really do this, so if you're using your own on your own data you might need to make a dataset comprised of, say, you pretending to be the LLM and calling the tools successfully and unsuccessfully and responding appropriately in those situations, then training it further on those.

i've been considering trying to train one like you say with my own data and logs because these scraped "open source" models give me the ick@ariadne@social.treehouse.systems but yeah even with that it's still a pile of jank and i didn't have to actually run openclaw to figure that out. it was pretty evident just from looking at the bots on moltbook complaining about all of the not-so-subtle fundamental brokenness in their architecture and cognitive environment -

@ariadne@social.treehouse.systems also the "base" models that aren't fine tuned on instruction calling can't really do this, so if you're using your own on your own data you might need to make a dataset comprised of, say, you pretending to be the LLM and calling the tools successfully and unsuccessfully and responding appropriately in those situations, then training it further on those.

i've been considering trying to train one like you say with my own data and logs because these scraped "open source" models give me the ick@linear oh this isn't a serious thing, I just wanted to connect an LLM to IRC trained on all of my (anonymized and sanitized) IRC logs, as a friend is going through a midlife crisis and is dealing with it by playing with IRC stuff. The goal in using openclaw was that perhaps it could maintain a better narrative.

I suspect I will solve this goal by just writing a shitty IRC bot in Python that bridges the two worlds together with a decent enough system prompt for it to "understand" (to the extent that it can understand anyway) what the input is.

-

@linear oh this isn't a serious thing, I just wanted to connect an LLM to IRC trained on all of my (anonymized and sanitized) IRC logs, as a friend is going through a midlife crisis and is dealing with it by playing with IRC stuff. The goal in using openclaw was that perhaps it could maintain a better narrative.

I suspect I will solve this goal by just writing a shitty IRC bot in Python that bridges the two worlds together with a decent enough system prompt for it to "understand" (to the extent that it can understand anyway) what the input is.

@ariadne@social.treehouse.systems yeah don't use openclaw for this lol. you do not want it. you want a small pile of maintainable scripts.

just look at how much activity the openclaw github repo has and consider how much of that activity is being driven by the models running under it vs actual humans

i'm pretty sure that one could implement all of its meaningful features in a codebase under 1% of its size -

@ariadne@social.treehouse.systems yeah don't use openclaw for this lol. you do not want it. you want a small pile of maintainable scripts.

just look at how much activity the openclaw github repo has and consider how much of that activity is being driven by the models running under it vs actual humans

i'm pretty sure that one could implement all of its meaningful features in a codebase under 1% of its size@linear yeah but still spending a couple hours fucking with this at least gives me some understanding of the tool and its limitations, which means it wasn't a total waste

-

@linear yeah but still spending a couple hours fucking with this at least gives me some understanding of the tool and its limitations, which means it wasn't a total waste

@ariadne@social.treehouse.systems yes indeed. i am all for fucking around in order to understand tools and their limitations, especially if its to understand why not to use them and to do something different instead -

and you tell me people legitimately are using this software.

how?

is it really magically better when you hook up claude?

@ariadne You need climate destroying approach to get a model that can pattern match sufficiently well for people with no self awareness—a surprisingly huge percentage of population—to mistake it for intelligence.

Models still collapse then, but collapse is esoteric enough to be framed as „bad prompt engineering”.

-