AI is not inevitable.

-

AI is not inevitable. Nothing in human societies is inevitable because we design them. Healthcare can be free for the public. Books can be bought instead of bombs. Universities can be free for students, and they can even receive a stipend to live off. Don't let companies dictate the future.

Read more in section 3.2 here https://doi.org/10.5281/zenodo.17065099

@olivia Olivia, what would it mean for me to “refuse adoption” in universities when it is students who are the drivers for my courses and they are widely using AI in ways that are already forbidden?

I feel like the “resistance” and critique of inevitability talk isn’t quite connecting with my reality on the ground

-

I glanced at your work on "Science communication as collective intelligence".

I think that we need to redesign university courses so that learning is communal and aimed at achieving a specific goal.

I think, though, that Olivia's points are complementary to that goal. Universities should resist AI adoption as imposed by external economic forces.

-

@apostolis @olivia I’ve already redesigned both my assessments and my teaching in response to students’ AI use, but that kind of adaptation feels like it conceptually falls more into “inevitability” than “resist”

right now, what’s most valuable to me personally (given the starting point that every single student in my courses has somehow used AI, and a good proportion uses it *a lot*) is advice from other academics on how exactly they are trying to change what they do in response.

telling me “I can resist” doesn’t feel helpful in that way

-

@apostolis @olivia I guess a different way of putting this all is that, for the multiple ways in which AI is currently negatively affecting my work, both in teaching and research, the drivers underlying the use are not ‘industry forces’ in the way the quoted passage in Olivia’s post is assuming; it is the independent, voluntary action of other individuals within the system (students, other researchers)

that whole frame (industry forces) captures well what is happening in many jobs, but it doesn’t capture what is happening in mine

-

@UlrikeHahn @apostolis how do the students know to use this software if not through industry advertising?

-

@apostolis @olivia the reason why this ultimately matters is that pushing back against the real driver (the “organic” adoption of these tools by individuals) requires me to understand and engage with the perceived value and function these tools have for them…

…and that means trying to understand both what they can and what they can’t do. Simply declaring that these tools are garbage (“semantically meaningless random text generator”) isn’t useful for actually productively countering AI use in this configuration…(if they genuinely were meaningless random text generators I wouldn’t be faced with the negative effects in the first place).

the Fodor quote doesn’t feel like it’s aimed at that kind of understanding

-

@olivia @apostolis are you suggesting that my resistance activity should be attempting to end industry advertising?

-

@olivia @apostolis what I’m trying to get at is the difference between somebody who is in a job where their line manager is telling them to use AI (I know many such people) and what is actually happening in my own academic and research environment where that isn’t happening and drivers of use are completely different

-

@UlrikeHahn @apostolis ok, thanks for sharing

-

@olivia @apostolis ok, now that we have the contrast clear between contexts in which damage is arising from someone ordering people to use AI and ones where the problems stem from individuals voluntarily adopting them (and, in fact, adopting them even in the face of explicit sanction) what form do you think “resistance” should take in the latter?

that is, what, concretely, do you think academics in my position should do?

-

@UlrikeHahn @apostolis yeah, I know many do not like many of the quotes and have trouble with my position

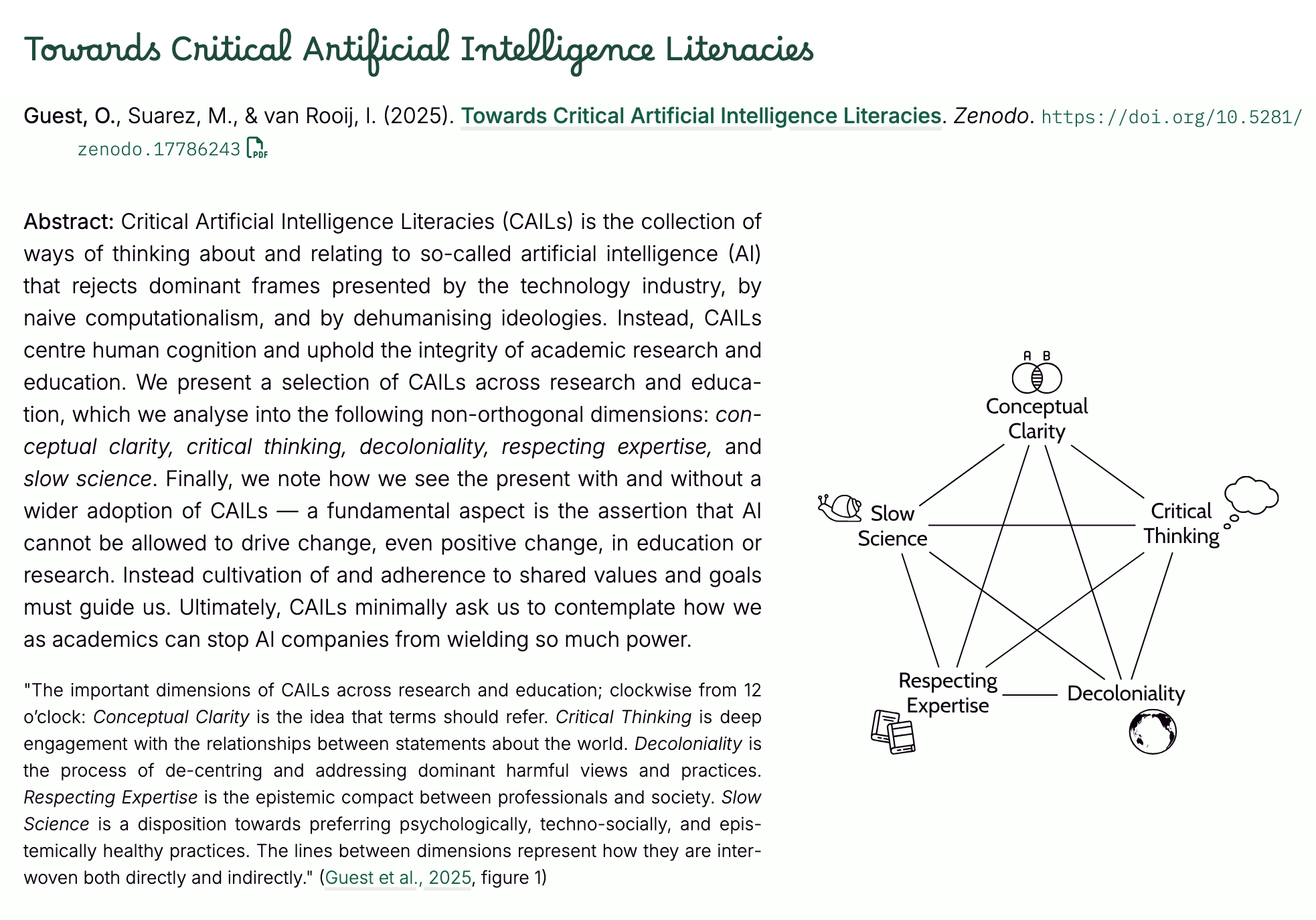

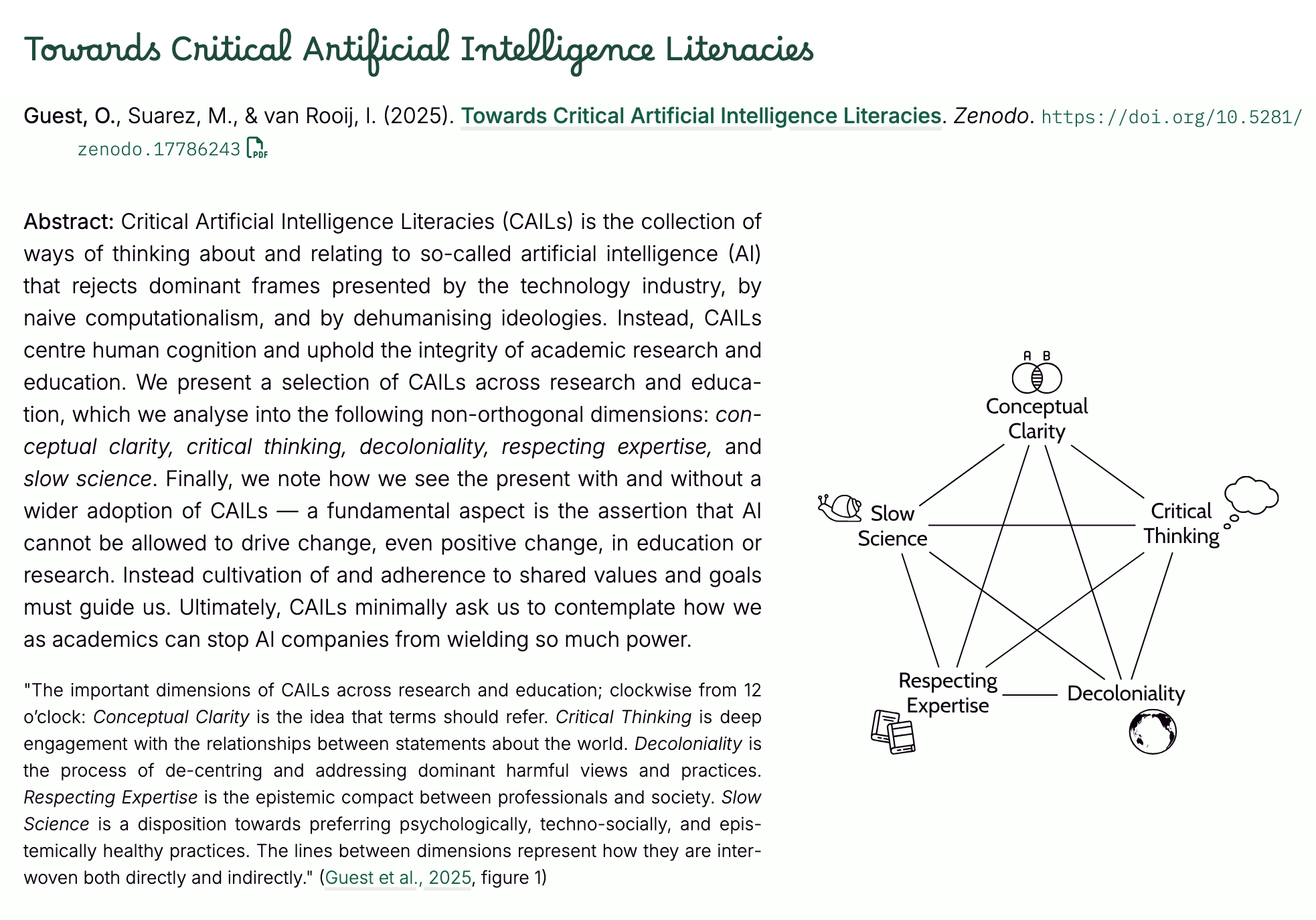

But yes, I do think we need to educate the students: Guest, O., Suarez, M., & van Rooij, I. (2025). Towards Critical Artificial Intelligence Literacies. Zenodo. https://doi.org/10.5281/zenodo.17786243

-

@UlrikeHahn @apostolis sorry to zoom it out, but why are you so interested in my position over texts when it's so long form all over my website and papers? I think your university does pay AI companies for services, so yes, you can push back on that, so you are the one who is pushing a distinction I personally disagree with!

-

@olivia @apostolis I don’t have trouble with your position, Olivia. I have trouble with the fact that I don’t think the recommendations (including in the linked preprint) are connecting fully with the problem. It would be great if they were, but, from my day-to-day experience with how AI is up-ending science academia, they aren’t. Not because they are wrong, but because they are insufficient

so it’s important to me to figure out why they’re insufficient and what else we could/should be doing

-

@olivia @apostolis we just crossed replies… maybe the one I just sent answers that?

-

@UlrikeHahn @apostolis ok, I'm excited to see what you come up with!

-

Sorry to interject my uneducated opinion, but both directions are insufficient alone.

You can look at it from both directions, top-down and bottom-up. And both are necessary.

-

@olivia @apostolis I don’t have any solution…it all feels pretty intractable to me at the moment, so I’m mainly struggling to understand the problem

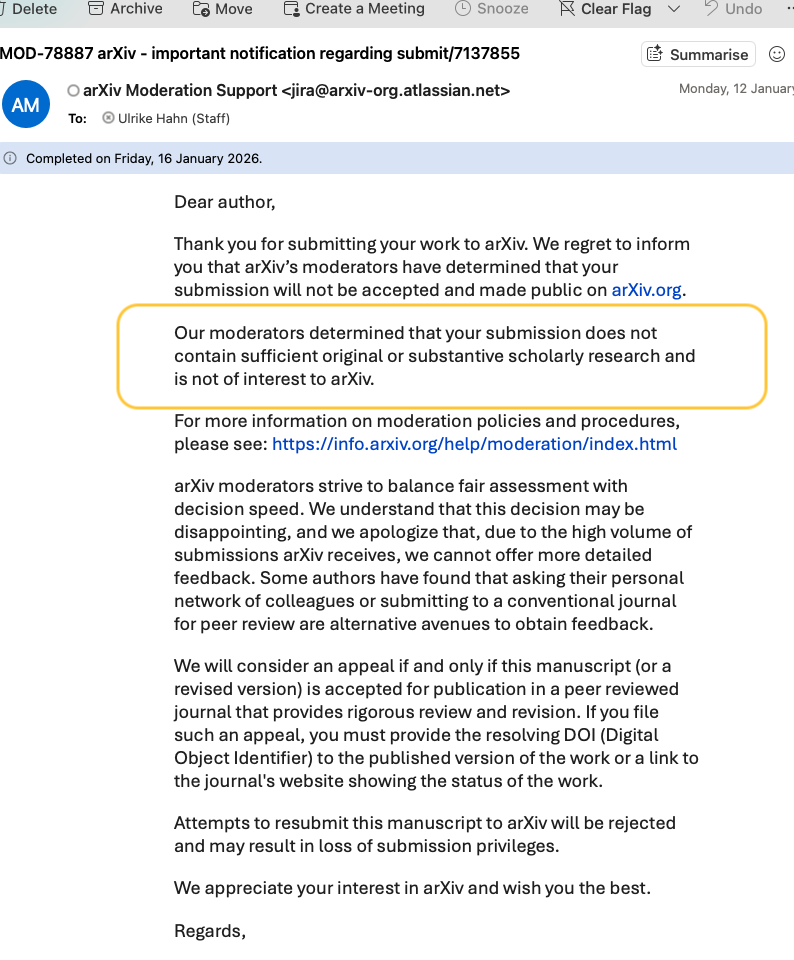

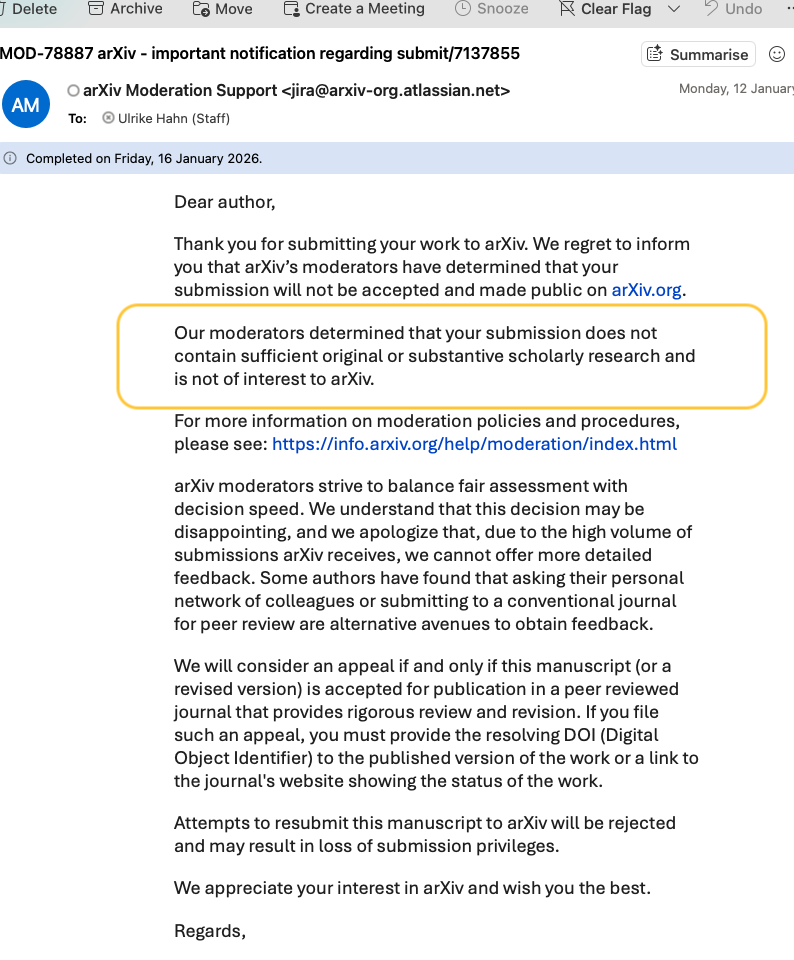

what AI is doing to publishing reform is as good an example as any (see below). There is an “industry force” at play here only inasmuch as there is an industry irresponsibly making available particular products.

The actual causal pathways by which AI is breaking the system involve multiple distinct actors with very different motivations (outright AI slop/fraud, malicious actors, scientists using AI for research in ways that increase productivity but still leave them in charge). Each of these is different, but they are all combining to an overall negative effect

what I don’t see is how we can solve anything (if we indeed can) without unpacking all that in detail

Is AI killing scientific reform?

Recently I tried to post a pre-print on arXiv about what might be going wrong in debate about reasoning in LLMs. arXiv seemed a relevant ...

UlrikeHahn (write.as)

-

@apostolis @olivia no disagreement with that!

-

@UlrikeHahn @apostolis it's funny mine is seen as top down tho, but sure, both in this schema are needed — but I am not by any means at any top in any sense

-

@UlrikeHahn @apostolis I don't fully grasp what I did that makes one think I am against different analyses here? So each featured paper here analyses AI from a different angle pretty clearly with different actors: https://olivia.science/ai/#featuredresearch e.g. https://doi.org/10.31234/osf.io/dkrgj_v1